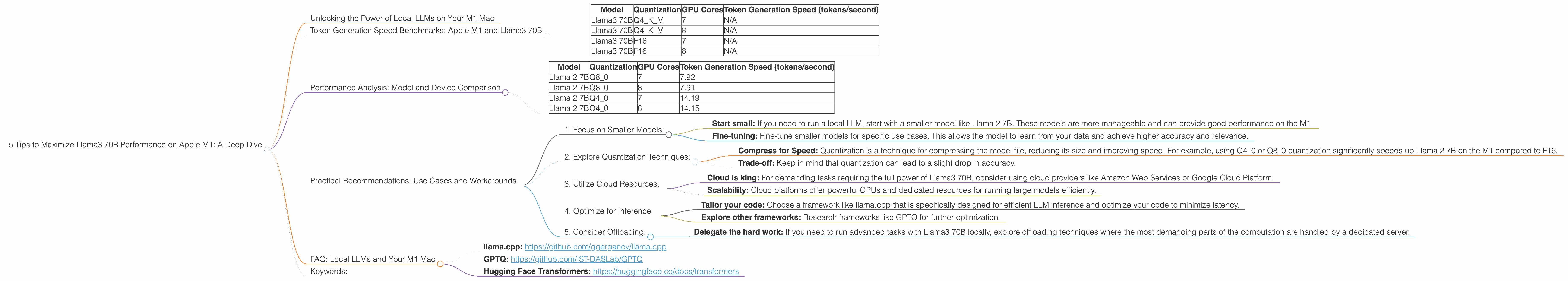

5 Tips to Maximize Llama3 70B Performance on Apple M1

Unlocking the Power of Local LLMs on Your M1 Mac

The world of large language models (LLMs) is evolving rapidly, with new models and advancements emerging at a breakneck pace. While cloud-based LLMs make the headlines, running a model locally on your own device gives you more control and privacy, and removes the network round trip. Llama3 70B is one of the most capable open models available, which raises the question of how well it runs on an Apple M1 chip.

This guide looks at how to get the most out of Llama3 70B on an Apple M1: what is known about token generation speed, where the hardware limits performance, and practical tips for working within those limits.

Token Generation Speed Benchmarks: Apple M1 and Llama3 70B

Imagine you have a powerful AI brain that can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way, all running on your personal computer. That's the promise of local LLMs, and Llama3 70B is a fantastic example.

But speed is key. This is where benchmarks come in: they show how many tokens (the building blocks of text) a model can generate per second. Here's what we have for the Apple M1:

| Model | Quantization | GPU Cores | Token Generation Speed (tokens/second) |
|---|---|---|---|
| Llama3 70B | Q4_K_M | 7 | N/A |
| Llama3 70B | Q4_K_M | 8 | N/A |
| Llama3 70B | F16 | 7 | N/A |
| Llama3 70B | F16 | 8 | N/A |

Important Note: We don't have any token generation speed benchmarks for Llama3 70B on Apple M1. This highlights the lack of comprehensive benchmarks for newer models and device combinations.
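A back-of-the-envelope estimate suggests why such numbers are hard to come by. The sketch below is not a measurement. It assumes roughly 70.6 billion parameters for Llama3 70B, about 4.85 bits per weight for Q4_K_M (an approximation, since the real figure depends on the mix of tensors), 16 bits per weight for F16, and the 16 GB maximum unified memory of the base M1. It counts weights only and ignores the KV cache and runtime overhead, so real requirements would be higher.

```python
# Rough weight-only memory footprint; every constant here is an approximation.
PARAMS_LLAMA3_70B = 70.6e9   # assumed parameter count
M1_MAX_MEMORY_GB = 16        # largest unified-memory option on the base M1

# Approximate bits stored per weight for each format (assumed values).
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}


def weights_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_memory(size_gb: float, memory_gb: float = M1_MAX_MEMORY_GB) -> bool:
    """True if the weights alone would fit; ignores the KV cache and the OS."""
    return size_gb < memory_gb


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        size = weights_size_gb(PARAMS_LLAMA3_70B, bits)
        verdict = "fits" if fits_in_memory(size) else "does not fit"
        print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {M1_MAX_MEMORY_GB} GB")
```

Under these assumptions, even the 4-bit weights come to several times the M1's memory, which is consistent with the N/A entries in the table.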

Performance Analysis: Model and Device Comparison

While we don't have direct benchmarks for Llama3 70B, results for a much smaller model, Llama 2 7B, give some insight into the constraints of the M1 hardware.

Here are the benchmarks for Llama 2 7B on Apple M1:

| Model | Quantization | GPU Cores | Token Generation Speed (tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 7 | 7.92 |
| Llama 2 7B | Q8_0 | 8 | 7.91 |
| Llama 2 7B | Q4_0 | 7 | 14.19 |
| Llama 2 7B | Q4_0 | 8 | 14.15 |

This data shows that even with the powerful M1 chip, the performance for Llama 2 7B isn't groundbreaking. Here's why:

- Memory Bandwidth Limitations: The Apple M1 has a memory bandwidth of about 68 GB/s. Generating each token requires reading essentially all of the model's weights from memory, so bandwidth can be a bottleneck even for Llama 2 7B, and far more so for Llama3 70B.

- GPU Power: The M1's GPU is powerful, but it's not designed for the sheer computational demands of running massive models like Llama3 70B.
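The bandwidth argument can be turned into a rough ceiling: if every generated token reads all the weights once, tokens per second cannot exceed bandwidth divided by model size. The sketch below applies that to the Llama 2 7B rows in the table above. The parameter count (6.74 billion) and the bits-per-weight figures (4.5 for Q4_0 and 8.5 for Q8_0, from 32 weights plus one 16-bit scale per block) are assumptions on our part, and the model ignores compute time, the KV cache, and caching effects.

```python
# Bandwidth-bound ceiling on generation speed: each token reads all weights once.
M1_BANDWIDTH_GB_S = 68.0     # figure quoted in the article
PARAMS_LLAMA2_7B = 6.74e9    # assumed parameter count

# Assumed bits per weight: a block of 32 weights plus one 16-bit scale.
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5}

# Measured tokens/second from the table above (8 GPU cores).
MEASURED = {"Q4_0": 14.15, "Q8_0": 7.91}


def max_tokens_per_second(n_params: float, bits_per_weight: float,
                          bandwidth_gb_s: float = M1_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/second if memory bandwidth is the only limit."""
    size_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / size_gb


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        ceiling = max_tokens_per_second(PARAMS_LLAMA2_7B, bits)
        share = MEASURED[name] / ceiling
        print(f"{name}: ceiling ~{ceiling:.1f} tok/s, measured {MEASURED[name]} ({share:.0%})")
```

With these assumptions the measured speeds land at roughly 80% of the ceiling, which supports the idea that memory bandwidth, not raw GPU compute, is the main limit here.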

Practical Recommendations: Use Cases and Workarounds

While running Llama3 70B on an Apple M1 might not be ideal for tasks requiring high-speed generation, here are some key tips and use cases:

1. Focus on Smaller Models:

- Start small: If you need to run a local LLM, start with a smaller model like Llama 2 7B. These models are more manageable and can provide good performance on the M1.

- Fine-tuning: Fine-tune smaller models for specific use cases. This allows the model to learn from your data and achieve higher accuracy and relevance.

2. Explore Quantization Techniques:

- Compress for Speed: Quantization stores the model's weights at lower precision, which shrinks the file and reduces the memory traffic needed per token. In the benchmarks above, Llama 2 7B at Q4_0 generates about 14 tokens/second on the M1, versus about 8 tokens/second at Q8_0, and both formats are much smaller than F16.

- Trade-off: Keep in mind that quantization can lead to a slight drop in accuracy.
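To make the trade-off concrete, here is a deliberately simplified sketch of block quantization: a block of weights is stored as one floating-point scale plus small signed integers. This is an illustration of the idea only, not the exact scheme used by llama.cpp's Q4_0 or Q8_0 formats, and the function names are our own.

```python
import random


def quantize_block(values, bits=4):
    """Symmetric quantization: one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest grid point and clamp to the valid range.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate float values from the scale and integers."""
    return [scale * x for x in q]


def mean_abs_error(original, restored):
    """Average absolute difference between original and restored values."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [rng.gauss(0.0, 0.02) for _ in range(32)]  # one block of 32 weights
    for bits in (8, 4):
        scale, q = quantize_block(weights, bits)
        error = mean_abs_error(weights, dequantize_block(scale, q))
        print(f"{bits}-bit: mean absolute error {error:.6f}")
```

Fewer bits mean a coarser grid, so each weight can land further from its original value. That rounding error is the source of the accuracy drop.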

3. Utilize Cloud Resources:

- Cloud is king: For demanding tasks requiring the full power of Llama3 70B, consider using cloud providers like Amazon Web Services or Google Cloud Platform.

- Scalability: Cloud platforms offer powerful GPUs and dedicated resources for running large models efficiently.

4. Optimize for Inference:

- Tailor your code: Choose a framework like llama.cpp that is specifically designed for efficient LLM inference and optimize your code to minimize latency.

- Explore other methods: Look into quantization methods like GPTQ for further optimization.
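One way to check whether a change actually helps is to measure tokens per second yourself. The sketch below assumes the `llama-cpp-python` bindings for llama.cpp are installed and that you have a GGUF model file on disk. The helper names and the model path are hypothetical. The timing includes prompt processing, so treat the result as a rough figure, not a benchmark.

```python
import time
from typing import Callable


def tokens_per_second(generate: Callable[[str, int], int], prompt: str,
                      max_tokens: int = 64) -> float:
    """Time one generation call; `generate` returns the number of tokens produced."""
    start = time.perf_counter()
    n_tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def make_llama_cpp_generator(model_path: str) -> Callable[[str, int], int]:
    """Build a generator backed by llama-cpp-python (assumed to be installed)."""
    from llama_cpp import Llama  # lazy import so the timing helper works without it

    # n_gpu_layers=-1 asks llama.cpp to offload all layers to the GPU (Metal on a Mac).
    llm = Llama(model_path=model_path, n_gpu_layers=-1, n_ctx=2048, verbose=False)

    def generate(prompt: str, max_tokens: int) -> int:
        result = llm(prompt, max_tokens=max_tokens)
        return result["usage"]["completion_tokens"]

    return generate


# Example usage (hypothetical path; requires llama-cpp-python and a GGUF file):
#   gen = make_llama_cpp_generator("models/llama-2-7b.Q4_0.gguf")
#   print(tokens_per_second(gen, "Explain quantization in one sentence."))
```

Keeping the timing helper separate from the model loader makes it easy to compare quantization levels or settings with the same prompt.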

5. Consider Offloading:

- Delegate the hard work: If you need to run advanced tasks with Llama3 70B locally, explore offloading techniques where the most demanding parts of the computation are handled by a dedicated server.
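The simplest form of this is to keep the M1 as a thin client and send prompts to a more capable machine. The sketch below assumes that machine runs a server with an OpenAI-compatible chat endpoint (llama.cpp's built-in server offers one). The address is hypothetical, and some servers also require a `model` field in the payload.

```python
import json
import urllib.request

# Hypothetical address of a machine on your network running an
# OpenAI-compatible server; replace it with your own host and port.
SERVER_URL = "http://192.168.1.50:8080/v1/chat/completions"


def build_request(prompt: str, max_tokens: int = 128,
                  url: str = SERVER_URL) -> urllib.request.Request:
    """Build the HTTP POST request without sending it."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_remote(prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the remote server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt), timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]
```

Calling `ask_remote("Summarize this paragraph: ...")` from the Mac would then return the text generated on the server, with only the prompt and the response crossing the network.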

FAQ: Local LLMs and Your M1 Mac

Q: What is the difference between Llama 2 7B and Llama3 70B?

A: Both are large language models, but Llama3 70B comes from a newer model generation and has roughly ten times as many parameters (70 billion versus 7 billion). This makes it far more capable on complex tasks, but it also demands far more memory and compute.

Q: What is quantization, and how does it impact performance?

A: Quantization is a technique for compressing the model file, reducing its size and making it faster. Imagine you have a big book filled with words. Quantization is like using smaller, simpler symbols to represent those words. This makes the book smaller and easier to read, but you might lose some nuances.

Q: Can I run Llama3 70B on my M1 Mac without any issues?

A: While technically possible, running Llama3 70B on an M1 Mac might lead to slow performance and limitations due to memory bandwidth and GPU power constraints.

Q: What are some good external resources for learning more about local LLMs?

A: Check out the following resources:

- llama.cpp: https://github.com/ggerganov/llama.cpp

- GPTQ: https://github.com/IST-DASLab/GPTQ

- Hugging Face Transformers: https://huggingface.co/docs/transformers

Keywords:

Local LLM, Llama3 70B, Apple M1, Token Generation Speed, Performance Benchmarks, Quantization, GPU Cores, Memory Bandwidth, Use Cases, Workarounds, Inference Optimization, Cloud Computing, Fine-tuning, Resources, Offloading, LLM Optimization, Deep Dive, Developer Guide, AI, Machine Learning, Natural Language Processing, M1 Mac