5 Surprising Facts About Running Llama3 8B on NVIDIA RTX 4000 Ada 20GB

Are you a developer looking to explore the world of local LLMs, but overwhelmed by the vast array of hardware choices and model variations? Strap in! This article dives deep into the performance of the Llama3 8B model running on the NVIDIA RTX 4000 Ada 20GB, uncovering insights and surprising facts that will help you make informed decisions for your projects.

Introducing the Powerhouse Duo: Llama3 8B and RTX 4000 Ada 20GB

The Llama3 8B is a powerful, open-source language model from Meta AI, boasting impressive capabilities in text generation, translation, and summarization. It's a smaller, more manageable sibling of the larger Llama3 models, making it a great starting point for exploring local LLM capabilities.

The NVIDIA RTX 4000 Ada 20GB is a professional workstation graphics card built on NVIDIA's Ada Lovelace architecture. Its 20 GB of memory is the figure that matters most for local LLMs, because it determines which models and quantization levels fit on the card.

But can this power duo handle the demanding task of running a large language model? Let's find out!

Performance Analysis: Token Generation Speed Benchmarks

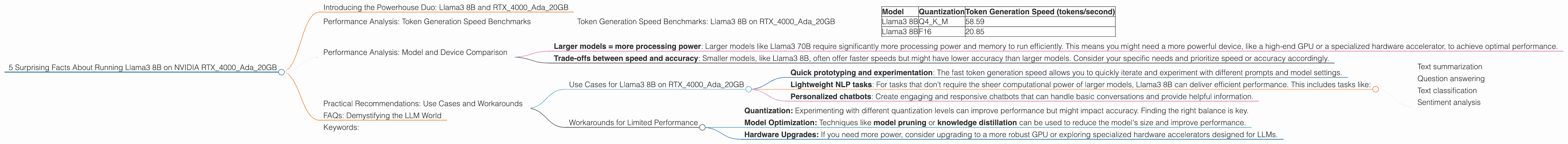

Token Generation Speed Benchmarks: Llama3 8B on RTX 4000 Ada 20GB

The token generation speed is a crucial metric, representing how quickly the model generates new text. Here's a breakdown of the performance for Llama3 8B on the RTX 4000 Ada 20GB:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 58.59 |
| Llama3 8B | F16 | 20.85 |

Key Observations:

- Quantization: The model was tested in two formats: Q4_K_M and F16. Quantization compresses the model's weights by storing them with fewer bits, which reduces the memory footprint and usually speeds up generation. Q4_K_M is a roughly 4-bit scheme that shrinks the model substantially but can impact accuracy. F16 is the higher-precision option: it stores each weight as a 16-bit floating-point number, which preserves accuracy but needs far more memory.

- Performance: Q4_K_M shows a clear advantage in token generation speed at 58.59 tokens/second, about 2.8 times the 20.85 tokens/second measured for F16. Smaller weights mean less data to read from GPU memory for each generated token, which is typically the bottleneck during generation. This highlights the importance of choosing the right quantization method for your specific needs.

To put these numbers in perspective, imagine a race between two runners. The Q4_K_M runner is sprinting at a blistering pace, while the F16 runner is a steady, reliable long-distance runner. When you need fast and furious text generation, the Q4_K_M runner is your pick. But if accuracy is paramount, the F16 runner might be the better choice despite a slower pace.
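For a more concrete feel, the two benchmark figures can be turned into a speedup ratio and an approximate wait time. The sketch below assumes a steady generation rate and ignores prompt processing; the 500-token response length is a hypothetical value chosen for illustration.

```python
# Throughput figures from the benchmark table (tokens/second).
SPEEDS = {"Q4_K_M": 58.59, "F16": 20.85}


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady rate (prompt processing ignored)."""
    return num_tokens / tokens_per_second


speedup = SPEEDS["Q4_K_M"] / SPEEDS["F16"]
print(f"Q4_K_M speedup over F16: {speedup:.2f}x")

# 500 tokens is a hypothetical response length, not a benchmark setting.
for name, tps in SPEEDS.items():
    print(f"{name}: {generation_seconds(500, tps):.1f} s for 500 tokens")
```

At these rates, a response of that length takes roughly 8.5 seconds with Q4_K_M and about 24 seconds with F16.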

Performance Analysis: Model and Device Comparison

Unfortunately, we do not have data for the Llama3 70B model on this specific device. Therefore, we can't offer a direct comparison between the 8B and 70B models. However, we can share some general insights about the relationship between model size and performance:

- Larger models = more processing power: Larger models like Llama3 70B require significantly more processing power and memory to run efficiently. This means you might need a more powerful device, like a high-end GPU or a specialized hardware accelerator, to achieve optimal performance.

- Trade-offs between speed and accuracy: Smaller models, like Llama3 8B, often offer faster speeds but might have lower accuracy than larger models. Consider your specific needs and prioritize speed or accuracy accordingly.
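A back-of-the-envelope estimate shows why model size matters so much on a 20 GB card. The sketch below counts only the weights (no KV cache, activations, or runtime overhead), uses nominal parameter counts of 8 and 70 billion, and assumes about 4.5 bits per weight for a 4-bit format. That last figure is an illustrative assumption, not a measured value.

```python
GIB = 1024 ** 3
VRAM_GIB = 20  # card capacity as named in the article, treated as GiB here


def weight_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GiB."""
    return params_billion * 1e9 * bits_per_weight / 8 / GIB


# The 4.5 bits/weight figure is an assumed round number for a 4-bit format.
for params in (8, 70):
    for label, bits in (("F16", 16), ("~4.5-bit (assumed)", 4.5)):
        size = weight_gib(params, bits)
        verdict = "fits" if size < VRAM_GIB else "does not fit"
        print(f"{params}B {label}: {size:.1f} GiB -> {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions the 8B model fits in either format, while the 70B weights exceed 20 GiB even at roughly 4 bits per weight. That is consistent with the need for a larger device.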

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on RTX 4000 Ada 20GB

The Llama3 8B model, with its impressive speed and performance, is well-suited for a variety of applications on the RTX 4000 Ada 20GB:

- Quick prototyping and experimentation: The fast token generation speed allows you to quickly iterate and experiment with different prompts and model settings.

- Lightweight NLP tasks: For tasks that don't require the sheer computational power of larger models, Llama3 8B can deliver efficient performance. This includes tasks like:

  - Text summarization

  - Question answering

  - Text classification

  - Sentiment analysis

- Personalized chatbots: Create engaging and responsive chatbots that can handle basic conversations and provide helpful information.

Workarounds for Limited Performance

While the RTX 4000 Ada 20GB is a capable device, its performance might not be ideal for all tasks. Here are some workarounds to consider:

- Quantization: Experimenting with different quantization levels can improve performance but might impact accuracy. Finding the right balance is key.

- Model Optimization: Techniques like model pruning or knowledge distillation can be used to reduce the model's size and improve performance.

- Hardware Upgrades: If you need more power, consider upgrading to a more robust GPU or exploring specialized hardware accelerators designed for LLMs.

FAQs: Demystifying the LLM World

Q: What is a "language model"?

A: A language model is a type of artificial intelligence that is trained on a massive dataset of text. It learns the patterns and structure of human language, which enables it to perform tasks like text generation, translation, and summarization.

Q: What is "quantization"?

A: Quantization is a technique used to compress the weights of a neural network, which are the parameters that define the model's behavior. Think of it as shrinking the model's brain without sacrificing too much intelligence. This can improve performance and reduce memory usage.
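To make the idea tangible, here is a deliberately simplified sketch of 4-bit quantization: a block of weights shares one scale, and each weight is rounded to a small integer. This is not the actual Q4_K_M scheme, which is more elaborate; the function names and sample weights are made up for illustration.

```python
def quantize_block(weights, bits=4):
    """Symmetric round-to-nearest quantization of one block with a shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    scale = max(abs(w) for w in weights) / qmax or 1.0  # avoid a zero scale
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate weights from the integer codes and the scale."""
    return [c * scale for c in codes]


# Made-up sample weights; real blocks come from the model file.
weights = [0.12, -0.70, 0.33, 0.06, -0.21, 0.64, -0.02, 0.48]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("integer codes:", codes)
print(f"largest rounding error: {max_error:.3f}")
```

Each code needs only 4 bits instead of 16, and the rounding error stays within half of the shared scale. That small, bounded error is the accuracy cost that quantization trades for a smaller model.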

Q: What are "tokens"?

A: Tokens are the building blocks of text. Imagine a language model as a chef, and tokens as the individual ingredients. These tokens are combined in specific ways to create sentences and paragraphs, just like a chef combines ingredients to make a delicious dish.
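As a rough illustration, the toy splitter below breaks a sentence into word and punctuation tokens. Real models, Llama3 included, use a learned subword vocabulary, so their token boundaries and counts will differ. The `toy_tokenize` function is purely hypothetical.

```python
import re


def toy_tokenize(text: str) -> list[str]:
    """Split text into words and punctuation marks (a stand-in for a real tokenizer)."""
    return re.findall(r"\w+|[^\w\s]", text)


tokens = toy_tokenize("Local LLMs generate text token by token.")
print(tokens)
print(len(tokens), "tokens")
```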

Q: Why is "tokens/second" a relevant metric for comparing models?

A: The number of tokens a model can generate per second is a good indicator of its processing speed. A higher number of tokens per second means faster text generation, making it suitable for applications requiring quick responses, like real-time chatbots.
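If you want to measure this yourself, the general approach is to count tokens as they stream out and divide by the elapsed time. The sketch below uses a hypothetical `fake_model` generator in place of a real LLM call; swap in whatever streaming interface your runtime provides.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]],
                      clock: Callable[[], float] = time.perf_counter) -> float:
    """Count the tokens produced by generate() and divide by elapsed time."""
    start = clock()
    count = sum(1 for _ in generate())
    elapsed = clock() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_model():
    """Hypothetical stand-in for a streaming LLM call; sleeps ~10 ms per token."""
    for word in "local models stream one token at a time".split():
        time.sleep(0.01)
        yield word


print(f"{tokens_per_second(fake_model):.1f} tokens/second")
```

The `clock` parameter exists only so the timer can be replaced in tests. For meaningful numbers, measure a long generation and repeat the run several times.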

Keywords:

Llama3 8B, NVIDIA RTX 4000 Ada 20GB, performance, token generation speed, quantization, Q4_K_M, F16, local LLM, text generation, NLP, use cases, workarounds, model optimization.