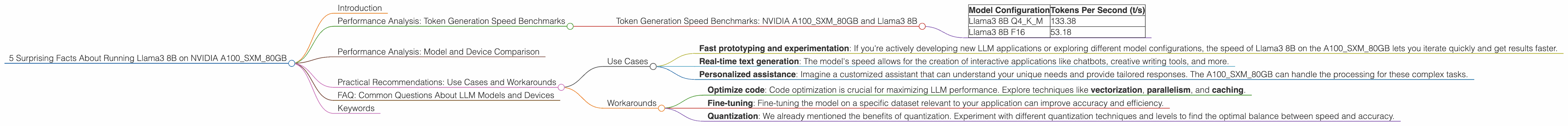

5 Surprising Facts About Running Llama3 8B on NVIDIA A100 SXM 80GB

Are you a developer itching to run large language models (LLMs) on your own hardware? Do you dream of harnessing the power of Llama3 8B yourself? You're not alone! This deep dive looks at how the NVIDIA A100 SXM 80GB GPU performs when running Llama3 8B, and it pairs the benchmark numbers with practical recommendations.

Introduction

The world of LLMs is evolving rapidly, and new, more capable models appear all the time. Running them on your own hardware can still be a challenge, because it takes both powerful hardware and some expertise. This article focuses on one specific combination: Llama3 8B running on the NVIDIA A100 SXM 80GB GPU. Understanding how this pairing behaves will help you optimize your setup and make the most of your resources.

Performance Analysis: Token Generation Speed Benchmarks

Imagine a language model as a storyteller that writes one small piece at a time. Each piece is a token: a word or a fragment of a word. The rate at which the model produces tokens dictates how fast it can respond to your prompts and complete tasks. That rate is the token generation speed, measured in tokens per second (t/s).
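To make the metric concrete, here is a minimal Python sketch of how tokens per second might be measured. It is an illustration, not the method used for the benchmarks in this article: `fake_generate` is a hypothetical stand-in for a real model call, and real benchmark tools usually report prompt processing and token generation separately.

```python
import time
from typing import Callable, List


def throughput(n_tokens: int, elapsed_seconds: float) -> float:
    """Return tokens per second for a finished generation run."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return n_tokens / elapsed_seconds


def measure(generate: Callable[[], List[str]]) -> float:
    """Time one call to `generate` and return its tokens per second."""
    start = time.perf_counter()
    tokens = generate()
    elapsed = time.perf_counter() - start
    return throughput(len(tokens), elapsed)


def fake_generate() -> List[str]:
    # Hypothetical stand-in for a real model call: 50 tokens, about 1 ms each.
    tokens = []
    for i in range(50):
        time.sleep(0.001)
        tokens.append(f"tok{i}")
    return tokens


rate = measure(fake_generate)
print(f"{rate:.1f} t/s")
```

To time a real model, replace `fake_generate` with a function that runs your generation call and returns the list of generated tokens.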

Token Generation Speed Benchmarks: NVIDIA A100 SXM 80GB and Llama3 8B

The NVIDIA A100 SXM 80GB is a beast of a data-center GPU, with 80 GB of high-bandwidth memory, far more than an 8B-parameter model needs. Let's see how it performs with Llama3 8B.

| Model Configuration | Tokens Per Second (t/s) |
|---|---|
| Llama3 8B Q4_K_M | 133.38 |
| Llama3 8B F16 | 53.18 |

Wow! These numbers are impressive. At 133.38 t/s, the faster configuration generates about 8,000 tokens per minute (133.38 × 60), far more than anyone can read in that time, and roughly 2.5 times the rate of the F16 configuration.
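The benchmark figures translate into waiting times with simple arithmetic. The sketch below uses the two rates from the table; the 500-token response length is an arbitrary assumption chosen for illustration, and the calculation ignores prompt processing time.

```python
# Rates in tokens per second, copied from the benchmark table.
RATES_TPS = {"Q4_K_M": 133.38, "F16": 53.18}

RESPONSE_TOKENS = 500  # assumed response length, for illustration only


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


speedup = RATES_TPS["Q4_K_M"] / RATES_TPS["F16"]
print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")

for name, rate in RATES_TPS.items():
    per_minute = rate * 60
    wait = seconds_for(RESPONSE_TOKENS, rate)
    print(f"{name}: {per_minute:.0f} tokens/min, "
          f"{wait:.2f} s for {RESPONSE_TOKENS} tokens")
```

Under these assumptions, a 500-token reply takes about 3.7 seconds with the quantized model and about 9.4 seconds with F16.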

But what do "Q4_K_M" (also written Q4KM) and "F16" mean? They describe how the model's weights are stored. F16 keeps each weight as a 16-bit floating-point number and serves as the unquantized baseline here. Q4_K_M, a format name used by llama.cpp, is a quantized format that stores weights in roughly 4 bits each. Quantization compresses the model's memory footprint, so the GPU has far less data to read for every generated token, which is largely why the quantized model generates tokens faster. The trade-off is some loss of accuracy relative to F16.
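The memory savings can be estimated with back-of-the-envelope arithmetic. The sketch below rests on two assumptions that are not from the benchmark data: a parameter count of about 8.03 billion for Llama3 8B, and an average of about 4.85 bits per weight for the 4-bit format. Check the size of your actual model file for real numbers.

```python
N_PARAMS = 8.03e9  # approximate parameter count of Llama3 8B (assumption)

# Average bits stored per weight. 16 is exact for F16; the Q4_K_M value is an
# assumed approximation, since that format mixes block types and scales.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

GPU_MEMORY_GB = 80.0  # memory capacity of the GPU discussed in this article


def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    size = weights_gb(N_PARAMS, bits)
    share = size / GPU_MEMORY_GB * 100
    print(f"{name}: ~{size:.1f} GB of weights "
          f"({share:.0f}% of {GPU_MEMORY_GB:.0f} GB)")
```

Under these assumptions the weights take about 16 GB in F16 and about 4.9 GB when quantized, so both fit comfortably in 80 GB. The estimate covers weights only; the context cache and activations need additional memory.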

Performance Analysis: Model and Device Comparison

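Ideally, this section would compare Llama3 8B on the A100 SXM 80GB with other popular models and devices.

Please note: Data for other LLMs and devices is not available, so that comparison is not possible here. The one comparison the data does support is between the two configurations benchmarked above: on the same GPU, the quantized model at 133.38 t/s is about 2.5 times as fast as F16 at 53.18 t/s.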

Practical Recommendations: Use Cases and Workarounds

Use Cases

Given the strong performance, here are some potential applications for Llama3 8B running on the A100 SXM 80GB:

- Fast prototyping and experimentation: If you're actively developing new LLM applications or exploring different model configurations, the speed of Llama3 8B on the A100 SXM 80GB lets you iterate quickly and get results faster.

- Real-time text generation: The model's speed allows for the creation of interactive applications like chatbots, creative writing tools, and more.

- Personalized assistance: Imagine a customized assistant that understands your specific needs and provides tailored responses. The A100 SXM 80GB has enough speed and memory headroom to serve this kind of workload interactively.

Workarounds

Although the A100 SXM 80GB delivers remarkable performance, there are always ways to enhance your setup:

- Optimize code: The code that feeds the model affects end-to-end performance too. Explore techniques like vectorization, parallelism, and caching of repeated prompts or results.

- Fine-tuning: Fine-tuning the model on a dataset relevant to your application can improve accuracy on that task. It does not by itself make token generation faster.

- Quantization: We already mentioned the benefits of quantization. Experiment with different quantization techniques and levels to find the optimal balance between speed and accuracy.

FAQ: Common Questions About LLM Models and Devices

Q: What is an LLM, and why are they so popular?

A: An LLM is a large language model, a type of artificial intelligence that can understand and generate human-like text. They're becoming increasingly popular due to their ability to perform various language-based tasks, like translation, writing, and code generation.

Q: How do I run an LLM locally?

A: You'll need capable hardware, such as a GPU with enough memory to hold the model, and software such as llama.cpp or Hugging Face Transformers. Follow their tutorials and documentation to set up your environment.

Q: What are the benefits of running an LLM locally?

A: Your data stays on your own hardware, which helps with privacy, and you control the model, its settings, and the processing power behind it. Removing the network round trip can also reduce latency.

Q: What are some alternative devices for running LLMs?

A: Aside from the A100 SXM 80GB, consider NVIDIA GeForce RTX 4090, AMD Radeon RX 7900 XTX, or even powerful CPUs like the Intel Core i9-13900K for less demanding models.

Q: What's the best way to learn more about LLMs?

A: Explore online resources like Hugging Face, OpenAI, and Google AI. Join relevant communities and forums for valuable insights and support.

Keywords

Llama3 8B, NVIDIA A100 SXM 80GB, GPU, LLM, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Analysis, Use Cases, Workarounds, Optimization, Fine-tuning, Local Inference, Developers