5 Surprising Facts About Running Llama3 8B on NVIDIA 4090 24GB

Introduction: Unleashing the Power of Local LLMs

The world of large language models (LLMs) is buzzing with excitement. These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running LLMs locally, on your own hardware, has been a challenge. Until now.

This article delves into the fascinating world of local LLM execution, focusing on the performance of Llama3 8B on the NVIDIA 4090_24GB. We'll explore some surprising facts about this pairing, shedding light on its capabilities and limitations. Get ready for a deep dive into the world of local LLMs, where the lines between the cloud and your desktop are blurring.

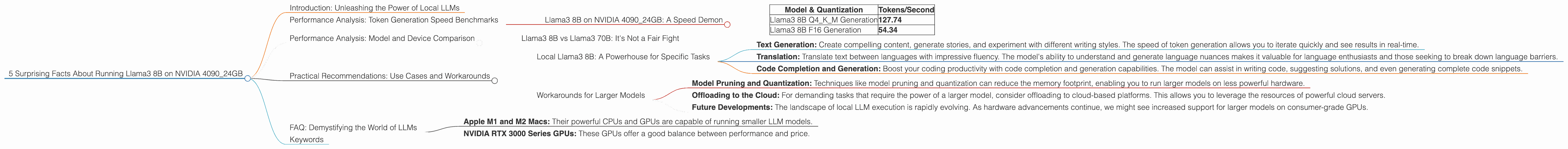

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B on NVIDIA 4090_24GB: A Speed Demon

The NVIDIA 4090_24GB is a powerhouse GPU, and it excels when paired with Llama3 8B. Let's break down the token generation speed benchmarks for different quantization levels:

| Model & Quantization | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Generation | 127.74 |
| Llama3 8B F16 Generation | 54.34 |

Key takeaways:

- Q4_K_M Quantization Reigns Supreme: The Q4_K_M configuration (a 4-bit "k-quant" format from llama.cpp, medium variant) reaches 127.74 tokens per second, roughly 2.35 times the F16 speed.

- F16: A Trade-Off: F16 (half-precision floating point) is not a quantized format. It stores every weight in 16 bits, which stays closest to the original model but takes far more memory than Q4_K_M, and generation drops to 54.34 tokens per second.
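
To put the two benchmark figures side by side, the short sketch below computes the speedup and the average time per token from the table values. The 500-token response length is an arbitrary assumption chosen for illustration, not something measured in the benchmark.

```python
# Benchmark figures from the table above (tokens per second).
TOKENS_PER_SECOND = {"Q4_K_M": 127.74, "F16": 54.34}


def speedup(fast: float, slow: float) -> float:
    """Ratio between two throughput figures."""
    return fast / slow


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate, in seconds."""
    return n_tokens / tokens_per_second


ratio = speedup(TOKENS_PER_SECOND["Q4_K_M"], TOKENS_PER_SECOND["F16"])
print(f"Q4_K_M is {ratio:.2f}x faster than F16")

for name, tps in TOKENS_PER_SECOND.items():
    # 500 tokens is a hypothetical response length used only as an example.
    print(f"{name}: {ms_per_token(tps):.2f} ms/token, "
          f"{seconds_for(500, tps):.1f} s for 500 tokens")
```

Both configurations are fast enough for interactive use. The difference matters most for long outputs or batch jobs.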

Performance Analysis: Model and Device Comparison

Llama3 8B vs Llama3 70B: It's Not a Fair Fight

While Llama3 8B performs well on the NVIDIA 4090_24GB, the larger Llama3 70B model does not fit on this card. At 16 bits per weight, 70 billion parameters need about 140 GB for the weights alone, and even at 4 bits per weight they need about 35 GB, well beyond the 24 GB of VRAM available.

Think of it like this: Running Llama3 70B on the 4090_24GB is like trying to fit a giant elephant into a small car – it simply doesn't fit.
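
The arithmetic behind that can be sketched in a few lines. This is a rough estimate under stated assumptions: it uses the nominal parameter counts (8 and 70 billion), counts only the weights, and ignores the KV cache, activations, and per-format overhead, so real memory use will be higher.

```python
VRAM_GB = 24.0  # memory on the 4090_24GB


def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = n_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_vram(n_params_billion: float, bits_per_weight: float,
                 vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone fit; says nothing about runtime overhead."""
    return weight_memory_gb(n_params_billion, bits_per_weight) <= vram_gb


for params, bits, label in [(8, 16, "8B at 16 bits"),
                            (8, 4, "8B at 4 bits"),
                            (70, 16, "70B at 16 bits"),
                            (70, 4, "70B at 4 bits")]:
    size = weight_memory_gb(params, bits)
    verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
    print(f"{label}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```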

Practical Recommendations: Use Cases and Workarounds

Local Llama3 8B: A Powerhouse for Specific Tasks

The NVIDIA 4090_24GB shines when paired with Llama3 8B, making it suitable for a range of applications, including:

- Text Generation: Create compelling content, generate stories, and experiment with different writing styles. The speed of token generation allows you to iterate quickly and see results in real-time.

- Translation: Translate text between languages with impressive fluency. The model's ability to understand and generate language nuances makes it valuable for language enthusiasts and those seeking to break down language barriers.

- Code Completion and Generation: Boost your coding productivity with code completion and generation capabilities. The model can assist in writing code, suggesting solutions, and even generating complete code snippets.

Workarounds for Larger Models

While Llama3 70B is not directly supported, there are workarounds and considerations to keep in mind:

- Model Pruning and Quantization: Techniques like model pruning and quantization can reduce the memory footprint, enabling you to run larger models on less powerful hardware.

- Offloading to the Cloud: For demanding tasks that require the power of a larger model, consider offloading to cloud-based platforms. This allows you to leverage the resources of powerful cloud servers.

- Future Developments: The landscape of local LLM execution is rapidly evolving. As hardware advancements continue, we might see increased support for larger models on consumer-grade GPUs.
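
To get a feel for how far quantization would have to go, the sketch below inverts the memory calculation and asks how many bits per weight a model could afford within a given VRAM budget. The `reserve_gb` parameter is a hypothetical allowance for the KV cache and runtime overhead. The 2 GB value is an assumption for illustration, not a measured figure.

```python
def max_bits_per_weight(n_params_billion: float, vram_gb: float,
                        reserve_gb: float = 0.0) -> float:
    """Largest average bits per weight whose weights fit in the VRAM budget."""
    usable_bytes = (vram_gb - reserve_gb) * 1e9
    return usable_bytes * 8 / (n_params_billion * 1e9)


# Nominal parameter counts; 24 GB is the 4090_24GB's memory.
print(f"70B, no reserve:   {max_bits_per_weight(70, 24):.2f} bits/weight")
print(f"70B, 2 GB reserve: {max_bits_per_weight(70, 24, reserve_gb=2):.2f} bits/weight")
print(f"8B, no reserve:    {max_bits_per_weight(8, 24):.2f} bits/weight")
```

By this estimate, a 70B model would need to average under 3 bits per weight to fit entirely in 24 GB, which is more aggressive than the 4-bit configuration benchmarked above.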

FAQ: Demystifying the World of LLMs

Q: What is quantization?

A: Quantization reduces the memory footprint of an LLM by storing its weights in a lower-precision data type, for example converting 16-bit or 32-bit floating point values to 8-bit or 4-bit integers. The model then fits on devices with less memory, and inference often gets faster because fewer bytes have to be read for each token. The cost is a small loss of precision. Think of it like rounding prices to the nearest dollar: each number is slightly off, but it takes less space to write down.
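
As a toy illustration of the idea, the sketch below applies simple symmetric 8-bit quantization to a handful of made-up weights. It is a simplified teaching example, not the Q4_K_M algorithm: real formats such as Q4_K_M work on blocks of weights with per-block scales and are considerably more involved.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    scale = max(abs(w) for w in weights) / 127.0
    if scale == 0:
        scale = 1.0  # all-zero input: any scale works
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


# Made-up example weights, not taken from any real model.
weights = [0.12, -0.5, 0.33, 0.9, -0.07]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

for w, q, r in zip(weights, quantized, restored):
    print(f"original {w:+.4f} -> stored {q:+4d} -> restored {r:+.4f}")
```

Each restored value differs from the original by at most half of `scale`, which is the rounding error traded for the smaller storage size.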

Q: What are the limitations of running LLMs locally?

A: Running LLMs locally is constrained mainly by memory and compute. The model weights have to fit in RAM or, for GPU inference, in VRAM, and large models can exceed what consumer hardware offers. Even when a model fits, limited compute and memory bandwidth can slow token generation.

Q: What are some alternative devices for local LLMs?

A: While the NVIDIA 4090_24GB is a powerhouse, other devices can also handle local LLM execution. Depending on your needs, you might consider:

- Apple M1 and M2 Macs: Their CPUs and integrated GPUs can run smaller LLMs.

- NVIDIA RTX 3000 Series GPUs: These GPUs offer a good balance between performance and price.

Q: What's the future of local LLMs?

A: The future of local LLMs is bright. We can expect advancements in hardware, software, and optimization techniques that will make it easier and more efficient to run larger models on everyday devices. This will open up new possibilities for developers and users alike.

Keywords

NVIDIA 4090_24GB, Llama3 8B, Llama3 70B, LLM, local LLM, token generation speed, quantization, Q4_K_M, F16, GPU, performance analysis, use cases, translation, code completion, code generation, workarounds, model pruning, cloud computing, future of LLMs.