5 Surprising Facts About Running Llama3 8B on NVIDIA 4090 24GB x2

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. These powerful AI systems, capable of generating human-like text, are transforming industries from creative writing to coding. But running these models locally can be a challenge, requiring powerful hardware and optimized software.

This article takes a deep dive into the performance of Llama3 8B, a leading open-source LLM, on the formidable NVIDIA 4090 24GB x2 setup (two RTX 4090 cards with 24 GB of VRAM each). We'll unveil some surprising facts about this combination, analyze its performance, and provide practical recommendations for developers. So buckle up, tech enthusiasts, and let's explore the cutting edge of local LLM deployment!

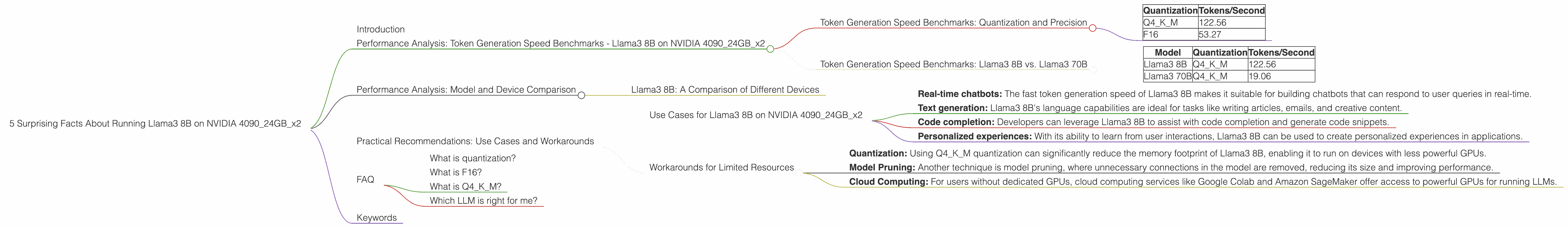

Performance Analysis: Token Generation Speed Benchmarks - Llama3 8B on NVIDIA 4090 24GB x2

Token Generation Speed Benchmarks: Quantization and Precision

LLMs contain billions of parameters, which makes them resource-hungry to run. To shrink a model and speed it up, developers use a technique called quantization: reducing the numerical precision of the model's weights, the parameters learned during training.

Two common formats for running Llama3 locally are Q4_K_M and F16. Q4_K_M is a llama.cpp quantization scheme that stores most weights as 4-bit values, while F16 keeps the weights in 16-bit floating point and serves as the unquantized baseline.

Here's how the token generation speed of Llama3 8B differs between these two formats on NVIDIA 4090 24GB x2:

| Format | Generation speed (tokens/s) |
|---|---|
| Q4_K_M | 122.56 |
| F16 | 53.27 |

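Q4_K_M, despite its much lower precision, generates tokens about 2.3 times faster than F16 (122.56 vs. 53.27 tokens/s). This is largely a matter of memory traffic: producing each token requires reading essentially all of the model's weights, so smaller weights mean less data to move per token. The accuracy cost of this kind of quantization is usually modest, although these benchmarks measure only speed, not output quality.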
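To put the two rates in more familiar terms, the short Python sketch below converts them into average milliseconds per token and computes the speed-up. It uses only the two numbers from the table and treats each one as a steady average rate; the helper name is illustrative.

```python
# Generation speeds (tokens/s) for Llama3 8B on NVIDIA 4090 24GB x2, from the table above.
speeds = {"Q4_K_M": 122.56, "F16": 53.27}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tokens_per_second


for fmt, tps in speeds.items():
    print(f"{fmt}: {ms_per_token(tps):.2f} ms/token")

# Ratio of the two generation rates.
speedup = speeds["Q4_K_M"] / speeds["F16"]
print(f"Q4_K_M speed-up over F16: {speedup:.2f}x")
```

This works out to about 8.16 ms per token for Q4_K_M versus 18.77 ms for F16.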

Token Generation Speed Benchmarks: Llama3 8B vs. Llama3 70B

Let's compare the token generation speed of Llama3 8B with its larger sibling, Llama3 70B.

| Model | Format | Generation speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 70B | Q4_K_M | 19.06 |

With roughly one-ninth as many parameters, Llama3 8B generates tokens about 6.4 times faster than Llama3 70B at the same quantization level. This highlights the trade-off between model size and speed: larger models offer more capability, but they have more weights to read for every token and therefore generate text more slowly.
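One common way to reason about this gap is that single-stream generation tends to be limited by memory bandwidth, so a rough speed ceiling is bandwidth divided by the bytes of weights read per token. The sketch below rests on several assumptions that are not from the benchmark: about 4.9 bits per weight for Q4_K_M, parameter counts of roughly 8.03B and 70.6B, 1008 GB/s of peak bandwidth per RTX 4090, and layers split across the two cards so that only one card reads weights at a time. Treat the results as back-of-the-envelope estimates.

```python
# Rough upper bound on single-stream generation speed, assuming decoding is
# memory-bandwidth bound (every weight is read once per generated token).
GPU_BANDWIDTH_GBPS = 1008.0    # assumed: peak memory bandwidth of one RTX 4090, GB/s
BITS_PER_WEIGHT_Q4_K_M = 4.9   # assumed average bits per weight for Q4_K_M

models = {
    # name: (approximate parameter count, measured tokens/s from the table)
    "Llama3 8B": (8.03e9, 122.56),
    "Llama3 70B": (70.6e9, 19.06),
}


def weight_gigabytes(params: float, bits_per_weight: float) -> float:
    """Size of the weights in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def ceiling_tokens_per_second(params: float, bits_per_weight: float,
                              bandwidth_gbps: float) -> float:
    # Assumes a layer split across GPUs: layers run one card at a time, so the
    # effective bandwidth is roughly that of a single card.
    return bandwidth_gbps / weight_gigabytes(params, bits_per_weight)


for name, (params, measured) in models.items():
    size = weight_gigabytes(params, BITS_PER_WEIGHT_Q4_K_M)
    ceiling = ceiling_tokens_per_second(params, BITS_PER_WEIGHT_Q4_K_M, GPU_BANDWIDTH_GBPS)
    print(f"{name}: ~{size:.1f} GB of weights, ceiling ~{ceiling:.0f} tok/s, "
          f"measured {measured} tok/s")
```

Under these assumptions both measured figures sit below their estimated ceilings (roughly 205 and 23 tokens/s). The estimate also suggests why the 70B model needs both cards: about 43 GB of Q4_K_M weights would not fit on a single 24 GB GPU.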

Performance Analysis: Model and Device Comparison

Llama3 8B: A Comparison of Different Devices

We've established the impressive performance of Llama3 8B on NVIDIA 4090 24GB x2. But how does it compare to other devices?

The data available for Llama3 8B currently covers only NVIDIA 4090 24GB x2, so we can't compare its performance on other devices in this article. However, if you're curious, you can find benchmark data for Llama3 8B and other LLMs on platforms like Hugging Face.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 4090 24GB x2

The combination of Llama3 8B and NVIDIA 4090 24GB x2 opens up a range of possibilities for developers:

- Real-time chatbots: The fast token generation speed of Llama3 8B makes it suitable for building chatbots that can respond to user queries in real-time.

- Text generation: Llama3 8B's language capabilities are ideal for tasks like writing articles, emails, and creative content.

- Code completion: Developers can leverage Llama3 8B to assist with code completion and generate code snippets.

- Personalized experiences: The model's weights don't change as people use it, but by including user preferences or conversation history in the prompt (or by fine-tuning on user data), Llama3 8B can power personalized experiences in applications.

Workarounds for Limited Resources

While the NVIDIA 4090 24GB x2 is a powerful setup, not everyone has access to such high-end hardware. Fortunately, there are workarounds for those with limited resources:

- Quantization: Q4_K_M quantization shrinks Llama3 8B's weights to roughly a third of their F16 size, enabling it to run on GPUs with less memory.

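- Model Pruning: Another technique is model pruning, which removes weights or connections that contribute little to the output. Pruning shrinks the model, but speed gains depend on whether the inference software and hardware can exploit the resulting sparsity, and a pruned model often needs fine-tuning to recover accuracy.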

- Cloud Computing: For users without dedicated GPUs, cloud computing services like Google Colab and Amazon SageMaker offer access to powerful GPUs for running LLMs.
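To see what the quantization workaround means for smaller GPUs, here is a rough memory estimate in Python. It assumes about 8.03B parameters, 16 bits per weight for F16 and roughly 4.9 bits per weight on average for Q4_K_M, plus a hypothetical 1.5 GB allowance for the KV cache and runtime buffers; real usage grows with context length and depends on the inference software.

```python
PARAMS_LLAMA3_8B = 8.03e9                 # approximate parameter count
FORMATS = {"F16": 16.0, "Q4_K_M": 4.9}    # bits per weight (Q4_K_M is an assumed average)
OVERHEAD_GB = 1.5                         # hypothetical allowance for KV cache and buffers


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Weight storage in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits(vram_gb: float, fmt: str) -> bool:
    """True if the weights plus the assumed overhead fit in the given VRAM."""
    return weights_gb(PARAMS_LLAMA3_8B, FORMATS[fmt]) + OVERHEAD_GB <= vram_gb


for vram in (8, 12, 16, 24):
    ok = [fmt for fmt in FORMATS if fits(vram, fmt)]
    print(f"{vram} GB GPU: {', '.join(ok) if ok else 'neither format'}")
```

By this estimate the F16 weights alone (about 16 GB) need a 24 GB card, while Q4_K_M (about 5 GB) leaves room even on an 8 GB GPU.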

FAQ

What is quantization?

Quantization is like simplifying a complex recipe by using fewer ingredients. It's a technique that reduces the precision of a model's parameters (weights) to decrease its size and improve its efficiency. Think of it like using a measuring cup for whole numbers instead of a precise scale for fractions.
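The idea can be made concrete with a few lines of Python. This is a simplified sketch of block-wise "absmax" quantization, assuming a symmetric 4-bit range and one floating-point scale per block; it illustrates the principle behind block-based formats such as Q4_K_M but is not their actual storage layout, and the function names are hypothetical.

```python
def quantize_block(weights, bits=4):
    """Symmetric absmax quantization of one block to signed integers."""
    qmax = 2 ** (bits - 1) - 1                  # 7 for 4 bits: integers in [-7, 7]
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Divide by the shared scale, round to the nearest integer, clamp to range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, q


def quantize(weights, block_size=32, bits=4):
    """Quantize a flat list of weights block by block, one scale per block."""
    return [quantize_block(weights[i:i + block_size], bits)
            for i in range(0, len(weights), block_size)]


def dequantize(blocks):
    """Recover approximate weights: each integer times its block's scale."""
    return [scale * v for scale, q in blocks for v in q]


weights = [0.12, -0.53, 0.91, 0.04, -0.27, 0.66, -0.88, 0.35]
blocks = quantize(weights, block_size=4)
restored = dequantize(blocks)
for original, approx in zip(weights, restored):
    print(f"{original:+.2f} -> {approx:+.4f}")
```

Each restored value is within half a step (scale / 2) of the original. Smaller blocks keep the scales tight and the error low but add more scale values to store, which is the trade-off block-based formats tune.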

What is F16?

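F16 refers to the 16-bit (half-precision) floating-point format, which uses 1 sign bit, 5 exponent bits, and 10 mantissa bits. It halves memory use compared with the 32-bit floating-point format while keeping enough precision for inference, which is why it often serves as the unquantized baseline.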
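Python's standard `struct` module can pack a value as IEEE 754 half precision (the `"e"` format code), which makes the precision loss easy to see. This small sketch rounds a few sample values through 32-bit and 16-bit storage; the helper names are illustrative.

```python
import struct


def to_f16(x: float) -> float:
    """Round a Python float to the nearest half-precision value and back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_f32(x: float) -> float:
    """Same round trip through 32-bit single precision, for comparison."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


for value in (0.1, 3.14159265, 1000.7):
    print(f"{value}: f32={to_f32(value)!r}  f16={to_f16(value)!r}")

print(struct.calcsize("<e"), struct.calcsize("<f"))  # bytes per value: 2 and 4
```

In F16, 0.1 comes back as 0.0999755859375 and 1000.7 as 1000.5: near 1000 the gap between representable half-precision values is 0.5, so small rounding errors are the price of halving storage.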

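What is Q4_K_M?

Q4_K_M is one of llama.cpp's "k-quant" formats. It stores most weights as 4-bit integers in small blocks, each with its own scale factors, and the "M" (medium) variant keeps some tensors at higher precision, so the average cost is a little under 5 bits per weight. This cuts model size to roughly a third of F16 and, as the benchmarks above show, substantially increases generation speed.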
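The scale factors are why "4-bit" formats cost more than 4 bits per weight. In llama.cpp's k-quants, weights are grouped into super-blocks of 256, each carrying scale metadata next to the packed values. The byte counts below reflect our understanding of ggml's `block_q4_K` and `block_q6_K` layouts and should be checked against the ggml source; the exact per-tensor mix that Q4_K_M uses is not modeled.

```python
QK_K = 256  # weights per k-quant super-block

# Assumed byte layouts per super-block, following ggml's k-quant structs.
LAYOUTS = {
    # Q4_K: two f16 scales (d, dmin), 12 bytes of packed 6-bit sub-block
    # scales and mins, and 256 4-bit values packed two per byte.
    "Q4_K": 2 * 2 + 12 + QK_K // 2,
    # Q6_K: low 4 bits and high 2 bits of 256 6-bit values,
    # 16 int8 sub-block scales, and one f16 super-block scale.
    "Q6_K": QK_K // 2 + QK_K // 4 + QK_K // 16 + 2,
}


def bits_per_weight(block_bytes: int, weights_per_block: int = QK_K) -> float:
    """Average storage cost per weight, including scale metadata."""
    return block_bytes * 8 / weights_per_block


for name, nbytes in LAYOUTS.items():
    print(f"{name}: {nbytes} bytes per {QK_K} weights -> {bits_per_weight(nbytes)} bits/weight")
```

Q4_K works out to 4.5 bits per weight and Q6_K to 6.5625. Because Q4_K_M stores some tensors at higher precision, its overall average lands somewhat above 4.5 bits per weight.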

Which LLM is right for me?

Choosing the right LLM depends on your specific needs and resources. Smaller models like Llama3 8B are faster but less capable, while larger models like Llama3 70B offer greater accuracy but require more resources.

Keywords

Llama3 8B, NVIDIA 4090 24GB x2, LLM, Large Language Models, Token Generation Speed, Quantization, Q4_K_M, F16, GPU, Deep Learning, AI, NLP, Natural Language Processing, Open Source, Local Inference, Performance Benchmarks, Chatbots, Text Generation, Code Completion, Personalized Experiences, Workarounds, Cloud Computing