5 Surprising Facts About Running Llama3 8B on NVIDIA 4080 16GB

Introduction

Large Language Models (LLMs) can generate human-like text, translate languages, draft creative content, and answer questions. Running them locally, however, can be a challenge, especially on hardware with limited memory and compute.

In this deep dive, we'll explore the performance of the Llama3 8B model on the NVIDIA 4080 16GB graphics card, revealing some surprising insights and practical recommendations. Whether you're a seasoned developer or just starting your journey with LLMs, this article is for you!

Performance Analysis: Token Generation Speed Benchmarks

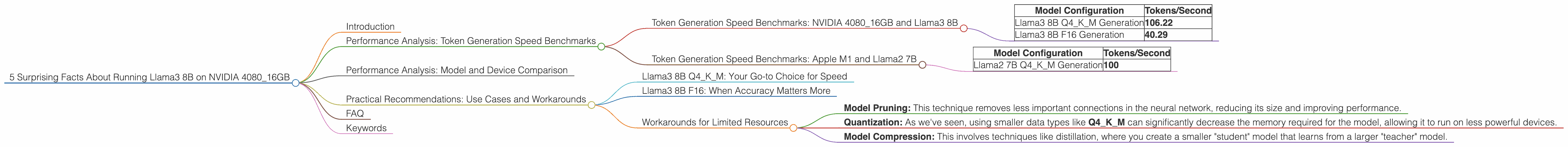

Token Generation Speed Benchmarks: NVIDIA 4080 16GB and Llama3 8B

Let's dive right into the numbers. We'll be looking at token generation speed: the number of tokens the model produces per second while it is writing its response.
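As a rough sketch of how a tokens-per-second figure can be computed, the snippet below times a generation run and divides the token count by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model (it just sleeps and yields dummy tokens), so the number it prints says nothing about any actual hardware.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one generation run and return tokens produced per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 50 dummy tokens with a short delay."""
    for i in range(50):
        time.sleep(0.001)  # pretend each token takes about 1 ms to produce
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/second")
```

Real benchmarking tools typically average several runs and report prompt processing separately from generation; this sketch only shows the basic ratio.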

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Generation | 106.22 |
| Llama3 8B F16 Generation | 40.29 |

What's going on here?

- Q4_K_M is a 4-bit quantization format (the name comes from the llama.cpp ecosystem). Most weights are stored in roughly 4 bits instead of 16, so the model is much smaller and each generated token requires reading far less data from GPU memory. This speeds up inference, at the cost of a slight loss in accuracy.

- F16 is the standard 16-bit floating-point format. It keeps the weights at 16-bit precision, so accuracy is higher than with Q4_K_M, but the model needs several times as much memory and generates tokens more slowly.
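To make the size difference concrete, here is a back-of-the-envelope estimate of the weight footprint in each format. It assumes a round 8 billion parameters and an average of about 4.8 bits per weight for Q4_K_M; both numbers are approximations introduced for illustration, and the estimate ignores the KV cache and other runtime overhead.

```python
def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8.0e9    # assumption: "8B" taken as a round 8 billion parameters
F16_BITS = 16.0     # 16-bit floats: 2 bytes per weight
Q4_K_M_BITS = 4.8   # assumption: rough average, the real value varies by model

if __name__ == "__main__":
    f16_gb = weight_size_gb(N_PARAMS, F16_BITS)
    q4_gb = weight_size_gb(N_PARAMS, Q4_K_M_BITS)
    print(f"F16 weights:    ~{f16_gb:.1f} GB")
    print(f"Q4_K_M weights: ~{q4_gb:.1f} GB")
    print(f"Size ratio:     ~{f16_gb / q4_gb:.1f}x")
```

Under these assumptions the quantized weights fit comfortably in 16 GB of VRAM, while the F16 weights alone come close to filling it.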

Key Takeaway:

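At 40.29 tokens/second, F16 is roughly 2.6 times slower than Q4_K_M (106.22 tokens/second). It may still be the preferred choice for tasks that need the highest accuracy, provided memory allows: at 2 bytes per parameter, the F16 weights of an 8-billion-parameter model take about 16 GB, which leaves almost no headroom on a 16 GB card.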
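The gap is easier to feel as waiting time. The short calculation below uses the two benchmark figures from the table and a hypothetical 500-token response (the response length is an assumption chosen for illustration) to show the speedup and the time each format would need.

```python
Q4_K_M_TPS = 106.22  # tokens/second, from the benchmark table
F16_TPS = 40.29      # tokens/second, from the benchmark table


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate n_tokens at a constant generation speed."""
    return n_tokens / tokens_per_second


speedup = Q4_K_M_TPS / F16_TPS

if __name__ == "__main__":
    n = 500  # hypothetical response length
    print(f"Q4_K_M is {speedup:.2f}x faster than F16")
    print(f"{n} tokens at Q4_K_M: {seconds_for(n, Q4_K_M_TPS):.1f} s")
    print(f"{n} tokens at F16:    {seconds_for(n, F16_TPS):.1f} s")
```

This assumes a constant generation speed and ignores prompt processing time, so treat the results as rough estimates.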

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

For perspective, let's briefly look at the Apple M1 processor running Llama2 7B. This is not quite apples to apples (it's Macs versus GPUs, and a different model), but it gives a useful reference point.

| Model Configuration | Tokens/Second |
|---|---|
| Llama2 7B Q4_K_M Generation | 100 |

Key Takeaway:

At 100 tokens/second, the M1 lands close to the 106.22 tokens/second of the NVIDIA 4080 16GB running Llama3 8B with Q4_K_M quantization, showcasing the efficiency of Apple silicon for running LLMs. Keep in mind, however, that the M1 is a different architecture and that Llama2 7B is a different, slightly smaller model, so this comparison is only a rough benchmark for those who prefer the ease of use and portability of a Mac.

Performance Analysis: Model and Device Comparison

Since we're focusing on the NVIDIA 4080 16GB, it would be useful to see how this card handles other models and how it stacks up against other hardware configurations. Unfortunately, the data we have for this specific GPU covers only Llama3 8B.

Why are we missing data for other Llama models?

Gathering performance data for different models and hardware configurations is an ongoing effort. Some models might not have been benchmarked yet, or the data might not be publicly available. We can't compare apples to oranges here, so we'll stick to the NVIDIA 4080 16GB and Llama3 8B for now.

Practical Recommendations: Use Cases and Workarounds

Llama3 8B Q4_K_M: Your Go-to Choice for Speed

If you're looking for the fastest inference on the NVIDIA 4080 16GB, the Llama3 8B Q4_K_M configuration is your best bet. At 106.22 tokens/second, it generates tokens about 2.6 times faster than the F16 version (40.29 tokens/second).

Llama3 8B F16: When Accuracy Matters More

While the Q4_K_M configuration excels in speed, it might not be the ideal choice for every task. If accuracy is paramount, the Llama3 8B F16 format delivers higher fidelity, at the cost of slower generation and much higher memory use.

Workarounds for Limited Resources

- Model Pruning: This technique removes less important connections in the neural network, reducing its size and improving performance.

- Quantization: As we've seen, quantized formats such as Q4_K_M store each weight in fewer bits, which significantly decreases the memory required for the model and allows it to run on less powerful devices.

- Model Compression: This involves techniques like distillation, where you create a smaller "student" model that learns from a larger "teacher" model.
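To give a feel for the first item, here is a minimal sketch of magnitude pruning on a plain list of weights: the smallest-magnitude values are set to zero. This is one simple pruning criterion chosen for illustration, not a description of how any particular Llama model was pruned, and `magnitude_prune` is a hypothetical helper name.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest magnitudes."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    layer = [0.9, -0.05, 0.3, 0.01, -0.7, 0.2]
    print(magnitude_prune(layer, sparsity=0.5))
```

Note that zeroed weights only save memory or time if they are stored and computed in a sparse format; in a dense layout the tensor stays the same size.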

FAQ

Q: What is a token?

A: In the context of LLMs, a token is a basic unit of text. Think of it like a word, although a token can also be a piece of a word, a punctuation mark, or a special character, so a sentence usually contains more tokens than words.
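As a toy illustration only, the snippet below splits text into words and punctuation marks with a regular expression. Real LLM tokenizers use learned subword vocabularies and will split text differently, so `toy_tokenize` is a hypothetical helper meant to show why token counts and word counts differ, not how Llama3 tokenizes.

```python
import re
from typing import List


def toy_tokenize(text: str) -> List[str]:
    """Split text into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


if __name__ == "__main__":
    sentence = "Hello, world! It's fast."
    tokens = toy_tokenize(sentence)
    print(tokens)
    print(f"{len(sentence.split())} words, {len(tokens)} toy tokens")
```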

Q: What is quantization?

A: Quantization is like putting a simplified map of a city in your pocket instead of carrying the whole city atlas. It uses smaller data types to represent the information within a model, shrinking it in size and making it faster to run.
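The idea can be sketched with a deliberately simplified scheme: a block of weights shares one floating-point scale, and each weight is stored as a small integer in the range -7 to 7, which fits in 4 bits. This is not the actual Q4_K_M layout, which is more elaborate; the function names are hypothetical and the example only shows the round trip and the rounding error it introduces.

```python
from typing import List, Tuple


def quantize_4bit(block: List[float]) -> Tuple[float, List[int]]:
    """Map a block of floats to integers in [-7, 7] plus one shared scale."""
    max_abs = max(abs(x) for x in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Clamp after rounding to guard against floating-point edge cases.
    quantized = [max(-7, min(7, round(x / scale))) for x in block]
    return scale, quantized


def dequantize_4bit(scale: float, quantized: List[int]) -> List[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * q for q in quantized]


if __name__ == "__main__":
    weights = [0.7, -0.3, 0.12, 0.0]
    scale, q = quantize_4bit(weights)
    restored = dequantize_4bit(scale, q)
    print("integers:", q)
    print("restored:", [round(v, 3) for v in restored])
    print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

The restored values are close to the originals but not identical, which is the accuracy trade-off quantization makes in exchange for a smaller model.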

Q: Are there any other hardware options for running LLMs locally?

A: You bet! There is a wide range of choices, including CPUs, GPUs from different manufacturers, and even specially designed AI accelerators. The best choice depends on your needs and budget.

Keywords

NVIDIA 4080 16GB, Llama3 8B, Token Generation Speed, Llama3 70B, Quantization, F16, Q4_K_M, Performance Benchmark, Model Pruning, Model Compression, Local LLM, AI Inference