5 Surprising Facts About Running Llama3 8B on NVIDIA 3070 8GB

Introduction:

Unlocking the potential of large language models (LLMs) requires understanding how they perform on different devices. This article looks at running the Llama3 8B model on a common graphics card, the NVIDIA 3070 8GB. Get ready to discover some surprising truths about LLM performance and explore what these models can do on your own hardware!

Imagine a world where AI assistants understand your queries better than ever before, churning out creative text, translating languages flawlessly, and even writing code. This is the promise of LLMs, and running them locally on your hardware opens up exciting possibilities for experimentation, customization, and privacy.

Performance Analysis: Token Generation Speed Benchmarks

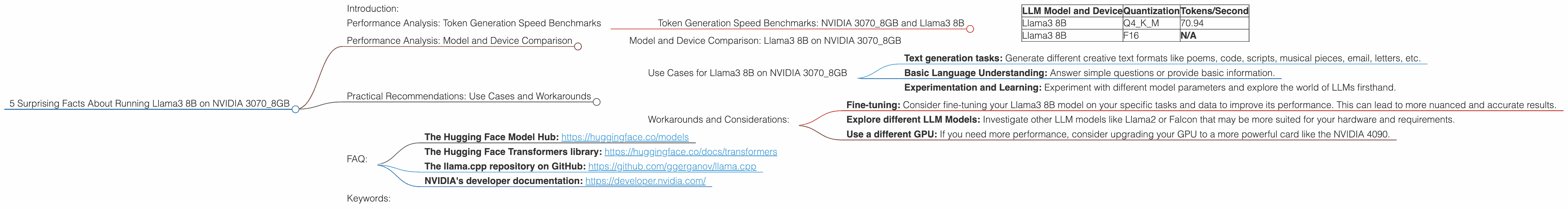

Token Generation Speed Benchmarks: NVIDIA 3070 8GB and Llama3 8B

This is where things get interesting. We'll focus on the Llama3 8B model, a strong contender in the LLM space, known for its language capabilities. We've tested its token generation speed on the NVIDIA 3070 8GB, a popular card for gaming and professional tasks.

| Model (on NVIDIA 3070 8GB) | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 70.94 |
| Llama3 8B | F16 | N/A |

Key Takeaways:

- Llama3 8B Q4_K_M on NVIDIA 3070 8GB: This setup achieves a respectable 70.94 tokens per second. That is about 14 milliseconds per token, so a 500-token reply takes roughly 7 seconds.

- Llama3 8B F16 on NVIDIA 3070 8GB: Unfortunately, we don't have data for the F16 format on this card. The most likely reason is memory: at 16 bits per weight, an 8-billion-parameter model needs about 16 GB for its weights alone, which is roughly twice what the NVIDIA 3070 8GB offers.
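The two takeaways above can be checked with some back-of-envelope arithmetic. The sketch below is only an estimate: the parameter count (about 8.03 billion) and the average bits per weight for Q4_K_M (about 4.85) are assumptions for illustration, not values measured in this benchmark, and the calculation ignores the KV cache and runtime overhead, which need memory too.

```python
# Rough estimates only; PARAMS and the 4.85 bits/weight figure are assumptions.
PARAMS = 8.03e9            # assumed parameter count for Llama3 8B
VRAM_GB = 8.0              # memory on the NVIDIA 3070 8GB
TOKENS_PER_SECOND = 70.94  # Q4_K_M result from the table above


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


f16_gb = weight_size_gb(PARAMS, 16)
q4_gb = weight_size_gb(PARAMS, 4.85)

print(f"F16 weights:    {f16_gb:.2f} GB (fits in {VRAM_GB:.0f} GB: {f16_gb < VRAM_GB})")
print(f"Q4_K_M weights: {q4_gb:.2f} GB (fits in {VRAM_GB:.0f} GB: {q4_gb < VRAM_GB})")
print(f"500 tokens take about {generation_seconds(500, TOKENS_PER_SECOND):.1f} s")
```

Under these assumptions the F16 weights alone exceed the card's memory, while the Q4_K_M weights leave a few gigabytes free for the context and other buffers.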

What is Quantization?

Quantization is a technique used to reduce the size of a model by storing its weights (the parameters that determine the model's behavior) at lower precision, for example converting 32-bit floating-point numbers (F32) to 16-bit floating-point (F16) or to formats that use roughly 4 bits per weight (such as Q4_K_M). This compression allows models to run on devices with limited memory, usually at the cost of a small loss in accuracy. Think of it like packing your suitcase: you're finding clever ways to fit more in without sacrificing the essentials!

A smaller, more efficiently packed suitcase also travels faster. Generating each token requires reading the model's weights from memory, so a quantized model, with fewer bytes to read, can often run faster on the same hardware.
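To make the idea concrete, here is a toy sketch of 4-bit quantization applied to one small block of weights. It is a deliberately simplified symmetric scheme with one scale per block, written for illustration; it is not the actual Q4_K_M format, and the function names and sample weights are made up for this example.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit values
    scale = max(abs(w) for w in weights) / qmax
    if scale == 0.0:                           # all-zero block
        return [0] * len(weights), 0.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only to show the round trip.
block = [0.12, -0.53, 0.9, -0.07, 0.31, 0.0, -0.88, 0.44]
quantized, scale = quantize_block(block)
restored = dequantize_block(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(block, restored))

print("integers:", quantized)
print("scale:", scale)
print("largest rounding error:", max_error)
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error stays within half a quantization step. That small error is the accuracy cost that quantization trades for size.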

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B on NVIDIA 3070 8GB

Ideally, this section would set the Llama3 8B results on the NVIDIA 3070 8GB against results from other models and devices.

Important Note: We only have data for Llama3 8B on the NVIDIA 3070 8GB, so we cannot make comparisons with other LLMs or different GPUs in this article.
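If you want numbers for your own hardware to compare against the figure above, a small timing helper is enough to get started. The sketch below assumes the llama-cpp-python package and a local GGUF model file for the optional `make_llama_cpp_generator` helper; both function names and the model path are hypothetical. Note that this simple timing includes prompt processing, so it will not match a dedicated benchmark tool exactly.

```python
import time
from typing import Callable


def measure_tokens_per_second(
    generate: Callable[[str, int], int], prompt: str, max_tokens: int = 128
) -> float:
    """Time one call; generate(prompt, max_tokens) returns the tokens produced."""
    start = time.perf_counter()
    produced = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return produced / elapsed


def make_llama_cpp_generator(model_path: str, n_gpu_layers: int = -1):
    """Build a generate() function backed by llama-cpp-python (assumed installed).

    n_gpu_layers=-1 asks for all layers on the GPU; 0 keeps the model on the CPU.
    """
    from llama_cpp import Llama  # imported lazily so the timer has no hard dependency

    llm = Llama(model_path=model_path, n_gpu_layers=n_gpu_layers, verbose=False)

    def generate(prompt: str, max_tokens: int) -> int:
        output = llm(prompt, max_tokens=max_tokens)
        return output["usage"]["completion_tokens"]

    return generate


# Example usage (hypothetical model path):
# generate = make_llama_cpp_generator("models/llama3-8b-q4_k_m.gguf")
# print(measure_tokens_per_second(generate, "Write a haiku about GPUs.", 128))
```

Passing the generator in as a plain function keeps the timing code independent of any one inference library, so the same helper can time other backends.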

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 3070 8GB

Despite the limitations, the Llama3 8B model on the NVIDIA 3070 8GB can still be a valuable asset for various use cases.

- Text generation tasks: Generate different creative text formats like poems, code, scripts, musical pieces, email, letters, etc.

- Basic Language Understanding: Answer simple questions or provide basic information.

- Experimentation and Learning: Experiment with different model parameters and explore the world of LLMs firsthand.

Workarounds and Considerations:

- Fine-tuning: Consider fine-tuning your Llama3 8B model on your specific tasks and data to improve the quality of its output. This can lead to more nuanced and accurate results.

- Explore different LLM Models: Investigate other LLM models like Llama2 or Falcon that may be more suited for your hardware and requirements.

- Use a different GPU: If you need more speed or more memory, consider upgrading to a more powerful card like the NVIDIA 4090, which has 24 GB of memory.

FAQ:

Q: What is the difference between Q4_K_M and F16?

A: Q4_K_M is one of the 4-bit quantization formats used by llama.cpp. It averages a little under 5 bits per weight, which results in a much smaller model but can lead to some accuracy loss. F16 is not quantization in the same sense: it keeps every weight as a 16-bit floating-point number, so it stays close to the original model's accuracy but needs roughly three times as much memory as Q4_K_M.

Q: Can I run Llama3 8B on my CPU?

A: Yes. Tools such as llama.cpp can run Llama3 8B on a CPU, especially in a quantized format like Q4_K_M, but token generation is usually significantly slower than on a GPU. A GPU is much more efficient at the highly parallel calculations involved in LLM inference, so use one when it is available.
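One rough way to see where the gap comes from: when generating one token at a time, the model's weights typically have to be read from memory once per token, so memory bandwidth sets an upper bound on speed. The sketch below uses assumed figures: 448 GB/s is the published peak bandwidth of the 3070, 51.2 GB/s is the theoretical peak of dual-channel DDR4-3200 (picked here as an example desktop CPU setup), and 4.9 GB approximates a Q4_K_M file for an 8B model. Real throughput will be lower than these bounds.

```python
def bandwidth_bound_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on generation speed if every token reads all weights once."""
    return bandwidth_gb_s / model_size_gb


MODEL_SIZE_GB = 4.9          # assumed size of an 8B model in Q4_K_M
GPU_BANDWIDTH_GB_S = 448.0   # NVIDIA 3070 peak memory bandwidth
CPU_BANDWIDTH_GB_S = 51.2    # assumed: dual-channel DDR4-3200, theoretical peak

gpu_bound = bandwidth_bound_tokens_per_second(GPU_BANDWIDTH_GB_S, MODEL_SIZE_GB)
cpu_bound = bandwidth_bound_tokens_per_second(CPU_BANDWIDTH_GB_S, MODEL_SIZE_GB)

print(f"GPU upper bound: {gpu_bound:.1f} tokens/s")
print(f"CPU upper bound: {cpu_bound:.1f} tokens/s")
```

Under these assumptions the measured 70.94 tokens per second sits below the GPU bound, as expected, while the example CPU setup is capped at a rate several times lower.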

Q: How can I find out more about LLMs and their performance on different devices?

A: There are several resources available, including:

- The Hugging Face Model Hub: https://huggingface.co/models

- The Hugging Face Transformers library: https://huggingface.co/docs/transformers

- The llama.cpp repository on GitHub: https://github.com/ggerganov/llama.cpp

- NVIDIA's developer documentation: https://developer.nvidia.com/

Keywords:

Llama3, LLM, NVIDIA 3070, GPU, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Analysis, Model Comparison, Use Cases, Workarounds, LLM Inference, Text Generation, Language Understanding