5 Surprising Facts About Running Llama3 8B on Apple M1 Max

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and advancements emerging constantly. One of the key trends is the increasing focus on local LLMs, which let users run these powerful tools on personal devices. This shift opens up exciting possibilities for developers and enthusiasts exploring the boundaries of AI and machine learning.

In this article, we'll delve into the performance of Llama3 8B, a notable LLM, on the Apple M1 Max chip. We'll uncover some surprising facts about its capabilities and explore potential applications for developers. Buckle up, because we're going on a deep dive into the fascinating world of local LLMs!

Performance Analysis: Token Generation Speed Benchmarks - Apple M1 Max and Llama2 7B

The Apple M1 Max is a powerhouse of a chip, with up to 32 GPU cores and 400 GB/s of memory bandwidth. But how does it handle the computationally demanding task of running LLMs like Llama3 8B? Let's start with the token generation speed benchmarks for Llama2 7B as a baseline.

Llama2 7B on Apple M1 Max

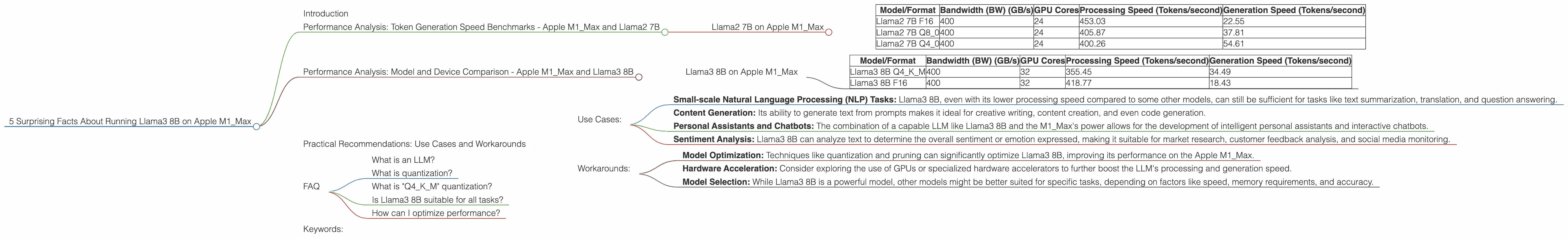

| Model/Format | Bandwidth (GB/s) | GPU Cores | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|---|
| Llama2 7B F16 | 400 | 24 | 453.03 | 22.55 |
| Llama2 7B Q8_0 | 400 | 24 | 405.87 | 37.81 |
| Llama2 7B Q4_0 | 400 | 24 | 400.26 | 54.61 |

Key Takeaways:

- Processing vs. Generation: The Apple M1 Max processes prompt tokens roughly 7 to 20 times faster than it generates new ones, depending on the format. Prompt processing handles many tokens in one batched pass and is limited mainly by compute. Generation produces one token at a time and has to read the model's weights from memory for every token, so it is limited mainly by memory bandwidth.

- Quantization: As the table above shows, quantization plays a crucial role in Llama2 7B's performance. Converting 16-bit floating-point weights (F16) into smaller data types like Q8_0 and Q4_0 raises generation speed from 22.55 to 37.81 and 54.61 tokens/second, while processing speed drops slightly (from 453.03 to about 400 tokens/second). This demonstrates the power of quantization for speeding up text generation on resource-constrained devices.

- Bandwidth: With a memory bandwidth of 400 GB/s, the Apple M1 Max can stream model weights from memory quickly, which matters most for generation speed, since every generated token requires reading the weights again.
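
The bandwidth point can be made concrete with a back-of-the-envelope estimate. The sketch below assumes generation is limited by how fast the weights can be streamed from memory, so a rough ceiling on tokens/second is bandwidth divided by model size. The parameter count (about 6.74 billion for Llama2 7B) and the bits-per-weight figures for Q8_0 and Q4_0 (8.5 and 4.5, including per-block scales) are assumptions that the table does not state, and the function names are purely illustrative.

```python
# Rough upper bound on generation speed, assuming every generated token
# requires reading all model weights from memory once.

BANDWIDTH_GB_S = 400.0   # from the table above
PARAMS_BILLIONS = 6.74   # assumption: approximate parameter count of Llama2 7B

# Assumption: effective bits per weight, including per-block scale factors.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/second) from the table above.
MEASURED_TOKENS_S = {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61}


def model_size_gb(params_billions, bits_per_weight):
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8.0


def generation_ceiling(bandwidth_gb_s, size_gb):
    """Bandwidth-bound ceiling on tokens/second for a model of this size."""
    return bandwidth_gb_s / size_gb


def summarize():
    """Return (format, size in GB, ceiling, measured) for each format."""
    rows = []
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = model_size_gb(PARAMS_BILLIONS, bits)
        ceiling = generation_ceiling(BANDWIDTH_GB_S, size)
        rows.append((fmt, size, ceiling, MEASURED_TOKENS_S[fmt]))
    return rows


if __name__ == "__main__":
    for fmt, size, ceiling, measured in summarize():
        print(f"{fmt}: ~{size:.1f} GB, ceiling ~{ceiling:.0f} tok/s, "
              f"measured {measured} tok/s")
```

Under these assumptions the ceilings come out at roughly 30, 56, and 105 tokens/second, and each measured value sits below its ceiling. That is consistent with generation being bandwidth-bound, though it is only an estimate.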

Performance Analysis: Model and Device Comparison - Apple M1 Max and Llama3 8B

Now let's turn to the title subject, Llama3 8B, and compare its performance to Llama2 7B on the Apple M1 Max.

Llama3 8B on Apple M1 Max

| Model/Format | Bandwidth (GB/s) | GPU Cores | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|---|
| Llama3 8B Q4_K_M | 400 | 32 | 355.45 | 34.49 |
| Llama3 8B F16 | 400 | 32 | 418.77 | 18.43 |

Key Takeaways:

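- Llama3 8B vs. Llama2 7B: The closest comparison available here is Llama3 8B Q4_K_M against Llama2 7B Q4_0. Llama3 8B is slower on both measures: 355.45 vs. 400.26 tokens/second for processing and 34.49 vs. 54.61 tokens/second for generation. At F16 it also generates more slowly (18.43 vs. 22.55 tokens/second). The gap is plausibly explained by Llama3 8B's larger parameter count and by Q4_K_M storing more bits per weight than Q4_0, although the two tables also use different GPU-core configurations, so the comparison is not like-for-like.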

- Quantization: Similar to Llama2 7B, Llama3 8B also benefits from quantization. The Q4_K_M format, a 4-bit "k-quant" scheme from llama.cpp, nearly doubles generation speed compared to F16 (34.49 vs. 18.43 tokens/second) at the cost of lower processing speed (355.45 vs. 418.77 tokens/second).

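- GPU Cores: The Llama3 8B numbers come from the 32-core GPU variant of the M1 Max, while the Llama2 7B numbers come from the 24-core variant. Because the two tables use different models, they do not isolate the effect of the extra GPU cores.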
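
To see what the trade-off between processing and generation speed means in practice, it can help to estimate an end-to-end response time. The sketch below is a simplified model that assumes prompt tokens are consumed at the processing speed and output tokens are produced at the generation speed, ignoring model load time and other overhead. The workload of 512 prompt tokens and 256 output tokens is a hypothetical example, not something measured in the benchmarks.

```python
# Estimate response time from the two speeds in the Llama3 8B table.
# Simplification: total time = prompt time + generation time, nothing else.

SPEEDS = {
    "Q4_K_M": {"processing": 355.45, "generation": 34.49},
    "F16": {"processing": 418.77, "generation": 18.43},
}


def response_time_s(fmt, prompt_tokens, output_tokens):
    """Seconds to read the prompt and then generate the output tokens."""
    speed = SPEEDS[fmt]
    prompt_time = prompt_tokens / speed["processing"]
    generation_time = output_tokens / speed["generation"]
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical workload: a 512-token prompt and a 256-token answer.
    for fmt in SPEEDS:
        seconds = response_time_s(fmt, prompt_tokens=512, output_tokens=256)
        print(f"{fmt}: {seconds:.1f} s")
```

For this workload the estimate is about 9 seconds for Q4_K_M and about 15 seconds for F16. Generation dominates the total, which is why the quantized format comes out ahead despite its lower processing speed.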

Practical Recommendations: Use Cases and Workarounds

Now that we have a clear understanding of Llama3 8B's performance on the Apple M1 Max, let's explore practical use cases and workarounds for developers.

Use Cases:

- Small-scale Natural Language Processing (NLP) Tasks: Llama3 8B, even with its lower processing speed compared to some other models, can still be sufficient for tasks like text summarization, translation, and question answering.

- Content Generation: Its ability to generate text from prompts makes it ideal for creative writing, content creation, and even code generation.

- Personal Assistants and Chatbots: The combination of a capable LLM like Llama3 8B and the M1 Max's power allows for the development of intelligent personal assistants and interactive chatbots.

- Sentiment Analysis: Llama3 8B can analyze text to determine the overall sentiment or emotion expressed, making it suitable for market research, customer feedback analysis, and social media monitoring.

Workarounds:

- Model Optimization: Techniques like quantization and pruning can significantly optimize Llama3 8B, improving its performance on the Apple M1 Max.

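- Hardware Acceleration: Make sure inference is actually running on the M1 Max's GPU rather than on the CPU alone. If that is still not fast enough, consider hardware with more memory bandwidth or a dedicated accelerator to further boost processing and generation speed.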

- Model Selection: While Llama3 8B is a powerful model, other models might be better suited for specific tasks, depending on factors like speed, memory requirements, and accuracy.
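
The model selection point can be sketched as a small helper that picks the first option meeting a minimum generation speed. The speeds are the measured values from the tables in this article. The preference order (most preferred first) and the `pick_model` function are hypothetical and stand in for whatever quality or memory criteria matter for a given project.

```python
# Options listed in order of preference (most preferred first).
# The order is an assumption for illustration; the speeds are the measured
# generation speeds (tokens/second) from the tables in this article.
OPTIONS = [
    ("Llama3 8B F16", 18.43),
    ("Llama3 8B Q4_K_M", 34.49),
    ("Llama2 7B Q4_0", 54.61),
]


def pick_model(options, min_tokens_per_s):
    """Return the first option that is fast enough, or None if none is."""
    for name, tokens_per_s in options:
        if tokens_per_s >= min_tokens_per_s:
            return name
    return None


if __name__ == "__main__":
    for target in (10, 30, 50, 100):
        print(f"need {target} tok/s -> {pick_model(OPTIONS, target)}")
```

Keeping the preference order separate from the speed threshold makes it easy to swap in a different ranking, for example one based on memory footprint.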

FAQ

What is an LLM?

LLMs, or large language models, are AI models trained on massive datasets of text and code, allowing them to understand, generate, and manipulate human language. They can perform various tasks like generating text, translating languages, writing different kinds of creative content, and answering your questions in an informative way.

What is quantization?

Quantization simplifies the data stored in an LLM by reducing the precision of numbers. Imagine representing the height of a person with a very precise measurement like 1.75432 meters. Quantization would round that down to 1.75 meters. This doesn’t affect the overall accuracy much, but it significantly reduces the amount of data that needs to be stored and processed.
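
As a minimal sketch of the idea, the code below rounds a handful of floating-point values to 8-bit integers that share a single scale factor, then converts them back. This is a simplified illustration only: real formats such as Q8_0 or Q4_0 work on fixed-size blocks of weights with their own storage layouts, and the function names and sample values here are made up for the example.

```python
def quantize(values):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Avoid dividing by zero when every value is 0.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.9, -0.07]  # made-up sample values
    quantized, scale = quantize(weights)
    restored = dequantize(quantized, scale)
    for original, approx in zip(weights, restored):
        print(f"{original:+.4f} -> {approx:+.4f}")
```

Each restored value differs from the original by at most half a quantization step, while the stored integers need only 8 bits each instead of 16 or 32.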

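What is "Q4_K_M" quantization?

Q4_K_M is one of the "k-quant" formats used by llama.cpp. The 4 refers to roughly 4 bits per weight, the K to the k-quant family (weights are quantized in blocks that share scaling factors), and the M to the medium-size variant, which keeps some tensors at higher precision. Using fewer bits to represent the network's weights means less data to read from memory for each token, which leads to faster generation.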

Is Llama3 8B suitable for all tasks?

No, Llama3 8B is not a one-size-fits-all solution. It depends heavily on the specific task's requirements. Choose the LLM that's best suited for your needs.

How can I optimize performance?

Consider factors like quantization, pruning, hardware acceleration, and even choosing a different model if a smaller, faster model will meet your needs.

Keywords:

Apple M1 Max, Llama3 8B, LLM, Local LLM, Token Generation Speed, Processing Speed, Generation Speed, Quantization, Q4_K_M, Bandwidth, GPU Cores, NLP, Natural Language Processing, Use Cases, Workarounds, Performance Analysis, Model Optimization, Hardware Acceleration, Model Selection.