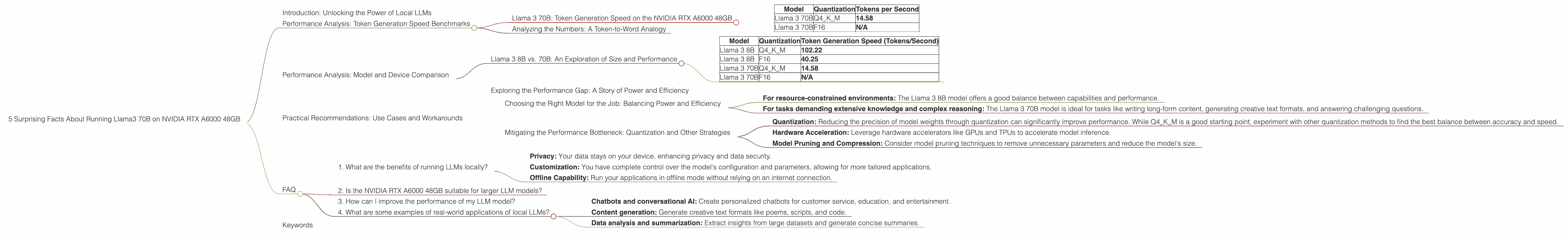

5 Surprising Facts About Running Llama 3 70B on NVIDIA RTX A6000 48GB

Are you ready to unleash the power of large language models (LLMs) on your local machine? The world of LLMs is exploding, and running these models locally is becoming increasingly popular. But how does a behemoth like Llama 3 70B perform on a top-tier GPU like the NVIDIA RTX A6000 48GB? Let's dive deep into the data and uncover some surprising facts.

Introduction: Unlocking the Power of Local LLMs

Imagine having access to the incredible capabilities of LLMs like ChatGPT, but running them directly on your own hardware. This opens a world of possibilities for developers, researchers, and individuals seeking more control over their AI interactions.

However, running massive LLMs like Llama 3 70B locally requires powerful hardware, and even then, it can be a challenge to optimize for the best performance. In this article, we'll explore the performance of Llama 3 70B on the NVIDIA RTX A6000 48GB GPU, analyzing key aspects like token generation speed and model comparison.

We'll also share practical recommendations for using this powerful combination to achieve impressive results in various applications. Hold on tight, because the journey into the heart of local LLMs is about to begin!

Performance Analysis: Token Generation Speed Benchmarks

Llama 3 70B: Token Generation Speed on the NVIDIA RTX A6000 48GB

Let's start with the heart of the matter: token generation speed. This is the rate at which the model can generate new text, which directly impacts the responsiveness and efficiency of your LLM applications.

| Model | Quantization | Tokens per Second |
|---|---|---|
| Llama 3 70B | Q4_K_M | 14.58 |
| Llama 3 70B | F16 | N/A |

The benchmark results show that Llama 3 70B with Q4_K_M quantization generates 14.58 tokens per second on the NVIDIA RTX A6000 48GB. No F16 result is available for this model. To put the number in context:

- The bigger the model, the slower the generation speed: Llama 3 70B has nearly nine times as many parameters as the 8B variant, so every generated token requires far more computation and memory traffic.

- Quantization impacts speed: Q4_K_M quantization reduces the precision of the model's weights, which shrinks the model and helps improve performance. However, it also introduces some loss in accuracy.
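The table lists no F16 result for the 70B model, and the benchmark data does not say why. A back-of-the-envelope size estimate suggests one plausible reason: the weights alone would not fit in 48 GB. The sketch below assumes nominal parameter counts (8 and 70 billion) and roughly 4.85 bits per weight for Q4_K_M, which is an approximation rather than a figure from the benchmark. It also ignores the KV cache and runtime overhead.

```python
# Rough weight-only memory estimate; ignores KV cache and runtime overhead.
VRAM_GB = 48.0  # RTX A6000 48GB

# Assumed average bits per weight (the Q4_K_M value is an approximation).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8.0


for params in (8, 70):
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"Llama 3 {params}B {quant}: ~{size:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, the 70B model at F16 needs about 140 GB for weights alone, while the Q4_K_M version comes in at a little over 42 GB and leaves only a few gigabytes of headroom.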

Analyzing the Numbers: A Token-to-Word Analogy

Imagine you're trying to write a novel using a typewriter. Each keystroke represents a token, and the speed at which you can type determines how quickly you can generate words. Similarly, a large language model's token generation speed dictates how quickly it can produce text.

At 14.58 tokens/second, the Llama 3 70B model on the RTX A6000 is like a skilled typist, generating words at a decent pace. But if you're working on a complex project, you might wish for things to move a little faster.
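To make the typist analogy concrete, the sketch below converts tokens per second into words per minute and into the waiting time for a reply. The 0.75 words-per-token ratio is an assumed rule of thumb for English text, not a measured value for Llama 3, and the 500-token reply length is purely illustrative.

```python
WORDS_PER_TOKEN = 0.75  # assumed rule of thumb for English text


def words_per_minute(tokens_per_second: float,
                     words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Convert a generation speed into an approximate typing speed."""
    return tokens_per_second * words_per_token * 60


def seconds_for_reply(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a reply of n_tokens, ignoring prompt processing."""
    return n_tokens / tokens_per_second


speed = 14.58  # Llama 3 70B Q4_K_M on the RTX A6000 48GB
print(f"{words_per_minute(speed):.0f} words/minute")
print(f"{seconds_for_reply(500, speed):.1f} s for a 500-token reply")
```

Under that assumption, 14.58 tokens/second works out to roughly 650 words per minute, far faster than any human typist, yet a 500-token reply still takes about 34 seconds to finish.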

Performance Analysis: Model and Device Comparison

Llama 3 8B vs. 70B: An Exploration of Size and Performance

To gain a more comprehensive understanding of the performance landscape, let's compare the 8B variant of Llama 3 with its larger 70B sibling on the same NVIDIA RTX A6000 48GB GPU.

| Model | Quantization | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 102.22 |
| Llama 3 8B | F16 | 40.25 |
| Llama 3 70B | Q4_K_M | 14.58 |
| Llama 3 70B | F16 | N/A |

As you can see, the smaller Llama 3 8B model is considerably faster: with Q4_K_M quantization it generates 102.22 tokens/second, about seven times the 14.58 tokens/second of the 70B model. This highlights the trade-off between model size and performance: larger models offer greater capabilities but require more computational resources and run more slowly.
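The relative speeds can be read straight off the table. The short script below is only arithmetic on the published numbers, with the 70B Q4_K_M result as the baseline; the dictionary layout and the `speedup` helper are illustrative choices, not part of any benchmark tool.

```python
# Tokens per second on the RTX A6000 48GB, copied from the comparison table.
BENCHMARKS = {
    ("Llama 3 8B", "Q4_K_M"): 102.22,
    ("Llama 3 8B", "F16"): 40.25,
    ("Llama 3 70B", "Q4_K_M"): 14.58,
}


def speedup(fast: tuple, slow: tuple) -> float:
    """How many times faster configuration `fast` is than `slow`."""
    return BENCHMARKS[fast] / BENCHMARKS[slow]


baseline = ("Llama 3 70B", "Q4_K_M")
for model, quant in BENCHMARKS:
    ratio = speedup((model, quant), baseline)
    print(f"{model} {quant}: {ratio:.2f}x the 70B Q4_K_M speed")
```

Even the unquantized 8B model at F16 is faster than the quantized 70B model, which shows how strongly parameter count dominates here.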

Exploring the Performance Gap: A Story of Power and Efficiency

The performance difference between the 8B and 70B models is a direct result of the sheer scale of the models. The 70B model, with its massive parameter count, demands significantly more processing power. This is analogous to trying to run a marathon versus a 5k race. The marathon requires far greater endurance and stamina, just as the 70B model requires more computational resources to function effectively.
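One common way to reason about this gap is that single-stream token generation tends to be limited by memory bandwidth, because each new token requires reading roughly all of the weights once. Under that assumption, which the benchmark itself does not state, a rough ceiling on speed is memory bandwidth divided by model size. The sketch below uses the RTX A6000's 768 GB/s specification, nominal parameter counts, and an assumed 4.85 bits per weight for Q4_K_M.

```python
BANDWIDTH_GB_S = 768.0  # RTX A6000 memory bandwidth (spec sheet value)

# name -> (params in billions, assumed bits per weight, measured tokens/s)
CONFIGS = {
    "Llama 3 8B Q4_K_M": (8, 4.85, 102.22),
    "Llama 3 8B F16": (8, 16.0, 40.25),
    "Llama 3 70B Q4_K_M": (70, 4.85, 14.58),
}


def bandwidth_bound_tps(params_billion: float, bits_per_weight: float,
                        bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Ceiling on tokens/s if every token reads all weights once."""
    weights_gb = params_billion * bits_per_weight / 8.0
    return bandwidth_gb_s / weights_gb


for name, (params, bits, measured) in CONFIGS.items():
    bound = bandwidth_bound_tps(params, bits)
    print(f"{name}: ceiling ~{bound:.1f} tok/s, "
          f"measured {measured} ({measured / bound:.0%} of ceiling)")
```

All three measured speeds land below their estimated ceilings, which is consistent with the bandwidth-limited picture but does not prove it; compute limits and runtime overhead also play a part.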

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model for the Job: Balancing Power and Efficiency

When it comes to selecting a model for your local LLM application, the size of the model is crucial. Consider the following:

- For resource-constrained environments: The Llama 3 8B model offers a good balance between capabilities and performance.

- For tasks demanding extensive knowledge and complex reasoning: The Llama 3 70B model is ideal for tasks like writing long-form content, generating creative text formats, and answering challenging questions.

Mitigating the Performance Bottleneck: Quantization and Other Strategies

While the performance of the Llama 3 70B model on the RTX A6000 is impressive, it is still limited by the model's immense size. Here are some practical tips to optimize performance:

- Quantization: Reducing the precision of model weights through quantization can significantly improve performance. While Q4_K_M is a good starting point, experiment with other quantization methods to find the best balance between accuracy and speed.

- Hardware Acceleration: Run inference on a hardware accelerator such as a GPU or TPU rather than on the CPU, and check that the whole model fits in the accelerator's memory.

- Model Pruning and Compression: Consider model pruning techniques to remove unnecessary parameters and reduce the model's size.
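The accuracy cost of quantization can be illustrated with a toy example. The sketch below applies simple block-wise, symmetric round-to-nearest quantization to random weights and measures the round-trip error at different bit widths. It is a simplified stand-in, not the actual Q4_K_M scheme, and the block size, weight distribution, and function names are all illustrative assumptions.

```python
import random


def quantize_block(values, bits):
    """Symmetric round-to-nearest quantization of one block to signed ints."""
    qmax = 2 ** (bits - 1) - 1                     # e.g. 7 for 4-bit
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale, q):
    """Map the stored integers back to approximate float weights."""
    return [scale * x for x in q]


def mean_abs_error(weights, bits, block_size=32):
    """Average round-trip error when each block gets its own scale."""
    total = 0.0
    for i in range(0, len(weights), block_size):
        block = weights[i:i + block_size]
        scale, q = quantize_block(block, bits)
        restored = dequantize_block(scale, q)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(weights)


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # synthetic weights
for bits in (8, 4, 3):
    print(f"{bits}-bit: mean abs error {mean_abs_error(weights, bits):.6f}")
```

Fewer bits mean a smaller model and a larger reconstruction error, which is the trade-off to keep in mind when trying quantization levels above or below Q4_K_M.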

FAQ

1. What are the benefits of running LLMs locally?

Running LLMs locally offers several advantages:

- Privacy: Your data stays on your device, enhancing privacy and data security.

- Customization: You have complete control over the model's configuration and parameters, allowing for more tailored applications.

- Offline Capability: Run your applications in offline mode without relying on an internet connection.

2. Is the NVIDIA RTX A6000 48GB suitable for larger LLMs?

The NVIDIA RTX A6000 48GB is a powerful GPU designed for demanding tasks, including running LLMs. However, even on this GPU, larger models come with limits: in the benchmarks above, Llama 3 70B reached 14.58 tokens/second with Q4_K_M quantization, and no F16 result was available.

3. How can I improve the performance of my LLM?

Optimizing performance involves exploring techniques like quantization, hardware acceleration, and model pruning. Experiment with different strategies to find the most effective combination for your specific application.

4. What are some examples of real-world applications of local LLMs?

Local LLMs have a wide range of applications, including:

- Chatbots and conversational AI: Create personalized chatbots for customer service, education, and entertainment.

- Content generation: Generate creative text formats like poems, scripts, and code.

- Data analysis and summarization: Extract insights from large datasets and generate concise summaries.

Keywords

Large Language Models, LLMs, Llama 3, NVIDIA RTX A6000 48GB, Token Generation Speed, Quantization, Performance Analysis, Model Comparison, Practical Recommendations, Use Cases, Workarounds, GPU, Inference, Hardware Acceleration, Model Pruning, Compression, Local AI, Offline Capability, Privacy, Customization, Chatbots, Content Generation, Data Analysis