5 Surprising Facts About Running Llama3 70B on NVIDIA L40S 48GB

Are you ready to unleash the power of Llama 3 70B on your NVIDIA L40S_48GB GPU? This beast of a model, boasting a whopping 70 billion parameters, is capable of generating truly impressive text. But before you dive in, let's explore some surprising facts about this powerful combination.

Think of running Llama3 70B on an L40S_48GB as trying to squeeze a gigantic elephant into a tiny car. You'll find some unexpected quirks and limitations. But fear not! We'll guide you through the process, revealing the secrets to maximizing performance and navigating the challenges.

Introduction

The world of large language models (LLMs) is rapidly evolving, and running these complex models locally is becoming increasingly accessible, even for those without access to massive cloud infrastructure. The NVIDIA L40S_48GB is a data-center GPU with 48 GB of memory, aimed at AI training and inference as well as graphics workloads. That capacity is enough to hold the Llama 3 70B model once it is quantized, though not at full 16-bit precision.

This article will delve into the fascinating world of local LLM performance on the L40S_48GB, uncovering hidden truths and equipping you with the knowledge to conquer this exciting frontier.

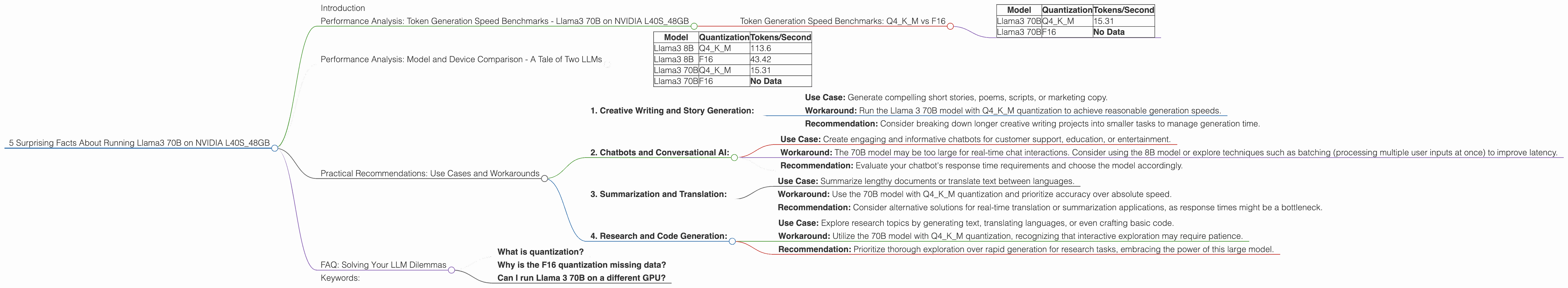

Performance Analysis: Token Generation Speed Benchmarks - Llama3 70B on NVIDIA L40S_48GB

Let's get down to brass tacks. How fast can Llama 3 70B generate text on the L40S_48GB? The answer depends on the chosen quantization scheme. Quantization is like a diet for LLMs, reducing the size of the model to fit on your hardware.
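If you want to check generation speed on your own setup, a minimal timing sketch looks like the one below. The `generate` callable is a hypothetical stand-in for whatever streaming API your runtime exposes (it is not a real library function), and `fake_generate` exists only so the example runs on its own. Note that this simple version also counts prompt-processing time, so it will read slightly lower than a pure generation benchmark.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[str], Iterable[str]], prompt: str
) -> float:
    """Time one generation call and return tokens produced per second.

    `generate` is a hypothetical callable that yields one token at a time.
    """
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate(prompt))  # consume the whole stream
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise ValueError("elapsed time too small to measure")
    return n_tokens / elapsed


def fake_generate(prompt: str):
    """Dummy generator used only to exercise the timing code."""
    for word in ("this", "is", "a", "dummy", "reply"):
        time.sleep(0.01)  # pretend each token takes 10 ms
        yield word


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_generate, "hello")
    print(f"{rate:.1f} tokens/second")
```

Swap `fake_generate` for your runtime's streaming call, and average over several runs before comparing against published numbers.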

Token Generation Speed Benchmarks: Q4_K_M vs F16

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 70B | Q4_K_M | 15.31 |
| Llama3 70B | F16 | No Data |

Key Takeaways:

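- Q4_K_M is the only configuration with a result for Llama 3 70B on the L40S_48GB, at 15.31 tokens per second, which makes it the practical choice for this model on this GPU.

- F16 has no data available on the L40S_48GB. Note that F16 is not a quantization scheme but unquantized 16-bit floating point. At 2 bytes per parameter, the 70B weights alone need about 140 GB, far more than the 48 GB on this GPU, which is the most likely reason no result exists.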

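What's the fuss with Q4_K_M? The name comes from the quantization formats used by llama.cpp: "Q4" means roughly 4 bits per weight, "K" marks the k-quant family, which quantizes weights in small blocks that each carry their own scale factors, and "M" is the medium variant, which keeps some tensors at higher precision. The result shrinks the model to a fraction of its 16-bit size, making it a compelling choice for local hardware. It's like fitting the elephant into a smaller car while keeping the elephant's essential features.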
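To see why the quantization level decides whether the model fits at all, here is a back-of-the-envelope sketch of weight memory. The 16 bits for F16 is exact; the 4.8 bits per weight for Q4_K_M is an assumed rough average (the format mixes block types and stores scale factors), and "70B" is rounded to 70e9 parameters.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


GPU_MEMORY_GB = 48   # L40S_48GB capacity
N_PARAMS = 70e9      # "70B", rounded
BITS = {
    "F16": 16.0,     # exact: two bytes per weight
    "Q4_K_M": 4.8,   # ASSUMPTION: rough average including scale factors
}

for name, bits in BITS.items():
    need = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if need < GPU_MEMORY_GB else "does not fit"
    print(f"{name}: ~{need:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

This counts weights only. The context cache and intermediate buffers need additional memory, so the few gigabytes left over at Q4_K_M are not all free headroom.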

Is 15.31 tokens per second fast? Think of it this way: a 500-token answer takes about 33 seconds, and a 2,000-token essay takes a little over two minutes. That is faster than most people read. Not blazing fast, but certainly respectable for such a massive model on a single GPU.
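The arithmetic behind that kind of estimate is simple enough to sketch. The reply lengths below are illustrative assumptions, and the calculation ignores the time spent processing the prompt before the first token appears.

```python
def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time to emit n_tokens at a steady generation rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second


RATE_70B_Q4 = 15.31  # tokens/second, from the benchmark table

# Illustrative reply lengths: short answer, long answer, essay.
for n_tokens in (100, 500, 2000):
    t = seconds_to_generate(n_tokens, RATE_70B_Q4)
    print(f"{n_tokens:>5} tokens: {t:6.1f} s")
```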

Performance Analysis: Model and Device Comparison - A Tale of Two LLMs

Now, let's bring in another LLM for comparison: Llama3 8B. This smaller model, with 8 billion parameters, should generate text faster than the 70B behemoth. But by how much?

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 113.6 |
| Llama3 8B | F16 | 43.42 |
| Llama3 70B | Q4_K_M | 15.31 |
| Llama3 70B | F16 | No Data |

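Sure enough, Llama3 8B is much faster: at Q4_K_M it generates 113.6 tokens per second against 15.31 for Llama 3 70B, roughly 7.4 times the speed. No F16 comparison is possible, since the 70B model has no F16 result on this GPU. It's like comparing a sleek sports car to a luxurious limousine. The sports car offers greater speed and agility, while the limousine offers more capacity, which for an LLM generally means higher-quality output.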
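The ratios can be computed directly from the table. In this small sketch a missing measurement is stored as `None`, so a comparison that lacks data returns `None` instead of a misleading number.

```python
from typing import Optional

# Tokens/second from the comparison table; None marks "No Data".
BENCHMARKS = {
    ("Llama3 8B", "Q4_K_M"): 113.6,
    ("Llama3 8B", "F16"): 43.42,
    ("Llama3 70B", "Q4_K_M"): 15.31,
    ("Llama3 70B", "F16"): None,
}


def speedup(fast: str, slow: str, quant: str) -> Optional[float]:
    """How many times faster `fast` is than `slow` at the same quantization."""
    a = BENCHMARKS[(fast, quant)]
    b = BENCHMARKS[(slow, quant)]
    if a is None or b is None:
        return None  # cannot compare without both measurements
    return a / b


for quant in ("Q4_K_M", "F16"):
    ratio = speedup("Llama3 8B", "Llama3 70B", quant)
    label = f"{ratio:.2f}x" if ratio is not None else "no data"
    print(f"8B vs 70B at {quant}: {label}")
```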

Practical Recommendations: Use Cases and Workarounds

So, how do you effectively utilize the Llama 3 70B model on the L40S_48GB? Let's explore some use cases and workarounds:

1. Creative Writing and Story Generation:

- Use Case: Generate compelling short stories, poems, scripts, or marketing copy.

- Workaround: Run the Llama 3 70B model with Q4_K_M quantization to achieve reasonable generation speeds.

- Recommendation: Consider breaking down longer creative writing projects into smaller tasks to manage generation time.

2. Chatbots and Conversational AI:

- Use Case: Create engaging and informative chatbots for customer support, education, or entertainment.

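- Workaround: At 15.31 tokens per second, the 70B model may feel slow for real-time chat, especially for long replies. Consider using the 8B model when latency matters. Batching (processing multiple user inputs at once) can raise total throughput when serving many users, but it does not make an individual reply arrive sooner.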

- Recommendation: Evaluate your chatbot's response time requirements and choose the model accordingly.

3. Summarization and Translation:

- Use Case: Summarize lengthy documents or translate text between languages.

- Workaround: Use the 70B model with Q4_K_M quantization and prioritize accuracy over absolute speed.

- Recommendation: Consider alternative solutions for real-time translation or summarization applications, as response times might be a bottleneck.

4. Research and Code Generation:

- Use Case: Explore research topics by generating text, translating languages, or even crafting basic code.

- Workaround: Utilize the 70B model with Q4_K_M quantization, recognizing that interactive exploration may require patience.

- Recommendation: Prioritize thorough exploration over rapid generation for research tasks, embracing the power of this large model.
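For latency-sensitive cases such as the chatbot scenario, one way to turn "choose the model accordingly" into a rule is to pick the most capable configuration whose full reply fits a time budget. The sketch below uses the benchmark rates from this article. The preference order (larger model first, then higher precision) and the example reply length and budgets are assumptions, and prompt-processing time is ignored.

```python
from typing import Optional

# (name, tokens/second) ordered by ASSUMED preference: quality first.
CANDIDATES = [
    ("Llama3 70B Q4_K_M", 15.31),
    ("Llama3 8B F16", 43.42),
    ("Llama3 8B Q4_K_M", 113.6),
]


def pick_model(reply_tokens: int, budget_seconds: float) -> Optional[str]:
    """Return the first candidate that can finish the reply within budget."""
    for name, rate in CANDIDATES:
        if reply_tokens / rate <= budget_seconds:
            return name
    return None  # nothing is fast enough; shorten replies or relax the budget


# Hypothetical requirement: a 150-token reply under different time budgets.
for budget in (10, 5, 1):
    print(f"{budget:>2} s budget: {pick_model(150, budget)}")
```

If replies are streamed to the user token by token, the time to the first token matters more than the total, and a slower model may still feel acceptable.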

FAQ: Solving Your LLM Dilemmas

What is quantization?

Quantization is a technique used to reduce the size of a model by representing its weights using fewer bits. Imagine it as compressing a video file to make it smaller without sacrificing too much quality. It allows you to fit larger models on limited hardware.
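To make the idea concrete, here is a deliberately simplified sketch of block quantization: each block of weights is stored as small integers plus one shared scale factor. This is a toy illustration of the principle, not the actual Q4_K_M layout, and the sample weights are made up.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization of one block to signed `bits`-bit integer codes."""
    qmax = 2 ** (bits - 1) - 1               # 7 for 4 bits
    peak = max(abs(w) for w in weights)
    scale = peak / qmax if peak else 1.0     # one scale shared by the block
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate weights from the scale and integer codes."""
    return [scale * c for c in codes]


# Made-up weights for illustration only.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.02, 0.45]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print("max round-trip error:", max_error)
```

Each restored weight lands within half a scale step of the original, which is the "without sacrificing too much quality" part of the trade.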

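Why is the F16 data missing?

No F16 result is available for Llama 3 70B on the L40S_48GB, most likely because of memory limits. F16 stores each weight in 16 bits, so 70 billion parameters need about 140 GB, nearly three times the GPU's 48 GB. Strictly speaking, F16 is the unquantized half-precision format rather than a quantization scheme.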

Can I run Llama 3 70B on a different GPU?

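Yes, as long as the GPU has enough memory to hold the weights at your chosen quantization, plus working space for the context. Generation speed will vary with the GPU's memory bandwidth and computational power, so expect different numbers from the ones reported here.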

Keywords:

Llama3, 70B, LLM, NVIDIA, L40S_48GB, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Benchmarks, Use Cases, Workarounds, Recommendations, GPU, Local Inference, Chatbots, Conversational AI, Summarization, Translation, Research, Code Generation