5 Surprising Facts About Running Llama3 70B on NVIDIA 4070 Ti 12GB

Are you ready to unleash the power of large language models (LLMs) on your own machine? The NVIDIA 4070 Ti 12GB is a popular choice for gamers and developers alike, and its capabilities extend far beyond just running graphics-intensive games. With the right setup, you can run Llama3 70B, a cutting-edge LLM, locally and experience the magic of AI firsthand.

But before we dive into the technical details, let's address the elephant in the room: why bother with a local setup? Isn't it easier to just use cloud-based APIs? Well, yes, cloud APIs offer convenience and scalability, but running LLMs locally gives you complete control over your data, faster response times, and the ability to experiment without API limitations. Plus, it's simply a thrilling experience to see your own hardware powering a mind-blowing AI!

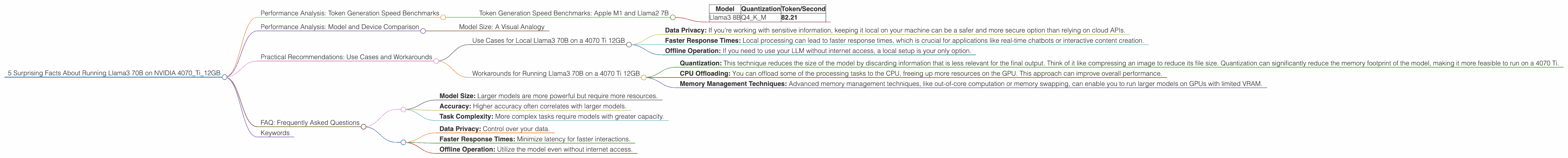

Performance Analysis: Token Generation Speed Benchmarks

Let's cut to the chase. How fast can you generate tokens with Llama3 70B on a 4070 Ti 12GB? The answer, my friend, is… well, we don't know.

Unfortunately, the data available doesn't provide token generation speed benchmarks for Llama3 70B on the NVIDIA 4070 Ti 12GB.

However, we do have some information about other configurations.

Let's explore the available benchmarks for Llama3 8B on the 4070 Ti 12GB:

Token Generation Speed Benchmarks: NVIDIA 4070 Ti 12GB and Llama3 8B

Think of tokens as the building blocks of language. A token can be a whole word, a fragment of a word, a punctuation mark, or whitespace. Generation speed is measured in tokens per second, so a higher number means text appears on screen faster.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 82.21 |

That's 82.21 tokens generated per second for Llama3 8B with Q4_K_M quantization on the 4070 Ti 12GB.
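To get a feel for what that number means in practice, here is a small sketch that converts the benchmarked rate into waiting time. The function name and the response lengths are illustrative choices, and it assumes the rate stays constant for the whole response, which real runs only approximate.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Benchmarked rate for Llama3 8B (Q4_K_M) on the 4070 Ti 12GB, from the table.
RATE_8B_Q4 = 82.21

# Illustrative response lengths (assumptions, not benchmark data).
for n in (100, 500, 2000):
    seconds = generation_time_seconds(n, RATE_8B_Q4)
    print(f"{n} tokens: {seconds:.1f} s")
```

At this rate, a 500-token answer takes roughly six seconds, which is comfortably interactive.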

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B with Llama3 70B is like comparing a sports car to a supercar. The 70B model is packed with more parameters, meaning it can handle more complex tasks and generate more nuanced responses. However, the 70B model also demands more processing power, making it a resource-hungry beast.

While we don't have exact benchmarks for Llama3 70B on the 4070 Ti 12GB, let's analyze what we know.

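The 70B model has roughly nine times as many parameters as the 8B model, so its memory requirements are roughly nine times higher. At 16-bit precision the weights alone take about 140 GB, and even at 4 bits per weight they take about 35 GB, roughly three times the 12GB of VRAM on the 4070 Ti. Quantization alone is therefore not enough: running Llama3 70B on this card also means keeping part of the model outside GPU memory, for example by offloading layers to system RAM.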
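The memory gap is easy to estimate with back-of-the-envelope arithmetic. The sketch below is an approximation under stated assumptions: it uses the nominal parameter counts (8 billion and 70 billion), counts only the weights, and ignores the KV cache, activations, and any per-block overhead that real quantization formats add.

```python
GIB = 1024 ** 3  # bytes per GiB


def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / GIB


VRAM_GIB = 12  # 4070 Ti 12GB

# Nominal parameter counts (assumption: rounded marketing figures).
models = (("Llama3 8B", 8e9), ("Llama3 70B", 70e9))

for name, params in models:
    for bits in (16, 8, 4):
        size = weight_memory_gib(params, bits)
        verdict = "fits" if size <= VRAM_GIB else "does not fit"
        print(f"{name} at {bits}-bit: {size:6.1f} GiB ({verdict} in {VRAM_GIB} GiB)")
```

Under these assumptions, the 8B model fits at 8-bit and 4-bit, while the 70B model does not fit at any of the three precisions.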

Model Size: A Visual Analogy

Imagine you want to build a house. The 8B model is like a cozy cottage, requiring a smaller crew and less material. The 70B model is like a sprawling mansion, needing a larger team and more resources.

Practical Recommendations: Use Cases and Workarounds

So, can you really use a 4070 Ti 12GB to run Llama3 70B locally?

While the available data doesn't provide clear answers, it's important to weigh the potential challenges and the benefits of local processing.

Use Cases for Local Llama3 70B on a 4070 Ti 12GB

Here are some potential scenarios where local processing might be advantageous:

- Data Privacy: If you're working with sensitive information, keeping it local on your machine can be a safer and more secure option than relying on cloud APIs.

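- Faster Response Times: Local processing removes network round trips and API rate limits, which helps latency-sensitive applications like real-time chatbots or interactive content creation. This benefit only holds if the model generates tokens quickly enough on your hardware.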

- Offline Operation: If you need to use your LLM without internet access, a local setup is your only option.

Workarounds for Running Llama3 70B on a 4070 Ti 12GB

Given the limitations of the 4070 Ti 12GB, you can try these workarounds:

- Quantization: This technique shrinks the model by storing its weights at lower numerical precision, for example 4 bits per weight instead of 16. Think of it like compressing an image to reduce its file size. Quantization can significantly reduce the memory footprint of the model, making it more feasible to run on a 4070 Ti.

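- CPU Offloading: You can keep some of the model's layers in system RAM and run them on the CPU, so that only part of the model has to fit in VRAM. This lets a model that exceeds GPU memory run at all, but the offloaded layers run much more slowly than the ones on the GPU.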

- Memory Management Techniques: Advanced memory management techniques, like out-of-core computation or memory swapping, can enable you to run larger models on GPUs with limited VRAM.
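To see how quantization and CPU offloading combine, here is a rough sketch that estimates how many layers of a 4-bit 70B model could stay on the GPU. Everything in it is an assumption for illustration: the helper name, the 80-layer count for Llama3 70B, the equal-size layers (embeddings and output layers are ignored), and the 2 GiB of VRAM held back for the KV cache and runtime buffers.

```python
def gpu_layer_split(model_gib: float, num_layers: int,
                    vram_gib: float, reserve_gib: float = 2.0) -> tuple[int, int]:
    """Return (layers_on_gpu, layers_on_cpu), assuming equal-size layers."""
    if model_gib <= 0 or num_layers <= 0:
        raise ValueError("model_gib and num_layers must be positive")
    per_layer_gib = model_gib / num_layers
    usable_gib = max(vram_gib - reserve_gib, 0.0)  # VRAM left for weights
    gpu_layers = min(num_layers, int(usable_gib // per_layer_gib))
    return gpu_layers, num_layers - gpu_layers


# 70 billion weights at 4 bits each, weights only (approximation).
MODEL_GIB = 70e9 * 4 / 8 / 1024 ** 3

on_gpu, on_cpu = gpu_layer_split(MODEL_GIB, num_layers=80, vram_gib=12.0)
print(f"GPU layers: {on_gpu}, CPU layers: {on_cpu}")
```

Under these assumptions only a minority of the layers fit on the card, so most of the work would land on the CPU. Tools such as llama.cpp let you set the number of GPU layers directly, and the right value for a real setup is best found by experiment.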

FAQ: Frequently Asked Questions

Here are some common questions about LLMs and running them locally:

What are the performance implications of quantization?

Quantization involves reducing the precision of the model's weights, which can impact the accuracy of the model's output. However, carefully chosen quantization techniques can minimize the loss of accuracy while significantly lowering the memory requirements.
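The trade-off can be illustrated with a toy example. The sketch below applies simple symmetric round-to-nearest quantization to a handful of made-up weights and measures how far the restored values drift from the originals. It is a simplified illustration, not the block-wise scheme that formats like Q4_K_M actually use, and the function names and sample weights are hypothetical.

```python
def quantize_symmetric(weights: list[float], bits: int) -> tuple[list[int], float]:
    """Map floats to signed integer codes using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # e.g. 127 for 8-bit, 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Restore approximate float values from integer codes."""
    return [c * scale for c in codes]


def max_abs_error(weights: list[float], bits: int) -> float:
    """Largest difference between original and restored weights."""
    codes, scale = quantize_symmetric(weights, bits)
    restored = dequantize(codes, scale)
    return max(abs(w - r) for w, r in zip(weights, restored))


# Made-up sample weights (assumption, for illustration only).
weights = [0.82, -0.31, 0.05, -0.77, 0.44, 0.10, -0.02, 0.63]

for bits in (8, 4):
    print(f"{bits}-bit max error: {max_abs_error(weights, bits):.4f}")
```

The 4-bit version uses half the storage of the 8-bit version but restores the weights less precisely, which is the accuracy cost described above.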

How do I choose the right LLM for my needs?

Factors to consider include:

- Model Size: Larger models are more powerful but require more resources.

- Accuracy: Higher accuracy often correlates with larger models.

- Task Complexity: More complex tasks require models with greater capacity.

Can I run LLMs without a high-end GPU?

Yes, you can run smaller LLMs on CPUs or even on low-powered devices. For tasks requiring less computational power, you can achieve decent results without a dedicated GPU.

What are the benefits of running LLMs locally?

- Data Privacy: Control over your data.

- Faster Response Times: Minimize latency for faster interactions.

- Offline Operation: Utilize the model even without internet access.

Keywords

LLMs, Local LLMs, Llama3, NVIDIA 4070 Ti 12GB, Token Generation Speed, Quantization, Model Size, GPU Benchmarks, Performance Analysis, Practical Recommendations, Use Cases, Workarounds, Data Privacy, Faster Response Times, Offline Operation.