5 Surprising Facts About Running Llama 3 70B on Apple M1

Introduction

Large language models (LLMs) such as Llama 2 and Llama 3 have pushed the boundaries of conversational AI. But can the biggest of these models really run on a humble Apple M1 chip?

This article dives into how Llama 3 70B fares on the Apple M1, what the available benchmarks show, and what developers can do in practice. We'll cover token generation speed, model size, and quantization. So buckle up, geeks: it's time to unravel the secrets of LLM optimization on a familiar friend, the Apple M1.

Performance Analysis: Token Generation Speed of Llama 3 70B on Apple M1

LLM inference speed is usually measured in tokens per second (tokens/s). Benchmarks typically report two rates: prompt processing (how quickly the model reads the input prompt) and generation (how quickly it produces new tokens, one at a time). Here we focus on these rates for Llama 3 70B on the Apple M1.

Unfortunately, no benchmark data is available for Llama 3 70B on the Apple M1, most likely because the model is simply too large: even its 4-bit quantized weights need more memory than the base M1 offers.
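To see why the model is so demanding, here is a back-of-the-envelope estimate of how much memory the weights of a 70B model occupy in different formats. It is a minimal Python sketch under stated assumptions: a nominal 70 billion parameters, bits-per-weight figures based on llama.cpp-style block layouts (Q8_0 and Q4_0 store 32 weights plus one 16-bit scale per block), and 16 GB as the largest memory option on the base M1. It counts weights only; the KV cache, the runtime, and macOS itself need memory on top of this.

```python
# Approximate storage cost per weight, in bits. The Q8_0/Q4_0 figures assume
# llama.cpp-style blocks of 32 weights that share one 16-bit scale.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,  # (32 * 8 + 16) / 32
    "Q4_0": 4.5,  # (32 * 4 + 16) / 32
}

N_PARAMS_70B = 70e9       # nominal parameter count (assumption)
BASE_M1_MEMORY_GB = 16    # largest unified-memory option on the base M1


def weight_gb(n_params: float, fmt: str) -> float:
    """Size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for fmt in BITS_PER_WEIGHT:
    size = weight_gb(N_PARAMS_70B, fmt)
    verdict = "fits" if size < BASE_M1_MEMORY_GB else "does not fit"
    print(f"{fmt:<5} {size:6.1f} GB  {verdict} in {BASE_M1_MEMORY_GB} GB")
```

Even at 4 bits the weights come to roughly 39 GB, more than twice what a 16 GB M1 can hold, before counting anything else.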

Performance Analysis: Model and Device Comparison

Let's look at the available data for Llama 2 7B, which can provide a valuable comparison.

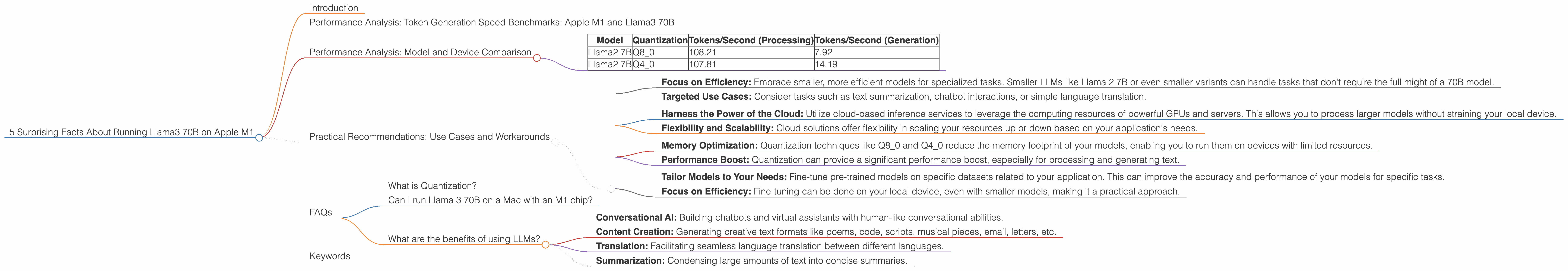

Table 1: Token Generation Speed on Apple M1 (Tokens/Second)

| Model | Quantization | Prompt Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 108.21 | 7.92 |
| Llama 2 7B | Q4_0 | 107.81 | 14.19 |
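The two rates in Table 1 combine into a rough estimate of how long a response takes: the prompt is read at the processing rate, then the reply is produced at the generation rate. The sketch below uses the Table 1 figures; the 512-token prompt and 256-token reply are hypothetical, and the estimate assumes the measured rates hold for this workload while ignoring model loading and other overhead.

```python
# Measured speeds from Table 1 (Llama 2 7B on Apple M1), in tokens/s.
RATES = {
    "Q8_0": {"prompt": 108.21, "generation": 7.92},
    "Q4_0": {"prompt": 107.81, "generation": 14.19},
}


def response_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough latency: read the whole prompt, then emit the reply token by token."""
    rate = RATES[quant]
    return prompt_tokens / rate["prompt"] + output_tokens / rate["generation"]


# Hypothetical workload: a 512-token prompt and a 256-token reply.
for quant in RATES:
    print(f"{quant}: {response_seconds(quant, 512, 256):.1f} s")
```

With these numbers a 256-token answer takes roughly 37 seconds at Q8_0 and about 23 seconds at Q4_0, and in both cases most of that time is spent generating.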

Key Observations:

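- Quantization Matters: Moving from 8-bit (Q8_0) to 4-bit (Q4_0) quantization raises generation speed from 7.92 to 14.19 tokens/s, roughly 1.8x, while prompt processing stays essentially flat (108.21 vs. 107.81 tokens/s). Both formats also need far less memory than unquantized F16 weights.

- Generation Speed vs. Processing Speed: Generation is roughly 8 to 14 times slower than prompt processing. This is not because generating text is more computationally intensive, but because it is sequential. The prompt can be processed in large parallel batches, whereas each new token depends on the previous one, so the model has to stream its weights from memory for every single token. Generation speed is therefore limited mainly by memory bandwidth, which is also why smaller 4-bit weights help generation far more than processing.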
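A quick calculation supports the view that generation on the M1 is limited by memory bandwidth: if every weight must be read once per generated token, bandwidth divided by model size gives an upper bound on generation speed. This sketch assumes a nominal 68.25 GB/s for the base M1's unified memory and a nominal 7 billion parameters for Llama 2 7B (the real count is slightly lower), with per-weight sizes matching llama.cpp-style Q8_0 and Q4_0 blocks.

```python
M1_BANDWIDTH_GB_S = 68.25   # assumed nominal memory bandwidth of the base M1
N_PARAMS_7B = 7e9           # nominal; Llama 2 7B is slightly smaller

# Bits per weight for llama.cpp-style Q8_0 / Q4_0 blocks (32 weights + 16-bit scale).
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}
# Measured generation speeds from Table 1, tokens/s.
MEASURED_TG = {"Q8_0": 7.92, "Q4_0": 14.19}


def max_tokens_per_second(n_params: float, bits_per_weight: float,
                          bandwidth_gb_s: float) -> float:
    """Upper bound on generation speed if all weights are read once per token."""
    model_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / model_gb


for fmt, bpw in BITS_PER_WEIGHT.items():
    ceiling = max_tokens_per_second(N_PARAMS_7B, bpw, M1_BANDWIDTH_GB_S)
    share = MEASURED_TG[fmt] / ceiling
    print(f"{fmt}: ceiling {ceiling:.1f} tok/s, measured {MEASURED_TG[fmt]} ({share:.0%})")
```

Under these assumptions the measured speeds land at roughly 80 to 90 percent of the ceiling, and the ~1.8x speedup from Q8_0 to Q4_0 tracks the ~1.9x reduction in bytes per weight, which is what a bandwidth-bound workload looks like.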

The M1's Limitations:

While Llama 2 7B shows promising results, the Apple M1 is not built for models the size of Llama 3 70B. It lacks the GPU power, the memory capacity (the base M1 tops out at 16 GB of unified memory), and the memory bandwidth that such a model demands.

Practical Recommendations: Use Cases and Workarounds

While running Llama 3 70B on a base Apple M1 is out of reach for now, there are still practical ways to use LLMs on this platform.

1. Smaller Models for Specific Tasks:

- Focus on Efficiency: Embrace smaller, more efficient models for specialized tasks. Smaller LLMs like Llama 2 7B or even smaller variants can handle tasks that don't require the full might of a 70B model.

- Targeted Use Cases: Consider tasks such as text summarization, chatbot interactions, or simple language translation.

2. Explore Cloud-Based Inference:

- Harness the Power of the Cloud: Utilize cloud-based inference services to leverage the computing resources of powerful GPUs and servers. This allows you to process larger models without straining your local device.

- Flexibility and Scalability: Cloud solutions offer flexibility in scaling your resources up or down based on your application's needs.

3. Embrace Quantization:

- Memory Optimization: Quantization formats like Q8_0 and Q4_0 reduce the memory footprint of your models, enabling you to run them on devices with limited resources.

- Performance Boost: Quantization can noticeably speed up inference, especially text generation, which is limited largely by how quickly the weights can be read from memory.

4. Fine-Tuning for Specific Tasks:

- Tailor Models to Your Needs: Fine-tune pre-trained models on specific datasets related to your application. This can improve the accuracy and performance of your models for specific tasks.

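- Focus on Efficiency: Fine-tuning smaller models on a local device is practical mainly with parameter-efficient methods such as LoRA, which train a small set of added weights instead of the whole model. Full fine-tuning, even of a 7B model, needs far more memory than a base M1 provides.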
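To give a sense of why parameter-efficient fine-tuning fits on modest hardware, here is the parameter arithmetic for LoRA, one such method, used purely as an illustration. LoRA freezes the original weights and learns a low-rank update (the product of two thin matrices, B and A) for selected weight matrices. The sketch assumes Llama 2 7B's 32 layers and hidden size of 4096, plus a hypothetical configuration that adapts only the query and value projections at rank 8.

```python
# Assumed Llama 2 7B shapes: 32 transformer layers, hidden size 4096.
N_LAYERS = 32
HIDDEN = 4096
RANK = 8                          # hypothetical LoRA rank
ADAPTED = ("query", "value")      # hypothetical: adapt two projections per layer


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable weights for one adapter: B is d_out x rank, A is rank x d_in."""
    return rank * (d_in + d_out)


frozen = HIDDEN * HIDDEN                       # one frozen projection matrix
per_matrix = lora_params(HIDDEN, HIDDEN, RANK)
total = per_matrix * len(ADAPTED) * N_LAYERS

print(f"frozen matrix: {frozen:,} weights")
print(f"its adapter:   {per_matrix:,} trainable weights")
print(f"all adapters:  {total:,} trainable weights")
```

That works out to about 4.2 million trainable weights instead of roughly 7 billion, a tiny fraction that is far easier to train and store on a laptop.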

FAQs

What is Quantization?

In simple terms, quantization stores a model's weights with fewer bits, for example 8 or 4 bits per weight instead of 16. Think of it like reducing the resolution of a high-resolution image while preserving the essential details: the model becomes smaller and faster, usually at the cost of only a small loss in accuracy.
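As a concrete illustration, here is a simplified Python sketch of 8-bit block quantization in the spirit of llama.cpp's Q8_0 format (not its actual code): each block of 32 weights shares one scale, chosen so the largest magnitude maps to 127, and every weight is stored as a rounded 8-bit integer. The random weights are hypothetical stand-ins for a real layer.

```python
import random

BLOCK = 32  # weights per block, mirroring the Q8_0 block size


def quantize_block(weights):
    """Map floats to integer codes in [-127, 127] that share one scale."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats by multiplying each code by the shared scale."""
    return [scale * c for c in codes]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK)]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"max abs error {max_err:.2e} with scale {scale:.2e}")
```

Stored this way, a block takes 32 one-byte codes plus a 16-bit scale (34 bytes) instead of 64 bytes in F16, and each restored weight is off by at most half the scale.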

Can I run Llama 3 70B on a Mac with an M1 chip?

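Not on the base M1. With at most 16 GB of unified memory, it cannot hold even a 4-bit quantized copy of the 70B model, and its limited memory bandwidth would make generation very slow even if it could. Higher-memory variants such as the M1 Max (up to 64 GB) and M1 Ultra (up to 128 GB) do have room for a 4-bit 70B model.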

What are the benefits of using LLMs?

LLMs offer immense potential for various applications:

- Conversational AI: Building chatbots and virtual assistants with human-like conversational abilities.

- Content Creation: Generating creative text formats like poems, code, scripts, musical pieces, email, letters, etc.

- Translation: Translating text from one language to another.

- Summarization: Condensing large amounts of text into concise summaries.
