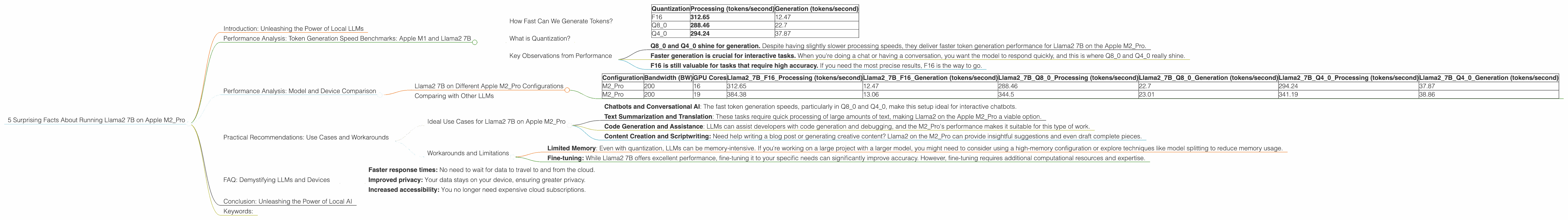

5 Surprising Facts About Running Llama2 7B on Apple M2 Pro

Introduction: Unleashing the Power of Local LLMs

The world of large language models (LLMs) is exploding, with new models and capabilities emerging every day. But harnessing the power of these models often requires relying on cloud services, introducing latency and potential privacy concerns. The exciting news is that we can now run LLMs locally on our own devices, bringing the future of AI closer than ever.

In this deep dive, we'll explore the surprising performance of the Llama2 7B model running on the Apple M2 Pro, a powerful chip designed to run computationally intensive tasks like machine learning. We'll uncover the fascinating world of quantized models, benchmark token generation speeds, and reveal practical use cases for this powerful combination. So grab your coffee, get ready for a geek-out session, and let's dive in!

Performance Analysis: Token Generation Speed Benchmarks: Apple M2 Pro and Llama2 7B

How Fast Can We Generate Tokens?

Token generation speed is a key metric for evaluating LLM performance. Essentially, it tells us how many tokens (words or parts of words) the model can generate per second. Remember, LLMs process text as tokens, which are like small chunks of information.
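To make the metric concrete, here is a minimal sketch of how tokens per second could be measured over any stream of tokens. The `fake_stream` generator is a hypothetical stand-in for a real model and is not part of any library; the benchmark figures in this article were not produced with this code.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Consume a token stream and return the average tokens emitted per second."""
    start = clock()
    count = 0
    for _ in stream:
        count += 1
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return count / elapsed


def fake_stream(n_tokens: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = tokens_per_second(fake_stream(20, 0.01))
    print(f"{rate:.1f} tokens/second")
```

The `clock` parameter is injectable so the function can be checked with a fake clock instead of real sleeps.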

Let's take a look at the token generation speeds for Llama2 7B on the Apple M2 Pro in different quantization settings:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 | 312.65 | 12.47 |
| Q8_0 | 288.46 | 22.7 |
| Q4_0 | 294.24 | 37.87 |

A few things jump out:

- F16 (half-precision floating point) delivers the fastest processing speed (312.65 tokens/second) but the slowest generation speed (12.47 tokens/second). Think of it like this: F16 is the Ferrari on the track, blazing through the prompt, but then it takes a while to actually spit out those words.

- Q8_0 (8-bit quantization) is the middle ground for generation at 22.7 tokens/second, about 1.8 times F16, while its processing speed (288.46 tokens/second) is the lowest of the three by a small margin. It's like the reliable Toyota Camry, getting the job done consistently.

- Q4_0 (4-bit quantization) offers the fastest generation speed (37.87 tokens/second, about three times F16) while giving up only about 6% of F16's processing speed. It's like the city-slicker scooter, getting you around quickly but with a little less horsepower.
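The comparison in the bullets above can be reproduced with a few lines of arithmetic. This is a small sketch that uses only the figures from the table; the dictionary layout and function name are illustrative choices.

```python
from typing import Dict

# Figures copied from the table above (tokens/second).
BENCHMARKS: Dict[str, Dict[str, float]] = {
    "F16": {"processing": 312.65, "generation": 12.47},
    "Q8_0": {"processing": 288.46, "generation": 22.7},
    "Q4_0": {"processing": 294.24, "generation": 37.87},
}


def relative_to_f16(metric: str) -> Dict[str, float]:
    """Return each quantization's speed as a multiple of the F16 speed."""
    base = BENCHMARKS["F16"][metric]
    return {name: row[metric] / base for name, row in BENCHMARKS.items()}


for metric in ("processing", "generation"):
    for name, ratio in relative_to_f16(metric).items():
        print(f"{metric:<10} {name:<5} {ratio:.2f}x of F16")
```

Generation speed comes out at roughly 1.8x (Q8_0) and 3.0x (Q4_0) of F16, while processing speed stays within about 8% of F16 for both quantized formats.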

What is Quantization?

Quantization is the process of reducing the size of a model, making it more efficient and requiring less memory. Imagine compressing a large file to reduce its file size. In LLMs, quantization reduces the number of bits used to store model parameters.

The table above shows the effect of quantization on Llama2 7B. F16 keeps the weights in 16-bit half precision, the highest-precision option in this comparison, while Q8_0 and Q4_0 trade off some accuracy for size and speed benefits.
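To illustrate the idea of storing parameters in fewer bits, here is a simplified sketch of symmetric "absmax" quantization: a group of weights is stored as small integers plus one shared scale. It shows the general technique only and is not the actual Q8_0 or Q4_0 implementation; the sample weights are made up.

```python
from typing import List, Tuple


def quantize_block(values: List[float], bits: int) -> Tuple[float, List[int]]:
    """Symmetric absmax quantization: one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate weights from the stored scale and integer codes."""
    return [scale * c for c in codes]


# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]

for bits in (8, 4):
    scale, codes = quantize_block(weights, bits)
    restored = dequantize_block(scale, codes)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"{bits}-bit: max abs error = {worst:.4f}")
```

Fewer bits mean a coarser grid of representable values, so the 4-bit version reconstructs these weights less precisely than the 8-bit version. That is the accuracy side of the trade-off.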

Key Observations from Performance

- Q8_0 and Q4_0 shine for generation. Despite having slightly slower processing speeds, they deliver faster token generation performance for Llama2 7B on the Apple M2 Pro.

- Faster generation is crucial for interactive tasks. When you're doing a chat or having a conversation, you want the model to respond quickly, and this is where Q8_0 and Q4_0 really shine.

- F16 is still valuable for tasks that require high accuracy. If you need the most precise results, F16 is the way to go.
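To see what these observations mean for an interactive session, the sketch below estimates total response time as prompt tokens divided by processing speed plus output tokens divided by generation speed. The 500-token prompt and 200-token reply are hypothetical sizes chosen for illustration, and the model ignores startup and other overheads.

```python
def response_time_s(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate seconds to read the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing, generation) in tokens/second, copied from the benchmark table.
SPEEDS = {
    "F16": (312.65, 12.47),
    "Q8_0": (288.46, 22.7),
    "Q4_0": (294.24, 37.87),
}

# Hypothetical chat turn: 500-token prompt, 200-token reply.
for name, (proc, gen) in SPEEDS.items():
    seconds = response_time_s(500, 200, proc, gen)
    print(f"{name:<5} about {seconds:.1f} s")
```

Under these assumptions the reply takes roughly 17.6 seconds with F16, 10.5 seconds with Q8_0, and 7.0 seconds with Q4_0. Generation dominates the total, which is why the quantized formats feel so much more responsive.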

Performance Analysis: Model and Device Comparison

Llama2 7B on Different Apple M2 Pro Configurations

Now, let's explore performance differences between two Apple M2 Pro configurations. This data can help you choose the best setup for your specific needs.

| Configuration | Bandwidth (GB/s) | GPU Cores | F16 Processing (tokens/second) | F16 Generation (tokens/second) | Q8_0 Processing (tokens/second) | Q8_0 Generation (tokens/second) | Q4_0 Processing (tokens/second) | Q4_0 Generation (tokens/second) |
|---|---|---|---|---|---|---|---|---|
| M2 Pro | 200 | 16 | 312.65 | 12.47 | 288.46 | 22.7 | 294.24 | 37.87 |
| M2 Pro | 200 | 19 | 384.38 | 13.06 | 344.5 | 23.01 | 341.19 | 38.86 |

Key observations:

- More GPU cores mean faster prompt processing. The M2 Pro with 19 GPU cores outperforms the 16-core version in every quantization setting, but the gain is uneven: processing speed rises by roughly 16% to 23%, while generation speed improves by less than 5%.

- Bandwidth matters most for generation. Both M2 Pro configurations have the same memory bandwidth (200 GB/s), and generation speed barely changes between them even though processing speed scales with the extra cores. That pattern is consistent with token generation being limited by memory bandwidth rather than by GPU compute.
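The size of the gap between the two configurations can be worked out directly from the table. The following sketch computes the percentage gain of the 19-core chip over the 16-core chip for each column; the key names are illustrative.

```python
from typing import Dict

# Tokens/second from the configuration table, keyed by GPU core count.
CONFIGS: Dict[int, Dict[str, float]] = {
    16: {
        "F16 processing": 312.65, "F16 generation": 12.47,
        "Q8_0 processing": 288.46, "Q8_0 generation": 22.7,
        "Q4_0 processing": 294.24, "Q4_0 generation": 37.87,
    },
    19: {
        "F16 processing": 384.38, "F16 generation": 13.06,
        "Q8_0 processing": 344.5, "Q8_0 generation": 23.01,
        "Q4_0 processing": 341.19, "Q4_0 generation": 38.86,
    },
}


def percent_gain(metric: str) -> float:
    """Percentage speedup of the 19-core chip over the 16-core chip."""
    return (CONFIGS[19][metric] / CONFIGS[16][metric] - 1) * 100


for metric in CONFIGS[16]:
    print(f"{metric:<16} {percent_gain(metric):+.1f}%")
```

For reference, going from 16 to 19 cores is an 18.75% increase in core count. The processing columns gain a similar amount, while the generation columns barely move.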

Comparing with Other LLMs

Data for other LLMs (like Llama2 70B) is not available in this dataset, so we can't provide a direct comparison with those models. However, the performance results for Llama2 7B on the Apple M2 Pro are impressive, suggesting that this combination can handle a variety of tasks.

Practical Recommendations: Use Cases and Workarounds

Ideal Use Cases for Llama2 7B on Apple M2 Pro

- Chatbots and Conversational AI: The fast token generation speeds, particularly in Q8_0 and Q4_0, make this setup ideal for interactive chatbots.

- Text Summarization and Translation: These tasks require quick processing of large amounts of text, making Llama2 on the Apple M2 Pro a viable option.

- Code Generation and Assistance: LLMs can assist developers with code generation and debugging, and the M2 Pro's performance makes it suitable for this type of work.

- Content Creation and Scriptwriting: Need help writing a blog post or generating creative content? Llama2 on the M2 Pro can provide insightful suggestions and even draft complete pieces.

Workarounds and Limitations

- Limited Memory: Even with quantization, LLMs can be memory-intensive. If you're working on a large project with a larger model, you might need to consider using a high-memory configuration or explore techniques like model splitting to reduce memory usage.

- Fine-tuning: While Llama2 7B offers excellent performance, fine-tuning it to your specific needs can significantly improve accuracy. However, fine-tuning requires additional computational resources and expertise.
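On the memory point above, a rough estimate of how much space the weights alone occupy can help when sizing a machine. The sketch below rests on two labeled assumptions: a nominal 7 billion parameters, and bits-per-weight figures of 16, 8.5, and 4.5 for F16, Q8_0, and Q4_0 (the latter two assume 32-weight blocks that each carry one 16-bit scale). It ignores the context cache and runtime overhead, so real usage will be higher.

```python
# Assumed bits per weight. F16 stores 16 bits per weight. For Q8_0 and Q4_0 this
# sketch assumes 32-weight blocks with one 16-bit scale each, which works out to
# (32 * 8 + 16) / 32 = 8.5 and (32 * 4 + 16) / 32 = 4.5 bits per weight.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Assumption: a nominal 7 billion parameters for a "7B" model.
ASSUMED_PARAMS = 7e9


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    print(f"{name:<5} about {weight_memory_gb(ASSUMED_PARAMS, bits):.1f} GB")
```

Under these assumptions the weights take roughly 14 GB in F16, 7.4 GB in Q8_0, and 3.9 GB in Q4_0, which shows why the quantized formats are the practical choice on machines with limited unified memory.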

FAQ: Demystifying LLMs and Devices

Q: What is a large language model, and why are they important?

A: A large language model is a type of artificial intelligence trained on massive amounts of text data. LLMs can understand and generate human-like text and perform tasks like translation, summarization, and creative writing. They are becoming increasingly essential in fields like customer service, education, and content creation.

Q: How do LLMs work?

A: Imagine a complex network of neurons, similar to the human brain. LLMs are based on this concept, using deep learning algorithms and tons of data to learn patterns and relationships in language. They can then use this knowledge to generate text, translate languages, or answer your questions.

Q: Why are local LLMs becoming popular?

A: Local LLMs allow you to run these powerful models directly on your own device, eliminating the need for cloud services. This offers several advantages, including:

- Faster response times: No need to wait for data to travel to and from the cloud.

- Improved privacy: Your data stays on your device, ensuring greater privacy.

- Increased accessibility: You no longer need expensive cloud subscriptions.

Q: How can I get started with local LLMs?

A: Several resources are available to help you get started with local LLMs. You can explore libraries like llama.cpp or transformers to run models on your device. There are also online tutorials and communities dedicated to helping you learn more about local LLMs.

Conclusion: Unleashing the Power of Local AI

The combination of Llama2 7B and the Apple M2 Pro holds immense potential for those seeking to harness the power of LLMs locally. The surprising performance results highlight the increasing accessibility and value of running LLMs on our own devices.

Whether you're building a chatbot, generating creative text, or exploring the world of AI development, the Apple M2 Pro and Llama2 7B are powerful tools capable of driving innovation and unlocking the possibilities of local AI.

Keywords:

Llama2, Llama2 7B, Apple M2 Pro, Local LLMs, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, LLM Inference, AI, Machine Learning, Deep Learning, GPU, Performance Benchmarks, Use Cases, Applications, Chatbots, Content Generation, Code Generation, Workarounds, Limitations, Fine-tuning, Privacy, Accessibility