5 Power-Saving Tips for 24/7 AI Operations on the NVIDIA RTX 6000 Ada 48GB

Introduction: AI Dreams Meet Electricity Bills

You've got the latest and greatest NVIDIA RTX 6000 Ada 48GB, a beast of a GPU, ready to unleash the power of Llama 3. But let's be honest, running these large language models (LLMs) 24/7 can be a real energy hog. It's like leaving your oven on all day just to bake a single cookie!

This article is your guide to taming the energy beast and achieving a happy balance between AI performance and power-conscious operation. We'll dive into specific techniques to optimize your RTX 6000 Ada 48GB for Llama 3, focusing on quantization, model size selection, and inference techniques. Get ready to unlock the efficiency potential of your hardware while keeping your electricity bills from going through the roof!

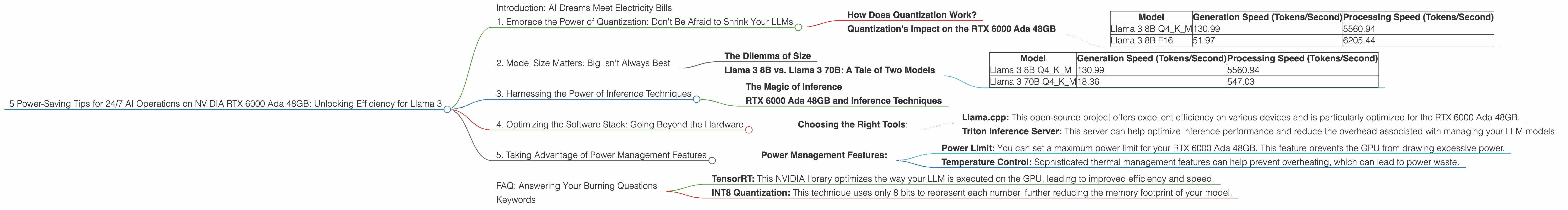

1. Embrace the Power of Quantization: Don't Be Afraid to Shrink Your LLMs

Imagine having a massive library, but only needing a small section for a specific research project. Quantization does something similar for LLMs, shrinking them down for efficient use. It's like swapping your full-size, detailed library book for a pocket-sized edition: far less space, and nearly all of the valuable content.

How Does Quantization Work?

Quantization is like a diet plan for your LLM. Think of your LLM as a giant, delicious cake made with lots of complex ingredients. Each ingredient represents a number, and the way these numbers are stored determines the cake's size. Quantization simplifies the ingredients, using fewer bits to represent each number, making the cake much smaller.

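Instead of using 32 bits for each number (like a full-fat cake), we can use 16 or even 4 bits (like a low-fat cake). This significantly reduces the memory footprint of the model. A smaller model means less data has to be read from GPU memory for every generated token, which is where much of the time, and therefore energy, goes during text generation.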
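To put rough numbers on this, here is a minimal sketch that estimates weight storage from parameter count and bit width. It assumes nominal counts of exactly 8 billion and 70 billion parameters and flat bit widths. Real 4-bit formats store some extra scaling data, so actual files are somewhat larger, and the KV cache and activations are ignored here.

```python
def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params: float, bits_per_weight: float, vram_gb: float = 48.0) -> bool:
    """True if the weights alone fit in VRAM (ignores KV cache and activations)."""
    return weight_size_gb(n_params, bits_per_weight) <= vram_gb


# Nominal parameter counts (assumption: exactly 8e9 and 70e9).
for name, n_params in (("8B", 8e9), ("70B", 70e9)):
    for bits in (32, 16, 4):
        size = weight_size_gb(n_params, bits)
        fits = fits_in_vram(n_params, bits)
        print(f"{name} at {bits:>2} bits: {size:6.1f} GB, fits in 48 GB: {fits}")
```

Under these assumptions, the 8B model fits on the 48 GB card at any of the three widths, while the 70B model only fits at around 4 bits per weight.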

Quantization's Impact on the RTX 6000 Ada 48GB

On our RTX 6000 Ada 48GB, we can see the impact of quantization on Llama 3:

| Model | Generation Speed (Tokens/Second) | Processing Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 130.99 | 5560.94 |
| Llama 3 8B F16 | 51.97 | 6205.44 |

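The numbers tell the story: Llama 3 8B with Q4_K_M quantization (4-bit quantization) generates text about 2.5 times faster than the F16 version (half-precision floating-point), while its processing speed is only about 10% lower. The table doesn't measure power directly, but finishing the same generation job in well under half the time means less energy per token, as long as the GPU draws similar power in both cases.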

It's like having a smaller, more efficient car that gets you to your destination just as fast!
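The comparison above is just a pair of ratios taken from the table. A short sketch that computes them (the dictionary layout and function name are illustrative, and nothing here measures power):

```python
# Tokens/second for Llama 3 8B, copied from the table above.
BENCHMARKS = {
    "Q4_K_M": {"generation": 130.99, "processing": 5560.94},
    "F16": {"generation": 51.97, "processing": 6205.44},
}


def speed_ratio(metric: str, model: str = "Q4_K_M", baseline: str = "F16") -> float:
    """How many times faster `model` is than `baseline` on the given metric."""
    return BENCHMARKS[model][metric] / BENCHMARKS[baseline][metric]


for metric in ("generation", "processing"):
    print(f"{metric}: Q4_K_M runs at {speed_ratio(metric):.2f}x the F16 speed")
```

A ratio above 1 means the quantized model is faster on that metric; a ratio below 1 means it is slower.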

2. Model Size Matters: Big Isn't Always Best

Choosing the right LLM size is like picking the right tool for the job. You wouldn't use a sledgehammer to crack a nut, right? Similarly, a massive LLM might be overkill for simple tasks.

The Dilemma of Size

Larger LLMs are incredibly powerful, but they come with a cost – more processing power and energy. You'll need a beefy GPU like the RTX 6000 Ada 48GB to handle it.

Llama 3 8B vs. Llama 3 70B: A Tale of Two Models

On the RTX 6000 Ada 48GB, Llama 3 8B with Q4_K_M quantization smokes the Llama 3 70B in terms of speed.

| Model | Generation Speed (Tokens/Second) | Processing Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 130.99 | 5560.94 |
| Llama 3 70B Q4_K_M | 18.36 | 547.03 |

The smaller 8B model generates text about 7 times faster and processes information about 10 times faster than the 70B. If both models draw similar power while running, that translates to significantly less energy per token, which adds up when operating 24/7.

Think of it this way: the 8B model is your trusty, efficient everyday car, while the 70B model is the luxurious but gas-guzzling sports car.
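To see what the speed gap could mean in energy terms, here is a back-of-the-envelope sketch. It assumes the GPU draws a constant 300 W while generating for both models. That figure is an assumption for illustration, not a measurement, so substitute the draw you observe on your own system.

```python
ASSUMED_POWER_W = 300.0  # assumption, not a measurement: constant draw while generating

# Generation speeds in tokens/second, copied from the table above.
GENERATION_TPS = {
    "Llama 3 8B Q4_K_M": 130.99,
    "Llama 3 70B Q4_K_M": 18.36,
}


def joules_per_token(tokens_per_second: float, power_watts: float = ASSUMED_POWER_W) -> float:
    """Energy per generated token; one watt is one joule per second."""
    return power_watts / tokens_per_second


for model, tps in GENERATION_TPS.items():
    energy = joules_per_token(tps)
    print(f"{model}: {energy:.1f} J/token at an assumed {ASSUMED_POWER_W:.0f} W")
```

Because the assumed power cancels out, the ratio between the two results equals the ratio of generation speeds, whatever wattage you plug in.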

3. Harnessing the Power of Inference Techniques

Inference is the process of using your trained LLM to generate text. It's where the real magic happens. But even within inference, there are tweaks you can make to optimize power consumption.

The Magic of Inference

Inference techniques essentially boil down to streamlining the way your LLM processes information. Think of it as taking a shortcut through a maze!

RTX 6000 Ada 48GB and Inference Techniques

Unfortunately, we don't have specific data on the impact of advanced inference techniques on the RTX 6000 Ada 48GB. However, the general principle remains the same: optimizing the way information flows through your LLM can dramatically impact performance and power consumption.
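Without published numbers, the practical route is to measure on your own setup. The helper below is a minimal sketch of a throughput timer. The `generate` callable and the `fake_generate` stand-in are hypothetical placeholders for whatever inference backend you use, which is assumed to return a sequence of tokens.

```python
import time
from typing import Callable, Sequence


def tokens_per_second(generate: Callable[[str], Sequence], prompt: str, runs: int = 3) -> float:
    """Average generated tokens per second over several runs of `generate`."""
    total_tokens = 0
    start = time.perf_counter()
    for _ in range(runs):
        total_tokens += len(generate(prompt))
    elapsed = time.perf_counter() - start
    return total_tokens / max(elapsed, 1e-9)  # guard against a zero-length interval


def fake_generate(prompt: str) -> list:
    """Hypothetical stand-in for a real model call; returns 20 dummy tokens."""
    time.sleep(0.01)
    return ["tok"] * 20


print(f"{tokens_per_second(fake_generate, 'Hello'):.0f} tokens/second (dummy backend)")
```

Running the same timer before and after changing an inference setting, while also logging power draw, gives the tokens-per-second and watts figures you need to compare configurations.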

4. Optimizing the Software Stack: Going Beyond the Hardware

It's not just about hardware; the software you use can also influence power consumption. Optimizing your software stack is like choosing the right apps for your smartphone: the same battery lasts longer with efficient ones.

Choosing the Right Tools:

- llama.cpp: This open-source project offers excellent efficiency on various devices and includes a CUDA backend that runs quantized models such as Q4_K_M on NVIDIA GPUs like the RTX 6000 Ada 48GB.

- Triton Inference Server: This server can help optimize inference performance and reduce the overhead associated with managing your models.
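If you go the llama.cpp route, its bundled llama-bench tool reports prompt-processing and generation speeds. The sketch below only builds the command line and does not run it. It assumes a local llama.cpp build with CUDA support that provides llama-bench, the model path is hypothetical, and the flags are as I understand them, so check llama-bench --help on your build.

```python
import shlex
from typing import List


def llama_bench_command(
    model_path: str,
    n_prompt: int = 512,
    n_gen: int = 128,
    gpu_layers: int = 99,
) -> List[str]:
    """Build an argument list for llama-bench (assumed to be on PATH)."""
    return [
        "llama-bench",
        "-m", model_path,         # path to a GGUF model file
        "-p", str(n_prompt),      # prompt tokens to process
        "-n", str(n_gen),         # tokens to generate
        "-ngl", str(gpu_layers),  # layers to offload to the GPU
    ]


cmd = llama_bench_command("models/llama-3-8b.Q4_K_M.gguf")  # hypothetical path
print(shlex.join(cmd))
```

Setting the GPU layer count higher than the model's actual layer count is a common way to ask for full offload, so the whole model runs on the GPU rather than partly on the CPU.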

5. Taking Advantage of Power Management Features

Modern GPUs, including the RTX 6000 Ada 48GB, come with advanced power management features that can help you fine-tune energy consumption. It's like having a dimmer switch on your power supply!

Power Management Features:

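- Power Limit: You can set a maximum power limit for your RTX 6000 Ada 48GB, for example with nvidia-smi -pl followed by a wattage (this needs administrator rights). The cap stops the GPU from drawing more than the chosen wattage, and a modest reduction often costs less throughput than the power it saves.

- Temperature Control: Sophisticated thermal management features can help prevent overheating. A GPU that runs hot may throttle its clocks and spin its fans harder, so good cooling helps it deliver more work per watt.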
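Both features can be inspected and adjusted through the nvidia-smi command-line tool. The sketch below builds the commands and parses one line of query output without executing anything. The sample line is made up for illustration, and the 250 W cap is an arbitrary example value, since the allowed range depends on your card and driver.

```python
from typing import Dict, List

QUERY_FIELDS = ("power.draw", "power.limit", "temperature.gpu")


def query_command(gpu_index: int = 0) -> List[str]:
    """nvidia-smi command that prints power draw, power limit and temperature as CSV."""
    return [
        "nvidia-smi", "-i", str(gpu_index),
        f"--query-gpu={','.join(QUERY_FIELDS)}",
        "--format=csv,noheader,nounits",
    ]


def set_power_limit_command(watts: int, gpu_index: int = 0) -> List[str]:
    """nvidia-smi command that caps board power (needs administrator rights)."""
    return ["nvidia-smi", "-i", str(gpu_index), "-pl", str(watts)]


def parse_query_line(line: str) -> Dict[str, float]:
    """Turn one CSV line of query output into a field -> value mapping."""
    values = [float(v) for v in line.strip().split(",")]
    return dict(zip(QUERY_FIELDS, values))


sample = "187.42, 300.00, 64"  # made-up sample line, not a real measurement
print(parse_query_line(sample))
print(" ".join(set_power_limit_command(250)))  # 250 W is an arbitrary example cap
```

To run a command for real, pass the list to subprocess.run with capture_output=True and text=True. The parser assumes every field is numeric, so it will raise an error if the driver reports a value as unavailable.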

FAQ: Answering Your Burning Questions

Q: How does quantization affect the quality of the generated text?

A: Quantization can sometimes lead to a slight drop in text quality, but the difference is usually minor and often goes unnoticed.

Q: Is it worth using a smaller LLM if I need high-quality text generation?

A: For tasks requiring the highest level of accuracy and nuance, larger LLMs are still preferred. However, for many applications, smaller models are more than capable and offer significant power savings.

Q: What are some of the other inference techniques that can be used for the RTX 6000 Ada 48GB?

A: Popular techniques include:

- TensorRT: This NVIDIA library optimizes the way your LLM is executed on the GPU, leading to improved efficiency and speed.

- INT8 Quantization: This technique uses 8 bits to represent each number, halving the memory footprint compared with F16, though the result is still larger than a 4-bit format such as Q4_K_M.

Q: How much energy can I actually save using these techniques?

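A: The exact energy savings will depend on your setup and usage pattern, so measure power draw on your own system. As a rough guide, if the GPU draws similar power in both cases, energy per generated token falls in proportion to generation speed: by the tables above, about 2.5 times lower for 8B Q4_K_M than for 8B F16, and about 7 times lower for 8B than for 70B.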
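For a feel of what continuous operation costs, the sketch below converts an average power draw into yearly energy and cost. The wattages and the electricity price are hypothetical inputs chosen for illustration; replace them with your measured draw and your own tariff.

```python
HOURS_PER_YEAR = 24 * 365


def annual_kwh(average_watts: float) -> float:
    """Energy used in a year of 24/7 operation, in kilowatt-hours."""
    return average_watts * HOURS_PER_YEAR / 1000


def annual_cost(average_watts: float, price_per_kwh: float) -> float:
    """Yearly electricity cost in the currency of `price_per_kwh`."""
    return annual_kwh(average_watts) * price_per_kwh


PRICE_PER_KWH = 0.15  # hypothetical electricity price; substitute your own tariff
for watts in (300, 250, 200):  # hypothetical average draws, not measurements
    kwh = annual_kwh(watts)
    cost = annual_cost(watts, PRICE_PER_KWH)
    print(f"{watts} W: {kwh:.0f} kWh/year, about {cost:.2f} per year")
```

Every 50 W trimmed from the average draw saves 438 kWh over a year of round-the-clock operation, regardless of the price you pay.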

Keywords

NVIDIA RTX 6000 Ada 48GB, Llama 3, LLM, quantization, inference, power consumption, energy efficiency, GPU, processing speed, model size, software stack, power management features.