5 Power Saving Tips for 24/7 AI Operations on NVIDIA 4090 24GB x2

Introduction

Running large language models (LLMs) like Llama 3 on a pair of NVIDIA 4090 24GB GPUs can be an incredible experience: imagine generating creative text, translating languages, or writing code, all powered by the magic of AI. But these models are computationally demanding, and the electricity cost of running them 24/7 adds up quickly.

Don't worry though, savvy AI enthusiasts! We're going to dive into some power-saving tips that will help you keep your AI dreams alive without breaking the bank.

The Balancing Act of Performance and Power

Let's face it, these mammoth LLMs are like hungry beasts: they need a lot of energy to stay humming. The NVIDIA 4090 24GB x2 setup is an absolute powerhouse, but its power consumption becomes a major concern when it runs around the clock. Think of it this way: running an LLM on these GPUs is like driving a Formula 1 car. It's incredibly fast, but that speed comes at a price.

Power-Saving Tips for Your NVIDIA 4090 24GB x2

1. Embrace Quantization: The Art of Shrinking LLMs

Imagine squeezing a whole library into a tiny backpack: that's the essence of quantization for LLMs. It shrinks a model without sacrificing too much quality. Instead of storing each weight as a 16-bit or 32-bit floating-point number, we can store it as a 4-bit integer (Q4), which dramatically reduces model size and memory requirements. Less data to move per token also means less work for the GPU.

Think of it as using smaller building blocks to build the same structure - it might not be exactly the same, but it's surprisingly close in the world of AI.
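To make the "smaller building blocks" idea concrete, here is a minimal sketch of symmetric 4-bit quantization on one small block of weights. It is an illustration only: the function names and sample weights are made up for this example, and real 4-bit formats used for Llama 3 are more elaborate than this.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization of one block of floats to small signed integers."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit codes in [-7, 7]
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest code and clamp to the allowed range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical sample weights, chosen only for illustration.
weights = [0.12, -0.70, 0.33, 0.05, -0.41, 0.68, -0.02, 0.29]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, largest rounding error: {max_error:.4f}")
```

Each weight now needs 4 bits plus a share of one scale value per block, and the rounding error stays within about half a scale step. That is the "surprisingly close" part of the trade.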

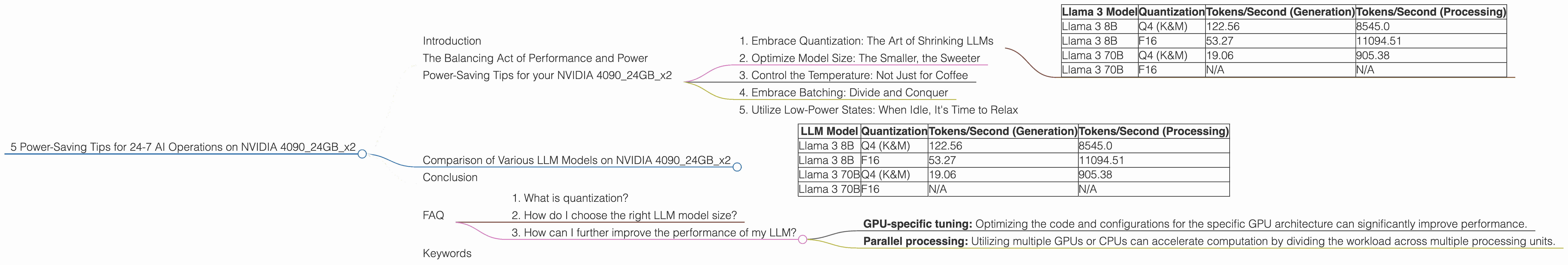

Let's look at the performance numbers of Llama 3 on the NVIDIA 4090 24GB x2:

| Llama 3 Model | Quantization | Tokens/Second (Generation) | Tokens/Second (Processing) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 122.56 | 8545.0 |
| Llama 3 8B | F16 | 53.27 | 11094.51 |
| Llama 3 70B | Q4_K_M | 19.06 | 905.38 |
| Llama 3 70B | F16 | N/A | N/A |

As you can see from the table, Llama 3 8B at Q4_K_M generates tokens about 2.3 times faster than the F16 version (122.56 vs. 53.27 tokens/second) while still processing prompts at a healthy 8545.0 tokens/second. Both writing new text and reading existing text are efficient.

The F16 version does process prompts about 30% faster for Llama 3 8B (11094.51 vs. 8545.0 tokens/second), but that comes with less than half the generation speed. This trade-off might be acceptable if your workload is dominated by long prompts and short answers.
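The benchmark data does not say why the 70B F16 row is N/A, but a back-of-the-envelope estimate of weight storage suggests one plausible reason. The sketch below assumes exactly 16 or 4 bits per weight and counts weights only; real 4-bit formats store some extra scale data, and the KV cache and activations need memory on top of this.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes), weights only."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


TOTAL_VRAM_GB = 2 * 24  # two 24 GB cards, treated as one pool for this estimate

for name, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for label, bits in [("F16", 16), ("4-bit", 4)]:
        need = weight_memory_gb(params, bits)
        verdict = "fits" if need <= TOTAL_VRAM_GB else "does not fit"
        print(f"{name} {label}: ~{need:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the 70B model at F16 needs roughly 140 GB for weights alone, far more than the 48 GB available, while the 4-bit version comes in around 35 GB.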

2. Optimize Model Size: The Smaller, the Sweeter

Choosing the right model size for your needs is like picking the perfect pair of shoes: the right fit makes all the difference. If your tasks don't need the capability of a 70B parameter model, opting for the smaller 8B version can significantly reduce the energy spent per response, because it finishes the same number of tokens in a fraction of the time.

Remember, bigger isn't always better, especially when it comes to power efficiency!
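A rough way to compare the two sizes is energy per 1,000 generated tokens. The sketch below uses the generation speeds reported in this article, but the power draw is a placeholder assumption, not a measurement: substitute the wattage you actually observe on your system, which may also differ between models.

```python
ASSUMED_POWER_WATTS = 600.0  # hypothetical combined draw for both GPUs under load


def energy_wh_per_1k_tokens(power_watts, tokens_per_second):
    """Watt-hours needed to generate 1,000 tokens at a steady rate."""
    seconds = 1000 / tokens_per_second
    joules = power_watts * seconds
    return joules / 3600  # 1 Wh = 3600 J


# Generation speeds from the benchmark table in this article (tokens/second).
speeds = {"Llama 3 8B Q4": 122.56, "Llama 3 70B Q4": 19.06}

for model, tps in speeds.items():
    wh = energy_wh_per_1k_tokens(ASSUMED_POWER_WATTS, tps)
    print(f"{model}: ~{wh:.2f} Wh per 1,000 tokens at {ASSUMED_POWER_WATTS:.0f} W")
```

At equal power draw, the energy per token scales inversely with throughput, so the gap between the two models matches their speed ratio regardless of which wattage you plug in.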

3. Control the Temperature: Not Just for Coffee

LLMs are known for their creative flair, but sometimes that creativity can get a little wild. Similar to controlling the heat on your stove, we can use the "temperature" parameter to manage the level of randomness in generated text.

Lowering the temperature can sometimes result in more predictable and coherent outputs while still maintaining a level of creativity. This parameter can be adjusted to fine-tune the balance between predictability and randomness, allowing you to find the sweet spot that fits your specific needs.
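For readers curious about what the parameter does under the hood, here is a minimal sketch of temperature applied to a softmax over next-token scores. The logits are made-up values for illustration. Note that temperature only rescales the scores before sampling, so the compute per token is essentially unchanged; any effect on power would be indirect, for example through shorter or more focused outputs.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens the peak."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)                          # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for four candidate tokens.
logits = [2.0, 1.0, 0.5, 0.1]

for t in (0.5, 1.0, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature {t}: " + ", ".join(f"{p:.2f}" for p in probs))
```

Lower values concentrate probability on the top-scoring token (more predictable text), while higher values spread it across the alternatives (more varied text).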

4. Embrace Batching: Divide and Conquer

Batching is like organizing your grocery shopping – instead of making multiple trips, you bring everything together. In LLM operations, batching involves processing multiple inputs at once, reducing the overhead of individual requests.

Consider it like a "bulk order" for your AI: the GPU stays busy doing useful work, so the whole job finishes sooner and uses less energy per request.
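As a minimal sketch of the idea, the helper below groups queued prompts into fixed-size batches so that each pass through the model serves several requests. The function name, the prompts, and the batch size are assumptions for illustration; how a batch is actually submitted depends on your inference server.

```python
def make_batches(prompts, batch_size):
    """Split a list of prompts into consecutive batches of at most batch_size."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [prompts[i:i + batch_size] for i in range(0, len(prompts), batch_size)]


# Ten hypothetical queued requests.
queue = [f"prompt {n}" for n in range(1, 11)]

batches = make_batches(queue, batch_size=4)
print(f"{len(queue)} requests handled in {len(batches)} batched calls instead of {len(queue)}")
for batch in batches:
    print(len(batch), batch)
```

Larger batches amortize more overhead but need more memory and make each request wait longer, so the batch size is worth tuning against your latency budget.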

5. Utilize Low-Power States: When Idle, It's Time to Relax

Just like your phone goes to sleep when not in use, GPUs can also enter low-power states when they're not actively processing information. Leveraging these states can significantly reduce energy consumption during periods of inactivity.

Think of it as giving your GPU a well-deserved "nap" – it's still there, ready to jump back into action whenever needed, but sipping energy instead of guzzling it down.
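One way to check whether your GPUs really are napping is to poll `nvidia-smi` for power draw and performance state. The sketch below is a rough starting point: the parsing helper and the sample text are illustrative, not measured output, and some systems report `[N/A]` for power draw, which this simple parser does not handle.

```python
import subprocess

# Query index, current power draw (W), and performance state for every GPU.
QUERY_CMD = [
    "nvidia-smi",
    "--query-gpu=index,power.draw,pstate",
    "--format=csv,noheader,nounits",
]


def parse_gpu_status(csv_text):
    """Parse 'index, power, pstate' lines into a list of dictionaries."""
    rows = []
    for line in csv_text.strip().splitlines():
        index, power, pstate = [field.strip() for field in line.split(",")]
        rows.append({"index": int(index), "power_w": float(power), "pstate": pstate})
    return rows


def read_gpu_status():
    """Run nvidia-smi and return parsed status (requires an NVIDIA driver)."""
    result = subprocess.run(QUERY_CMD, capture_output=True, text=True, check=True)
    return parse_gpu_status(result.stdout)


# Illustrative sample text, not real measurements.
sample = "0, 21.45, P8\n1, 310.20, P2\n"
for gpu in parse_gpu_status(sample):
    print(f"GPU {gpu['index']}: {gpu['power_w']:.1f} W in state {gpu['pstate']}")
```

Performance states run from P0 (maximum performance) toward higher numbers for lower-power states. If a card sits in a high-performance state while nothing is running, a process may be keeping it awake. You can also cap the draw under load with `nvidia-smi -pl <watts>`, which needs administrator rights and accepts only the range your card supports.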

Comparison of Various LLM Models on NVIDIA 4090 24GB x2

While we've focused on power-saving tips, let's revisit how the Llama 3 variants compare on the NVIDIA 4090 24GB x2:

| LLM Model | Quantization | Tokens/Second (Generation) | Tokens/Second (Processing) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 122.56 | 8545.0 |
| Llama 3 8B | F16 | 53.27 | 11094.51 |
| Llama 3 70B | Q4_K_M | 19.06 | 905.38 |
| Llama 3 70B | F16 | N/A | N/A |

As you can see, performance varies considerably between models and quantization levels. The Llama 3 8B Q4_K_M model is fast at both token generation and prompt processing, which suggests a great balance between performance and power efficiency.

Comparing the Llama 3 70B Q4_K_M model to the Llama 3 8B Q4_K_M, generation speed drops by a factor of about 6.4 (19.06 vs. 122.56 tokens/second). This is due to the larger size of the 70B model, which places a higher demand on computational resources and memory bandwidth.

Conclusion

Running LLMs like Llama 3 on a powerhouse like the NVIDIA 4090 24GB x2 is an exciting venture, but it's essential to be mindful of power consumption. By implementing the tips discussed above, you can optimize your AI operations for both performance and energy efficiency.

Remember, the key is to strike a balance between pushing the boundaries of AI and keeping your energy bill manageable. With these strategies, you can explore the world of LLMs without sacrificing your financial well-being.

FAQ

1. What is quantization?

Quantization is a technique used in machine learning to compress models by converting weights from 32-bit floating-point numbers to lower-precision formats, such as 4-bit integers. This reduces the size of the model and memory usage, which can lead to faster inference, especially on devices with limited resources.

2. How do I choose the right LLM model size?

The optimal model size depends on your specific needs and the tasks you intend to perform. Smaller models are generally faster and use less power, while larger models are capable of handling more complex tasks. Consider the computational resources available, the complexity of the tasks, and the trade-off between performance and energy efficiency.

3. How can I further improve the performance of my LLM?

Beyond the tips discussed, you can explore further optimizations such as:

- GPU-specific tuning: Optimizing the code and configurations for the specific GPU architecture can significantly improve performance.

- Parallel processing: Utilizing multiple GPUs or CPUs can accelerate computation by dividing the workload across multiple processing units.

Keywords

LLM, Llama 3, NVIDIA 4090 24GB x2, Quantization, Power Saving, AI, GPU, Token Speed, Energy Efficiency, Model Size, Temperature, Batching, Low-Power States, Inference, Performance, Optimization, Parallel Processing