5 Power-Saving Tips for 24/7 AI Operations on NVIDIA 3090 24GB x2

Are you dreaming of running your very own LLM (Large Language Model) like a boss, but the thought of your electricity bill makes you sweat more than a summer in the Sahara? Fear not, fellow AI enthusiast! This guide will help you keep your LLM humming along while keeping those energy bills in check. We'll focus on the mighty NVIDIA 3090 24GB x2 setup, the power couple of AI hardware, and explore how to squeeze out maximum performance for minimum power consumption.

Understanding the Power of LLMs

Imagine a computer program that can understand and generate human-like text. That's the essence of a Large Language Model (LLM). These models are trained on massive datasets of text and code, allowing them to perform diverse tasks like writing stories, answering questions, and translating languages. Think of them as the ultimate language wizards, but they require power to work their magic.

The NVIDIA 3090 24GB x2: A Beastly Duo

The NVIDIA 3090 24GB is a beast of a graphics card, originally designed for gaming. But it's also a powerhouse for LLM processing, with its massive 24GB of GDDR6X memory and an impressive 10,496 CUDA cores. Now imagine having two of those working in tandem! That's the magic of the 3090 24GB x2 setup: double the cards, double the memory (48GB in total), and room for models that would never fit on a single card. But like any powerful engine, it demands its fair share of juice.

Power-Saving Strategies: Harnessing the Beast

Here are five potent tips to optimize your 3090 24GB x2 setup and make your LLM run efficiently:

1. Quantization: Shrinking the Model Without Sacrificing Smarts

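Think of quantization as a weight-loss program for your LLM. It stores the model's parameters (weights) at lower numerical precision, for example 4 bits per weight instead of 16, which shrinks the model in memory and makes it faster and more efficient to run. Imagine a model as a massive castle. Quantization removes the unnecessary decorations while retaining the castle's core structure and functionality, usually at the cost of only a small loss in output quality.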
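To make the idea concrete, here is a minimal sketch of plain symmetric "absmax" quantization of a single block of weights to 4-bit integers. It is a simplified illustration only: the Q4_K_M format used below (a llama.cpp/GGUF quantization type) is considerably more elaborate. The function names and sample weights are hypothetical.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric absmax quantization of one block to signed 4-bit ints in [-7, 7]."""
    # One float scale per block; fall back to 1.0 for an all-zero block.
    scale = max(abs(w) for w in weights) / 7 or 1.0
    # Round each weight to the nearest integer step and clamp to the 4-bit range.
    q = [max(-7, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize_4bit(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the 4-bit codes and the block scale."""
    return [v * scale for v in q]


# Hypothetical block of 8 weights (real formats use larger blocks).
weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91, 0.45, 0.02]
q, scale = quantize_4bit(weights)
restored = dequantize_4bit(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(f"scale={scale:.4f}, max abs error={max_err:.4f}")
```

Each weight now needs 4 bits plus a small shared scale instead of 16 bits, and the rounding error per weight is at most half a quantization step (`scale / 2`).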

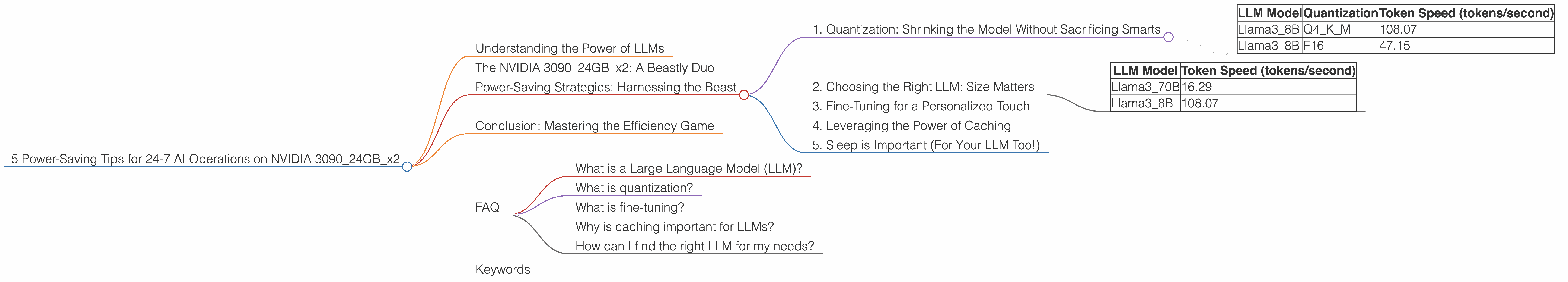

The NVIDIA 3090 24GB x2 setup can run LLMs at various quantization levels. For example, with Q4_K_M quantization, the Llama3_8B model generates 108.07 tokens/second, roughly 2.3 times the 47.15 tokens/second of the unquantized F16 version.

| LLM Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3_8B | Q4_K_M | 108.07 |
| Llama3_8B | F16 | 47.15 |
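Faster generation translates into energy savings because energy per token is simply power draw divided by throughput. The sketch below assumes, as a simplification, that both formats draw the same combined power; the 600 W figure is a hypothetical placeholder, not a measurement. Under that assumption the Q4_K_M run spends about 44% of the F16 energy per token (47.15 / 108.07 ≈ 0.436).

```python
# Measured generation speeds from the table above (tokens/second).
speeds = {"Q4_K_M": 108.07, "F16": 47.15}

# HYPOTHETICAL combined power draw of both cards while generating, in watts.
# Replace with your own reading, e.g. from:
#   nvidia-smi --query-gpu=power.draw --format=csv
# Real draw differs between formats; this sketch assumes it is the same.
ASSUMED_POWER_W = 600.0


def joules_per_token(tokens_per_s: float, power_w: float) -> float:
    """Energy per generated token = power (J/s) / throughput (tokens/s)."""
    return power_w / tokens_per_s


for fmt, tps in speeds.items():
    print(f"{fmt}: {joules_per_token(tps, ASSUMED_POWER_W):.2f} J/token")

speedup = speeds["Q4_K_M"] / speeds["F16"]
print(f"Q4_K_M speedup over F16: {speedup:.2f}x")
```

Plugging in your own measured wattage for each format gives a fairer comparison than the single assumed value used here.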

2. Choosing the Right LLM: Size Matters

Not all LLMs are created equal. Some are massive behemoths, requiring intense processing power, while others are more compact and nimble. Selecting the right LLM for your task and hardware is crucial.

For instance, a smaller model like Llama3_8B runs far more efficiently on the 3090 24GB x2 setup than a larger model like Llama3_70B. The setup can handle both, but the larger model generates tokens about 6.6 times more slowly, so every token keeps the GPUs busy much longer and costs more energy.

| LLM Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama3_70B | 16.29 |
| Llama3_8B | 108.07 |
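Model size also decides what fits in the setup's 48GB of combined VRAM. A rough weight-only estimate is parameters × bits per weight ÷ 8. The sketch below uses nominal parameter counts and assumes about 4.5 bits/weight as a ballpark for a 4-bit quantized model; the effective rate depends on the exact format. It ignores the KV cache, activations, and runtime overhead, so real usage is higher. It also shows that at F16 the 70B weights alone (about 140GB) cannot fit, so any 70B result on this setup necessarily comes from a quantized model.

```python
TOTAL_VRAM_GB = 2 * 24  # two 3090 cards with 24GB each


def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Weights only: billions of params x bytes per weight = GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


# Nominal parameter counts; ASSUMPTION: ~4.5 bits/weight for a 4-bit quant.
for name, params in [("Llama3_8B", 8.0), ("Llama3_70B", 70.0)]:
    for label, bits in [("F16", 16.0), ("~4-bit", 4.5)]:
        gb = weight_gb(params, bits)
        fits = "fits" if gb < TOTAL_VRAM_GB else "does NOT fit"
        print(f"{name} {label}: ~{gb:.1f} GB -> {fits} in {TOTAL_VRAM_GB} GB")
```

A 4-bit 70B model (about 39GB of weights) fits across both cards but leaves comparatively little room for the KV cache, while the 8B model fits comfortably even at F16.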

3. Fine-Tuning for a Personalized Touch

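Think of fine-tuning as giving your LLM a personal training session. It further trains an existing model's parameters on task-specific data so that it performs that task better. This can save energy in the long run when a smaller fine-tuned model handles a job that would otherwise need a larger general-purpose one, although the fine-tuning run itself consumes energy too. Imagine teaching a dog to fetch a specific toy: you don't start its training from scratch; you refine the skills it already has.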

Fine-tuning can be a valuable tool for optimizing your LLM's performance while minimizing energy consumption. It's like streamlining your workflow and focusing on the essentials.
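Whether fine-tuning actually saves energy comes down to a break-even calculation: the one-time training cost must be repaid by cheaper inference afterwards. The back-of-the-envelope sketch below assumes a smaller fine-tuned model replaces a larger one; the function name and every input value are hypothetical placeholders, not measurements.

```python
import math


def break_even_tokens(finetune_kwh: float, j_per_token_large: float,
                      j_per_token_small: float) -> float:
    """Generated tokens after which a one-time fine-tuning cost is repaid."""
    saving_j = j_per_token_large - j_per_token_small  # energy saved per token
    if saving_j <= 0:
        return math.inf  # the fine-tuned model never pays for itself
    return finetune_kwh * 3.6e6 / saving_j  # 1 kWh = 3.6e6 J


# HYPOTHETICAL inputs, for illustration only.
tokens = break_even_tokens(finetune_kwh=5.0, j_per_token_large=30.0,
                           j_per_token_small=5.0)
print(f"Fine-tuning pays for itself after ~{tokens:,.0f} generated tokens")
```

If your workload generates fewer tokens than the break-even point over the model's useful life, fine-tuning costs more energy than it saves.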

4. Leveraging the Power of Caching

Caching is like having a secret stash of snacks readily available when you're hungry. It speeds up your LLM's operation by keeping frequently accessed data or already-computed results in fast-access memory, so they can be reused instead of fetched from slower storage or computed again. Skipping that redundant work saves power and improves efficiency.

Imagine you're craving a specific cookie. Instead of searching the entire cookie jar, you can quickly grab it from the cookie jar's secret compartment (the cache) reserved for your favorite cookies.
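One simple form of caching is a response cache: if the exact same prompt arrives twice, serve the stored answer instead of running the GPUs again. The sketch below uses Python's standard `functools.lru_cache`; `run_model` is a hypothetical stand-in for a real inference call.

```python
from functools import lru_cache

calls = 0  # counts how many times the (expensive) model actually runs


def run_model(prompt: str) -> str:
    """HYPOTHETICAL stand-in for a real LLM call; replace with your inference client."""
    global calls
    calls += 1
    return f"answer to: {prompt}"


@lru_cache(maxsize=1024)
def cached_generate(prompt: str) -> str:
    # Identical prompts are served from memory instead of re-running the model.
    return run_model(prompt)


cached_generate("What is quantization?")
cached_generate("What is quantization?")  # cache hit: no GPU work
cached_generate("What is fine-tuning?")
print(calls, cached_generate.cache_info())
```

An exact-match cache like this only helps with repeated prompts and only makes sense with deterministic decoding, since sampled outputs would otherwise vary. Inference engines also keep an internal key/value (KV) cache so they do not recompute attention over earlier tokens; that is a separate mechanism from this response cache.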

5. Sleep is Important (For Your LLM Too!)

Just like you, your LLM needs a break! If you're not actively using it, stop it! Don't let it run in the background, consuming unnecessary power. This might seem obvious, but sometimes it's the simplest things we forget.

Think of it as turning off the lights when you leave a room. Every little bit helps!
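If the model runs behind a server, "stop it when idle" can be automated with an idle timeout. The sketch below is a hypothetical helper, not part of any particular inference server: `load` and `unload` are placeholder callbacks for whatever your server provides, and the clock is injectable so the behaviour is easy to test.

```python
import time
from typing import Callable, Optional


class IdleUnloader:
    """Unload a model after `timeout_s` seconds without requests (hypothetical helper)."""

    def __init__(self, load: Callable[[], object], unload: Callable[[object], None],
                 timeout_s: float, clock: Callable[[], float] = time.monotonic):
        self._load, self._unload = load, unload
        self._timeout_s = timeout_s
        self._clock = clock
        self._model: Optional[object] = None
        self._last_used = clock()

    def request(self) -> object:
        # Load lazily on the first request after an idle period.
        if self._model is None:
            self._model = self._load()
        self._last_used = self._clock()
        return self._model

    def tick(self) -> None:
        # Call periodically (e.g. from a timer); frees the GPUs once idle too long.
        if self._model is not None and self._clock() - self._last_used >= self._timeout_s:
            self._unload(self._model)
            self._model = None

    @property
    def loaded(self) -> bool:
        return self._model is not None


# Usage with a fake clock so the timeline is easy to follow.
events = []
now = [0.0]


def fake_load():
    events.append("load")
    return "model"


def fake_unload(model):
    events.append("unload")


server = IdleUnloader(fake_load, fake_unload, timeout_s=600, clock=lambda: now[0])
server.request()   # t=0 s: model loaded
now[0] = 300.0
server.tick()      # idle 300 s < 600 s: stays loaded
now[0] = 900.0
server.tick()      # idle 900 s >= 600 s: unloaded
print(events, server.loaded)
```

Unloading frees VRAM and stops inference work, but the cards still draw idle power; shutting down the machine entirely saves more when it will be unused for long stretches.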

Conclusion: Mastering the Efficiency Game

Optimizing your LLM's performance on the 3090 24GB x2 setup doesn't require a magic spell. By following these five power-saving tips, you can unlock the full potential of your AI duo while keeping those energy bills in check.

FAQ

What is a Large Language Model (LLM)?

A Large Language Model (LLM) is a type of artificial intelligence (AI) that can understand and generate human-like text. It's trained on vast amounts of data, enabling it to perform various language-related tasks.

What is quantization?

Quantization is a technique that reduces the numerical precision of an LLM's parameters (weights), making the model more efficient in terms of memory and processing power. It's like simplifying the model's weights with only a small impact on its ability to generate coherent text.

What is fine-tuning?

Fine-tuning is a process of adjusting an LLM's parameters to make it better at a specific task. It's like teaching a model to perform a specific action, like writing poetry or translating languages.

Why is caching important for LLMs?

Caching helps speed up LLM operations by keeping frequently accessed data or previously computed results in fast-access memory. Reusing them instead of retrieving them from slower storage or recomputing them saves energy and improves performance.

How can I find the right LLM for my needs?

Consider your specific needs and the available hardware. Smaller models like Llama3_8B may be more efficient on a 3090 24GB x2 setup than larger models like Llama3_70B.

Keywords

LLM, AI, NVIDIA 3090 24GB x2, power saving, energy efficiency, quantization, fine-tuning, caching, Llama3_8B, Llama3_70B, token speed, GPU, performance optimization, AI hardware, AI operations.