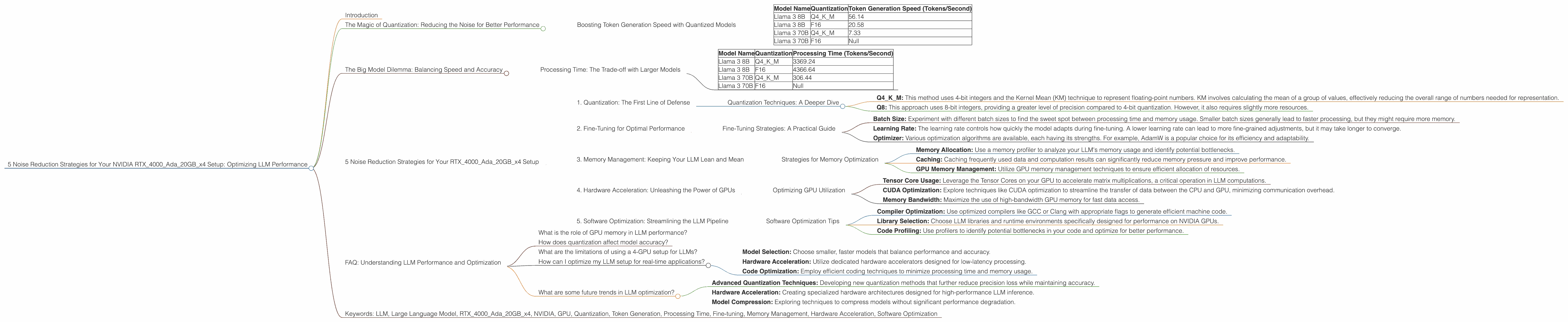

5 Noise Reduction Strategies for Your NVIDIA RTX 4000 Ada 20GB x4 Setup

Introduction

The world of Large Language Models (LLMs) is buzzing, with exciting advancements happening every day. These powerful models are revolutionizing the way we interact with information, and with a little bit of tweaking, you can unlock incredible performance on your own hardware.

This article focuses on a common concern among LLM enthusiasts: noise reduction strategies for an NVIDIA RTX 4000 Ada 20GB x4 setup (four 20 GB cards). We'll dive into quantization, explore the impact of different model sizes and precision levels on performance, and provide hands-on tips to maximize your LLM experience.

The Magic of Quantization: Reducing the Noise for Better Performance

Imagine you have a massive library filled with millions of books. This library is your LLM, and the books are its weights, stored in a specific numeric format: 32-bit floating point numbers in this analogy (many models, Llama 3 included, are distributed in 16-bit formats).

Now, imagine a librarian trying to retrieve books from this library. Every token the model generates requires reading through those weights, so the wider each number is, the more data has to be moved and the longer retrieval takes. This is where quantization comes in.

Quantization is like a librarian who simplifies the library system. Instead of wide floating point numbers, we store smaller, more compact representations, like 8-bit or 4-bit integers plus a scale factor. This "noise reduction" process trades a small amount of precision for less memory use and less data to move, which results in faster and more efficient LLM performance.
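To make the idea concrete, here is a minimal sketch of symmetric "absmax" quantization of one small block of weights. It is an illustration only, not the scheme used by any particular format; the function names and the sample weights are made up for this example.

```python
from typing import List, Tuple


def quantize(values: List[float], bits: int) -> Tuple[List[int], float]:
    """Symmetric absmax quantization of one block to signed integers."""
    qmax = 2 ** (bits - 1) - 1            # 127 for 8-bit, 7 for 4-bit
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round to the nearest integer code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integer codes and the block scale."""
    return [c * scale for c in codes]


def max_error(values: List[float], bits: int) -> float:
    """Largest absolute round-trip error for the given bit width."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up block of weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
err8 = max_error(weights, 8)
err4 = max_error(weights, 4)
print(f"8-bit max round-trip error: {err8:.4f}")
print(f"4-bit max round-trip error: {err4:.4f}")
```

The 4-bit version stores each weight in half the space of the 8-bit version but reproduces it less exactly, which is the precision-for-size trade-off described above.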

Boosting Token Generation Speed with Quantized Models

Let's take a look at the token generation speeds on our NVIDIA RTX 4000 Ada 20GB x4 setup, using Llama 3 models as a benchmark:

| Model Name | Quantization | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B | Q4KM | 56.14 |
| Llama 3 8B | F16 | 20.58 |
| Llama 3 70B | Q4KM | 7.33 |
| Llama 3 70B | F16 | Null |

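- Q4KM: This corresponds to Q4_K_M in llama.cpp's naming: a 4-bit "k-quant" format in its medium (M) variant. Weights are quantized in small blocks that each carry their own scaling information, and some tensors are kept at higher precision. It's a popular approach for achieving significant performance gains while maintaining good accuracy.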

- F16: This refers to 16-bit floating point precision, which is less demanding than the original 32-bit format.

- Null: No result is available for this model and precision combination. At F16, a 70B model needs roughly 140 GB for its weights alone, which exceeds the 80 GB of combined memory across four 20 GB cards.

As you can see, Q4KM quantization provides a significant speed boost over F16 for Llama 3 8B, the one model with results at both precisions: 56.14 tokens/second versus 20.58 tokens/second.

This represents a 173% increase in speed (about 2.7 times faster) for the quantized model. The Q4KM quantized Llama 3 70B reaches 7.33 tokens/second; no F16 figure is available for comparison. Performance gains from quantization vary depending on the model architecture and the specific quantization method.
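For readers who want to check the comparison themselves, the arithmetic is a simple ratio of the two Llama 3 8B figures from the table. The helper name below is made up for this example.

```python
from typing import Tuple


def speedup(fast: float, slow: float) -> Tuple[float, float]:
    """Return (ratio, percent increase) of `fast` relative to `slow`."""
    ratio = fast / slow
    return ratio, (ratio - 1.0) * 100.0


# Llama 3 8B token generation: Q4KM vs F16, from the table above.
ratio, pct = speedup(56.14, 20.58)
print(f"{ratio:.2f}x faster, a {pct:.0f}% increase")
# prints "2.73x faster, a 173% increase"
```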

The Big Model Dilemma: Balancing Speed and Accuracy

You might be thinking: "Bigger models are better, right?" While larger LLMs like Llama 3 70B offer increased capabilities and a wider knowledge base, they also come with a hefty performance cost.

Processing Time: The Trade-off with Larger Models

Let's examine how quickly each model processes input tokens:

| Model Name | Quantization | Processing Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B | Q4KM | 3369.24 |
| Llama 3 8B | F16 | 4366.64 |
| Llama 3 70B | Q4KM | 306.44 |
| Llama 3 70B | F16 | Null |

The numbers clearly demonstrate the impact of model size. The Llama 3 8B model with Q4KM quantization processes 3369.24 tokens/second, whereas the Llama 3 70B model with the same quantization reaches 306.44 tokens/second, roughly 11 times slower.

Note that quantization does not help at this stage the way it helps token generation: for Llama 3 8B, F16 (4366.64 tokens/second) is faster than Q4KM (3369.24 tokens/second). The gap between the 8B and 70B models highlights the trade-off between model size and performance.

While larger models provide greater potential and flexibility, they demand more computational resources and often result in slower processing times. Considering your specific needs and available resources is crucial for making the right model selection.
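One way to turn these throughput figures into something tangible is a rough end-to-end time estimate for a single request. The sketch below assumes the second table measures prompt (input) processing, that the two phases simply add up, and that speeds stay constant regardless of context length; the 1000-token prompt and 200-token reply are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Throughput:
    prompt_tps: float      # input processing speed, tokens/second
    generation_tps: float  # token generation speed, tokens/second


# Q4KM figures taken from the two tables in this article.
LLAMA3_8B_Q4KM = Throughput(prompt_tps=3369.24, generation_tps=56.14)
LLAMA3_70B_Q4KM = Throughput(prompt_tps=306.44, generation_tps=7.33)


def estimate_seconds(t: Throughput, prompt_tokens: int, output_tokens: int) -> float:
    """Rough request time: read the prompt, then generate the reply."""
    return prompt_tokens / t.prompt_tps + output_tokens / t.generation_tps


# Hypothetical request: 1000-token prompt, 200-token reply.
est_8b = estimate_seconds(LLAMA3_8B_Q4KM, 1000, 200)
est_70b = estimate_seconds(LLAMA3_70B_Q4KM, 1000, 200)
print(f"Llama 3 8B  Q4KM: {est_8b:.1f} s")
print(f"Llama 3 70B Q4KM: {est_70b:.1f} s")
```

Under these assumptions the 8B model answers in about 4 seconds and the 70B model in about 31 seconds, with most of the time spent generating rather than reading the prompt.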

5 Noise Reduction Strategies for Your NVIDIA RTX 4000 Ada 20GB x4 Setup

Now that you have a better understanding of the factors affecting performance, let's delve into practical strategies to optimize your LLM setup.

1. Quantization: The First Line of Defense

We've already covered the benefits of quantization, making it the most impactful starting point. Experimenting with different quantization techniques, like Q4KM or Q8, can unlock significant performance gains.

Quantization Techniques: A Deeper Dive

- Q4KM: This method stores weights as 4-bit integers using llama.cpp's k-quant scheme (Q4_K_M, where M is the medium variant). Weights are grouped into blocks, each block stores scaling information so its 4-bit values can be mapped back to approximate floating-point numbers, and some tensors are kept at higher precision to limit accuracy loss.

- Q8: This approach uses 8-bit integers, providing a greater level of precision compared to 4-bit quantization. However, it also requires slightly more resources.

Remember: Balancing performance and accuracy is key. Start with Q4KM and gradually explore Q8 or other methods to see what works best for your specific use case.
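When choosing between formats, it helps to estimate how much memory the weights alone will need. The sketch below uses nominal parameter counts and approximate bits-per-weight values; the 8.5 and 4.85 figures are assumptions for illustration (block scales add overhead beyond the nominal 8 or 4 bits), and the estimate ignores activations and caches.

```python
# Approximate storage cost per weight, in bits (assumed values).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8": 8.5, "Q4KM": 4.85}

# Nominal parameter counts.
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

TOTAL_VRAM_GB = 4 * 20  # four 20 GB cards


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for model, params in PARAMS.items():
    for fmt, bpw in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bpw)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"{model:12s} {fmt:5s} {size:7.1f} GB  ({verdict} in {TOTAL_VRAM_GB} GB)")
```

A result of "fits" only means the weights are smaller than the combined memory; a model that barely fits leaves little room for anything else.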

2. Fine-Tuning for Optimal Performance

Just like a race car driver fine-tunes their vehicle for a specific track, you can fine-tune your LLM for your specific task on your NVIDIA RTX 4000 Ada 20GB x4 setup.

This process adjusts the model's parameters to better suit your task. It does not by itself make inference faster, but a well-tuned smaller model can sometimes stand in for a larger, slower one. Tools like Hugging Face's Transformers library provide convenient fine-tuning options, and the settings below determine whether a fine-tuning run fits your hardware and how efficiently it uses it.

Fine-Tuning Strategies: A Practical Guide

- Batch Size: Experiment with different batch sizes to find the sweet spot between processing time and memory usage. Larger batch sizes generally improve throughput but require more memory; smaller batch sizes fit in less memory at the cost of throughput.

- Learning Rate: The learning rate controls how quickly the model adapts during fine-tuning. A lower learning rate can lead to more fine-grained adjustments, but it may take longer to converge.

- Optimizer: Various optimization algorithms are available, each having its strengths. For example, AdamW is a popular choice for its efficiency and adaptability.

Tip: Start with small adjustments and monitor your model's performance. Gradually refine your fine-tuning parameters until you achieve the desired results.
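As a planning aid, the sketch below shows how the batch-size and learning-rate settings interact on a four-GPU machine. The class, its field names, and its default values are hypothetical and not tied to any library; the linear warmup is just one common choice of schedule.

```python
from dataclasses import dataclass


@dataclass
class FineTuneConfig:
    per_gpu_batch_size: int = 2   # samples per GPU per step (memory-bound)
    grad_accum_steps: int = 8     # steps accumulated before each update
    num_gpus: int = 4
    learning_rate: float = 2e-5   # peak learning rate
    warmup_steps: int = 100

    @property
    def effective_batch_size(self) -> int:
        """Samples contributing to each optimizer update."""
        return self.per_gpu_batch_size * self.grad_accum_steps * self.num_gpus

    def lr_at(self, step: int) -> float:
        """Linear warmup to the peak learning rate, then constant."""
        if self.warmup_steps <= 0:
            return self.learning_rate
        return self.learning_rate * min(1.0, (step + 1) / self.warmup_steps)


cfg = FineTuneConfig()
print("effective batch size:", cfg.effective_batch_size)
print("learning rate at step 49:", cfg.lr_at(49))
print("learning rate at step 1000:", cfg.lr_at(1000))
```

Gradient accumulation lets you keep the per-GPU batch small enough to fit in 20 GB while still training with a larger effective batch.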

3. Memory Management: Keeping Your LLM Lean and Mean

LLMs, especially larger models, can be memory hogs. Effective memory management is crucial for smooth performance and preventing crashes.

Strategies for Memory Optimization

- Memory Allocation: Use a memory profiler to analyze your LLM's memory usage and identify potential bottlenecks.

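- Caching: Caching frequently used data and computation results (for example, the attention key/value cache during generation) avoids repeated work and improves performance, at the cost of additional memory, so size caches with that in mind.

- GPU Memory Management: Spread the model across all four GPUs so that no single 20 GB card is overcommitted, and leave headroom on each card for activations and caches.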

Tip: Monitor your GPU memory usage during LLM training and inference to identify any potential issues and implement appropriate adjustments.
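A lightweight way to do this monitoring is to poll `nvidia-smi` and flag cards that are close to full. The sketch below separates parsing from the actual call so it can be tried without a GPU; the helper names, the 90% threshold, and the sample readings are made up for illustration.

```python
import subprocess
from typing import List, Tuple

# Ask nvidia-smi for per-GPU memory as plain CSV (values in MiB).
QUERY = [
    "nvidia-smi",
    "--query-gpu=index,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_memory(csv_text: str) -> List[Tuple[int, int, int]]:
    """Parse 'index, used, total' lines into integer tuples."""
    rows = []
    for line in csv_text.strip().splitlines():
        index, used, total = (int(field.strip()) for field in line.split(","))
        rows.append((index, used, total))
    return rows


def read_gpu_memory() -> List[Tuple[int, int, int]]:
    """Run nvidia-smi and return (index, used MiB, total MiB) per GPU."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_memory(result.stdout)


def nearly_full(rows: List[Tuple[int, int, int]], threshold: float = 0.9) -> List[int]:
    """Indices of GPUs whose memory use is at or above the threshold."""
    return [index for index, used, total in rows if used / total >= threshold]


# Made-up readings standing in for real nvidia-smi output.
sample = "0, 18500, 20475\n1, 9000, 20475\n"
print(nearly_full(parse_memory(sample)))  # prints [0]
```

On a machine with the NVIDIA driver installed, `nearly_full(read_gpu_memory())` performs the same check against live readings.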

4. Hardware Acceleration: Unleashing the Power of GPUs

Harnessing the power of your four NVIDIA RTX 4000 Ada 20GB GPUs is essential for maximizing LLM performance.

Optimizing GPU Utilization

- Tensor Core Usage: Leverage the Tensor Cores on your GPU to accelerate matrix multiplications, a critical operation in LLM computations.

- CUDA Optimization: Minimize data transfers between the CPU and GPU, for example by keeping the whole model in GPU memory, to reduce communication overhead.

- Memory Bandwidth: Maximize the use of high-bandwidth GPU memory for fast data access.

Tip: Ensure that your LLM library and runtime environment are properly configured to utilize the GPU effectively.

5. Software Optimization: Streamlining the LLM Pipeline

The software you use to run your LLM model plays an important role in performance.

Software Optimization Tips

- Compiler Optimization: Use optimized compilers like GCC or Clang with appropriate flags to generate efficient machine code.

- Library Selection: Choose LLM libraries and runtime environments specifically designed for performance on NVIDIA GPUs.

- Code Profiling: Use profilers to identify potential bottlenecks in your code and optimize for better performance.

Tip: Always keep your software updated to take advantage of the latest performance improvements and bug fixes.
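Before reaching for a full profiler, a simple timing harness can tell you whether a change actually moved the number you care about. The sketch below measures tokens per second for any callable that yields tokens; `fake_generate` is a stand-in for a real model call and is purely hypothetical.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[object]]) -> float:
    """Time one generation run and return its throughput."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())   # consume every token as it arrives
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate() -> Iterable[int]:
    """Stand-in for a model: emits 50 tokens, about 1 ms apart."""
    for token in range(50):
        time.sleep(0.001)
        yield token


rate = tokens_per_second(fake_generate)
print(f"{rate:.0f} tokens/second")
```

Run the same measurement several times and compare averages, since a single run can be skewed by warm-up and background activity.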

FAQ: Understanding LLM Performance and Optimization

What is the role of GPU memory in LLM performance?

GPU memory is a critical component for LLM performance. It stores the model parameters and data used during computation. A larger amount of GPU memory allows for larger models and more complex tasks, reducing memory bottlenecks and increasing efficiency.

How does quantization affect model accuracy?

Quantization can reduce model accuracy by reducing the precision of numerical representations. However, techniques like Q4KM are designed to minimize this accuracy loss while providing significant performance gains. Experimentation is key to finding the right balance.

What are the limitations of using a 4-GPU setup for LLMs?

While a 4-GPU setup offers significant performance boosts, four 20 GB cards do not behave like a single 80 GB card. The model has to be split across the GPUs, intermediate results have to be exchanged between them, and each card's 20 GB is its own limit, so synchronization overhead and per-GPU memory bottlenecks should be addressed.
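One common way to divide a model is to place contiguous groups of layers on each GPU. The sketch below shows only the bookkeeping for an even split; it is not a statement of how the benchmarks in this article were run, and the function name is made up. Llama 3 70B has 80 transformer layers, which is the example used here.

```python
from typing import List, Tuple


def split_layers(num_layers: int, num_gpus: int) -> List[Tuple[int, int]]:
    """Assign contiguous [start, end) layer ranges to each GPU as evenly as possible."""
    base, extra = divmod(num_layers, num_gpus)
    ranges = []
    start = 0
    for gpu in range(num_gpus):
        size = base + (1 if gpu < extra else 0)  # first `extra` GPUs take one more
        ranges.append((start, start + size))
        start += size
    return ranges


# 80 layers across 4 GPUs.
for gpu, (start, end) in enumerate(split_layers(80, 4)):
    print(f"GPU {gpu}: layers {start}..{end - 1}")
```

With this kind of split, each token's computation passes from one GPU to the next, which is where the exchange of intermediate results comes from.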

How can I optimize my LLM setup for real-time applications?

Real-time applications, such as chatbots, often require low latency. Optimizing for real-time performance involves strategies like:

- Model Selection: Choose smaller, faster models that balance performance and accuracy.

- Hardware Acceleration: Utilize dedicated hardware accelerators designed for low-latency processing.

- Code Optimization: Employ efficient coding techniques to minimize processing time and memory usage.

What are some future trends in LLM optimization?

Future trends in LLM optimization focus on:

- Advanced Quantization Techniques: Developing new quantization methods that reduce bit widths further while maintaining accuracy.

- Hardware Acceleration: Creating specialized hardware architectures designed for high-performance LLM inference.

- Model Compression: Exploring techniques to compress models without significant accuracy degradation.