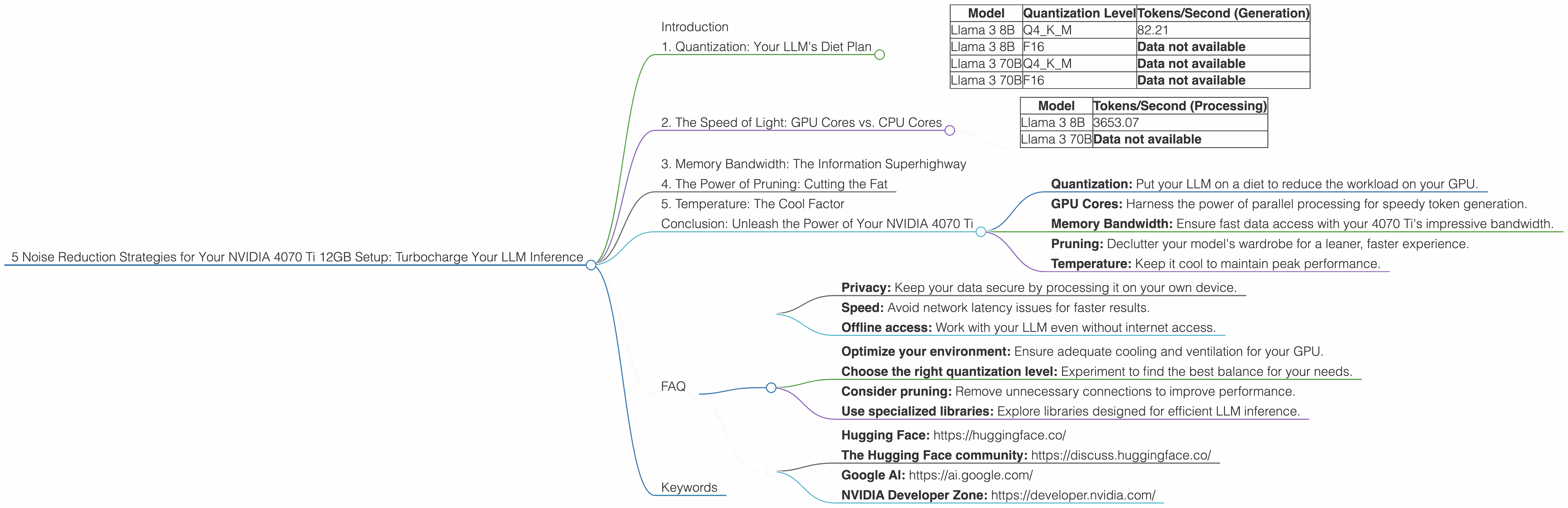

5 Noise Reduction Strategies for Your NVIDIA 4070 Ti 12GB Setup

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and rightfully so! These AI marvels can generate human-like text, translate languages, write many kinds of creative content, and answer your questions in an informative way. But running these models on your local machine can be a bit like trying to hold a conversation in a crowded room: noisy!

Your NVIDIA 4070 Ti 12GB graphics card is a beast, but even it can struggle to keep up with the demands of LLMs, especially the larger ones, because its 12 GB of VRAM limits which models fit. This article explores 5 key strategies to tame the noise and get smooth, efficient, and fast LLM inference out of your NVIDIA 4070 Ti. Think of it as learning to filter out the background chatter so you can actually enjoy the conversation or, in this case, enjoy the power of your LLM without the lag.

1. Quantization: Your LLM's Diet Plan

Imagine this: you're trying to cook a complicated dish with a mountain of ingredients, but your kitchen is tiny. It's a recipe for disaster! LLMs are like that recipe: they are packed with billions of parameters (weights), and every one of them has to be stored in memory and processed, which takes time and resources.

Quantization is like putting your LLM on a diet: it shrinks the model by storing its weights with fewer bits (for example, 4 bits per weight instead of 16). It's like using smaller containers for your ingredients, so more of them fit in your kitchen. For your GPU, a smaller model means less VRAM used and less data to move for every token, which translates directly into speed.
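To make "fewer bits" concrete, here is a minimal Python sketch of block-wise 4-bit quantization with one absmax scale per block. It is an illustration under simplifying assumptions, not llama.cpp's actual Q4_K_M layout, which (roughly) groups small blocks into super-blocks with their own quantized scales and minimums and keeps some tensors at higher precision. The function names and sample weights are hypothetical.

```python
# Minimal sketch of symmetric block-wise 4-bit quantization (absmax scaling).
# Illustrative only: NOT llama.cpp's exact Q4_K_M format.

def quantize_block(weights, bits=4):
    """Map floats to signed integers in [-(2**(bits-1) - 1), 2**(bits-1) - 1]."""
    qmax = 2 ** (bits - 1) - 1                  # 7 for 4-bit
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Each weight becomes a small integer; only the integers and one scale are stored.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale

def dequantize_block(q, scale):
    """Recover approximate floats from the stored integers and the block's scale."""
    return [v * scale for v in q]

weights = [0.12, -0.53, 0.98, -0.09, 0.31, -0.88, 0.45, 0.02]  # hypothetical values
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print("quantized:", q)
print("max reconstruction error:", round(max_err, 4))
```

The rounding error per weight is at most half the scale, which is the accuracy you trade for storing 4 bits instead of 16.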

For your NVIDIA 4070 Ti, quantization is a must. Let's look at the numbers:

| Model | Quantization level | Tokens/second (generation) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 82.21 |
| Llama 3 8B | F16 | Data not available |
| Llama 3 70B | Q4_K_M | Data not available |
| Llama 3 70B | F16 | Data not available |

Q4_K_M is our recommended quantization level for the 4070 Ti. It hits the sweet spot between preserving accuracy and shrinking the model enough to run fast.

Note:

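- F16 (16-bit floating point) is not a lower quantization level: it is the higher-precision, essentially unquantized format. It is roughly three times larger than Q4_K_M and generally slower for token generation.

- Unfortunately, we don't have F16 data for this model and GPU. At 2 bytes per parameter, Llama 3 8B needs about 16 GB for its weights alone, more than the card's 12 GB of VRAM, which likely explains the gap. The same arithmetic rules out Llama 3 70B on a single 12 GB card even at Q4_K_M (over 40 GB of weights).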
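The "does it fit?" question is simple arithmetic: parameters times bits per weight, divided by 8. Here is a rough Python sketch. The rounded parameter counts and the ~4.85 bits/weight average for Q4_K_M are assumptions, not figures from the table, and the estimate covers weights only; the KV cache and runtime buffers need additional VRAM.

```python
# Rough VRAM estimate for model weights only (no KV cache, no runtime buffers).
# Assumptions: rounded parameter counts; ~4.85 bits/weight average for Q4_K_M.

VRAM_GB = 12  # NVIDIA 4070 Ti

def weight_gb(params_billion, bits_per_weight):
    """Billions of parameters x bits per weight / 8 bits per byte = gigabytes."""
    return params_billion * bits_per_weight / 8

configs = {
    ("Llama 3 8B", "Q4_K_M"): (8, 4.85),
    ("Llama 3 8B", "F16"): (8, 16),
    ("Llama 3 70B", "Q4_K_M"): (70, 4.85),
    ("Llama 3 70B", "F16"): (70, 16),
}

for (model, quant), (params, bits) in configs.items():
    gb = weight_gb(params, bits)
    verdict = "fits" if gb < VRAM_GB else "does not fit"
    print(f"{model:12} {quant:7} ~{gb:6.1f} GB -> {verdict} in {VRAM_GB} GB")
```

Only the 8B model at Q4_K_M leaves headroom on a 12 GB card, which matches the one row of the table that has data.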

Key Takeaway: Quantization is a crucial optimization for LLMs. It can dramatically improve your performance, but it's important to find the right balance for your specific model and device.

2. The Speed of Light: GPU Cores vs. CPU Cores

You've probably heard the saying "Time is money." Well, in the LLM world, tokens are money. And your NVIDIA 4070 Ti is like a printing press for those tokens.

GPU cores are designed for massive parallel processing: they're like many individual workers on an assembly line, all doing the same task simultaneously. This makes them ideal for the large matrix multiplications at the heart of LLM inference.

Here's the breakdown for your 4070 Ti:

| Model | Prompt processing (tokens/second) |
|---|---|
| Llama 3 8B | 3653.07 |
| Llama 3 70B | Data not available |

Remember: We don't have data for the Llama 3 70B model on the 4070 Ti.

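Key Takeaway: Prompt processing is compute-bound. The tokens of your prompt are processed in parallel, so more GPU cores mean more tokens per second, just like a larger assembly line. That is why the 4070 Ti processes prompts (3653.07 tokens/second) far faster than it generates new text (82.21 tokens/second), which happens one token at a time and depends more on memory bandwidth. Your 4070 Ti's GPU cores are your secret weapon for fast prompt processing.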
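To see how different the two workloads are, here is a back-of-the-envelope Python sketch using a common rule of thumb: a dense transformer spends about 2 FLOPs per parameter per token. The rule of thumb and the rounded 8e9 parameter count are assumptions; the two token rates are the article's Llama 3 8B figures.

```python
# Back-of-the-envelope compute estimate.
# Assumption: ~2 FLOPs per parameter per token (common rule of thumb).
# PARAMS is rounded; the token rates are the article's Llama 3 8B numbers.

PARAMS = 8e9             # Llama 3 8B, rounded
PROMPT_TOK_S = 3653.07   # prompt processing rate
GEN_TOK_S = 82.21        # generation rate (Q4_K_M)

def implied_tflops(tokens_per_second, params=PARAMS):
    """Effective TFLOP/s implied by a token rate under the 2*N FLOPs/token rule."""
    return 2 * params * tokens_per_second / 1e12

print(f"prompt processing: ~{implied_tflops(PROMPT_TOK_S):.1f} TFLOP/s")
print(f"generation:        ~{implied_tflops(GEN_TOK_S):.1f} TFLOP/s")
print(f"ratio:             ~{PROMPT_TOK_S / GEN_TOK_S:.0f}x")
```

Prompt processing works out to roughly 58 TFLOP/s of useful math versus about 1.3 TFLOP/s during generation, a gap of about 44x. Generation leaves most of the cores idle while waiting on memory, which is the subject of the next section.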

3. Memory Bandwidth: The Information Superhighway

Imagine a supermarket checkout line – you need a fast cashier to handle all the goods (data), and you need wide lanes (memory bandwidth) to get everything through smoothly.

Memory bandwidth is the rate at which your GPU can read and write data in its own VRAM. It is critical for LLM inference because generating each new token requires reading essentially all of the model's weights from memory, so bandwidth is the lane width of your checkout line.

The NVIDIA 4070 Ti has a 192-bit GDDR6X memory bus rated at about 504 GB/s. The faster the weights can be streamed to the GPU cores, the faster the tokens flow.
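Because each generated token streams roughly the whole model from VRAM, bandwidth divided by model size gives a rough ceiling on generation speed. The Python sketch below assumes the 504 GB/s rated bandwidth and an approximate 4.9 GB size for Llama 3 8B at Q4_K_M; it ignores KV-cache reads and other overheads, so treat it as an upper bound, not a prediction.

```python
# Rough upper bound on tokens/s for memory-bound generation.
# Assumptions: 504 GB/s rated bandwidth; ~4.9 GB for Llama 3 8B at Q4_K_M;
# KV-cache traffic and kernel overheads are ignored.

BANDWIDTH_GB_S = 504.0
MODEL_GB = 4.9
MEASURED_TOK_S = 82.21   # generation rate from the quantization table

def max_tokens_per_second(bandwidth_gb_s, model_gb):
    """Ceiling on tokens/s if every token reads all weights exactly once."""
    return bandwidth_gb_s / model_gb

ceiling = max_tokens_per_second(BANDWIDTH_GB_S, MODEL_GB)
efficiency = MEASURED_TOK_S / ceiling
print(f"ceiling ~{ceiling:.0f} tok/s, measured {MEASURED_TOK_S} tok/s "
      f"({efficiency:.0%} of ceiling)")
```

The ceiling comes out at roughly 103 tokens/second, so the measured 82.21 tokens/second is about 80% of it. This also explains why quantization speeds up generation: halving the model size roughly doubles the ceiling.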

Key Takeaway: High memory bandwidth is like having a wide superhighway to speed up data transfer, making your LLM inference smoother. Your 4070 Ti is well-equipped for the task with its high bandwidth.

4. The Power of Pruning: Cutting the Fat

Imagine you have a huge pile of clothes, but you only wear a small fraction regularly. You could spend hours searching for what you need, or you could pare down your closet!

LLM pruning is like decluttering your model's wardrobe. It removes weights (or larger units such as neurons, attention heads, or layers) that contribute little to the output. The result is a smaller model with fewer computations to perform, which can lead to faster inference.

Pruning is a powerful optimization technique, but it can be complex. It requires careful attention, often followed by fine-tuning, to avoid sacrificing accuracy. And scattered zeroed-out weights only speed things up if your inference software has kernels that exploit that sparsity.

Key Takeaway: Pruning can significantly improve your LLM's performance, but just as when editing your wardrobe, you need to be careful about what you cut! It's a process that needs to be done with precision and consideration.
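The simplest form is unstructured magnitude pruning: zero out the weights with the smallest absolute values. The Python sketch below shows only that selection step on a hypothetical list of weights; real pipelines work per tensor or per layer and usually fine-tune afterwards to recover accuracy.

```python
# Minimal sketch of unstructured magnitude pruning on a flat list of weights.
# Hypothetical data; real pruning operates on model tensors and is usually
# followed by fine-tuning. Zeros only save time with sparsity-aware kernels.

def magnitude_prune(weights, sparsity):
    """Return a copy with the `sparsity` fraction of smallest-|w| weights set to 0."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]

layer = [0.12, -0.53, 0.98, -0.09, 0.31, -0.88, 0.45, 0.02]
pruned = magnitude_prune(layer, sparsity=0.5)
print(pruned)
```

At 50% sparsity, the four smallest-magnitude weights (0.02, -0.09, 0.12, 0.31) are zeroed and the four largest survive unchanged.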

5. Temperature: The Cool Factor

Think about a fast-food restaurant. When the kitchen gets too hot, things start to slow down. The same is true for your GPU: when it reaches its thermal limit, it lowers its clock speeds (thermal throttling), and your LLM slows down with it.

How well a 4070 Ti stays cool depends on the specific card's cooler design and on your case airflow. A well-cooled card can hold its boost clocks throughout a long session, so your LLM keeps running at full speed.

Key Takeaway: Just like a well-ventilated kitchen, keeping your GPU cool is critical for peak performance. Good cooling and airflow help your 4070 Ti deliver a smooth and efficient LLM experience.
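One way to check for throttling is to watch temperature and clock speed together while a model is generating. Here is a minimal Python sketch that polls `nvidia-smi` (installed with the NVIDIA driver) using its `--query-gpu` option. The choice of fields, the sampling interval, and the sample values in the docstring are illustrative assumptions.

```python
# Minimal sketch: poll GPU temperature, SM clock, utilization, and power via nvidia-smi.
# If the SM clock drops while temperature sits near its limit under steady load,
# thermal throttling is a likely cause.
import shutil
import subprocess
import time

FIELDS = ["temperature.gpu", "clocks.sm", "utilization.gpu", "power.draw"]

def _to_float(text):
    """Parse one value; non-numeric entries such as '[N/A]' become None."""
    try:
        return float(text.strip())
    except ValueError:
        return None

def parse_line(line):
    """Turn one CSV row (illustrative: '64, 2610, 98, 230.15') into a dict."""
    return dict(zip(FIELDS, (_to_float(v) for v in line.split(","))))

def read_gpu_stats():
    """Query GPU 0 once; requires nvidia-smi on PATH."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={','.join(FIELDS)}",
         "--format=csv,noheader,nounits", "--id=0"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_line(out.strip().splitlines()[0])

if __name__ == "__main__":
    if shutil.which("nvidia-smi") is None:
        print("nvidia-smi not found; is the NVIDIA driver installed?")
    else:
        for _ in range(5):        # five samples, one per second
            print(read_gpu_stats())
            time.sleep(1)
```

Run it in a second terminal during a long generation; a steady clock at a stable temperature means cooling is keeping up.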

Conclusion: Unleash the Power of Your NVIDIA 4070 Ti

Your NVIDIA 4070 Ti is a powerful tool for running LLMs. By applying these 5 noise reduction strategies, you can unlock its full potential and experience the speed, efficiency, and joy of working with these incredible models. Remember:

- Quantization: Put your LLM on a diet to reduce the workload on your GPU.

- GPU Cores: Harness the power of parallel processing for speedy token generation.

- Memory Bandwidth: Ensure fast data access with your 4070 Ti's impressive bandwidth.

- Pruning: Declutter your model's wardrobe for a leaner, faster experience.

- Temperature: Keep it cool to maintain peak performance.

With these strategies in hand, you'll be able to get the most out of your NVIDIA 4070 Ti and enjoy the power of LLMs on your own machine.

FAQ

Q: What are the benefits of running LLMs locally?

A: Local inference offers several advantages, including:

- Privacy: Keep your data secure by processing it on your own device.

- Speed: Avoid network latency issues for faster results.

- Offline access: Work with your LLM even without internet access.

Q: What are the best practices for minimizing noise in my LLM setup?

A:

- Optimize your environment: Ensure adequate cooling and ventilation for your GPU.

- Choose the right quantization level: Experiment to find the best balance for your needs.

- Consider pruning: Remove unnecessary connections to improve performance.

- Use specialized libraries: Explore inference engines designed for efficient local LLM inference, such as llama.cpp.

Q: How do I know if my LLM is running efficiently?

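A: Monitor your GPU utilization, memory consumption, temperature, and token generation speed. `nvidia-smi` reports the first three, and most inference tools report tokens per second. Look for bottlenecks or performance dips, such as generation slowing down over a long session.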
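If your tool doesn't report tokens per second, you can time a streaming generator yourself. In the minimal Python sketch below, `fake_generate` is a hypothetical stand-in for whatever streaming API your inference library provides. Note that this wall-clock measurement includes the time to the first token (prompt processing), so it will read slightly lower than a pure generation rate.

```python
# Minimal sketch for measuring generation throughput from a token stream.
# `fake_generate` is hypothetical; replace it with your runtime's streaming output.
import time

def tokens_per_second(token_stream):
    """Consume an iterable of tokens and return (token count, tokens per second)."""
    start = time.perf_counter()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = time.perf_counter() - start
    return count, (count / elapsed if elapsed > 0 else float("inf"))

def fake_generate(n, delay_s=0.01):
    """Hypothetical generator yielding n tokens with a fixed delay per token."""
    for i in range(n):
        time.sleep(delay_s)
        yield f"tok{i}"

count, rate = tokens_per_second(fake_generate(50))
print(f"{count} tokens at {rate:.1f} tokens/s")
```

Comparing your own number with the 82.21 tokens/second figure for Llama 3 8B at Q4_K_M is a quick sanity check that your setup is healthy.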

Q: What resources are available for learning more about LLM optimization?

A: Many online resources, forums, and communities offer valuable insights and guidance. Here are a few:

- Hugging Face: https://huggingface.co/

- The Hugging Face community: https://discuss.huggingface.co/

- Google AI: https://ai.google.com/

- NVIDIA Developer Zone: https://developer.nvidia.com/
