5 Limitations of Apple M3 Max for AI (and How to Overcome Them)

Introduction

The Apple M3 Max chip is a powerhouse for creative professionals, offering incredible performance for video editing, 3D rendering, and other demanding tasks. But how does it fare in the world of AI? While the M3 Max packs a punch, it’s not without its limitations when it comes to running large language models (LLMs) like Llama 2 or Llama 3.

This article will explore five key limitations of the Apple M3 Max for AI work and provide practical ways to overcome them. Whether you’re a developer experimenting with LLMs or simply curious about the capabilities of the M3 Max, this guide will equip you with the knowledge to make the most of this powerful chip.

Limitation 1: Apple M3 Max Token Generation Speed: Not as Fast as We'd Like

The M3 Max, with its 40 GPU cores and 400 GB/s of memory bandwidth, is a beast of a chip. However, its token generation speed with large language models can fall short of expectations, especially for the largest models.

Let's use an analogy: imagine a marathon runner with incredible strength and endurance who isn't quite fast enough to set a course record. The M3 Max is like that runner: it can handle the workload of running LLMs, but its token generation speed, which determines how quickly the model produces text, isn't at the top of the leaderboard.

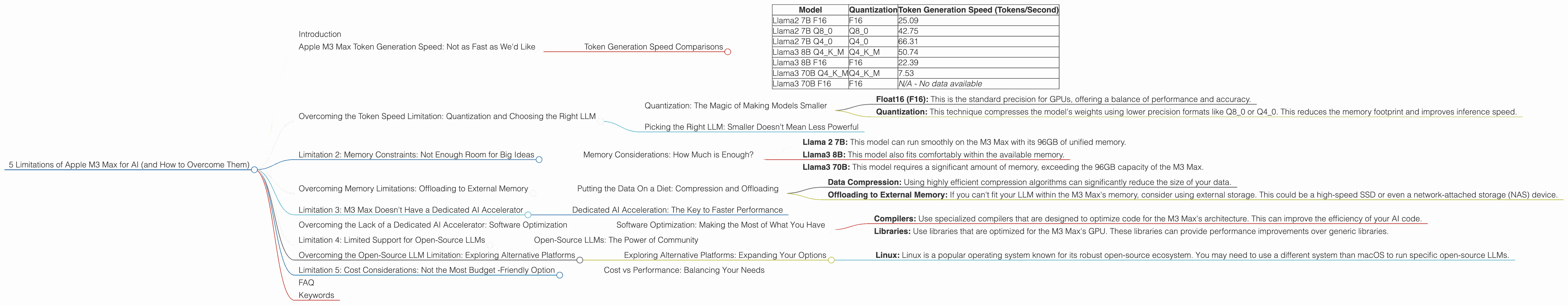

Token Generation Speed Comparisons

Below are some comparison points for Llama 2 and Llama 3 models using different quantization levels:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 2 7B | F16 | 25.09 |
| Llama 2 7B | Q8_0 | 42.75 |
| Llama 2 7B | Q4_0 | 66.31 |
| Llama 3 8B | Q4_K_M | 50.74 |
| Llama 3 8B | F16 | 22.39 |
| Llama 3 70B | Q4_K_M | 7.53 |
| Llama 3 70B | F16 | N/A (no data available) |

Key Observations:

- Smaller Models Faster: At the same precision, the smaller Llama 2 7B generates tokens faster than the larger Llama 3 8B (25.09 vs. 22.39 tokens/second at F16), and Llama 3 70B is far slower still. This is expected: generating each token requires reading essentially all of the model's weights from memory, so speed falls roughly in proportion to the model's size in bytes.

- Quantization Matters: Quantization has a large effect on token generation speed. Lower-precision formats (Q8_0, Q4_0, and Q4_K_M) are noticeably faster than unquantized F16 weights; Q4_0 more than doubles Llama 2 7B's speed (66.31 vs. 25.09 tokens/second). Quantization shrinks the model, so fewer bytes must be read from memory for every token.

- Llama3 70B Limitations: There is no data for Llama 3 70B at F16. That is unsurprising: at 2 bytes per parameter, its weights alone would take about 140 GB, more than the M3 Max's memory.
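The bandwidth argument above can be turned into a quick sanity check. If every weight is read once per token, memory bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below is an estimate under stated assumptions, not a benchmark: it uses the 400 GB/s figure quoted earlier, an approximate parameter count for Llama 2 7B, and approximate bits per weight for each format, and it ignores the KV cache and compute time. The helper names are illustrative.

```python
# Rough upper bound on token-generation speed for a memory-bandwidth-bound LLM.
# Assumptions (not measurements): every weight is read once per token, the KV cache
# and compute time are ignored, and "GB" means 10**9 bytes.

BANDWIDTH_GB_S = 400.0  # M3 Max (40-core GPU) memory bandwidth quoted in the article

# Approximate bits stored per weight for each format.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,  # 32 int8 values + one 16-bit scale per block of 32 weights
    "Q4_0": 4.5,  # 32 4-bit values + one 16-bit scale per block of 32 weights
}


def model_size_gb(params_billions: float, fmt: str) -> float:
    """Size of the weights in GB for a given parameter count and format."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8


def max_tokens_per_second(params_billions: float, fmt: str,
                          bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Ceiling on tokens/s if reading the weights were the only cost."""
    return bandwidth_gb_s / model_size_gb(params_billions, fmt)


if __name__ == "__main__":
    params = 6.7  # Llama 2 7B has roughly 6.7 billion parameters
    for fmt in ("F16", "Q8_0", "Q4_0"):
        size = model_size_gb(params, fmt)
        ceiling = max_tokens_per_second(params, fmt)
        print(f"Llama 2 7B {fmt}: ~{size:.1f} GB, ceiling ~{ceiling:.0f} tokens/s")
```

The measured 25.09, 42.75, and 66.31 tokens/second in the table all sit below these ceilings, and the gap widens at lower precision, which suggests other costs (such as dequantizing weights) start to matter. Treat the estimate as a plausibility check only.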

Overcoming the Token Speed Limitation: Quantization and Choosing the Right LLM

Quantization: The Magic of Making Models Smaller

Imagine you have a giant book full of knowledge, but it's so heavy you can barely lift it. Quantization is like condensing that book into a smaller, more manageable edition. Most of the content survives, but some fine detail is lost, so a quantized model can be slightly less accurate than the original.

In the context of LLMs, quantization stores the model's weights (the numbers that encode its knowledge) with fewer bits each. Smaller weights take less memory and can be read faster, so the model generates tokens more quickly.

Here's how it works:

- Float16 (F16): Each weight takes 2 bytes. This is the unquantized baseline in the table above: the most accurate option, but also the largest and slowest.

- Quantization: Formats like Q8_0 (about 8.5 bits per weight) and Q4_0 (about 4.5 bits per weight) store each weight as a small integer plus a scale factor shared by a block of weights. This reduces the memory footprint and improves inference speed.

As the table above shows, Q8_0 and Q4_0 raise Llama 2 7B from 25.09 to 42.75 and 66.31 tokens/second, and Q4_K_M lifts Llama 3 8B from 22.39 to 50.74 tokens/second.
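To make the idea concrete, here is a simplified sketch of symmetric block quantization, in the spirit of llama.cpp's Q8_0 format: weights are split into blocks of 32, and each block keeps one floating-point scale plus small integers. It is illustrative only; it does not reproduce the exact GGUF bit layout (real Q4_0, for example, uses a slightly different integer range), and the function names are hypothetical.

```python
# Simplified symmetric block quantization (illustrative; not the exact GGUF layout).
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # llama.cpp's Q8_0 and Q4_0 also group weights in blocks of 32


def quantize_block(values: List[float], bits: int) -> Tuple[float, List[int]]:
    """Map floats to integers in [-qmax, qmax] that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate floats from a block's scale and integers."""
    return [scale * x for x in q]


def quantize(weights: List[float], bits: int) -> List[Tuple[float, List[int]]]:
    """Quantize a flat list of weights block by block."""
    return [quantize_block(weights[i:i + BLOCK_SIZE], bits)
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: List[Tuple[float, List[int]]]) -> List[float]:
    """Concatenate the dequantized blocks back into one list."""
    out: List[float] = []
    for scale, q in blocks:
        out.extend(dequantize_block(scale, q))
    return out


if __name__ == "__main__":
    weights = [math.sin(i * 0.37) * 0.05 for i in range(128)]  # toy "weights"
    for bits in (8, 4):
        restored = dequantize(quantize(weights, bits))
        max_err = max(abs(a - b) for a, b in zip(weights, restored))
        print(f"{bits}-bit: max abs error {max_err:.5f}")
```

Storing one small integer per weight plus one scale per block is why these formats cost a fraction of F16's 16 bits per weight, and the 4-bit version shows a visibly larger reconstruction error than the 8-bit one, which mirrors the speed-versus-accuracy trade-off described above.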

Picking the Right LLM: Smaller Doesn't Mean Less Powerful

The most powerful tool isn't always the best one for the job. Using a sledgehammer to crack a nut works, but it's hardly the optimal solution.

For your AI tasks, you might not actually need the size and complexity of Llama 3 70B. Consider starting with a more manageable model like Llama 2 7B or Llama 3 8B, or a smaller fine-tuned variant. As the table shows, these models run several times faster on the M3 Max while still delivering impressive results for many tasks.

Limitation 2: Memory Constraints: Not Enough Room for Big Ideas

The M3 Max can be configured with up to 128GB of unified memory, and the 96GB configuration discussed here is plenty for most tasks. With large LLMs like Llama 3 70B, however, even 96GB can feel cramped: it rules out running the largest models at full F16 precision, and fine-tuning them on-device needs even more memory than running them does.

Memory Considerations: How Much is Enough?

Think of memory like a workspace. The bigger the workspace, the more projects you can work on simultaneously without running out of space.

- Llama 2 7B: At F16, its weights take roughly 13.5 GB, so it runs comfortably in the M3 Max's 96GB of unified memory.

- Llama3 8B: At roughly 16 GB at F16, this model also fits comfortably.

- Llama3 70B: At F16, its weights alone take roughly 140 GB, well beyond 96GB. A 4-bit quantization such as Q4_K_M brings it to a little over 40 GB, which fits, as the 7.53 tokens/second result in the table confirms.
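A quick way to check whether a model fits is to multiply its parameter count by the bytes stored per weight. The sketch below uses approximate parameter counts and bits-per-weight figures (Q4_K_M mixes block types, so its figure is an approximate average) and reserves a hypothetical 25% of memory for the KV cache, macOS, and other apps. That headroom value is an assumption for illustration, not a documented limit.

```python
# Back-of-the-envelope check: do a model's weights fit in unified memory?
# All figures are approximations; HEADROOM is a hypothetical safety margin.

MEMORY_GB = 96.0  # configuration discussed in the article
HEADROOM = 0.25   # hypothetical share reserved for KV cache, macOS and other apps

# Approximate bits per weight; Q4_K_M is an average because it mixes block types.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}

# Approximate parameter counts in billions.
MODELS_B = {"Llama 2 7B": 6.7, "Llama 3 8B": 8.0, "Llama 3 70B": 70.6}


def weights_gb(params_billions: float, fmt: str) -> float:
    """Approximate size of the weights in GB (10**9 bytes)."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8


def fits(params_billions: float, fmt: str,
         memory_gb: float = MEMORY_GB, headroom: float = HEADROOM) -> bool:
    """True if the weights fit in memory after reserving the headroom."""
    return weights_gb(params_billions, fmt) <= memory_gb * (1 - headroom)


if __name__ == "__main__":
    for name, params in MODELS_B.items():
        for fmt in BITS_PER_WEIGHT:
            size = weights_gb(params, fmt)
            verdict = "fits" if fits(params, fmt) else "does NOT fit"
            print(f"{name:12s} {fmt:7s} ~{size:6.1f} GB  {verdict}")
```

Under these assumptions, both small models fit even at F16, while Llama 3 70B fits only once quantized to around 4 bits, which matches the pattern in the benchmark table.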

Overcoming Memory Limitations: Offloading to External Memory

Putting the Data On a Diet: Compression and Offloading

Just like you might compress your travel souvenirs to fit more in your suitcase, you can compress the data used by your LLM.

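- Data Compression: For LLMs, the compression that matters is quantization of the model's weights. A 4-bit version of Llama 3 70B needs a little over 40 GB instead of roughly 140 GB at F16.

- Offloading to External Memory: If a model doesn't fit in the M3 Max's memory, part of it can live on external storage instead. Runtimes such as llama.cpp memory-map the model file, so the operating system can page weights in from a fast SSD as needed; a network-attached storage (NAS) device would be slower still.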

Note: Offloading can make a model that doesn't fit in memory runnable at all, but it dramatically reduces speed, because weights must be read from storage that is far slower than unified memory.
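To see why the slowdown is so severe, consider a rough model in which the part of the weights that fits is read at unified-memory bandwidth and the rest is streamed from storage for every token. The resident-memory budget and SSD throughput below are hypothetical figures chosen for illustration; real drives and runtimes behave differently (caching, access patterns), so treat the result as an order-of-magnitude estimate only.

```python
# Order-of-magnitude estimate of per-token time when weights spill to storage.
# Assumptions: every weight is read once per token; the resident part is read at
# memory bandwidth and the spilled part at (hypothetical) storage throughput.


def seconds_per_token(model_gb: float, resident_budget_gb: float,
                      memory_bw_gb_s: float, storage_bw_gb_s: float) -> float:
    """Time to read all weights once, split between memory and storage."""
    resident = min(model_gb, resident_budget_gb)
    spilled = max(0.0, model_gb - resident_budget_gb)
    return resident / memory_bw_gb_s + spilled / storage_bw_gb_s


if __name__ == "__main__":
    MODEL_GB = 140.0    # Llama 3 70B at F16: roughly 70 billion params x 2 bytes
    RESIDENT_GB = 72.0  # hypothetical share of 96 GB usable for weights
    MEMORY_BW = 400.0   # GB/s, M3 Max figure quoted in the article
    SSD_BW = 5.0        # GB/s, hypothetical sustained SSD read throughput

    t = seconds_per_token(MODEL_GB, RESIDENT_GB, MEMORY_BW, SSD_BW)
    print(f"~{t:.1f} s per token (~{1 / t:.2f} tokens/s)")
```

On these assumptions the F16 model takes well over 10 seconds per token, compared with the 7.53 tokens/second measured for the Q4_K_M build that fits entirely in memory. This is why quantizing first is usually the better option.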

Limitation 3: M3 Max Lacks a Datacenter-Class AI Accelerator

AI accelerators, like specialized GPUs, speed up AI processing by offloading it from the main CPU. Think of them as a dedicated team of super-fast workers just for your AI projects. The M3 Max does include a 16-core Neural Engine, but popular LLM runtimes such as llama.cpp and MLX run on its GPU instead, and that GPU lacks dedicated matrix-math units comparable to NVIDIA's Tensor Cores. This can lead to slower speeds than on a dedicated AI-optimized chip.

Dedicated AI Acceleration: The Key to Faster Performance

Specialized AI accelerators can significantly boost the performance of AI tasks. These accelerators are designed to handle the complex calculations involved in training and running large models.

For example, the NVIDIA A100 GPU is widely used in AI research and development because of its Tensor Cores, which accelerate the matrix math at the heart of neural networks, and its high-bandwidth memory (roughly 1.5 to 2 TB/s, versus the M3 Max's 400 GB/s). While the M3 Max has a powerful GPU, it lacks these AI-specific optimizations.

Overcoming the Lack of a Dedicated AI Accelerator: Software Optimization

Software Optimization: Making the Most of What You Have

While you can't add a dedicated AI accelerator to the M3 Max, you can optimize the software running on it.

- Compilers and model converters: Use toolchains that optimize models for Apple silicon. For example, Apple's Core ML Tools (coremltools) converts models to Core ML, which can schedule work across the CPU, GPU, and Neural Engine.

- Libraries: Use libraries optimized for the M3 Max's GPU, such as Apple's MLX, llama.cpp with its Metal backend, or PyTorch's MPS backend. These can be much faster than generic, CPU-only code paths.

Note: Software optimization can be a complex process, but it can lead to significant performance gains.
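As one concrete example of using a GPU-optimized library, PyTorch exposes the Apple-silicon GPU through its Metal Performance Shaders (MPS) backend. The sketch below picks the best available device and falls back to the CPU, including when PyTorch is not installed. The helper name `pick_device` is hypothetical; `torch.backends.mps.is_available()` and `torch.cuda.is_available()` are standard PyTorch calls.

```python
# Choose the fastest available PyTorch device, preferring Apple's MPS (Metal) backend.
# pick_device is a hypothetical helper; it returns "cpu" if PyTorch is missing.


def pick_device() -> str:
    try:
        import torch
    except ImportError:
        return "cpu"
    mps = getattr(torch.backends, "mps", None)  # absent in very old PyTorch versions
    if mps is not None and mps.is_available():  # Apple-silicon GPU via Metal
        return "mps"
    if torch.cuda.is_available():               # NVIDIA GPU, e.g. on a Linux machine
        return "cuda"
    return "cpu"


if __name__ == "__main__":
    device = pick_device()
    print(f"Using device: {device}")
    try:
        import torch

        x = torch.randn(1024, 1024, device=device)
        y = x @ x  # the matrix multiply runs on the chosen device
        print(y.shape)
    except ImportError:
        pass  # PyTorch not installed; nothing to run
```

Writing code against a device string like this keeps the same script usable on an M3 Max, an NVIDIA machine, or a plain CPU.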

Limitation 4: Limited Support for Open-Source LLMs

The M3 Max relies on Apple's own software stack (Metal) for GPU acceleration, while much of the open-source AI ecosystem is built first for NVIDIA's CUDA. Open models such as Llama run well through projects like llama.cpp and Apple's MLX, but CUDA-only tools will not run, and support for the newest models or features can lag behind.

Open-Source LLMs: The Power of Community

Open-source LLMs are like a collaborative effort - a community contributing to building and improving models. This collaboration often leads to faster advancements and greater accessibility.

Note: While the M3 Max may currently have limitations with open-source LLMs, the community is actively developing and improving support for different platforms, so this limitation may become less significant over time.

Overcoming the Open-Source LLM Limitation: Exploring Alternative Platforms

Exploring Alternative Platforms: Expanding Your Options

Consider expanding your work to platforms that provide wider support for open-source LLMs.

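- Linux: Linux is a popular operating system known for its robust open-source ecosystem, and most CUDA-based tooling targets Linux machines with NVIDIA GPUs. Some open-source LLM tools may require a system other than macOS.

Note: On the M3 Max itself, this is harder than it sounds: native Linux on Apple silicon depends on community projects such as Asahi Linux, whose hardware support lags behind new chips, and a Linux virtual machine on macOS typically cannot use the GPU for compute. In practice, this often means a separate Linux machine or a cloud instance, which adds complexity to your workflow.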

Limitation 5: Cost Considerations: Not the Most Budget-Friendly Option

While the M3 Max offers impressive performance, it's a premium chip. This high cost might make it less appealing for budget-conscious individuals or those who need to run large models on multiple devices.

Cost vs Performance: Balancing Your Needs

When choosing a device for AI work, it's crucial to balance cost with performance. The M3 Max provides high performance, but it comes at a premium price.

Note: You might consider alternative devices or cloud-based solutions that offer a better price-to-performance ratio, depending on your specific needs and budget.

FAQ

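Q: Can I run the Llama 3 70B model on the M3 Max?

A: Yes, with quantization. At F16, its weights alone need roughly 140 GB, more than the M3 Max's 96GB of unified memory, but the 4-bit Q4_K_M version fits and generates about 7.53 tokens/second, as the table above shows. Running the F16 version by offloading to external storage is possible in principle, but it would be dramatically slower.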

Q: Is quantization really necessary for LLMs?

A: Not always: smaller models such as Llama 2 7B and Llama 3 8B run at F16 on the M3 Max. But quantization can be a game-changer for large LLMs on memory-limited devices: it significantly improves token generation speed and lets you run larger models, or even fine-tune them.

Q: What's the best alternative to the M3 Max for AI work?

A: That depends on your specific needs. Devices like the NVIDIA A100 GPU are specifically designed for AI tasks and offer exceptional performance. However, they are expensive, so consider cloud-based services as a more budget-friendly alternative.

Q: Is there any future for open-source LLMs on Apple silicon?

A: Yes. Open-source projects such as llama.cpp and Apple's own MLX framework already run open models like Llama on Apple silicon, and compatibility and performance continue to improve as Apple updates its hardware and software.

Keywords

Apple M3 Max, AI, LLM, Llama 2, Llama 3, token generation speed, quantization, memory limitations, AI accelerator, open-source LLMs, software optimization, cost considerations, alternative platforms, NVIDIA A100, GPU, cloud-based services