5 Limitations of Apple M3 for AI (and How to Overcome Them)

Introduction

The Apple M3 chip, with its enhanced performance, next-level graphics, and energy efficiency, holds immense promise for accelerating AI workloads. But just like any other technology, it's not without its limitations when it comes to running large language models (LLMs) locally. While the M3 is a powerhouse for many tasks, it's crucial to understand its limitations and explore strategies to optimize your AI experience.

This article dives into the specific limitations of the M3 chip for AI, particularly for running LLMs like Llama 2 7B. We'll use specific performance data to illustrate these limitations and then discuss various solutions to overcome them. Buckle up, geeks!

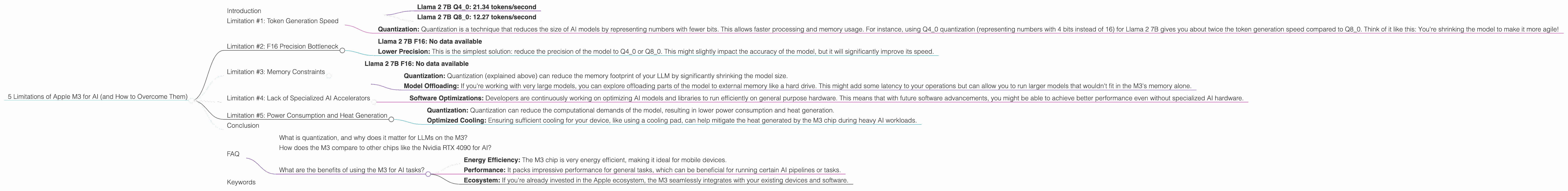

Limitation #1: Token Generation Speed

The M3 chip, despite its processing power, can struggle to generate tokens quickly, even with quantized versions of models like Llama 2 7B.

Let's break down the numbers. The M3 has a theoretical memory bandwidth of 100 GB/s and 10 GPU cores, yet its token generation rate is relatively slow, even when Llama 2 7B is quantized to Q4_0 or Q8_0.

Comparison:

- Llama 2 7B Q4_0: 21.34 tokens/second

- Llama 2 7B Q8_0: 12.27 tokens/second

This means that even with a powerful chip like the M3, you might experience some lag when generating text, especially for longer sequences.
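To put those rates in perspective, here is a small back-of-the-envelope sketch. It only divides a token count by the measured rates above; the 500-token response length is an assumption picked for illustration, and prompt processing time is ignored.

```python
# Measured token generation rates from the comparison above (tokens/second).
RATES = {"Q4_0": 21.34, "Q8_0": 12.27}


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady rate, ignoring prompt processing."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    response_tokens = 500  # assumed response length, not from the benchmark
    for name, rate in RATES.items():
        seconds = generation_seconds(response_tokens, rate)
        print(f"{name}: {seconds:.1f} s for {response_tokens} tokens")
```

At these rates a 500-token answer takes roughly 23 seconds with Q4_0 and roughly 41 seconds with Q8_0.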

How to overcome this limitation:

- Quantization: Quantization is a technique that reduces the size of AI models by representing numbers with fewer bits. This speeds up processing and lowers memory usage. For instance, Q4_0 quantization (roughly 4 bits per weight instead of 16) gives Llama 2 7B about 1.7 times the token generation speed of Q8_0 (21.34 versus 12.27 tokens/second). Think of it like this: You're shrinking the model to make it more agile!
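For the curious, the idea behind quantization can be sketched in a few lines. This is a simplified illustration, not the exact storage layout any library uses: it assumes weights are grouped into blocks that share one scale factor, and the block size of 32 and 16-bit scale are assumptions chosen to resemble common 4-bit and 8-bit formats.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to small signed integers that share one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


def bits_per_weight(bits, block_size=32, scale_bits=16):
    """Average storage cost once the shared scale is spread over the block."""
    return bits + scale_bits / block_size


if __name__ == "__main__":
    block = [0.7, -0.3, 0.1, 0.0]  # made-up example weights
    scale, codes = quantize_block(block)
    print(codes, dequantize_block(scale, codes))
    print(bits_per_weight(4), bits_per_weight(8))
```

Each weight is stored as a tiny integer plus a shared scale, so the restored values are close to the originals but not identical. That small rounding error is the accuracy you trade for speed.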

Limitation #2: F16 Precision Bottleneck

The M3 chip might not be the ideal choice for running large language models at 16-bit floating-point precision (F16).

Explanation: F16 stores every weight in 16 bits. That preserves more of the model's accuracy than 4-bit or 8-bit quantization, but it also makes the model several times larger. Generating each token requires reading the model's weights from memory, so the bigger the model, the slower each token arrives. The M3 chip, though powerful, might not be able to keep up with the demands of F16 precision for larger models, especially when dealing with token generation.

Comparison:

- Llama 2 7B F16: No data available

The missing figure doesn't prove anything on its own, but it suggests that the M3 chip might not be able to handle Llama 2 7B with F16 precision at a reasonable speed.
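Since there is no F16 measurement, a rough ceiling can be estimated instead. The sketch below assumes token generation is limited by memory bandwidth, meaning every weight is read once per generated token. The 7 billion parameter count and the bits-per-weight figures (which include an assumed per-block scale for the quantized formats) are approximations, not benchmark data.

```python
BANDWIDTH_GB_S = 100.0  # theoretical M3 bandwidth quoted in Limitation #1
PARAMS = 7e9            # assumption: "7B" rounded to 7 billion weights

# Assumed average storage cost per weight, including block scales.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_gigabytes(params: float, bits: float) -> float:
    """Size of the model weights in GB (10^9 bytes)."""
    return params * bits / 8 / 1e9


def max_tokens_per_second(bandwidth_gb_s: float, params: float, bits: float) -> float:
    """Upper bound on generation speed if all weights are read once per token."""
    return bandwidth_gb_s / weight_gigabytes(params, bits)


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        bound = max_tokens_per_second(BANDWIDTH_GB_S, PARAMS, bits)
        print(f"{name}: {weight_gigabytes(PARAMS, bits):.1f} GB, at most {bound:.1f} tokens/s")
```

Under these assumptions the ceiling works out to roughly 25 tokens/second for Q4_0, 13 for Q8_0, and only about 7 for F16. The measured 21.34 and 12.27 tokens/second sit just under their ceilings, which hints that F16 would land well below either of them.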

How to overcome this limitation:

- Lower Precision: This is the simplest solution: switch to a Q4_0 or Q8_0 quantized version of the model. This might slightly reduce the model's accuracy, but it will significantly improve its speed.

Limitation #3: Memory Constraints

The M3 chip's memory, while substantial, can still be a bottleneck for running large language models with high precision.

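Explanation: LLMs, especially those with high precision, require a significant amount of RAM. At F16, the weights of a 7-billion-parameter model alone take about 14 GB, before counting the context cache. The M3's unified memory is shared between the CPU, the GPU, and everything else running on the machine, so it might not be enough to handle the memory requirements of larger models with F16 precision.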

Comparison:

- Llama 2 7B F16: No data available

The lack of data suggests that running Llama 2 7B with F16 precision on the M3 could face memory challenges, especially if you're dealing with long context lengths.
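A quick estimate shows how the pieces add up. This is a sketch under stated assumptions: 7 billion weights, a Llama 2 7B layout of 32 layers with a hidden size of 4096, a 16-bit context (KV) cache, and hypothetical `ram_gib` and `reserved_gib` values standing in for your machine's memory and what the system keeps for itself.

```python
GIB = 2 ** 30


def weights_gib(params: float, bits_per_weight: float) -> float:
    """Memory needed for the model weights, in GiB."""
    return params * bits_per_weight / 8 / GIB


def kv_cache_gib(context_tokens: int, n_layers: int = 32,
                 hidden_size: int = 4096, bytes_per_value: int = 2) -> float:
    """Memory for cached keys and values: 2 tensors per layer per token."""
    return 2 * n_layers * hidden_size * bytes_per_value * context_tokens / GIB


def fits(ram_gib: float, weights: float, cache: float, reserved_gib: float = 2.0) -> bool:
    """True if weights plus cache fit after reserving memory for the system."""
    return weights + cache <= ram_gib - reserved_gib


if __name__ == "__main__":
    ram_gib = 8.0  # hypothetical machine; adjust to your configuration
    cache = kv_cache_gib(4096)
    for name, bits in {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}.items():
        w = weights_gib(7e9, bits)
        print(f"{name}: weights {w:.2f} GiB + cache {cache:.2f} GiB, fits: {fits(ram_gib, w, cache)}")
```

With these numbers, F16 weights come to about 13 GiB and a full 4,096-token cache adds another 2 GiB, which is why the precision you pick matters so much on a memory-limited machine.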

How to overcome this limitation:

- Quantization: Quantization (explained above) can reduce the memory footprint of your LLM by significantly shrinking the model size.

- Model Offloading: If you're working with very large models, you can explore offloading parts of the model to storage, such as the internal SSD or an external drive, and loading them only when needed. Storage is much slower than RAM, so this adds latency to your operations, but it can allow you to run larger models that wouldn't fit in the M3's memory alone.
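One common way to approximate offloading is memory mapping, where the operating system pulls parts of a file into RAM only when they are touched. The sketch below illustrates the mechanism with Python's standard `mmap` module and a small made-up weights file. The file layout (raw little-endian 32-bit floats) and the helper names are hypothetical, not the format of any real model file.

```python
import mmap
import os
import struct
import tempfile


def write_fake_weights(path: str, count: int) -> None:
    """Write `count` little-endian float32 values (0.0, 1.0, ...) to a file."""
    with open(path, "wb") as f:
        for i in range(count):
            f.write(struct.pack("<f", float(i)))


def read_slice(path: str, start: int, length: int) -> list:
    """Read `length` floats starting at index `start` without loading the whole file."""
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
            raw = mm[start * 4:(start + length) * 4]  # only these bytes are copied
    return list(struct.unpack(f"<{length}f", raw))


def demo() -> list:
    """Create a temporary weights file and read three values from the middle."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "weights.bin")
        write_fake_weights(path, 1000)
        return read_slice(path, 10, 3)


if __name__ == "__main__":
    print(demo())
```

The trade-off is the one described above: anything not already in RAM has to come from storage first, so the first access to each part of the model is slow.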

Limitation #4: Lack of Specialized AI Accelerators

The M3 chip, while powerful for general computation, lacks some of the specialized hardware that dedicated AI GPUs use to speed up LLM workloads.

Explanation: Unlike GPUs built with AI in mind, the M3's GPU has no equivalent of tensor cores. The chip does include Apple's Neural Engine, but common local LLM runtimes typically run on the CPU and GPU rather than on it. In practice, this means your LLM is mostly processed on general-purpose cores, which might not be as efficient for AI workloads.

How to overcome this limitation:

- Software Optimizations: Developers are continuously working on optimizing AI models and libraries to run efficiently on general purpose hardware. This means that with future software advancements, you might be able to achieve better performance even without specialized AI hardware.

Limitation #5: Power Consumption and Heat Generation

The M3 chip's high performance can lead to higher power consumption and heat generation, especially when running large language models.

Explanation: Running LLMs, particularly those with high precision, can be computationally intensive, leading to the M3 chip using more power and generating more heat. This can be problematic for mobile devices where battery life and thermal limitations are concerns.

How to overcome this limitation:

- Quantization: Quantization can reduce the computational demands of the model, resulting in lower power consumption and heat generation.

- Optimized Cooling: Ensuring sufficient cooling for your device, like using a cooling pad, can help mitigate the heat generated by the M3 chip during heavy AI workloads.
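The link between speed and battery drain can be made concrete with simple arithmetic: energy per token is power divided by tokens per second. In the sketch below the token rates are the measured ones from Limitation #1, but the 15-watt figure is a hypothetical placeholder, not a measurement, and the sketch assumes power draw is the same for both quantization levels.

```python
def joules_per_token(power_watts: float, tokens_per_second: float) -> float:
    """Energy spent per generated token at a steady power draw."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return power_watts / tokens_per_second


if __name__ == "__main__":
    assumed_power_watts = 15.0  # hypothetical placeholder, not a measured value
    for name, rate in {"Q4_0": 21.34, "Q8_0": 12.27}.items():
        energy = joules_per_token(assumed_power_watts, rate)
        print(f"{name}: {energy:.2f} J per token")
```

Whatever the real wattage turns out to be on your machine, the faster configuration spends less energy per token as long as the power draw is similar.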

Conclusion

The Apple M3 chip is a powerful engine for AI, but it's important to be aware of its limitations. By understanding these limitations and implementing the strategies outlined above, you can optimize your AI workflow and get the most out of your M3 device. Remember, using quantization, exploring software optimizations, and ensuring proper cooling are essential steps to overcome these limitations and unleash the full potential of the M3 chip for AI.

FAQ

What is quantization, and why does it matter for LLMs on the M3?

Quantization is like a diet for your AI model. It reduces the model's size by representing numbers with fewer bits, similar to how a diet can reduce your weight. This makes the model faster and more memory-efficient, which is crucial for the M3 chip, especially when dealing with large models and limited memory.

How does the M3 compare to other chips like the Nvidia RTX 4090 for AI?

The Nvidia RTX 4090 is a high-end desktop GPU, and it's a monster for AI workloads. It has specialized AI accelerators (tensor cores) and 24 GB of fast dedicated video memory, making it significantly faster for training and inference of models that fit in that memory. The M3 chip, while powerful, can't compete with the dedicated AI hardware in the RTX 4090, though it uses far less power.

What are the benefits of using the M3 for AI tasks?

The M3 chip offers many advantages for AI tasks despite the aforementioned limitations:

- Energy Efficiency: The M3 chip is very energy efficient, making it ideal for mobile devices.

- Performance: It packs impressive performance for general tasks, which can be beneficial for running certain AI pipelines or tasks.

- Ecosystem: If you're already invested in the Apple ecosystem, the M3 seamlessly integrates with your existing devices and software.

Keywords

Apple M3, AI, Llama 2, Large Language Models, LLM, Quantization, Token Generation, F16, Q4_0, Q8_0, Memory, Memory Constraints, GPU Cores, Bandwidth, Tensor Cores, AI Accelerators, Power Consumption, Heat Generation, Software Optimization, Nvidia RTX 4090