5 Key Factors to Consider When Choosing Between NVIDIA RTX A6000 48GB and NVIDIA L40S 48GB for AI

Introduction

The world of large language models (LLMs) is exploding, offering incredible capabilities for natural language processing, code generation, and a whole lot more. But running these massive models locally requires some serious hardware firepower. Two popular choices for developers and AI enthusiasts are the NVIDIA RTX A6000 48GB and the NVIDIA L40S 48GB.

This article walks through the five key factors to consider when deciding which GPU fits your LLM workload. We'll look closely at benchmark performance, hardware capabilities, and the kinds of models you'll be running. Get ready to unleash the power of your LLMs!

Performance Analysis: A Head-to-Head Comparison

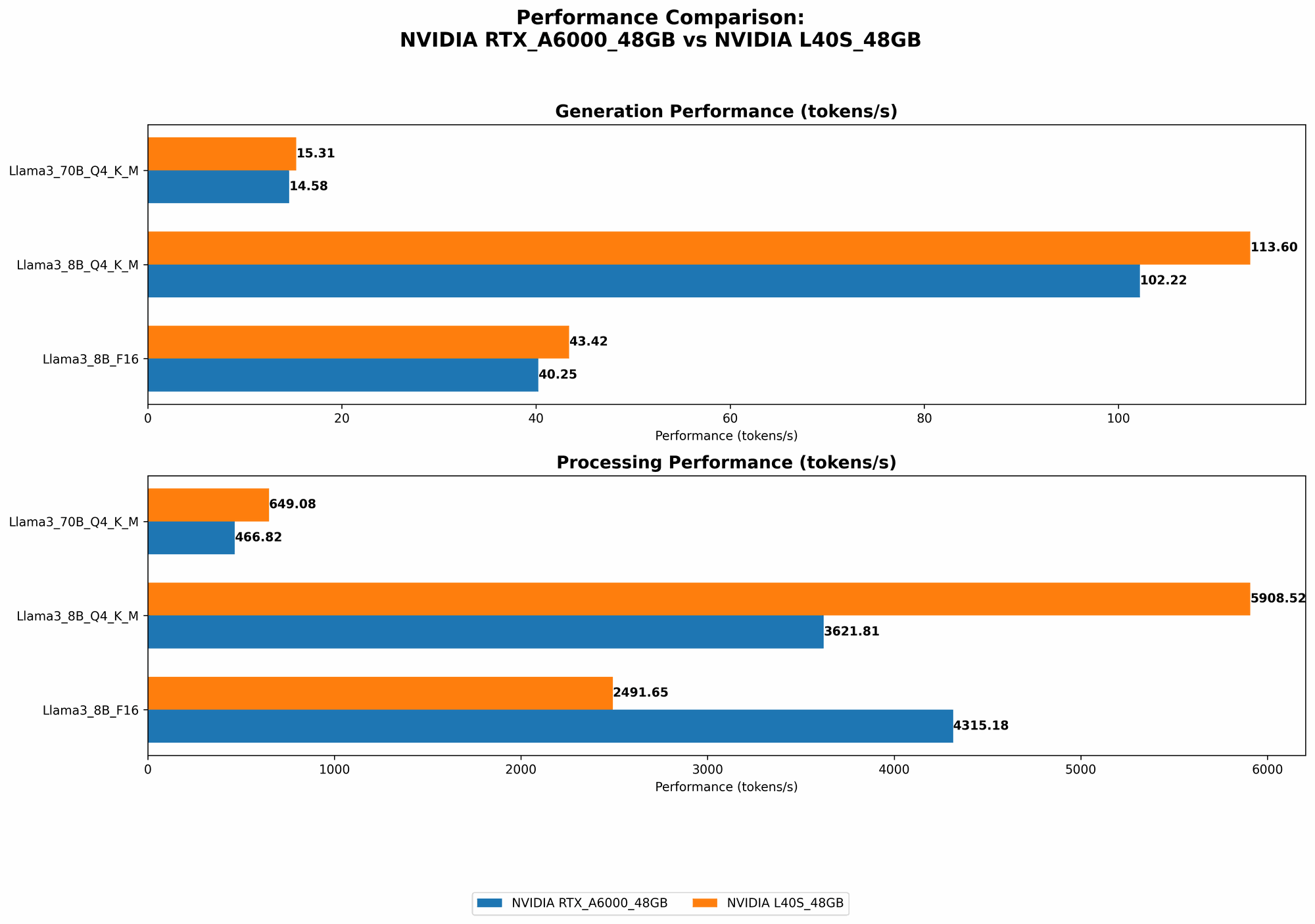

Comparison of NVIDIA RTX A6000 48GB and NVIDIA L40S 48GB Performance for Llama 3 8B Models

Let's kick things off with Llama 3 8B. It's a popular choice for developers because it's relatively small, which makes it easier to run on a local GPU. The table below compares the two GPUs with 4-bit quantized weights (Q4_K_M) and with 16-bit floating-point weights (F16):

| Metric (tokens/second) | NVIDIA RTX A6000 48GB | NVIDIA L40S 48GB |
|---|---|---|
| Llama3 8B Q4_K_M Generation | 102.22 | 113.6 |
| Llama3 8B F16 Generation | 40.25 | 43.42 |
| Llama3 8B Q4_K_M Processing | 3621.81 | 5908.52 |
| Llama3 8B F16 Processing | 4315.18 | 2491.65 |

Analysis

- Generation Speed: The L40S outperforms the A6000 in both modes, by roughly 11% with Q4_K_M (113.6 vs. 102.22 tokens/second) and roughly 8% with F16 (43.42 vs. 40.25 tokens/second). You'll get faster text generation with the L40S, but the margin is modest.

- Processing Speed: With Q4_K_M, the L40S processes tokens about 63% faster than the A6000 (5908.52 vs. 3621.81 tokens/second). With F16 the result reverses: the A6000 is about 73% faster (4315.18 vs. 2491.65 tokens/second).

Key takeaway: The L40S is the better choice for Llama 3 8B if you need faster text generation or run quantized models. If processing speed is your priority and you run in F16 mode, the A6000 is the faster card in this benchmark.

Comparison of NVIDIA RTX A6000 48GB and NVIDIA L40S 48GB Performance for Llama 3 70B Models

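Now, let's move on to the more demanding Llama 3 70B model. It is significantly larger and therefore needs far more memory and compute. The F16 rows below are N/A: at 2 bytes per parameter, the F16 weights alone need roughly 140 GB, which does not fit in 48GB. The table below shows the performance comparison: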

| Metric (tokens/second) | NVIDIA RTX A6000 48GB | NVIDIA L40S 48GB |
|---|---|---|
| Llama3 70B Q4_K_M Generation | 14.58 | 15.31 |
| Llama3 70B F16 Generation | N/A | N/A |
| Llama3 70B Q4_K_M Processing | 466.82 | 649.08 |
| Llama3 70B F16 Processing | N/A | N/A |

Analysis

- Generation Speed: As with the 8B model, the L40S is slightly faster for the 70B model, by about 5% (15.31 vs. 14.58 tokens/second).

- Processing Speed: The L40S again beats the A6000, this time by about 39% (649.08 vs. 466.82 tokens/second).

Key Takeaway: With quantized weights, the L40S is the more consistent performer for both smaller and larger Llama 3 models. For 70B models it wins both measurements, clearly on processing speed and narrowly on generation speed.
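The gaps between the two cards are easier to compare as percentages than as raw tokens/second. The sketch below recomputes them from the figures in the two tables above; the function and variable names are illustrative, not part of any benchmark tool.

```python
# Benchmark figures copied from the two tables above, in tokens/second.
# Each entry is (RTX A6000, L40S). Names here are illustrative only.
RESULTS = {
    "Llama3 8B Q4_K_M Generation": (102.22, 113.6),
    "Llama3 8B F16 Generation": (40.25, 43.42),
    "Llama3 8B Q4_K_M Processing": (3621.81, 5908.52),
    "Llama3 8B F16 Processing": (4315.18, 2491.65),
    "Llama3 70B Q4_K_M Generation": (14.58, 15.31),
    "Llama3 70B Q4_K_M Processing": (466.82, 649.08),
}


def l40s_advantage_pct(a6000: float, l40s: float) -> float:
    """Percent by which the L40S is faster; negative means it is slower."""
    return (l40s / a6000 - 1.0) * 100.0


def summarize(results: dict) -> dict:
    """Map each metric name to the L40S advantage, rounded to one decimal."""
    return {
        name: round(l40s_advantage_pct(a6000, l40s), 1)
        for name, (a6000, l40s) in results.items()
    }


if __name__ == "__main__":
    for name, pct in summarize(RESULTS).items():
        leader = "L40S" if pct > 0 else "A6000"
        print(f"{name:32s} {pct:+6.1f}%  (faster: {leader})")
```

A negative value means the L40S is slower on that metric, which in these tables happens only for F16 processing on the 8B model.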

5 Key Factors to Consider When Choosing Between NVIDIA RTX A6000 48GB and NVIDIA L40S 48GB for AI

Now let's break down the five key factors that influence your decision:

Performance: As we've seen, the L40S is faster in every benchmark above except F16 processing on the 8B model, where the A6000 leads. Its advantage is largest in quantized processing (about 39% to 63%) and smaller in generation (about 5% to 11%).

Memory: Both GPUs have 48GB of GDDR6 memory with ECC, so capacity does not separate them. Capacity is crucial for large LLMs because the model's weights, its KV cache, and its activations all have to fit. In the benchmarks above, both cards ran Llama 3 70B at Q4_K_M, but neither has an F16 result for the 70B model: at 2 bytes per parameter, its F16 weights alone need roughly 140 GB.
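A quick way to check whether a model is likely to fit is to estimate the size of its weights. The sketch below is a rough estimate under stated assumptions: the parameter counts are approximate, the Q4_K_M figure of about 4.85 bits per weight is an approximation rather than an exact constant, and the result covers weights only, not the KV cache or activations.

```python
VRAM_GB = 48.0  # capacity of both cards, treated as decimal gigabytes

# Approximate parameter counts (assumptions for illustration).
MODELS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.6e9}

# Average bits stored per weight. The Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Estimated size of the weights alone, in decimal gigabytes."""
    return n_params * bits_per_weight / 8.0 / 1e9


def weights_fit(n_params: float, bits_per_weight: float,
                vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone fit; real usage needs extra headroom."""
    return weight_memory_gb(n_params, bits_per_weight) < vram_gb


if __name__ == "__main__":
    for model, n_params in MODELS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_memory_gb(n_params, bits)
            verdict = "fits" if weights_fit(n_params, bits) else "does not fit"
            print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in 48GB")
```

Under these assumptions, the 70B model fits only in its quantized form, and even then it leaves limited room for context.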

Power Consumption: The L40S draws more power than the A6000. NVIDIA rates it at 350 W maximum board power, versus 300 W for the RTX A6000. That means more heat to remove and a larger power budget, so factor it into your power supply, your cooling, and your electricity bill.
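To put power draw in perspective, it helps to look at energy per generated token rather than watts alone. The sketch below is a back-of-the-envelope estimate: it assumes each card runs at its rated board power limit (300 W and 350 W, used as a worst case rather than measured draw), and the electricity price is a hypothetical placeholder.

```python
# Assumed inputs, not measurements: board power limits from NVIDIA's
# spec sheets, used as a worst-case stand-in for real power draw.
ASSUMED_POWER_W = {"RTX A6000": 300.0, "L40S": 350.0}

# Llama 3 70B Q4_K_M generation speed from the table above (tokens/second).
GENERATION_TPS = {"RTX A6000": 14.58, "L40S": 15.31}

PRICE_PER_KWH = 0.15  # hypothetical electricity price; substitute your own


def energy_wh(power_w: float, tokens: int, tokens_per_second: float) -> float:
    """Watt-hours needed to generate `tokens` at a constant power draw."""
    seconds = tokens / tokens_per_second
    return power_w * seconds / 3600.0


def energy_cost(power_w: float, tokens: int, tokens_per_second: float,
                price_per_kwh: float = PRICE_PER_KWH) -> float:
    """Electricity cost of generating `tokens`, in the price's currency."""
    return energy_wh(power_w, tokens, tokens_per_second) / 1000.0 * price_per_kwh


if __name__ == "__main__":
    tokens = 1_000_000
    for gpu, watts in ASSUMED_POWER_W.items():
        tps = GENERATION_TPS[gpu]
        kwh = energy_wh(watts, tokens, tps) / 1000.0
        print(f"{gpu}: ~{kwh:.2f} kWh, ~{energy_cost(watts, tokens, tps):.2f} "
              f"per {tokens:,} generated tokens")
```

Measured draw during inference is usually below the board limit, so treat the output as an upper bound and replace the assumed wattages with readings from your own system.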

Price: The NVIDIA L40S is generally more expensive than the RTX A6000. You'll need to weigh the performance gains against your budget when making a decision.

Availability: The RTX A6000 is a well-established GPU and more readily available. The L40S is a newer model that might be harder to get your hands on, especially during shortages. Check with vendors for availability before making your purchase.

Use Case Considerations

Research and Development: If you're experimenting with different LLM models and require the fastest possible throughput, the L40S is a strong contender.

Production Deployment: If you're deploying LLMs for production use and need to optimize for cost-effectiveness, the A6000 might be a better option, especially if your workload is dominated by F16 processing, where the A6000 led in the 8B benchmark.

Budget-Constrained Environments: If you're on a tighter budget, the A6000 is the more affordable option and still delivers strong performance.

Choosing the Best GPU for You

The choice between the NVIDIA RTX A6000 48GB and NVIDIA L40S 48GB ultimately depends on your specific requirements and budget. If you prioritize performance and are willing to pay a premium, the L40S is a great choice. But if you're looking for a more budget-friendly option with solid performance, the A6000 is a worthy contender.

Quantization: Making LLMs More Accessible

Quantization is a technique that reduces the precision of the weights and activations in a neural network, thereby decreasing the memory footprint and computational requirements.

Think of it like this: imagine you have a very detailed map of a city. You can reduce the size of the map by using less precise details, like lines instead of detailed roads. You lose some precision, but the map is much smaller and easier to use.

LLMs have billions of weights, which take up a lot of memory. Quantization stores these weights with fewer bits each, making the model smaller and faster to load and process. This means you can run larger models on less powerful hardware, making LLMs accessible to a broader audience.
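The idea can be shown with a toy example. The sketch below applies simple block-wise 4-bit quantization with a single scale per block; it is a simplified illustration of the general technique, not the actual Q4_K_M format, and the function names are made up for this example.

```python
def quantize_block(weights: list, bits: int = 4) -> tuple:
    """Quantize one block of floats to signed integers plus a shared scale."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for signed 4-bit values
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: list) -> list:
    """Recover approximate float weights from the integers and the scale."""
    return [scale * q for q in quantized]


if __name__ == "__main__":
    block = [0.12, -0.5, 0.33, 0.07, -0.21, 0.44, -0.02, 0.5]
    scale, q = quantize_block(block)
    restored = dequantize_block(scale, q)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("integers:", q)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each weight is stored as a small integer, and the rounding error per weight is at most half the scale. That error is the precision traded away for the smaller footprint.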

Conclusion

The NVIDIA RTX A6000 48GB and NVIDIA L40S 48GB are both powerful GPUs that excel at running large language models. The L40S is faster in most of the benchmarks above, while the A6000 provides a more budget-friendly option and leads in F16 processing on the 8B model.

Ultimately, the best choice for you depends on your specific needs and budget. Consider the five key factors discussed in this article to make an informed decision.

FAQ

What are LLMs?

LLMs are advanced AI models trained on vast amounts of text data. They are capable of generating text, answering questions, translating languages, and much more.

What is the difference between generation and processing?

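- Generation refers to producing new output tokens, one at a time, after the model has read the prompt. Its speed determines how quickly a response appears.

- Processing refers to reading the input you give the model (the prompt) before any output is produced. Its speed determines how long you wait before the first output token, which matters most for long prompts.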
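The two speeds combine into the total time for a single request. The sketch below uses a simplified model that assumes both rates stay constant regardless of prompt length, which real systems only approximate; the workload of 2,000 prompt tokens and 500 output tokens is a hypothetical example.

```python
# Llama 3 70B Q4_K_M figures from the benchmark table (tokens/second).
SPEEDS = {
    "RTX A6000": {"processing": 466.82, "generation": 14.58},
    "L40S": {"processing": 649.08, "generation": 15.31},
}


def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Seconds to read the prompt plus seconds to generate the output."""
    prompt_time = prompt_tokens / processing_tps
    output_time = output_tokens / generation_tps
    return prompt_time + output_time


if __name__ == "__main__":
    prompt_tokens, output_tokens = 2000, 500  # hypothetical workload
    for gpu, s in SPEEDS.items():
        total = response_time_s(prompt_tokens, output_tokens,
                                s["processing"], s["generation"])
        print(f"{gpu}: ~{total:.1f} s for {prompt_tokens} prompt tokens "
              f"and {output_tokens} output tokens")
```

For a workload like this one, generation dominates the total, so a large gap in processing speed translates into a much smaller gap in end-to-end time.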

Can I run LLMs on my laptop?

It depends on the size of the LLM and your laptop's specifications. Some smaller LLMs can be run on high-end laptops with dedicated GPUs, but larger models typically require more powerful GPUs.

What about other GPUs?

While this article focuses on the RTX A6000 and L40S, other GPUs are also capable of running LLMs. You can research and compare different options based on your specific needs and budget.
