5 Key Factors to Consider When Choosing Between NVIDIA RTX A6000 48GB and NVIDIA A100 SXM 80GB for AI

Introduction

Welcome to the fascinating world of Large Language Models (LLMs)! If you're a developer diving into the exciting realm of local LLM deployments, choosing the right hardware is crucial. This article will guide you through the key considerations when deciding between two popular GPUs: the NVIDIA RTX A6000 48GB and the NVIDIA A100 SXM 80GB. These powerful processors can unlock the potential of LLMs, allowing you to run complex models locally and explore the cutting-edge capabilities of artificial intelligence.

Imagine a super-smart AI that can understand and generate human-like text, translate languages, write different kinds of creative content, and answer your questions. That's the power of LLMs, and these GPUs are the engines that make them roar!

This article will compare the performance of these GPUs when running Llama 3 models of two sizes and help you make an informed decision based on your needs and budget.

Comparison of RTX A6000 48GB and A100 SXM 80GB for LLM Inference

Let's dive into the nitty-gritty of comparing these two GPUs. We'll analyze their performance with different LLM models, exploring factors like token generation speed, processing power, and memory capacity. The data we'll be using comes from the llama.cpp project (https://github.com/ggerganov/llama.cpp) by ggerganov and the GPU Benchmarks on LLM Inference project (https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference) by XiongjieDai.

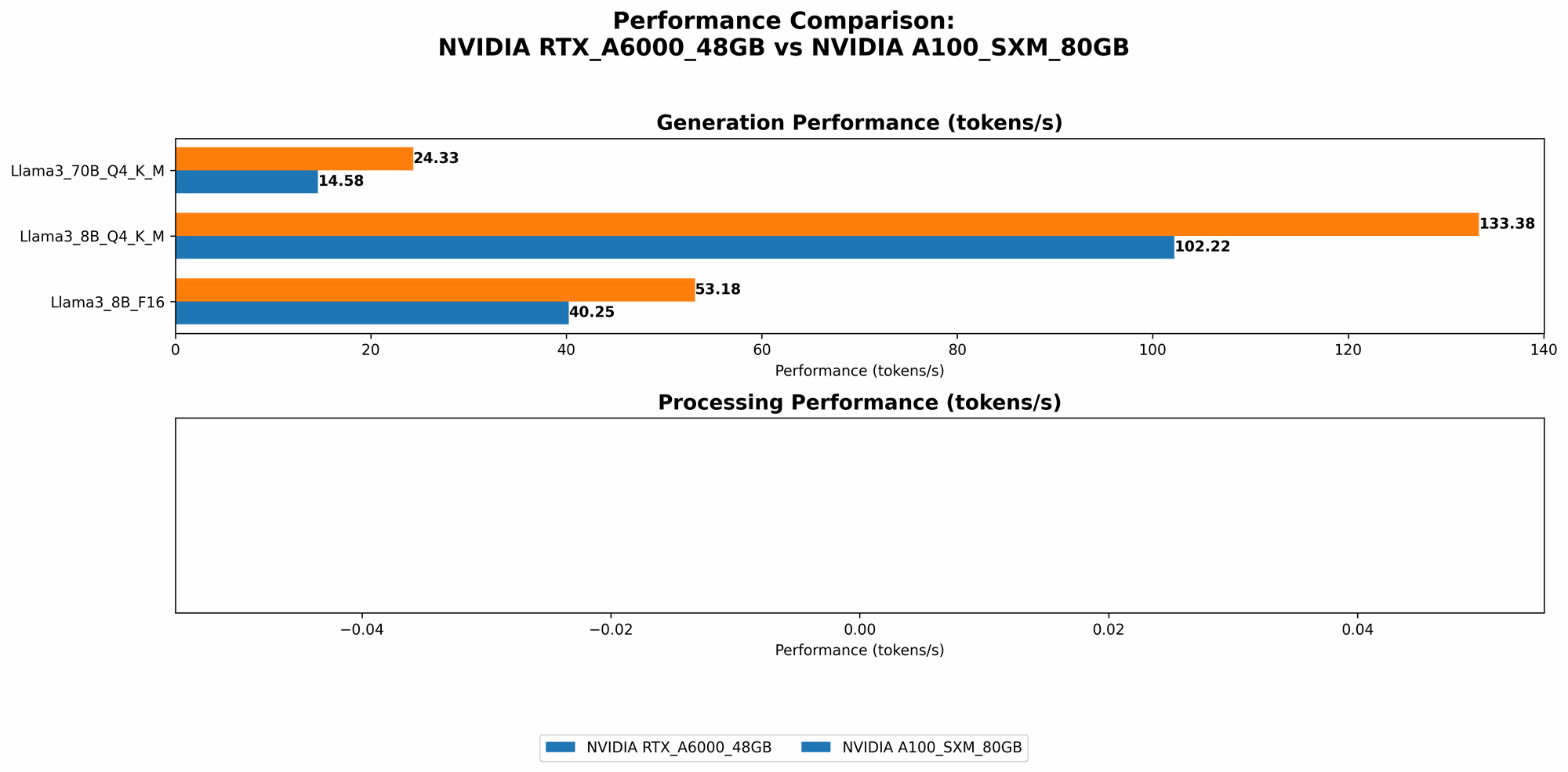

Llama 3 Model Performance Comparison

Let's start with the Llama 3 series of LLMs. We'll look at the performance of both GPUs running Llama 3 models at two precision levels, 4-bit Q4KM quantization (called Q4_K_M in llama.cpp) and unquantized 16-bit F16, and in two sizes: 8B and 70B parameters. Performance is measured in tokens generated per second (tokens/sec).

| Model | RTX A6000 48GB (tokens/sec) | A100 SXM 80GB (tokens/sec) |
|---|---|---|
| Llama 3 8B Q4KM Generation | 102.22 | 133.38 |
| Llama 3 8B F16 Generation | 40.25 | 53.18 |
| Llama 3 70B Q4KM Generation | 14.58 | 24.33 |
| Llama 3 70B F16 Generation | No data available | No data available |

Key takeaways:

A100 SXM 80GB outperforms RTX A6000 48GB in every tested configuration: The A100 generates tokens about 30% faster with Llama 3 8B at either precision, and about 67% faster with Llama 3 70B Q4KM. Token generation is largely limited by how fast the GPU can read the model's weights from memory, and the A100's HBM2e memory offers far more bandwidth than the A6000's GDDR6, which is a likely contributor to its lead.

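Quantization impacts performance: Both GPUs generate tokens roughly 2.5 times faster with Q4KM than with F16. This is expected: F16 stores each weight in 16 bits, while Q4KM compresses weights to about 4 to 5 bits, trading a little precision for speed. Because every generated token requires reading the model's weights, smaller weights mean faster generation.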

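Larger models demand more resources: Llama 3 8B Q4KM generates tokens about seven times faster than Llama 3 70B Q4KM on the RTX A6000 (102.22 vs. 14.58 tokens/sec) and about 5.5 times faster on the A100 (133.38 vs. 24.33 tokens/sec). Choose your GPU based on the size of the models you plan to run. The 70B model at F16 has no results on either card, which is unsurprising: its weights alone take about 140GB, more than either GPU holds.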
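The comparisons in these takeaways can be checked directly against the table. The short Python sketch below copies the generation numbers verbatim and computes the ratios; the dictionary layout and function names are just one convenient, hypothetical choice.

```python
# Token-generation throughput (tokens/sec) copied from the Llama 3 table above.
# Llama 3 70B F16 is omitted because the table has no data for it.
generation = {
    "Llama 3 8B Q4KM": {"RTX A6000 48GB": 102.22, "A100 SXM 80GB": 133.38},
    "Llama 3 8B F16": {"RTX A6000 48GB": 40.25, "A100 SXM 80GB": 53.18},
    "Llama 3 70B Q4KM": {"RTX A6000 48GB": 14.58, "A100 SXM 80GB": 24.33},
}


def speedup(fast: float, slow: float) -> float:
    """Return how many times higher `fast` is than `slow`."""
    return fast / slow


def a100_speedups(table: dict) -> dict:
    """A100 throughput divided by RTX A6000 throughput, per row, rounded to 2 places."""
    return {
        model: round(speedup(row["A100 SXM 80GB"], row["RTX A6000 48GB"]), 2)
        for model, row in table.items()
    }


if __name__ == "__main__":
    for model, ratio in a100_speedups(generation).items():
        print(f"{model}: A100 is {ratio}x the A6000")
    # Q4KM vs. F16 on the same GPU, Llama 3 8B
    for gpu in ("RTX A6000 48GB", "A100 SXM 80GB"):
        q4 = generation["Llama 3 8B Q4KM"][gpu]
        f16 = generation["Llama 3 8B F16"][gpu]
        print(f"{gpu}: Q4KM is {speedup(q4, f16):.2f}x faster than F16 on 8B")
```

Run as a script, it shows the A100 at about 1.3x the A6000 for Llama 3 8B but about 1.67x for Llama 3 70B Q4KM, and Q4KM running roughly 2.5x faster than F16 on both cards.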

Processing Power: A Deeper Dive

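Token generation speed isn't the only performance metric to consider. Prompt processing speed, the rate at which the model ingests input tokens before it produces its first output token (also measured in tokens per second), determines how quickly the model can start responding to long inputs, such as documents to summarize or analyze.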

| Model | RTX A6000 48GB (tokens/sec) | A100 SXM 80GB (tokens/sec) |
|---|---|---|
| Llama 3 8B Q4KM Processing | 3621.81 | No data available |
| Llama 3 8B F16 Processing | 4315.18 | No data available |
| Llama 3 70B Q4KM Processing | 466.82 | No data available |
| Llama 3 70B F16 Processing | No data available | No data available |

Key insights:

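RTX A6000 processes prompts quickly: The RTX A6000 48GB ingests several thousand tokens per second with Llama 3 8B and about 467 tokens per second with Llama 3 70B Q4KM. Unfortunately, we don't have corresponding data for the A100 SXM 80GB, so these numbers show what the A6000 can do in absolute terms but cannot tell us which GPU is faster at prompt processing. Interestingly, the A6000 processes Llama 3 8B prompts faster at F16 (4315.18 tokens/sec) than at Q4KM (3621.81 tokens/sec), the reverse of the generation results, likely because prompt processing is limited more by compute than by memory bandwidth.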

Prompt processing matters most for long inputs: On the A6000, prompt processing speed drops by a factor of almost eight from Llama 3 8B to Llama 3 70B at Q4KM (3621.81 vs. 466.82 tokens/sec). For tasks that feed the model long inputs, such as summarization, document question answering, or sentiment analysis over large volumes of text, prompt processing speed can be a key deciding factor, although without A100 numbers this data cannot settle which GPU handles it better.
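To see how prompt processing and generation speed combine in practice, here is a minimal latency sketch using the RTX A6000 Q4KM figures from the tables above. It assumes both rates stay constant regardless of context length, which real llama.cpp runs do not strictly follow, and the 2,000-token prompt and 300-token answer are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Throughput:
    """Measured throughput for one model/GPU pair, in tokens per second."""
    prompt_processing: float  # prefill: how fast input tokens are ingested
    generation: float         # decode: how fast output tokens are produced


def estimate_latency(t: Throughput, prompt_tokens: int, output_tokens: int) -> tuple[float, float]:
    """Return (time to first token, total time) in seconds.

    Simplifying assumption: both rates are constant, independent of context length.
    """
    prefill_s = prompt_tokens / t.prompt_processing
    decode_s = output_tokens / t.generation
    return prefill_s, prefill_s + decode_s


# RTX A6000 48GB, Llama 3 Q4KM figures from the tables above.
A6000_8B = Throughput(prompt_processing=3621.81, generation=102.22)
A6000_70B = Throughput(prompt_processing=466.82, generation=14.58)

if __name__ == "__main__":
    # Hypothetical request: a 2,000-token document and a 300-token answer.
    for name, t in (("8B", A6000_8B), ("70B", A6000_70B)):
        ttft, total = estimate_latency(t, prompt_tokens=2000, output_tokens=300)
        print(f"Llama 3 {name} Q4KM on A6000: first token after {ttft:.2f}s, done after {total:.1f}s")
```

Under these assumptions, the 70B model waits a little over 4 seconds before its first token and finishes in roughly 25 seconds, so generation speed still dominates the total; prompt processing becomes more important as prompts grow longer relative to the answer.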

Memory Considerations

GPU memory is a crucial factor when running large LLMs. While both the RTX A6000 48GB and A100 SXM 80GB boast substantial memory, the A100 SXM 80GB has a clear advantage in this area:

A100 SXM 80GB offers more memory: With 80GB of HBM2e memory, the A100 SXM 80GB has ample room for large models like Llama 3 70B. At Q4KM, the 70B model also fits on the A6000 (the benchmark above ran it), but with little headroom left for the context (the KV cache). The A100's extra 32GB allows longer contexts or less aggressive quantization. Neither card can hold the 70B model at F16.

RTX A6000 48GB still provides significant memory: Its 48GB of GDDR6 memory comfortably holds smaller models like Llama 3 8B, even at F16 (about 16GB of weights), and can fit Llama 3 70B at Q4KM.
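A quick way to reason about what fits is to multiply the parameter count by the bits stored per weight. The sketch below does that for both models and both formats. The parameter counts (about 8.03B and 70.6B), the average of roughly 4.85 bits per weight for Q4KM, and the flat 2GB allowance for KV cache and buffers are all approximations assumed for illustration; real KV-cache usage grows with context length.

```python
# Approximate parameter counts (assumption: Llama 3 8B ~8.03B, 70B ~70.6B).
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}

# Approximate average bits per weight. Q4KM is an assumed average, since
# llama.cpp keeps some tensors at higher precision; F16 is exactly 16.
BITS_PER_WEIGHT = {"Q4KM": 4.85, "F16": 16.0}

# Nominal memory capacity of each card, in GB.
GPU_MEMORY_GB = {"RTX A6000 48GB": 48, "A100 SXM 80GB": 80}


def weight_gb(params: float, bits: float) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


def fits(params: float, bits: float, gpu_gb: float, overhead_gb: float = 2.0) -> bool:
    """True if weights plus a hypothetical flat allowance for KV cache/buffers fit."""
    return weight_gb(params, bits) + overhead_gb <= gpu_gb


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_gb(params, bits)
            verdict = ", ".join(
                f"{gpu}: {'fits' if fits(params, bits, gb) else 'does not fit'}"
                for gpu, gb in GPU_MEMORY_GB.items()
            )
            print(f"{model} {fmt}: ~{size:.1f} GB of weights ({verdict})")
```

By this estimate, Llama 3 70B Q4KM needs about 43GB of weights, which is why it runs on the A6000 with only a few gigabytes to spare, while the same model at F16 needs about 141GB and fits on neither card.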

Choosing the Right GPU for Your LLM Needs

Now that we've analyzed the performance and memory capabilities of both GPUs, let's discuss how these factors translate into practical use cases.

RTX A6000 48GB: The Workhorse for Smaller Models

The RTX A6000 48GB emerges as the workhorse for developers who focus on smaller LLM models like Llama 3 8B. It generates over 100 tokens/sec with Llama 3 8B Q4KM, processes prompts at several thousand tokens/sec, and its 48GB of memory holds the 8B model even at F16, making it an excellent choice for tasks requiring fast inference and text processing.

Ideal for:

- Smaller LLM models: Llama 3 8B, other smaller models

- Text processing: Sentiment analysis, summarization

- Question answering: Answering specific questions

- Text generation: Creating shorter, more focused text outputs

Cost-effective solution: Compared to the A100 SXM 80GB, the RTX A6000 48GB offers a more budget-friendly option, making it attractive for individuals and smaller teams.

A100 SXM 80GB: Powerhouse for Large Models

The A100 SXM 80GB is the powerhouse for handling large LLM models like Llama 3 70B. In the benchmarks above it generated Llama 3 70B Q4KM tokens about 67% faster than the RTX A6000, and its 80GB of memory leaves headroom for longer contexts and for exploring larger models.

Ideal for:

- Larger LLM models: Llama 3 70B, other large models

- Complex tasks: Text generation for novels, long-form content

- Multi-modal tasks: Integrating text with other data formats

- Research and development: Pushing the boundaries of LLM capabilities

High-performance investment: The A100 SXM 80GB represents a significant investment, but its higher throughput and ability to handle large models make it worth considering for large organizations and research projects.

Quantization: Optimizing Performance and Memory

Quantization is a technique used to reduce the size of LLM models and improve their inference speed. It's like converting a high-resolution image into a smaller version while retaining its essential features.

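- Q4KM: llama.cpp's Q4_K_M format, which stores most weights in 4 bits together with per-block scaling factors and keeps some tensors at higher precision, averaging just under 5 bits per weight.

- F16: 16-bit half-precision floating point, using 16 bits per weight; for these models it is effectively the unquantized baseline.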
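To make the idea concrete, here is a toy sketch of block-wise 4-bit quantization: each block of weights shares one scale, and every weight is rounded to an integer code in [-8, 7]. This is a simplified symmetric scheme assumed for illustration, not llama.cpp's actual Q4_K_M layout, which additionally uses per-block minimums, nested scales, and higher-precision tensors. The function names are hypothetical.

```python
def quantize_block_4bit(values: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization of one block: returns (scale, integer codes in [-8, 7])."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # map the largest magnitude to code +/-7
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Reconstruct approximate weights from a block's scale and codes."""
    return [c * scale for c in codes]


def quantize_4bit(weights: list[float], block_size: int = 32) -> list[float]:
    """Quantize and dequantize block by block, returning the reconstructed weights."""
    out: list[float] = []
    for start in range(0, len(weights), block_size):
        scale, codes = quantize_block_4bit(weights[start:start + block_size])
        out.extend(dequantize_block(scale, codes))
    return out


def bits_per_weight(block_size: int = 32, scale_bits: int = 16) -> float:
    """Storage cost: 4 bits per code plus one shared scale per block."""
    return (block_size * 4 + scale_bits) / block_size


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0, 0.61, -0.88]
    restored = quantize_4bit(weights, block_size=8)
    for w, r in zip(weights, restored):
        print(f"{w:+.3f} -> {r:+.3f}")
    print(f"{bits_per_weight():.1f} bits per weight with 32-weight blocks and a 16-bit scale")
```

Each reconstructed value lands within half a scale step of the original, which is the "slightly reduced accuracy" in practice, while the shared scale adds only half a bit per weight on top of the 4-bit codes.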

The choice between Q4KM and F16 depends on the balance you want to achieve between speed and accuracy. Think of it like choosing between a quick, rough sketch or a detailed, intricate drawing.

- Q4KM: Offers faster token generation (about 2.5 times faster than F16 in the benchmarks above) at a slight cost in accuracy, with a much smaller model size.

- F16: Provides better accuracy but generates tokens more slowly and needs more than three times as much memory for the weights.
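Why does Q4KM generate tokens faster? For single-stream generation, each new token requires reading essentially all of the model's weights from GPU memory, so memory bandwidth divided by model size gives a rough ceiling on tokens/sec. The sketch below assumes NVIDIA's published peak bandwidths (768 GB/s for the RTX A6000, 2,039 GB/s for the A100 SXM 80GB), about 8.03 billion parameters for Llama 3 8B, and an average of about 4.85 bits per weight for Q4KM. All of these are approximations, and the model ignores KV-cache reads and compute time, so treat the result as an upper bound only.

```python
# Assumed peak memory bandwidth from NVIDIA's published specs, in GB/s.
BANDWIDTH_GBPS = {"RTX A6000 48GB": 768, "A100 SXM 80GB": 2039}

LLAMA3_8B_PARAMS = 8.03e9           # approximate parameter count (assumption)
BITS = {"Q4KM": 4.85, "F16": 16.0}  # Q4KM value is an assumed average

# Measured Llama 3 8B generation speeds from the table above (tokens/sec).
MEASURED = [
    ("RTX A6000 48GB", "Q4KM", 102.22),
    ("RTX A6000 48GB", "F16", 40.25),
    ("A100 SXM 80GB", "Q4KM", 133.38),
    ("A100 SXM 80GB", "F16", 53.18),
]


def max_tokens_per_sec(bandwidth_gbps: float, params: float, bits_per_weight: float) -> float:
    """Upper bound on single-stream decode speed: one full read of the weights per token."""
    bytes_per_token = params * bits_per_weight / 8
    return bandwidth_gbps * 1e9 / bytes_per_token


if __name__ == "__main__":
    for gpu, fmt, measured in MEASURED:
        ceiling = max_tokens_per_sec(BANDWIDTH_GBPS[gpu], LLAMA3_8B_PARAMS, BITS[fmt])
        print(f"{gpu} {fmt}: ceiling ~{ceiling:.0f} tok/s, measured {measured} "
              f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions, every measured result falls below its ceiling, and the A6000 comes much closer to its ceiling than the A100 does, which suggests the A100 was not bandwidth-saturated in these runs; this sketch cannot say why. The measured Q4KM-to-F16 speedup (about 2.5x) is also smaller than the roughly 3.3x difference in weight size.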

Understanding the trade-offs between quantization levels is essential in optimizing your LLM setup. The choice of quantization is independent of the GPU, since both cards run both formats, but memory limits what fits: either card can run Llama 3 8B at F16, whereas a 70B model must be quantized on both, and the A100's extra memory leaves more room for less aggressive quantization or longer contexts.

Frequently Asked Questions

What are the main differences between the RTX A6000 48GB and A100 SXM 80GB?

The RTX A6000 48GB offers a strong balance of processing power and memory for smaller LLM models, while the A100 SXM 80GB is a high-performance powerhouse designed for large LLMs. The A100 boasts significantly more memory and faster token generation speeds, making it ideal for resource-intensive models.

What are the benefits of running LLMs locally?

Running LLMs locally gives you control over your data and improves privacy, and it can reduce latency because requests never leave your machine. Once the model is downloaded, it also works without Internet connectivity, making it suitable for environments with limited network access.

Is the A100 SXM 80GB always the best choice for LLMs?

Not necessarily. While the A100 SXM 80GB excels with large LLMs, the RTX A6000 48GB might be a more cost-effective and practical choice for smaller models and for workloads where peak generation speed matters less than budget.

What is the best way to choose the right GPU for my LLM needs?

Consider the size of your LLM model, your budget, and the specific tasks you intend to perform. If you're working with smaller models and are budget-conscious, the RTX A6000 48GB might be suitable. For large models and demanding tasks, the A100 SXM 80GB is a solid investment.
