5 Key Factors to Consider When Choosing Between NVIDIA 4090 24GB x2 and NVIDIA A100 SXM 80GB for AI

Introduction: The Race to Power Large Language Models

The world of AI is buzzing with excitement about Large Language Models (LLMs): powerful algorithms that can generate text, translate languages, write many kinds of creative content, and answer questions informatively. But powering these LLMs requires serious hardware, and choosing the right setup can be a daunting task. This article dives into the key factors to consider when deciding between two popular GPU options: a pair of NVIDIA 4090 24GB cards (referred to here as the NVIDIA 4090 24GB x2) and a single NVIDIA A100 SXM 80GB.

Think of LLMs as engines that take in information (text) and produce outputs (more text) based on what they have learned. The better the engine, the more complex and nuanced the output. And just like a car engine needs fuel, LLMs need processing power. This is where GPUs come in, acting as the workhorses for all the complex calculations.

Comparison of NVIDIA 4090 24GB x2 and NVIDIA A100 SXM 80GB for AI

Let's delve into a head-to-head comparison of these two powerhouses, focusing on key performance indicators and their implications for LLM use cases.

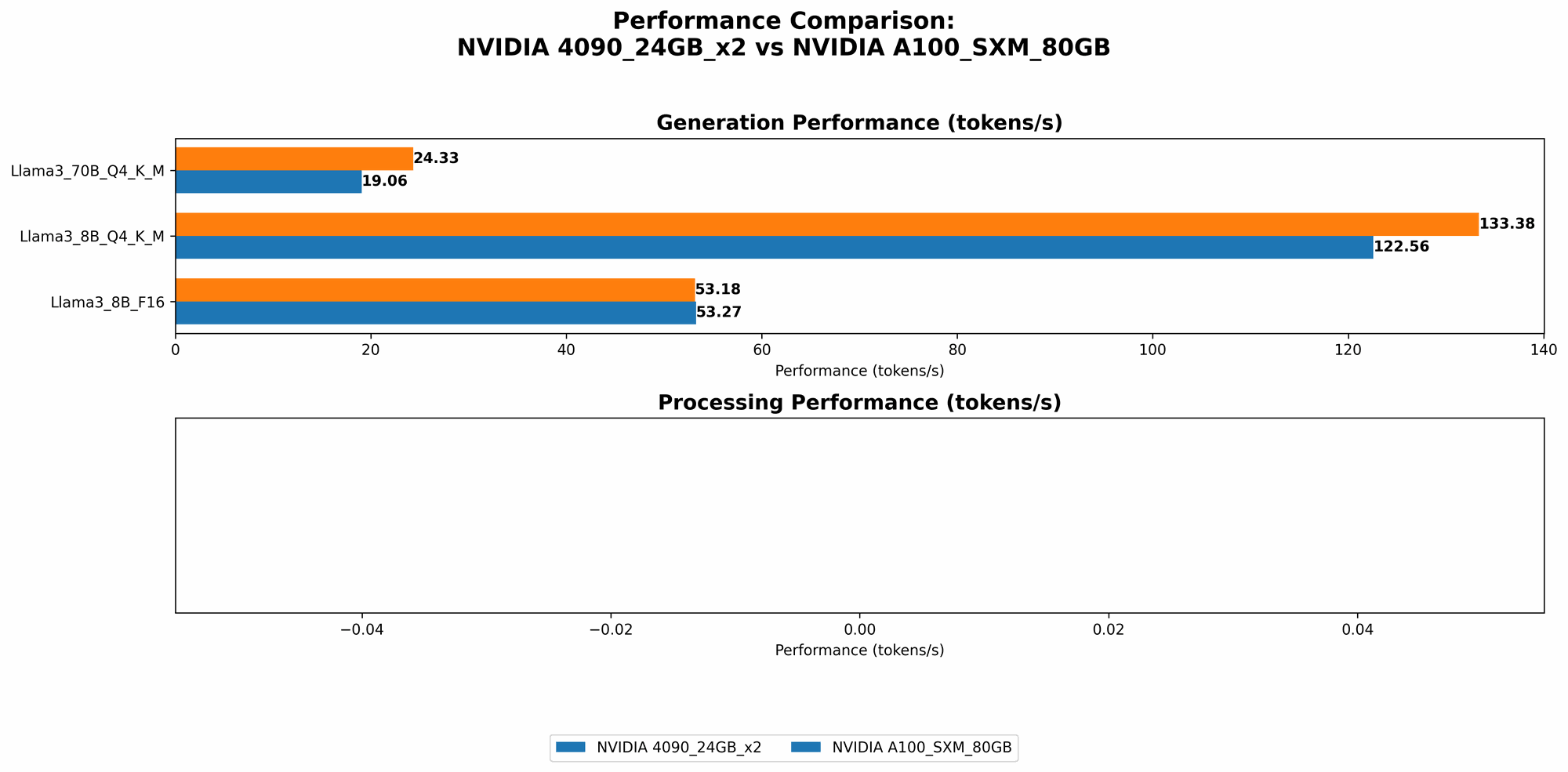

1. Token Speed Generation: Who's the Text-Generating Champion?

This is a crucial metric for LLMs, as it determines how quickly a model can generate new text. We'll analyze the performance of both GPUs with different LLM models and configurations.

Data

| Device | LLM Model | Tokens/Second (Q4_K_M) | Tokens/Second (F16) |
|---|---|---|---|
| NVIDIA 4090 24GB x2 | Llama 3 8B | 122.56 | 53.27 |
| NVIDIA 4090 24GB x2 | Llama 3 70B | 19.06 | N/A |
| NVIDIA A100 SXM 80GB | Llama 3 8B | 133.38 | 53.18 |
| NVIDIA A100 SXM 80GB | Llama 3 70B | 24.33 | N/A |

Analysis

- Llama 3 8B: For smaller models like Llama 3 8B, the A100 SXM 80GB edges out the 4090 24GB x2 in Q4_K_M token generation (133.38 vs. 122.56 tokens/second, roughly 9% faster). Token generation is usually limited by memory bandwidth, so this gap likely reflects the A100's HBM2e memory more than its raw compute.

- Llama 3 70B: The A100 SXM 80GB continues to outperform the 4090 24GB x2 in Q4_K_M generation when we move up to Llama 3 70B (24.33 vs. 19.06 tokens/second). The gap widens to roughly 28% for this larger model.

- F16: At F16 precision (which has a larger memory footprint than Q4_K_M, since every weight takes 16 bits), both setups perform almost identically on Llama 3 8B (53.27 vs. 53.18 tokens/second). That difference is too small to count as an edge for either. Neither setup has F16 data for Llama 3 70B.
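To make the size of these gaps concrete, here is a minimal Python sketch that computes the A100's relative advantage from the table values. The dictionary layout and the function name `a100_advantage_percent` are illustrative choices, not part of any benchmark tool.

```python
# Token generation speeds (tokens/second) copied from the table above.
generation_tps = {
    ("Llama 3 8B", "Q4_K_M"): {"4090 24GB x2": 122.56, "A100 SXM 80GB": 133.38},
    ("Llama 3 8B", "F16"): {"4090 24GB x2": 53.27, "A100 SXM 80GB": 53.18},
    ("Llama 3 70B", "Q4_K_M"): {"4090 24GB x2": 19.06, "A100 SXM 80GB": 24.33},
}


def a100_advantage_percent(speeds):
    """Return how much faster the A100 is than the 4090 pair, in percent.

    A negative value means the 4090 pair is faster.
    """
    return (speeds["A100 SXM 80GB"] / speeds["4090 24GB x2"] - 1.0) * 100.0


for (model, precision), speeds in generation_tps.items():
    advantage = a100_advantage_percent(speeds)
    print(f"{model} {precision}: A100 advantage {advantage:+.1f}%")
```

A result close to zero, as in the F16 row, is best read as a tie rather than a win for either setup.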

Recommendations:

- If you're primarily concerned with raw token generation speed for smaller models, the A100 SXM 80GB might be your go-to, though its lead over the 4090 24GB x2 is modest.

- For larger models like Llama 3 70B, the A100 SXM 80GB offers a more significant advantage in token generation speed (roughly 28% in these benchmarks).

2. Token Processing Speed: How Quickly Can They Handle the Heavy Lifting?

This metric measures how quickly the GPUs process the tokens they are given (the prompt) before new text is generated. It is a crucial factor in the overall efficiency and responsiveness of your LLM applications, especially with long prompts.

Data

| Device | LLM Model | Tokens/Second (Q4_K_M) | Tokens/Second (F16) |
|---|---|---|---|
| NVIDIA 4090 24GB x2 | Llama 3 8B | 8545.0 | 11094.51 |
| NVIDIA 4090 24GB x2 | Llama 3 70B | 905.38 | N/A |
| NVIDIA A100 SXM 80GB | Llama 3 8B | N/A | N/A |
| NVIDIA A100 SXM 80GB | Llama 3 70B | N/A | N/A |

Analysis

- Llama 3 8B: The 4090 24GB x2 processes 8,545 tokens/second at Q4_K_M and 11,094.51 tokens/second at F16. No processing-speed data is available for the A100 SXM 80GB, so a direct comparison is not possible here. Note that, unlike generation, processing on this setup is faster at F16 than at Q4_K_M.

- Llama 3 70B: Unfortunately, there's no processing-speed data for the A100 SXM 80GB on Llama 3 70B either. On the 4090 24GB x2, processing speed drops to 905.38 tokens/second at Q4_K_M, roughly nine times slower than Llama 3 8B, which is in line with the roughly ninefold increase in parameter count.
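To see how processing and generation speed combine into response time, here is a rough Python sketch using the 4090 24GB x2 figures from both tables. It assumes a simple additive model (prompt time plus generation time) and ignores batching, scheduling, and other overheads. The prompt and output lengths are hypothetical values chosen only for illustration.

```python
def estimate_latency_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate (prompt_time, generation_time) in seconds.

    Simplified model: the prompt is processed at processing_tps, then output
    tokens are produced one after another at generation_tps.
    """
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time, generation_time


# Hypothetical request: a 2048-token prompt and a 256-token answer.
PROMPT_TOKENS = 2048
OUTPUT_TOKENS = 256

# Q4_K_M speeds for the 4090 24GB x2, taken from the two data tables.
benchmarks = {
    "Llama 3 8B": {"processing_tps": 8545.0, "generation_tps": 122.56},
    "Llama 3 70B": {"processing_tps": 905.38, "generation_tps": 19.06},
}

for model, speeds in benchmarks.items():
    prompt_time, generation_time = estimate_latency_seconds(
        PROMPT_TOKENS, OUTPUT_TOKENS, **speeds
    )
    total = prompt_time + generation_time
    print(f"{model}: prompt {prompt_time:.2f}s + generation {generation_time:.2f}s = {total:.2f}s")
```

Under these assumptions, generation dominates the total time for both models, so processing speed matters most when prompts are long relative to the output.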

Recommendations:

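- The 4090 24GB x2 delivers strong token processing throughput in these benchmarks, but without A100 SXM 80GB figures the data cannot show which GPU is better on this metric. If processing speed is your priority, benchmark both options with your own workload.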

3. Memory Capacity: Who Can Accommodate the LLM's Big Appetite?

LLMs are memory-hungry beasts. The larger the model, the more memory it demands to store its vast knowledge base.

Data

| Device | Memory (GB) |
|---|---|
| NVIDIA 4090 24GB x2 | 48 (2 x 24) |
| NVIDIA A100 SXM 80GB | 80 |

Analysis

- The A100 SXM 80GB clearly wins in memory capacity with its 80GB of HBM2e memory. The 4090 24GB x2, with 24GB per card for a total of 48GB, still provides a considerable amount of memory, but that memory is split across two devices, so any model larger than 24GB must be divided between the cards.

- For smaller models like Llama 3 8B, memory size is unlikely to be a bottleneck on either setup.

- However, for larger models like Llama 3 70B, the A100 SXM 80GB's larger memory capacity becomes a significant advantage, leaving more room for long contexts and potentially allowing it to run larger models that wouldn't fit in the 4090 24GB x2's memory.
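A back-of-the-envelope estimate helps show which models fit where. The Python sketch below multiplies parameter count by bits per weight. It makes several assumptions: nominal parameter counts of 8 and 70 billion, an effective size of about 4.8 bits per weight for Q4_K_M (an approximation, not a measured value), and 1 GB = 10^9 bytes. It counts weights only and ignores the KV cache and activations, so real requirements are higher.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8.0


# Assumed values, for illustration only.
MODELS = {"Llama 3 8B": 8.0, "Llama 3 70B": 70.0}   # nominal parameter counts, in billions
PRECISIONS = {"F16": 16.0, "Q4_K_M": 4.8}           # approximate bits per weight
MEMORY_GB = {"4090 24GB x2": 48.0, "A100 SXM 80GB": 80.0}

for model, params in MODELS.items():
    for precision, bits in PRECISIONS.items():
        size = weights_gb(params, bits)
        fits = [name for name, capacity in MEMORY_GB.items() if size < capacity]
        verdict = ", ".join(fits) if fits else "neither setup"
        print(f"{model} {precision}: ~{size:.1f} GB of weights, fits in: {verdict}")
```

Under these assumptions, Llama 3 70B at F16 needs about 140 GB for weights alone, more than either setup provides, which would be consistent with the N/A entries in the F16 columns of the benchmark tables. At Q4_K_M it needs roughly 42 GB, which fits in 80GB comfortably and in 48GB only when split across both 4090 cards.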

Recommendations:

- If you plan to run very large LLM models, the A100 SXM 80GB's generous memory capacity will be your best friend.

- For smaller models, the 4090 24GB x2 offers a good balance of performance and memory.

4. Cost and Power Consumption: Striking the Balance

Cost and power consumption are often overlooked in the excitement of pushing AI boundaries. However, these factors can significantly impact your overall budget and operational expenses.

Data

| Device | Cost (USD) | Power Consumption (W) |
|---|---|---|
| NVIDIA 4090 24GB x2 | ~$3,000 (per card) | ~450 (per card) |
| NVIDIA A100 SXM 80GB | ~$10,000 (per card) | ~400 |

Analysis

- At roughly $6,000 for the pair, the 4090 24GB x2 is significantly more affordable than the A100 SXM 80GB at roughly $10,000, making it a more budget-friendly option, especially if you need to scale up your LLM infrastructure.

- Power is a different story. Each 4090 draws slightly more than the A100 (about 450 W vs. 400 W), so the two-card setup draws about 900 W in total, more than twice the A100's rated power. Plan your power supply and cooling accordingly.
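Combining the cost and power figures with the generation speeds gives a rough sense of value. The Python sketch below assumes each card draws its rated power during generation (actual draw varies with workload) and uses the approximate prices from the table. The metric names are illustrative.

```python
# Approximate totals derived from the table above (the 4090 figures cover two cards).
SETUPS = {
    "4090 24GB x2": {"cost_usd": 2 * 3000, "power_w": 2 * 450},
    "A100 SXM 80GB": {"cost_usd": 10_000, "power_w": 400},
}

# Llama 3 70B Q4_K_M generation speeds (tokens/second) from the first data table.
GENERATION_TPS_70B = {"4090 24GB x2": 19.06, "A100 SXM 80GB": 24.33}


def joules_per_token(power_w, tokens_per_second):
    """Energy per generated token, assuming the rated power draw is sustained."""
    return power_w / tokens_per_second


def tokens_per_second_per_1000_usd(cost_usd, tokens_per_second):
    """Generation throughput bought per $1,000 of hardware."""
    return tokens_per_second / (cost_usd / 1000.0)


for name, setup in SETUPS.items():
    tps = GENERATION_TPS_70B[name]
    energy = joules_per_token(setup["power_w"], tps)
    value = tokens_per_second_per_1000_usd(setup["cost_usd"], tps)
    print(f"{name}: {energy:.1f} J/token, {value:.2f} tokens/s per $1,000")
```

Under these assumptions, the 4090 pair buys more throughput per dollar up front, while the A100 uses far less energy per token, which matters more the longer the hardware runs.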

Recommendations:

- If you're on a tight budget, the 4090 24GB x2 is a more accessible choice.

- For high-performance and demanding LLM workloads, the A100 SXM 80GB might provide a better cost-benefit ratio in the long run, even though the initial investment is higher.

5. Quantization: Optimizing for Memory and Performance

Quantization is a technique used to reduce the memory footprint of LLMs by representing model parameters with fewer bits. This significantly reduces memory requirements and can speed up token generation.

Data

| Device | LLM Model | Quantization Level (bits) |
|---|---|---|
| NVIDIA 4090 24GB x2 | Llama 3 8B Q4_K_M | 4 |
| NVIDIA 4090 24GB x2 | Llama 3 70B Q4_K_M | 4 |
| NVIDIA A100 SXM 80GB | Llama 3 8B Q4_K_M | 4 |
| NVIDIA A100 SXM 80GB | Llama 3 70B Q4_K_M | 4 |

Analysis

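- Both the 4090 24GB x2 and the A100 SXM 80GB support quantization, allowing you to reduce the memory needed to store the LLM models.

- Quantization can significantly improve generation performance. In the benchmarks above, Llama 3 8B generates more than twice as fast at Q4_K_M as at F16 on both setups. It also makes larger models like Llama 3 70B practical on this hardware.

- The level of quantization used in the benchmarks (4-bit) is the same for both GPUs, so quantization itself does not set them apart. However, with 80GB on a single device, the A100 has more headroom to run less aggressively quantized (higher-precision) variants, which generally preserve more output quality at the cost of memory and speed.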
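To illustrate the basic idea behind quantization, here is a deliberately simplified Python sketch of symmetric 4-bit quantization with one shared scale per block of weights. It is not the Q4_K_M scheme used in the benchmarks, which is more elaborate. The function names and sample weights are hypothetical.

```python
def quantize_block(values, bits=4):
    """Quantize one block of floats to signed integers with a shared scale.

    Symmetric scheme: integers lie in [-qmax, qmax], where qmax is 7 for 4 bits.
    """
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate float values from the integers and the block scale."""
    return [q * scale for q in quantized]


# Hypothetical block of weights, for illustration only.
weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.0, 0.44, -0.78]

scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized integers:", quantized)
print(f"scale: {scale:.4f}, max reconstruction error: {max_error:.4f}")
```

Each weight is stored as a 4-bit integer plus a small share of the block's scale, which is why the effective size ends up slightly above 4 bits per weight. The reconstruction error is bounded by half the scale, which is the quality cost paid for the smaller footprint.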

Recommendations:

- If you're working with large LLMs, quantization is a powerful optimization technique that can significantly improve performance and reduce memory consumption on both GPUs.

Conclusion: The Best GPU Depends on Your Needs

Choosing between the NVIDIA 4090 24GB x2 and NVIDIA A100 SXM 80GB depends on your specific LLM workload and priorities. If you need the most affordable option and are working with smaller models, the 4090 24GB x2 might be a good choice. However, if you need to handle larger models, prioritize performance, and have a higher budget, the A100 SXM 80GB offers significant advantages. Both GPUs are powerful options, and the right choice depends on your specific needs and constraints.

FAQ

Q: What are the main differences between the NVIDIA 4090 24GB x2 and NVIDIA A100 SXM 80GB?

A: The A100 SXM 80GB is a data-center GPU designed for AI workloads, with a larger memory capacity on a single device, while the 4090 24GB x2 offers a more affordable option with good performance. Both have Tensor Cores, which accelerate matrix multiplication, a common operation in AI models. The A100 pairs them with high-bandwidth HBM2e memory.

Q: How do I choose the right GPU for my LLM application?

A: Consider the size of the LLM you're working with, your performance and memory requirements, and your budget. If you're working with smaller models and are on a tight budget, the 4090 24GB x2 could be a good choice. However, if you need to handle larger models and prioritize performance, the A100 SXM 80GB might be a better investment.

Q: What other factors should I consider when choosing a GPU for LLMs?

A: It's important to consider factors like software compatibility, power consumption, and cooling requirements. Make sure your chosen GPU is compatible with the software you're using and can be effectively cooled in your environment.

Keywords:

NVIDIA 4090 24GB x2, NVIDIA A100 SXM 80GB, LLM, Large Language Models, AI, GPU, Token Speed, Token Processing, Memory Capacity, Cost, Power Consumption, Quantization, AI Hardware, Inference, Deep Learning, Natural Language Processing, Machine Learning, Performance, Optimization.