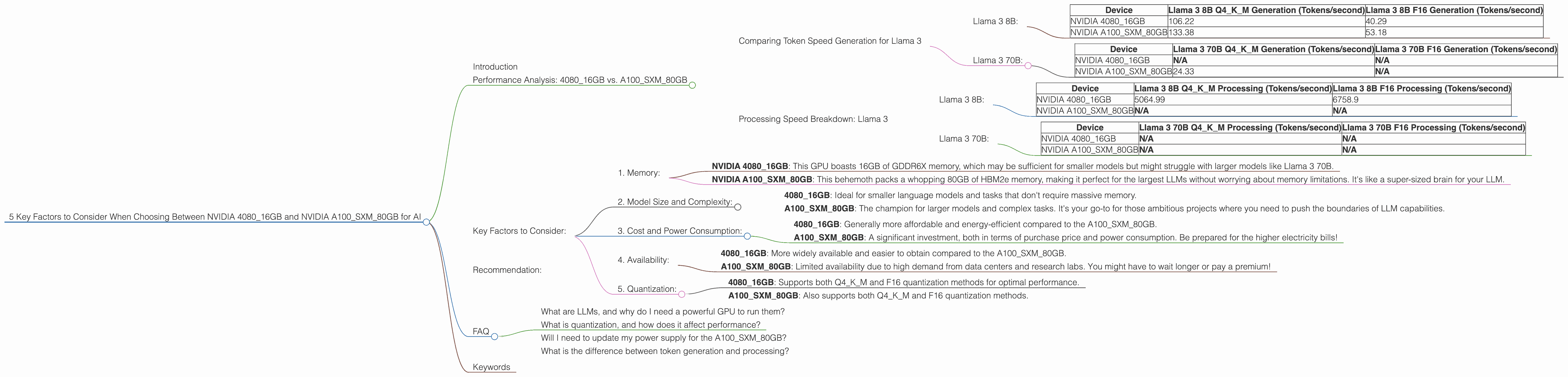

5 Key Factors to Consider When Choosing Between NVIDIA 4080 16GB and NVIDIA A100 SXM 80GB for AI

Introduction

The world of large language models (LLMs) is evolving rapidly, and so are the demands on our hardware. If you want to run LLMs locally, you'll need a powerful GPU to handle the massive computations involved. Two popular choices are the NVIDIA 4080_16GB and the NVIDIA A100_SXM_80GB.

Choosing between these titans of processing power can be daunting. This guide breaks down the key factors to help you make the right decision for your specific needs and budget. Think of it as a GPU gladiator match!

Performance Analysis: 4080_16GB vs. A100_SXM_80GB

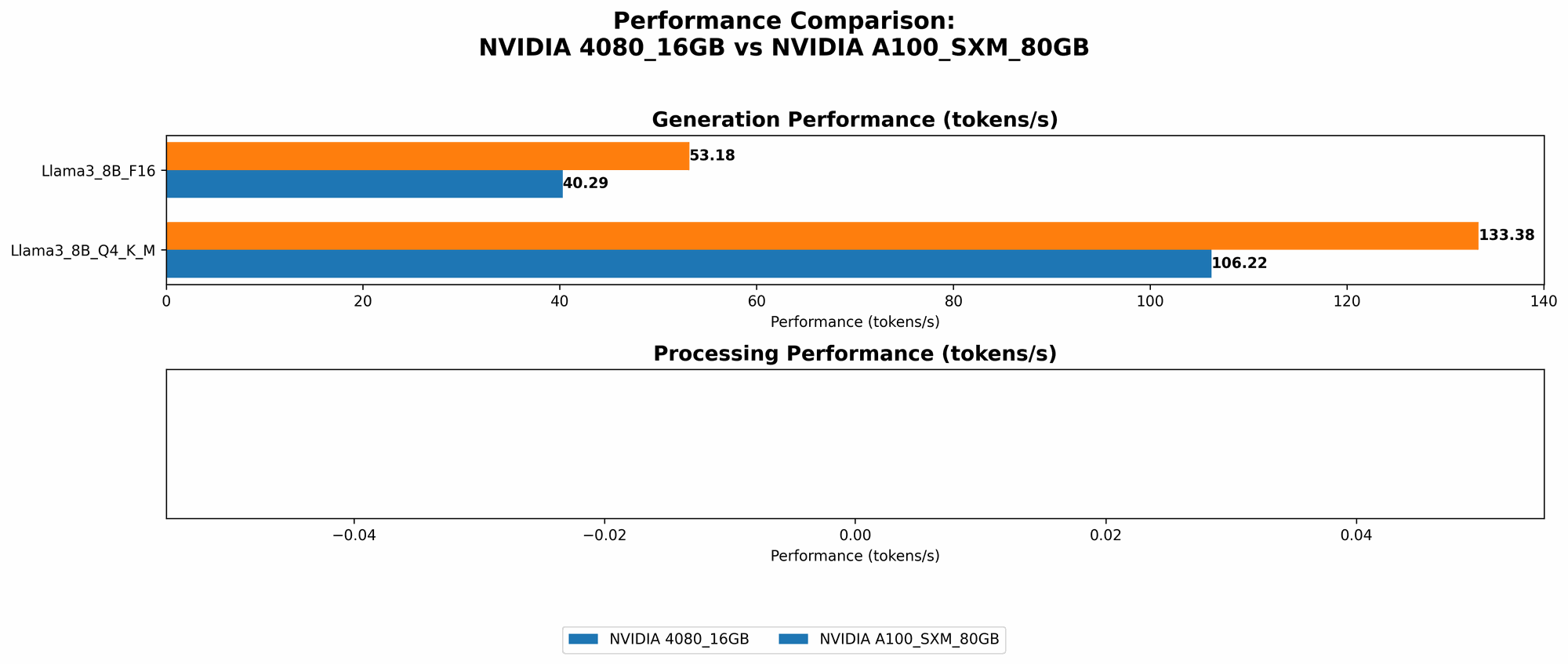

Comparing Token Speed Generation for Llama 3

Let's start with the most exciting part: how these GPUs handle token generation for Llama 3 models. Token speed is crucial for real-time applications like chatbots or interactive storytelling. We'll examine performance for both the 8B and 70B models.

Llama 3 8B:

| Device | Llama 3 8B Q4_K_M Generation (tokens/second) | Llama 3 8B F16 Generation (tokens/second) |
|---|---|---|
| NVIDIA 4080_16GB | 106.22 | 40.29 |
| NVIDIA A100_SXM_80GB | 133.38 | 53.18 |

As you can see, the A100_SXM_80GB outperforms the 4080_16GB in both formats: it is roughly 26% faster with Q4_K_M and roughly 32% faster with F16. This means you'll get faster text generation with the A100_SXM_80GB. Think of it as your LLM writing a book at a blistering pace compared to the 4080_16GB's slightly slower but still impressive writing speed.
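To put a number on the gap, here is a minimal sketch that turns the 8B generation figures from the table above into a relative speedup. The dictionary layout and the `relative_speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Generation throughput (tokens/second) copied from the Llama 3 8B table above.
GENERATION_TPS = {
    "4080_16GB": {"Q4_K_M": 106.22, "F16": 40.29},
    "A100_SXM_80GB": {"Q4_K_M": 133.38, "F16": 53.18},
}


def relative_speedup(fast: float, slow: float) -> float:
    """Return how much faster `fast` is than `slow`, as a percentage."""
    return (fast / slow - 1.0) * 100.0


for fmt in ("Q4_K_M", "F16"):
    gain = relative_speedup(
        GENERATION_TPS["A100_SXM_80GB"][fmt],
        GENERATION_TPS["4080_16GB"][fmt],
    )
    print(f"{fmt}: A100_SXM_80GB is {gain:.1f}% faster than 4080_16GB")
```

The lead is real but modest for the 8B model, which is worth keeping in mind when you reach the cost section.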

Llama 3 70B:

| Device | Llama 3 70B Q4_K_M Generation (tokens/second) | Llama 3 70B F16 Generation (tokens/second) |
|---|---|---|
| NVIDIA 4080_16GB | N/A | N/A |
| NVIDIA A100_SXM_80GB | 24.33 | N/A |

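We don't have data on the 4080_16GB's performance for the 70B model, making direct comparison impossible. The likely reason is memory: at roughly 4 to 5 bits per weight, the weights of a 70B model alone take around 40GB, far more than the card's 16GB. The A100_SXM_80GB, however, can handle the 70B model at Q4_K_M, which is a significant advantage for users who need to work with larger, more complex models.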

Processing Speed Breakdown: Llama 3

While token generation is crucial, prompt processing speed also plays a significant role in how responsive your LLM feels. This is how fast the GPU reads the input tokens before it produces the first output token. Let's see how the GPUs stack up:

Llama 3 8B:

| Device | Llama 3 8B Q4_K_M Processing (tokens/second) | Llama 3 8B F16 Processing (tokens/second) |
|---|---|---|
| NVIDIA 4080_16GB | 5064.99 | 6758.9 |
| NVIDIA A100_SXM_80GB | N/A | N/A |

With no processing figures for the A100_SXM_80GB, we can't declare a winner here. What the data does show is that the 4080_16GB processes prompts for the 8B Llama 3 model at more than 5,000 tokens per second, so it gets through the input-reading part of your LLM's work quickly.
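To see how processing and generation speed combine, here is a rough sketch that estimates the time for one response on the 4080_16GB using the Q4_K_M figures from the tables above. The workload (a 1,000-token prompt and a 200-token reply) and the function name are assumptions chosen for illustration, and the model ignores overheads such as model loading and sampling.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate end-to-end time: read the prompt, then generate the reply."""
    prompt_time = prompt_tokens / processing_tps      # time to read the input
    generation_time = output_tokens / generation_tps  # time to write the output
    return prompt_time + generation_time


# 4080_16GB, Llama 3 8B Q4_K_M, speeds taken from the tables above.
# The 1,000-token prompt and 200-token reply are a hypothetical workload.
total = response_time_seconds(
    prompt_tokens=1000,
    output_tokens=200,
    processing_tps=5064.99,
    generation_tps=106.22,
)
print(f"Estimated response time: {total:.2f} s")
```

In this example the prompt takes about a fifth of a second while the reply takes almost two seconds, so generation speed dominates unless your prompts are very long.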

Llama 3 70B:

| Device | Llama 3 70B Q4_K_M Processing (tokens/second) | Llama 3 70B F16 Processing (tokens/second) |
|---|---|---|
| NVIDIA 4080_16GB | N/A | N/A |
| NVIDIA A100_SXM_80GB | N/A | N/A |

Again, we lack data for a direct comparison. The A100_SXM_80GB's 80GB of memory lets it load the 70B model at Q4_K_M, but without measurements we can't say how fast either GPU processes prompts for it.

Key Factors to Consider:

1. Memory:

- NVIDIA 4080_16GB: This GPU has 16GB of GDDR6X memory, which is enough for the 8B model in both formats tested above but too little for Llama 3 70B, even at Q4_K_M.

- NVIDIA A100_SXM_80GB: This behemoth packs a whopping 80GB of HBM2e memory, enough to hold Llama 3 70B at Q4_K_M on a single GPU. It's like a super-sized brain for your LLM. Even 80GB has limits, though: the tables above have no F16 result for the 70B model.
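A quick way to check whether a model fits is to estimate the size of its weights from the parameter count and the bits used per weight. The sketch below does that under stated assumptions: nominal parameter counts of 8 and 70 billion, and an average of about 4.85 bits per weight for Q4_K_M, which is an approximation rather than an exact figure. It counts weights only, so the real requirement is higher once the context cache and working buffers are added.

```python
GIB = 1024 ** 3  # bytes per GiB

# Assumed average bits per weight. F16 is exact; the Q4_K_M value is an
# approximation, because that format mixes several block types.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Nominal memory per GPU, in GiB.
VRAM_GIB = {"4080_16GB": 16, "A100_SXM_80GB": 80}


def weight_size_gib(params_billion: float, fmt: str) -> float:
    """Approximate size of the model weights alone, in GiB."""
    total_bits = params_billion * 1e9 * BITS_PER_WEIGHT[fmt]
    return total_bits / 8 / GIB


def fits(params_billion: float, fmt: str, device: str) -> bool:
    """True if the weights alone are smaller than the device memory."""
    return weight_size_gib(params_billion, fmt) < VRAM_GIB[device]


for params in (8, 70):
    for fmt in ("Q4_K_M", "F16"):
        size = weight_size_gib(params, fmt)
        verdicts = ", ".join(
            f"{device}: {'fits' if fits(params, fmt, device) else 'too large'}"
            for device in VRAM_GIB
        )
        print(f"Llama 3 {params}B {fmt}: ~{size:.1f} GiB ({verdicts})")
```

Under these assumptions the estimate lines up with the N/A entries in the generation tables: the 70B model is too large for the 4080_16GB in either format, and too large for the A100_SXM_80GB at F16.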

2. Model Size and Complexity:

- 4080_16GB: Ideal for smaller language models and tasks that don't require massive memory.

- A100_SXM_80GB: The champion for larger models and complex tasks. It's your go-to for those ambitious projects where you need to push the boundaries of LLM capabilities.

3. Cost and Power Consumption:

- 4080_16GB: Generally more affordable than the A100_SXM_80GB, with a lower rated board power (about 320 W versus about 400 W).

- A100_SXM_80GB: A significant investment, both in terms of purchase price and power consumption. Be prepared for the higher electricity bills!
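Raw power draw is only part of the picture, because a faster GPU also finishes sooner. As a rough sketch, the snippet below divides an assumed board power by the measured 8B Q4_K_M generation speed to estimate energy per token. The wattages (320 W and 400 W) are rated board power figures used here as assumptions, not measurements taken during these benchmarks, so treat the result as a ballpark only.

```python
# Assumed rated board power in watts (not measured during inference).
BOARD_POWER_W = {"4080_16GB": 320.0, "A100_SXM_80GB": 400.0}

# Llama 3 8B Q4_K_M generation speed (tokens/second) from the table above.
GENERATION_TPS = {"4080_16GB": 106.22, "A100_SXM_80GB": 133.38}


def joules_per_token(tokens_per_second: float, watts: float) -> float:
    """Energy per generated token, assuming the GPU draws `watts` throughout."""
    return watts / tokens_per_second


for device, watts in BOARD_POWER_W.items():
    energy = joules_per_token(GENERATION_TPS[device], watts)
    print(f"{device}: ~{energy:.2f} J per token")
```

Under these assumptions both GPUs land at about 3 joules per generated token for the 8B model, so the A100_SXM_80GB's higher draw is largely offset by its higher speed. Actual consumption depends on the workload and on the rest of the system.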

4. Availability:

- 4080_16GB: More widely available and easier to obtain compared to the A100_SXM_80GB.

- A100_SXM_80GB: Limited availability due to high demand from data centers and research labs. You might have to wait longer or pay a premium!

5. Quantization:

- 4080_16GB: Runs both the Q4_K_M quantized format and unquantized F16 (half precision) for the 8B model.

- A100_SXM_80GB: Also runs both Q4_K_M and F16 for the 8B model, plus Q4_K_M for the 70B model.

What is Quantization? Quantization, in simple terms, is a technique that reduces the size of your LLM without giving up too much output quality. It's like compressing a file to fit more data on your hard drive. This technique allows you to run larger models on devices with limited memory.

Recommendation:

The best GPU for you depends on your specific needs:

If you're working with smaller models and are budget-conscious, the NVIDIA 4080_16GB is a great option. It's a solid performer and can handle many common LLM tasks.

If you're diving into the realm of massive models and require the ultimate performance, the NVIDIA A100_SXM_80GB is the clear winner. It's the "big brain" of the GPU world. Be prepared for the cost and power consumption, though!

FAQ

What are LLMs, and why do I need a powerful GPU to run them?

Large Language Models (LLMs) are powerful AI systems that can understand and generate human-like text. They are trained on massive datasets of text and code, which allows them to perform tasks like translation, writing different creative text formats, and answering questions in an informative way. They are computationally demanding. That's where powerful GPUs like the 4080_16GB and A100_SXM_80GB come in.

What is quantization, and how does it affect performance?

Quantization is a technique used to reduce the size of LLMs while maintaining acceptable output quality. It involves reducing the precision of the numbers used to represent the model's parameters. Think of it like using a smaller number of colors to create an image. Quantization reduces memory requirements and usually speeds up generation, since less data has to be read for each token: in the 8B table above, Q4_K_M generates about 2.5 times as many tokens per second as F16 on both GPUs. The trade-off is that it can slightly degrade the quality of the model's output.
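The idea of "reducing precision" can be shown with a toy example. The sketch below squeezes a small block of weights into 4-bit integer codes with one shared scale, then restores them and measures the error. This is a deliberately simplified scheme for illustration and is not the actual Q4_K_M algorithm; the function names and sample weights are made up.

```python
def quantize_4bit(values):
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    # Fall back to a scale of 1.0 if every value is zero.
    scale = max(abs(v) for v in values) / 7 or 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [code * scale for code in codes]


# Hypothetical weights, chosen only to illustrate the round trip.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.65]

scale, codes = quantize_4bit(weights)
restored = dequantize(scale, codes)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(v, 3) for v in restored])
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs 4 bits instead of 16, at the cost of a small rounding error per value. That error is the source of the slight quality loss described above.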

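Will I need to update my power supply for the A100_SXM_80GB?

A bigger power supply alone won't be enough. The SXM version of the A100 is a socketed module designed for server baseboards rather than a PCIe card, so it can't simply be dropped into a typical desktop PC. It's also a power hog, rated at around 400 W, so plan for suitable server hardware and a significant increase in your electric bill!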

What is the difference between token generation and processing?

Token generation refers to the LLM producing its output, one token at a time. It's like the LLM choosing the words to write, where each new word depends on everything written so far. Processing, as used in the tables above, refers to prompt processing: the model reads your entire input before it writes anything. It's like the LLM's brain working hard to make sense of everything. Because the input tokens can be handled in parallel, processing speeds run into the thousands of tokens per second, while generation speeds are much lower.

Keywords

LLM, Large Language Model, NVIDIA 4080, NVIDIA A100, GPU, Token Generation, Processing, Quantization, Q4_K_M, F16, Memory, Cost, Power Consumption, Availability, Performance, Llama 3 8B, Llama 3 70B, AI, Chatbot, Storytelling, Developer, Geek, Deep Learning, Natural Language Processing.