5 Key Factors to Consider When Choosing Between NVIDIA 3080 Ti 12GB and NVIDIA 3090 24GB for AI

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, with models like Llama 3 gaining popularity for their impressive capabilities. Running these models locally requires powerful hardware, and two popular choices are the NVIDIA GeForce RTX 3080 Ti 12GB and the NVIDIA GeForce RTX 3090 24GB GPUs.

Choosing the right GPU can be a perplexing task, especially with technical specifications and performance benchmarks flying around. This article will guide you through the key factors to consider when deciding between the NVIDIA 3080 Ti 12GB and the NVIDIA 3090 24GB for running LLMs.

Comparing NVIDIA 3080 Ti 12GB and NVIDIA 3090 24GB for Llama 3 Model Inference

Let's dive deeper into the comparison by examining the key considerations for running Llama 3 models on these popular GPUs.

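1. Memory Capacity & Processing Power: How much VRAM is enough for your LLM?

The NVIDIA 3090 24GB has 24GB of GDDR6X memory, twice the 12GB of the NVIDIA 3080 Ti, making it the clear winner in memory capacity. Capacity matters because a model runs fastest when all of its weights sit in GPU memory. The 3080 Ti's 12GB limits you to smaller or more heavily quantized models. Keep in mind that even 24GB cannot hold Llama 3 70B entirely: at 4-bit quantization its weights alone come to roughly 40GB, so on either card part of the model has to be offloaded to system RAM, and the 3090 simply keeps more of it on the GPU.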

However, it's important to note that the NVIDIA 3080 Ti 12GB can still handle smaller LLMs like Llama 3 8B quite effectively.

Let's look at the facts:

| GPU Model | Llama 3 Model | Token Speed (tokens/second) |
|---|---|---|
| NVIDIA 3080 Ti 12GB | Llama 3 8B (Q4_K_M) | 106.71 |
| NVIDIA 3090 24GB | Llama 3 8B (Q4_K_M) | 111.74 |
| NVIDIA 3090 24GB | Llama 3 8B (F16) | 46.51 |

Here's a breakdown:

- Q4_K_M (Quantization): This is a 4-bit quantization format that stores the model's weights at lower precision, shrinking the memory footprint at the cost of some accuracy. Because less data has to be read from memory for each token, generation is also faster. Both the 3080 Ti and the 3090 handle Llama 3 8B comfortably in this format.

- F16 (Half Precision): Here the weights are kept as 16-bit floating-point numbers with no quantization. This preserves more accuracy, but the 8B model's weights then need about 16GB (8 billion parameters at 2 bytes each). That fits in the 3090's 24GB but not in the 3080 Ti's 12GB, which is most likely why only the 3090 has an F16 result. It is also why the F16 run is slower (46.51 tokens/second) than the quantized one: there is far more data to move per token.

Practical considerations:

- Model Size: If you are working with smaller models like Llama 3 8B, the NVIDIA 3080 Ti 12GB can be a great option. For larger models, the NVIDIA 3090 24GB gives you much more room. Llama 3 70B still exceeds 24GB even at 4-bit quantization, so expect partial offloading to system RAM on either card.

- Quantization: If you are willing to sacrifice some accuracy for speed, both GPUs perform well with Q4_K_M quantization. However, if you need higher precision, only the NVIDIA 3090 24GB has enough memory to run the 8B model at F16.
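To make the memory argument concrete, here is a rough back-of-the-envelope sketch in Python. It estimates weight size as parameters times bits per weight divided by 8. The 4.5 bits-per-weight figure for a 4-bit format, the 1.5 GiB headroom, and the function names are assumptions chosen for illustration. Real usage also depends on context length and the inference runtime.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Assumptions (not from the benchmark): ~4.5 bits per weight for a 4-bit
# format, and 1.5 GiB of headroom for the KV cache and runtime overhead.

GIB = 1024 ** 3


def weight_gib(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / GIB


def fits(params_billions: float, bits_per_weight: float,
         vram_gib: float, headroom_gib: float = 1.5) -> bool:
    """True if the weights plus the assumed headroom fit in VRAM."""
    return weight_gib(params_billions, bits_per_weight) + headroom_gib <= vram_gib


if __name__ == "__main__":
    models = {"Llama 3 8B": 8.0, "Llama 3 70B": 70.0}
    formats = {"F16": 16.0, "4-bit (assumed 4.5 bpw)": 4.5}
    gpus = {"3080 Ti": 12.0, "3090": 24.0}

    for model, params in models.items():
        for fmt, bits in formats.items():
            size = weight_gib(params, bits)
            verdict = ", ".join(
                f"{gpu}: {'fits' if fits(params, bits, vram) else 'too large'}"
                for gpu, vram in gpus.items()
            )
            print(f"{model} {fmt}: ~{size:.1f} GiB ({verdict})")
```

Under these assumptions, the 8B model at F16 lands at around 15 GiB, which explains why it needs the 3090, while the 70B model is too large for either card without offloading.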

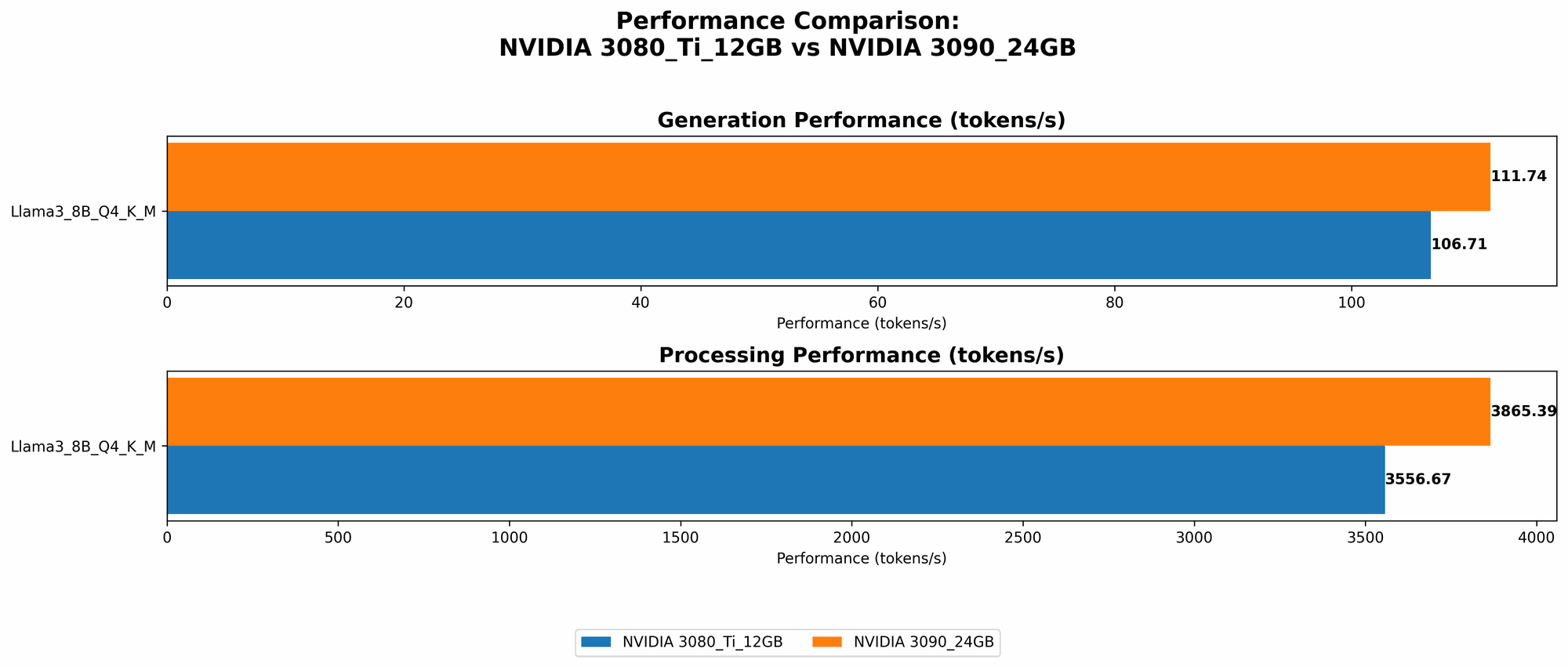

2. Performance Comparison: How fast can these GPUs run LLMs?

The NVIDIA 3090 24GB generally outperforms the NVIDIA 3080 Ti 12GB, but it's not a landslide victory. The difference in speed is not always significant, and the NVIDIA 3080 Ti 12GB can still be a great value proposition for many use cases.

Performance analysis:

| GPU Model | Llama 3 Model | Token Speed (tokens/second) |
|---|---|---|
| NVIDIA 3080 Ti 12GB | Llama 3 8B (Q4_K_M) | 106.71 |
| NVIDIA 3090 24GB | Llama 3 8B (Q4_K_M) | 111.74 |

This data indicates that the NVIDIA 3090 24GB has a slightly faster token speed for the Llama 3 8B model with Q4_K_M quantization. The difference, about 5 tokens per second (roughly 4.7%), is small for a single short reply, but it can add up over long responses or many requests.
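To see what that gap means in practice, the following sketch converts the two benchmark figures into a relative speedup and an estimated generation time. The 1,000-token response length and the function names are assumptions for illustration, and the estimate ignores prompt processing time.

```python
# Benchmark figures from the table above (tokens per second, Llama 3 8B Q4_K_M).
SPEEDS = {
    "NVIDIA 3080 Ti 12GB": 106.71,
    "NVIDIA 3090 24GB": 111.74,
}


def relative_speedup_pct(fast: float, slow: float) -> float:
    """How much faster `fast` is than `slow`, in percent."""
    return (fast / slow - 1) * 100


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimated time to generate `num_tokens` at a steady token rate."""
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    response_tokens = 1000  # assumed response length
    for gpu, speed in SPEEDS.items():
        seconds = generation_seconds(response_tokens, speed)
        print(f"{gpu}: {seconds:.2f} s for {response_tokens} tokens")

    gain = relative_speedup_pct(SPEEDS["NVIDIA 3090 24GB"],
                                SPEEDS["NVIDIA 3080 Ti 12GB"])
    print(f"3090 advantage: {gain:.1f}%")
```

For a 1,000-token response the two cards finish less than half a second apart, which is why the 3080 Ti remains competitive whenever the model fits in its memory.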

Practical considerations:

- Compute-intensive tasks: For tasks that require the most processing power, the NVIDIA 3090 24GB is the faster card, although on this benchmark its margin is under 5%.

- Budget: The NVIDIA 3080 Ti 12GB offers excellent performance at a lower price point, making it a more budget-friendly option, especially for less computationally demanding use cases.

3. Quantization Capabilities & Model Optimization: The Art of Reducing Memory Footprint

Quantization is a crucial technique for reducing the memory footprint of LLMs, allowing you to run larger models on less powerful hardware. Both the NVIDIA 3080 Ti 12GB and 3090 24GB support various quantization techniques.

Here's a breakdown:

- Q4_K_M (Quantization): Both GPUs handle this quantization approach effectively. The 3080 Ti 12GB can run the Llama 3 8B model using Q4_K_M quantization, while the 3090 24GB has room for larger models with this technique.

- F16 (Half Precision): Both GPUs support 16-bit floating-point arithmetic in hardware, so the difference here is memory rather than capability. The 3090's 24GB can hold Llama 3 8B at F16, trading speed for accuracy, while the 3080 Ti's 12GB cannot.

Practical considerations:

- Accuracy vs. Speed: When using quantization, it's important to weigh the trade-offs between accuracy and speed. For example, on the 3090, Q4_K_M runs Llama 3 8B at 111.74 tokens/second versus 46.51 for F16, but the lower precision might compromise accuracy.

- Model Optimization: Both GPUs can benefit from model optimization techniques. For example, pruning and quantization can significantly reduce the size of the model, enabling smoother operation on both GPUs.
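The idea behind quantization can be shown with a toy example. The sketch below is a simplified symmetric 4-bit block quantizer written for illustration only. It is not the actual Q4_K_M scheme, which is more elaborate, and the function names and sample weights are made up.

```python
# Toy symmetric block quantization. NOT the real Q4_K_M format:
# just an illustration of trading precision for memory.

def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map a block of floats to small signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    block = [0.42, -0.17, 0.03, -0.61, 0.25, 0.0, -0.08, 0.55]  # made-up weights
    scale, quants = quantize_block(block)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("quantized integers:", quants)
    print(f"shared scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each weight now needs only 4 bits plus a share of the per-block scale, instead of 16 bits. The price is a rounding error of at most half the scale per weight, which is the accuracy trade-off described above.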

4. GPU Architecture & CUDA Cores: The Power Under the Hood

The two cards are built on the same Ampere GA102 chip. The NVIDIA 3090 24GB simply has a slightly higher count of CUDA cores than the NVIDIA 3080 Ti 12GB.

Here's a breakdown:

| GPU Model | CUDA Cores | Architecture |
|---|---|---|
| NVIDIA 3080 Ti 12GB | 10240 | Ampere (GA102) |
| NVIDIA 3090 24GB | 10496 | Ampere (GA102) |

The NVIDIA 3090 24GB has 256 more CUDA cores (about 2.5% more), the processing units that handle parallel computation, which gives it a slight performance edge. Both GPUs are based on the Ampere architecture, which is renowned for its performance and efficiency.

Practical considerations:

- Compute-intensive tasks: The NVIDIA 3090 24GB's additional CUDA cores give it a small advantage in compute-intensive tasks, such as training, although its larger memory is usually the bigger benefit there.

- Software and Driver Compatibility: Both GPUs benefit from NVIDIA's latest software and driver updates, ensuring optimal performance and compatibility with LLMs.
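As a quick sanity check, the sketch below compares the 3090's core-count advantage with its measured token-speed advantage from the Llama 3 8B Q4_K_M benchmark. The helper name is hypothetical, and the comparison is only an observation: other factors, such as memory speed and clocks, also affect inference and are not modeled here.

```python
# Core counts and token speeds as quoted in this article.
CUDA_CORES = {"3080 Ti": 10240, "3090": 10496}
TOKENS_PER_SECOND = {"3080 Ti": 106.71, "3090": 111.74}  # Llama 3 8B Q4_K_M


def pct_more(a: float, b: float) -> float:
    """How much larger `a` is than `b`, in percent."""
    return (a / b - 1) * 100


if __name__ == "__main__":
    core_gain = pct_more(CUDA_CORES["3090"], CUDA_CORES["3080 Ti"])
    speed_gain = pct_more(TOKENS_PER_SECOND["3090"], TOKENS_PER_SECOND["3080 Ti"])
    print(f"Extra CUDA cores: {core_gain:.1f}%")
    print(f"Extra token speed: {speed_gain:.1f}%")
```

Both numbers are in the low single digits, which fits the picture of two closely related cards whose real difference is memory capacity.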

5. Power Consumption & Cooling: The Heat & Efficiency Factor

Both cards are power-hungry. Despite the NVIDIA 3090 24GB's larger memory and slightly higher core count, the two share the same rated power draw, so both generate substantial heat and need a robust cooling system.

Here's a breakdown:

| GPU Model | Power Consumption (TDP) |
|---|---|
| NVIDIA 3080 Ti 12GB | 350W |
| NVIDIA 3090 24GB | 350W |

While both GPUs have the same TDP (Thermal Design Power), the NVIDIA 3090 24GB may run hotter in practice when it is given heavier workloads, such as larger models that keep more of its 24GB of memory busy.

Practical considerations:

- Cooling Solution: Consider investing in a high-quality cooling solution for the NVIDIA 3090 24GB if you plan to run it for extended periods, especially for demanding tasks.

- Power Supply: Both cards are rated at 350W, so they place similar demands on the power supply. Make sure yours has enough headroom for the GPU plus the rest of the system.
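Since both cards share the same TDP, efficiency comes down to how many tokens each produces per watt. The sketch below estimates energy per token under the simplifying assumption that each card draws its full 350W while generating. Actual draw during inference may well be lower, so treat the result as an upper bound. The function name is hypothetical.

```python
# Assumption: each card draws its full rated TDP during generation.
TDP_WATTS = 350
TOKENS_PER_SECOND = {
    "NVIDIA 3080 Ti 12GB": 106.71,  # Llama 3 8B Q4_K_M
    "NVIDIA 3090 24GB": 111.74,     # Llama 3 8B Q4_K_M
}


def joules_per_token(watts: float, tokens_per_second: float) -> float:
    """Energy per generated token: watts are joules per second."""
    return watts / tokens_per_second


if __name__ == "__main__":
    for gpu, speed in TOKENS_PER_SECOND.items():
        energy = joules_per_token(TDP_WATTS, speed)
        print(f"{gpu}: at most ~{energy:.2f} J per token")
```

At equal power, the slightly faster 3090 comes out slightly more efficient per token, but the gap is as small as the speed difference itself.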

Summary & Recommendations

Choosing between the NVIDIA 3080 Ti 12GB and NVIDIA 3090 24GB for running LLMs depends on your specific needs and budget.

Here's a quick comparison:

| Feature | NVIDIA 3080 Ti 12GB | NVIDIA 3090 24GB |
|---|---|---|
| Memory Capacity | 12GB | 24GB |
| Performance | Excellent for smaller models | Slightly faster, with more room for larger models |
| Llama 3 8B formats that fit | Q4_K_M | Q4_K_M and F16 |
| GPU Architecture | Ampere | Ampere |
| CUDA Cores | 10240 | 10496 |
| Power Consumption (TDP) | 350W | 350W |

Recommendations:

- Smaller models (Llama 3 8B): The NVIDIA 3080 Ti 12GB can be a great option, offering excellent performance at a lower price point.

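- Larger models or higher precision: Opt for the NVIDIA 3090 24GB for its superior memory capacity and slightly faster processing speed. Keep in mind that Llama 3 70B exceeds 24GB even at 4-bit quantization, so it will still need partial offloading to system RAM.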

- Budget-conscious users: The NVIDIA 3080 Ti 12GB offers excellent value for money, especially if you're working with smaller models.

- Maximum performance: If you require the highest possible performance, the NVIDIA 3090 24GB is your best choice.

FAQ

What is Quantization?

Quantization is a technique used in AI that reduces the precision of the numbers in a neural network, which in turn reduces the memory needed to store the weights for that network. This means that a model can be run on hardware with less memory, like the NVIDIA 3080 Ti 12GB. Imagine you're storing a recipe for a cake in a cookbook. Quantization is like replacing the exact measurements of each ingredient with a simpler, rounded approximation – you might lose a bit of accuracy, but it makes the recipe easier to understand and use.

What is F16 (Half Precision)?

F16 (half precision) is a 16-bit floating-point format that uses half as many bits per number as standard 32-bit floats. Compared with 32-bit, it halves the memory needed and speeds up calculations at a small cost in precision. Compared with 4-bit quantized weights, it is larger and slower but more accurate. It's like using a ruler with fewer markings: you get a quick answer, but it might not be as precise.

What are CUDA cores?

CUDA cores are the processing units on a GPU that perform parallel calculations for tasks like running LLMs. All else being equal, the more CUDA cores a GPU has, the faster it can complete these calculations. Think of them as a team of workers; the more workers you have, the faster you can build a house.

Which GPU is best for training LLMs?

Both GPUs can be used for training, but the NVIDIA 3090 24GB is the better choice thanks to its higher memory capacity and slightly faster processing speed.

Can I use a CPU to run LLMs?

Yes, but it will be significantly slower than using a GPU. CPUs are not optimized for the massive parallel calculations required by large language models.
