5 Key Factors to Consider When Choosing Between NVIDIA 3080 10GB and NVIDIA 4070 Ti 12GB for AI

Introduction

The world of Large Language Models (LLMs) is exploding, with new models being released at a rapid pace. These models can do amazing things, from generating creative text to translating languages to writing code. But running LLMs requires significant computational power, and choosing the right hardware can be a challenge.

This article dives deep into the performance of two popular graphics cards, the NVIDIA 3080 10GB and the NVIDIA 4070 Ti 12GB, for running LLMs. We'll explore how these GPUs handle different LLMs, look at their strengths and weaknesses, and provide recommendations to help you make the right choice for your AI projects.

Performance Analysis: Unleashing the Power of LLMs with NVIDIA 3080 and 4070 Ti

NVIDIA 3080 10GB vs NVIDIA 4070 Ti 12GB: A Head-to-Head Comparison

Let's break down the performance of these two GPU giants when tasked with running different LLM models. We'll focus on the Llama 3 model, a popular open-source choice, to gain a better understanding of their capabilities.

Note: This comparison focuses on specific models and configurations. It's important to consider your own needs and specific LLM models when making a decision.

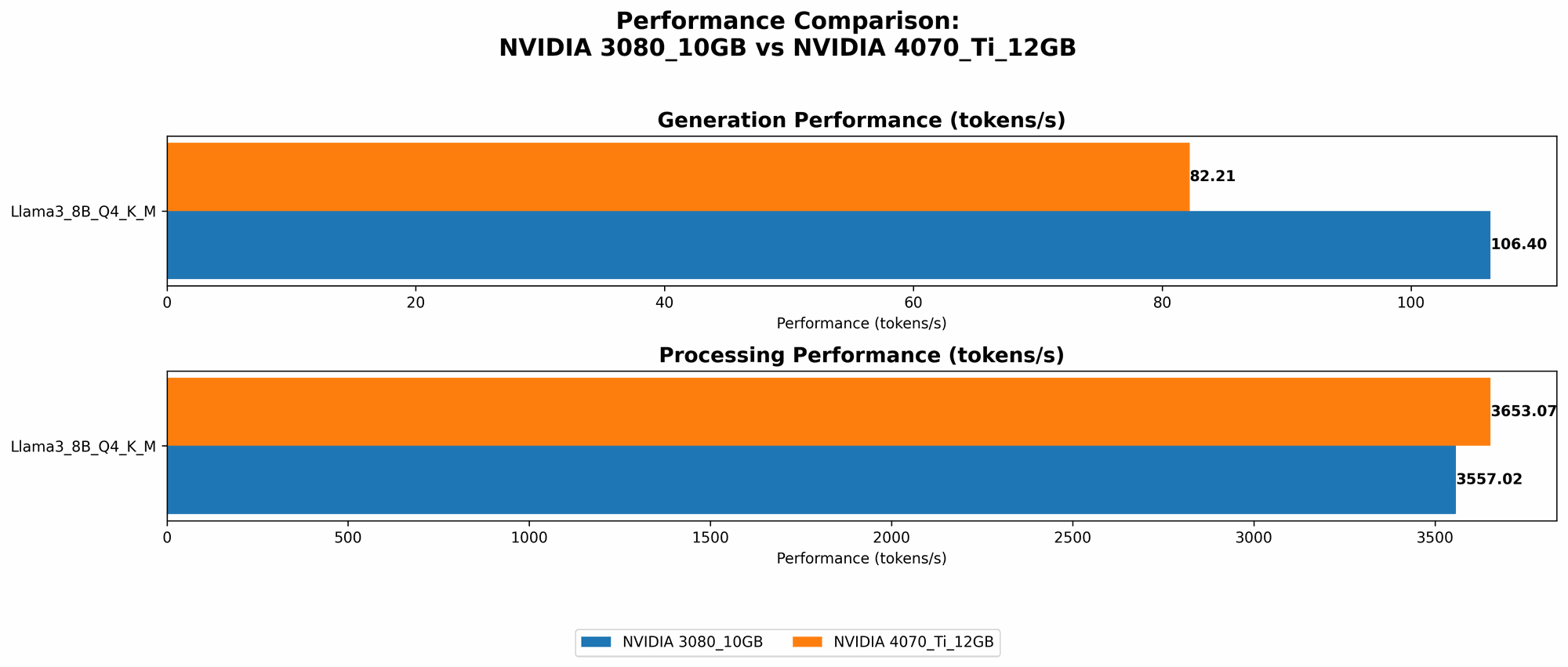

Llama 3 8B Model: A Common Ground

The 8B Llama 3 model is a good starting point to see how these GPUs stack up. Both the 3080 and 4070 Ti handle this model, but with some notable differences.

Token Generation: The 3080 10GB pulls ahead here, generating about 106.4 tokens per second with Q4 quantization (a form of lossy compression for model weights). The 4070 Ti trails at 82.21 tokens per second, roughly 23% slower.

Token Processing: This is where the 4070 Ti comes out ahead, though only narrowly. It processes 3653.07 tokens per second compared to the 3080's 3557.02 tokens per second, a difference of under 3%.

So, what does this mean for you?

The 3080 has the upper hand in generating text faster. If your primary need is to quickly get output from the Llama 3 8B model, the 3080 might be the better choice.

The 4070 Ti is noticeably slower at generating tokens but slightly faster at processing them. That could make it a reasonable option for applications dominated by long inputs and short replies, although a gap of under 3% is unlikely to be noticeable in practice.
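To see how the two throughput figures combine, here is a rough sketch that estimates the time for one request as processing time plus generation time. The throughput numbers are the ones quoted above; the function name `estimate_latency` and the example workload (1,000 tokens in, 250 tokens out) are hypothetical, and the model ignores load time, batching, and sampling overhead.

```python
# Throughput figures quoted in this article for Llama 3 8B at Q4 (tokens/second).
BENCHMARKS = {
    "3080 10GB": {"processing": 3557.02, "generation": 106.4},
    "4070 Ti 12GB": {"processing": 3653.07, "generation": 82.21},
}


def estimate_latency(gpu: str, input_tokens: int, output_tokens: int) -> float:
    """Rough seconds per request: time to process the input plus time to generate the output."""
    rates = BENCHMARKS[gpu]
    return input_tokens / rates["processing"] + output_tokens / rates["generation"]


if __name__ == "__main__":
    # Hypothetical workload: 1,000 input tokens and a 250-token reply.
    for gpu in BENCHMARKS:
        print(f"{gpu}: {estimate_latency(gpu, 1000, 250):.2f} s")
```

For this example workload the estimate is about 2.6 seconds on the 3080 and about 3.3 seconds on the 4070 Ti, because generation time dominates unless the input is very long relative to the reply.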

Larger Models: The Limitations & Challenges

Currently, there's no available performance data for either GPU when running the Llama 3 70B model. The most likely reason is that a model of this size does not fit in their VRAM.

Why is this?

Large LLMs (70B or higher) demand a considerable amount of memory (VRAM) just to hold their weights. Even at 4-bit quantization, 70 billion parameters take roughly 35GB, far more than the 10GB on the 3080 or the 12GB on the 4070 Ti. The 4070 Ti's larger VRAM gives it a slight edge with mid-sized models, but it does not change the picture for 70B.

Think of it this way: running a 70B LLM on one of these cards is like trying to pack a full shelf of books into a tiny backpack. It simply won't fit!

This highlights the importance of considering the memory requirements of your specific LLM model when choosing a GPU.
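The memory argument can be checked with simple arithmetic: the weights need roughly (number of parameters) x (bits per weight) / 8 bytes. The sketch below applies that to a 70B model; the helper name `weight_footprint_gb` is hypothetical, and the estimate covers weights only. Real quantized formats store a little more than their nominal bit width, and inference needs extra memory on top.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    vram_gb = {"3080 10GB": 10, "4070 Ti 12GB": 12}
    for bits in (16, 8, 4):
        size = weight_footprint_gb(70, bits)
        # List the cards whose VRAM could hold the weights at this precision.
        fitting = [gpu for gpu, capacity in vram_gb.items() if size <= capacity]
        print(f"70B at {bits}-bit: ~{size:.0f} GB, fits on: {fitting or 'neither card'}")
```

Under this estimate a 70B model exceeds both cards at every precision listed, while an 8B model at 4-bit needs only about 4 GB for its weights.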

Key Factors to Consider When Choosing Between NVIDIA 3080 and 4070 Ti

1. Memory Requirements: The Weight of Your LLM

Larger LLMs demand more memory (VRAM) to store their parameters and internal representations. The 3080 10GB and the 4070 Ti 12GB are comfortable with quantized 8B models but run out of room quickly as model size grows, making them unsuitable for tasks that require much larger models.

Think carefully:

- Model size: If you exclusively work with 8B models or smaller, either GPU can be a good fit. However, if you plan to explore larger LLMs (30B or higher), you'll likely need a GPU with at least 24GB of VRAM, and considerably more for 70B-class models.

- Quantization: Quantization, a technique that compresses model weights, can help reduce memory requirements. The 3080 and 4070 Ti can run 8B models effectively using quantization, allowing you to experiment with smaller models even with their limited VRAM. But don't expect them to handle large models.
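As a rough way to combine model size, quantization, and context length, the sketch below adds up the weights and the key/value cache and compares the total against a card's VRAM. Everything architectural here is an assumption rather than something stated in this article: the layer count, KV-head count, and head dimension are the commonly published values for Llama 3 8B, the cache is assumed to be 16-bit, and the 1 GiB `reserve_gib` for runtime overhead is a hypothetical placeholder.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    params_billion: float
    n_layers: int
    n_kv_heads: int
    head_dim: int


# Assumed shape for Llama 3 8B (not taken from this article).
LLAMA3_8B = ModelShape(params_billion=8.0, n_layers=32, n_kv_heads=8, head_dim=128)


def weights_gib(shape: ModelShape, bits_per_weight: float) -> float:
    """Size of the weights alone in GiB."""
    return shape.params_billion * 1e9 * bits_per_weight / 8 / 2**30


def kv_cache_gib(shape: ModelShape, context_tokens: int, bytes_per_value: int = 2) -> float:
    """Size of the key/value cache in GiB for a given context length."""
    # Keys and values (the factor of 2) are stored for every layer and KV head.
    per_token = 2 * shape.n_layers * shape.n_kv_heads * shape.head_dim * bytes_per_value
    return per_token * context_tokens / 2**30


def fits(shape: ModelShape, vram_gib: float, bits_per_weight: float,
         context_tokens: int, reserve_gib: float = 1.0) -> bool:
    """True if weights + KV cache + a reserved overhead fit in the given VRAM."""
    needed = weights_gib(shape, bits_per_weight) + kv_cache_gib(shape, context_tokens) + reserve_gib
    return needed <= vram_gib


if __name__ == "__main__":
    for gpu, vram in (("3080 10GB", 10), ("4070 Ti 12GB", 12)):
        for bits in (4, 8, 16):
            print(gpu, f"{bits}-bit, 8192-token context:", fits(LLAMA3_8B, vram, bits, 8192))
```

With these assumptions an 8B model fits on both cards at 4-bit and 8-bit with an 8,192-token context, but not at 16-bit, which is why quantization matters so much on 10GB and 12GB cards.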

2. Performance: Speed and Efficiency

Both the 3080 and 4070 Ti offer impressive performance for running smaller LLMs like the 8B Llama 3 model.

Key considerations:

- Token generation: The 3080 holds an advantage in generating tokens. If you prioritize fast response generation, the 3080 might be a better choice for tasks involving smaller LLMs.

- Token processing: The 4070 Ti demonstrates a slight advantage in token processing. For tasks with long inputs and short outputs, the 4070 Ti could be marginally more responsive.

3. Price: Balancing Performance and Cost

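The 3080 and 4070 Ti are both powerful GPUs with competitive pricing. However, the 4070 Ti comes at a slightly higher price point, and in the Llama 3 8B results above its performance advantage is limited to slightly faster token processing, alongside its extra 2GB of VRAM.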

Think about it:

- Consider the scale of your work: If you're working on small or medium-sized projects, the cost difference between these two GPUs might not be a significant factor. However, if you're running large-scale projects, the 4070 Ti's performance might justify its higher cost.

- Long-term investment: Consider your future needs. If you anticipate scaling your LLM projects, the 4070 Ti might be a better long-term investment. Although it's more expensive, its extra 2GB of VRAM leaves a little more headroom for larger models, and it may have better resale value down the line. Keep in mind that VRAM is fixed on the card and cannot be upgraded later.

4. Power Consumption: Don't Overheat Your System

LLMs are computationally intensive, requiring considerable power. Both the 3080 and 4070 Ti have high power consumption levels.

Considerations:

- Cooling: Ensure you have a robust cooling system for your computer. Proper cooling helps prevent overheating, which can lead to performance degradation.

- Power supply: Check your power supply's wattage to ensure it can handle the combined power draw of your GPU and other components.

- Energy efficiency: The 4070 Ti has a lower rated board power than the 3080 (285W versus 320W), but it still consumes significant power. If you're conscious of energy consumption, the 4070 Ti is worth considering, though whether it uses less energy per token depends on the workload, since the 3080 generates tokens faster in the benchmark above.
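One way to put a number on energy efficiency is tokens per joule: throughput divided by power draw. The sketch below uses the throughput figures from this article and, as an assumption, each card's rated board power (320 W for the 3080 10GB, 285 W for the 4070 Ti) as a stand-in for actual draw. Real draw during inference is often lower than the rated figure and should be measured, for example with `nvidia-smi`.

```python
# Throughput from this article (Llama 3 8B, Q4), in tokens/second.
GENERATION_TPS = {"3080 10GB": 106.4, "4070 Ti 12GB": 82.21}
PROCESSING_TPS = {"3080 10GB": 3557.02, "4070 Ti 12GB": 3653.07}

# Assumption: rated board power in watts, used in place of measured draw.
BOARD_POWER_W = {"3080 10GB": 320, "4070 Ti 12GB": 285}


def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Tokens handled per joule of energy (1 watt = 1 joule per second)."""
    return tokens_per_second / watts


if __name__ == "__main__":
    for gpu, watts in BOARD_POWER_W.items():
        gen = tokens_per_joule(GENERATION_TPS[gpu], watts)
        proc = tokens_per_joule(PROCESSING_TPS[gpu], watts)
        print(f"{gpu}: generation {gen:.3f} tok/J, processing {proc:.2f} tok/J")
```

Under these assumptions the picture is mixed: the 4070 Ti comes out ahead for token processing, while the 3080 comes out ahead for token generation. Measured power draw on your own workload would give a more reliable answer.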

5. Availability: Navigating the Tech Landscape

The availability of GPUs can be affected by factors like market demand and supply chain issues. Keep an eye on the availability of both the 3080 and 4070 Ti before making a decision.

Tips:

- Check with retailers: Contact local retailers or online stores to inquire about current stock and pricing.

- Stay updated: Keep an eye on tech news and forums for updates on availability and potential price fluctuations.

Practical Recommendations: Choosing the Right GPU for Your Needs

Here's a practical guide to help you choose the right option for your LLM projects:

NVIDIA 3080 10GB:

- Ideal for: Developers working primarily with smaller LLMs (8B or less), prioritizing speed in token generation, and who are budget-conscious.

- Use cases: Text generation, smaller-scale chatbot development, and experimentation with different LLM models.

NVIDIA 4070 Ti 12GB:

- Ideal for: Developers who want extra VRAM headroom for somewhat larger quantized LLMs (up to about 15B), who prioritize token processing, and who are willing to pay more for the newer GPU.

- Use cases: Chatbots with complex conversation flows, real-time language translation, and image and code generation.

Frequently Asked Questions (FAQs)

1. What are the key differences between the NVIDIA 3080 10GB and the NVIDIA 4070 Ti 12GB? The 4070 Ti has 2GB more VRAM and is slightly faster at token processing, while the 3080 generated tokens faster in the Llama 3 8B benchmark. The 4070 Ti also comes with a slightly higher price. Both struggle with larger models (70B or higher) due to their limited VRAM.

2. Which GPU is better for running LLMs? There's no single “best” GPU. The ideal choice depends on your specific needs, model size, and budget. The 3080 is a good option for smaller LLMs and budget-conscious developers, while the 4070 Ti offers more VRAM headroom for somewhat larger models and slightly faster token processing.

3. Can I use either GPU with a larger LLM like Llama 3 70B? It's unlikely that either GPU will be able to run a 70B LLM effectively due to their limited VRAM. Even with 4-bit quantization, a 70B model needs roughly 35GB for its weights alone, so you'll need far more VRAM than either card offers.

4. What's the importance of VRAM in running LLMs? VRAM is the graphics card's memory. It holds the model's parameters and intermediate calculations. For larger LLMs, you need more VRAM to store all the necessary information. Think of it as the memory in your computer—the more RAM you have, the smoother your applications run.

5. What is quantization? Quantization is a technique used to compress model weights, reducing memory requirements and potentially improving performance. It works by simplifying the representation of the weights, making them smaller and faster to process.
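To make the idea of quantization concrete, here is a toy sketch of symmetric round-to-nearest quantization to a 4-bit range. It is an illustration only, not the Q4 format behind the benchmark figures in this article: practical schemes typically quantize weights in small blocks with a scale per block. The function names and sample weights are hypothetical.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-7, 7] using one shared scale."""
    # The largest magnitude maps to +/-7; fall back to 1.0 if all weights are zero.
    scale = max(abs(w) for w in weights) / 7 or 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    original = [0.70, -0.30, 0.12, 0.00]
    codes, scale = quantize_4bit(original)
    restored = dequantize(codes, scale)
    for w, c, r in zip(original, codes, restored):
        print(f"{w:+.3f} -> code {c:+d} -> {r:+.3f}")
```

Each weight is stored as a small integer plus a shared scale, which is where the memory saving comes from. The restored values differ slightly from the originals, which is why quantization is described as lossy.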

Keywords

Large Language Models, LLM, AI, NVIDIA 3080, NVIDIA 4070 Ti, GPU, Token Generation, Token Processing, Memory Requirements, VRAM, Quantization, Performance, Price, Power Consumption, Availability, Llama 3.