5 Key Factors to Consider When Choosing Between NVIDIA 3080 10GB and NVIDIA 3090 24GB for AI

Introduction

The world of large language models (LLMs) is exploding, and everyone wants to run these powerful AI models locally. But choosing the right graphics card for your AI work can be tricky, particularly when your options include the NVIDIA GeForce RTX 3080 10GB and the NVIDIA GeForce RTX 3090 24GB. Both cards are powerhouses, but their differences can significantly affect your performance and budget.

This article will guide you through the process of deciding which card is best for you, considering the key factors that matter most for running LLMs: model size, precision, memory capacity, and price. Along the way, we'll delve into real-world performance data and help you understand the trade-offs you'll face with each card.

Let's dive in!

Comparison of NVIDIA 3080 10GB and 3090 24GB for LLM Models

Understanding the Basics: What are LLMs and Why Should You Care?

Imagine a super-smart computer program that can understand and generate human-like text. That's basically what an LLM is! These models are trained on massive datasets of text, learning patterns and relationships within language. This allows them to do incredible things like:

- Write different kinds of creative content: From poems to code, these models can generate text in various styles.

- Summarize large amounts of text: No more reading through endless paragraphs!

- Translate languages: Imagine conversing effortlessly with someone who speaks a different language.

- Answer questions based on existing information: Think of it like having a personal digital assistant with a vast knowledge base.

Larger models trained on more data tend to be more capable. But running them locally requires a GPU with enough memory and compute, and that's where the RTX 3080 10GB and RTX 3090 24GB come in.

Memory: The Bigger, the Better (But Not Always)

The first major difference between the RTX 3080 10GB and RTX 3090 24GB is the amount of video memory (VRAM). The 3090 boasts a whopping 24GB of GDDR6X VRAM, 2.4 times the 3080's 10GB. More memory means you can load bigger models, or the same model at higher precision, without running out of VRAM.

Think of it like this: if you're building a house, you need enough lumber and materials to finish the project. The larger the house, the more materials you need. Similarly, running a large LLM requires sufficient VRAM to store the model's parameters and data, allowing for smooth processing.

Here's a breakdown of the memory difference in practice:

- Smaller LLMs (e.g., Llama 7B): With 4-bit quantization, both the 3080 and 3090 can easily handle these models, as the memory requirements are relatively low.

- Larger models or higher precision (e.g., a 13B model, or Llama 3 8B in F16): The 3090's 24GB of VRAM becomes a significant advantage here. The 3080, with its limited 10GB of VRAM, cannot hold these models entirely in VRAM. The real heavyweights, such as Llama 70B, exceed the VRAM of both cards even at 4 bits, which is why the benchmark table below shows N/A for 70B on both.
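To make the memory breakdown concrete, here is a minimal back-of-the-envelope sketch in Python. It estimates the memory needed for the weights alone as parameters times bits per weight. The function names, the 1.5GB overhead allowance, and the figure of roughly 4.5 bits per weight for a 4-bit format are illustrative assumptions, not measured values; real usage also depends on context length and the runtime you use.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gb: float, overhead_gb: float = 1.5) -> bool:
    """Rough check: weights plus an assumed allowance for cache and buffers."""
    return weight_memory_gb(params_billion, bits_per_weight) + overhead_gb <= vram_gb


if __name__ == "__main__":
    cards = {"RTX 3080 10GB": 10, "RTX 3090 24GB": 24}
    # (label, parameters in billions, assumed bits per weight)
    models = [("8B 4-bit", 8, 4.5), ("8B F16", 8, 16),
              ("70B 4-bit", 70, 4.5), ("70B F16", 70, 16)]
    for label, params, bits in models:
        need = weight_memory_gb(params, bits)
        verdict = {card: fits_in_vram(params, bits, vram) for card, vram in cards.items()}
        print(f"{label}: ~{need:.1f} GB of weights, fits: {verdict}")
```

Under these assumptions, an 8B model in F16 needs about 16GB for its weights, which fits on the 3090 but not on the 3080, while a 70B model needs roughly 39GB even at 4 bits and fits on neither card.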

Quantization: Shrinking Down to Fit (It's Like a Diet for Models)

Here comes a super-important concept, and it's called quantization. Think of it like a diet for LLMs, where we make the model smaller and faster without sacrificing too much accuracy! It essentially involves reducing the size of the model's numbers (parameters) by using a smaller data type.

Let's say you have a model whose parameters are stored as 32-bit numbers. With quantization, you can squeeze those numbers down to only 8 bits, making the model roughly a quarter of the size and faster to run. But remember, this reduction comes with a small trade-off in accuracy. Think of it like losing a few pounds of weight: you might lose some muscle but become leaner and faster.

The benchmarks in this article use two precision levels:

- Q4_K_M (sometimes written Q4/K/M): A 4-bit quantization format from llama.cpp. This is an aggressive level of quantization that greatly reduces memory requirements and speeds up text generation, at the cost of a slight decrease in accuracy.

- F16: Half-precision (16-bit) floating-point numbers. Strictly speaking, this is not quantization but the higher-precision baseline: it preserves more accuracy and needs roughly three to four times the memory of a 4-bit model.
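The idea behind quantization can be sketched in a few lines of Python. The example below is a deliberately simplified symmetric scheme with one scale per block of weights; it is an illustration of the principle, not the actual Q4_K_M format, and the function names are hypothetical.

```python
def quantize_block(values, bits=4):
    """Map a block of floats to small signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                       # 7 when bits=4
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.12, -0.50, 0.33, 0.07]
    q, scale = quantize_block(weights)
    restored = dequantize_block(q, scale)
    for original, approx in zip(weights, restored):
        print(f"{original:+.3f} -> {approx:+.3f} (error {abs(original - approx):.3f})")
```

Each weight is stored as a 4-bit integer instead of a 16- or 32-bit float, and the small differences between the original and restored values are the accuracy cost the diet analogy refers to.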

Processing and Generation Speed: The Speed Demons of AI

Now, let's talk about the speed demons of AI: processing and generation! These two factors are crucial for a smooth LLM experience.

- Processing Speed: This refers to how quickly the GPU can read and process your input prompt, measured in tokens per second. It determines how long you wait before the first word of the answer appears, especially with long prompts.

- Generation Speed: This is the speed at which the GPU produces new text, one token at a time, also measured in tokens per second. It's critical for fast and responsive interactions with your LLM.
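To see how the two speeds combine, here is a small Python sketch that estimates the total time for one response. The function name and the numbers in the usage example are illustrative assumptions, not measurements from either card, and the model ignores overheads such as model loading.

```python
def response_time_seconds(prompt_tokens: int, output_tokens: int,
                          processing_tps: float, generation_tps: float) -> float:
    """Time to read the prompt plus time to generate the answer."""
    prompt_time = prompt_tokens / processing_tps      # prompt processing phase
    generation_time = output_tokens / generation_tps  # token-by-token generation
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical workload: a 2000-token prompt and a 500-token answer.
    total = response_time_seconds(2000, 500, processing_tps=4000, generation_tps=100)
    print(f"Estimated response time: {total:.1f} s")
```

With these example figures the prompt takes half a second and the answer takes five seconds, which shows why generation speed usually dominates the wait for chat-style use.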

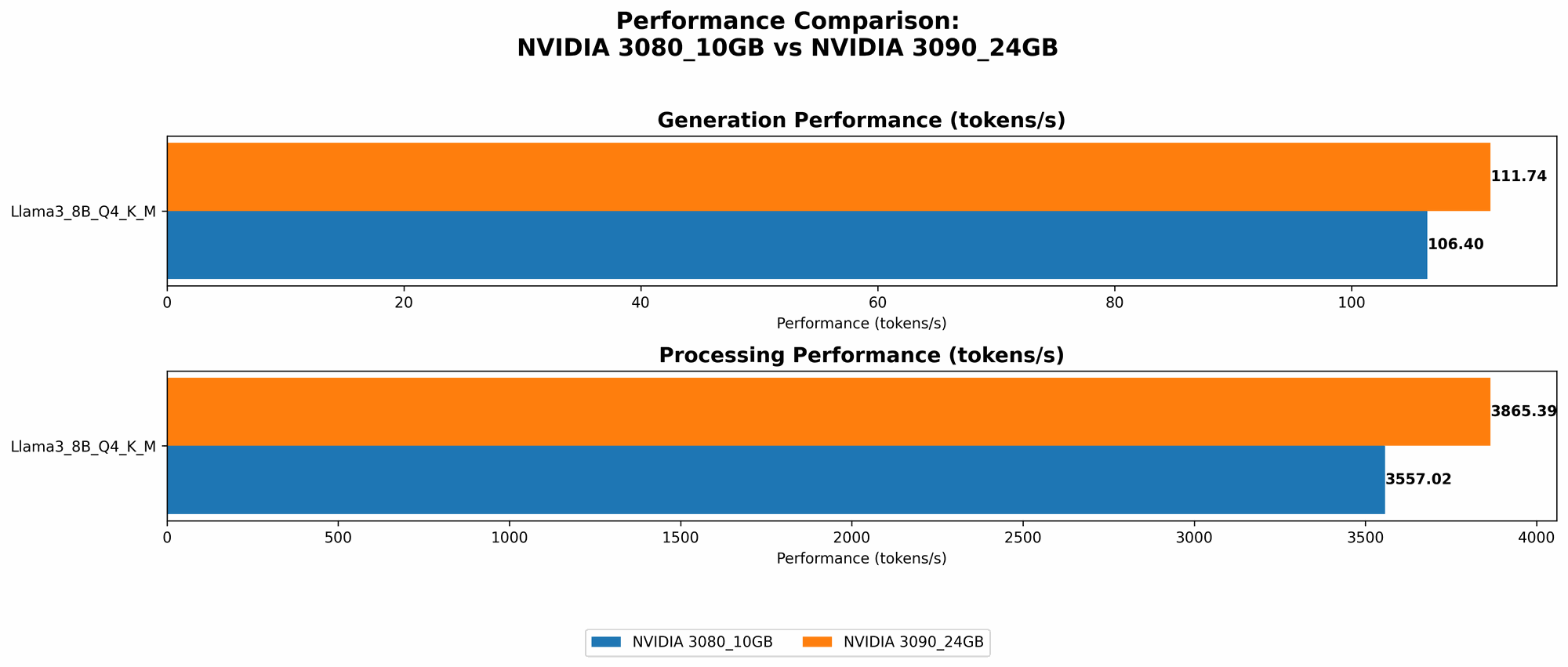

Performance Analysis: Putting the Numbers to Work

Now, let's dive into some real-world performance data to see how the RTX 3080 10GB and RTX 3090 24GB measure up in these critical areas.

| Benchmark (tokens/second) | NVIDIA 3080 10GB | NVIDIA 3090 24GB |
|---|---|---|
| Llama 3 8B Q4_K_M Generation | 106.4 | 111.74 |
| Llama 3 8B F16 Generation | N/A | 46.51 |
| Llama 3 8B Q4_K_M Processing | 3557.02 | 3865.39 |
| Llama 3 8B F16 Processing | N/A | 4239.64 |
| Llama 3 70B Q4_K_M Generation | N/A | N/A |
| Llama 3 70B F16 Generation | N/A | N/A |
| Llama 3 70B Q4_K_M Processing | N/A | N/A |
| Llama 3 70B F16 Processing | N/A | N/A |

Key Takeaways:

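- Llama 3 8B: With 4-bit quantization, the RTX 3090 has a modest edge over the RTX 3080: about 5% faster generation and about 9% faster processing. In F16, only the 3090 produced results. The 3080 shows N/A, most likely because the 8B weights at 16 bits take about 16GB, more than its 10GB of VRAM.

- Memory Limitations: The 3080 10GB is limited to small, quantized models, while the 3090's 24GB leaves room for higher precision and mid-sized models. Neither card has results for Llama 3 70B, which does not fit in 24GB even at 4 bits.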
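The relative differences can be worked out directly from the table above. This short Python sketch uses only the 4-bit Llama 3 8B rows, since those are the only rows where both cards have a result; the variable and function names are just for illustration.

```python
# Tokens/second from the table: (RTX 3080 10GB, RTX 3090 24GB)
results = {
    "Llama 3 8B 4-bit generation": (106.40, 111.74),
    "Llama 3 8B 4-bit processing": (3557.02, 3865.39),
}


def percent_faster(baseline: float, other: float) -> float:
    """How much faster `other` is than `baseline`, in percent."""
    return (other / baseline - 1) * 100


if __name__ == "__main__":
    for name, (rtx_3080, rtx_3090) in results.items():
        print(f"{name}: RTX 3090 is {percent_faster(rtx_3080, rtx_3090):.1f}% faster")
```

The gap on the rows both cards can run is in the single digits, so for small quantized models the decision rests more on memory than on speed.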

Practical Recommendations: Choosing the Right Weapon for the AI Battlefield

So, which card should you choose? It all comes down to your specific needs and budget.

- If you're working with smaller models (e.g., Llama 3 8B) and your budget is tight: The RTX 3080 10GB can be a solid choice, delivering decent performance at a lower price point.

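- If you want to run larger models or prioritize accuracy: The RTX 3090 24GB is the clear winner. It is somewhat faster and, more importantly, its extra memory lets you run Llama 3 8B in F16 and models too big for 10GB. Keep in mind that Llama 3 70B did not run on either card in these benchmarks.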

Price: The Ultimate Decision Maker

Let's face it, the price tag is often the deciding factor, especially for developers and geeks working with these powerful devices.

The RTX 3090 24GB typically comes with a higher price tag compared to the RTX 3080 10GB. So, it's essential to weigh the performance benefits against the added cost.

Beyond the Battlefield: Factors to Consider

Power Consumption: The Energy Heavyweight

While both cards are incredibly powerful, they also consume a significant amount of power. The RTX 3090 24GB has a higher power draw, potentially making it more expensive to run in the long run. Consider your energy bills and ensure you have a suitable power supply for the card.
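For a rough idea of running costs, the Python sketch below converts power draw into an electricity bill. The 320W and 350W figures are NVIDIA's reference board power ratings for the two cards and are used here as an upper-bound assumption, since actual draw during LLM inference is often lower. The daily usage and the price per kWh are hypothetical placeholders to replace with your own values.

```python
def energy_cost(watts: float, hours: float, price_per_kwh: float) -> float:
    """Electricity cost for running a device at `watts` for `hours`."""
    kilowatt_hours = watts / 1000 * hours
    return kilowatt_hours * price_per_kwh


if __name__ == "__main__":
    hours_per_year = 4 * 365   # assumed: 4 hours of full load per day
    price_per_kwh = 0.30       # assumed: placeholder electricity price
    cards = {"RTX 3080 10GB": 320, "RTX 3090 24GB": 350}  # reference board power, W
    for name, watts in cards.items():
        cost = energy_cost(watts, hours_per_year, price_per_kwh)
        print(f"{name}: about {cost:.2f} per year")
```

With these placeholder inputs the 30W gap works out to roughly 13 currency units per year, so for most home users the power supply requirement matters more than the difference in the bill.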

Noise: The Sound of AI

These cards can generate a noticeable amount of noise during operation, particularly when under load. If you're working in a quiet environment, noise levels might be something to factor into your decision. Look for quieter models or consider using a cooling system to manage noise levels.

FAQ

What are the different versions of Llama models?

Llama 2 was released in 7B, 13B, and 70B sizes, and Llama 3, which is used in the benchmarks here, in 8B and 70B. Each size has different memory requirements and performance characteristics.

What does "Q4_K_M" (sometimes written Q4/K/M) mean?

It is the name of a llama.cpp quantization format in which most of the model's parameters are stored as 4-bit numbers. This significantly reduces the model's size and memory requirements, which also makes text generation faster.

Are there any alternatives to NVIDIA GPUs?

While NVIDIA GPUs are currently the industry standard for AI workloads, other brands are emerging, including AMD and Intel.

What's the best way to choose the right GPU?

Consider your budget, the types of LLMs you want to run, and the performance you expect. Do your research, read reviews, and compare specifications.

What about the future of LLMs and GPUs?

Expect the field of LLMs to continue evolving rapidly, with larger, more complex models released frequently. This will drive demand for even more powerful GPUs, pushing the limits of hardware performance.

Keywords

NVIDIA, RTX 3080, RTX 3090, LLM, GPU, AI, Large Language Model, Token Generation, Processing Speed, Quantization, Memory, VRAM, Llama, 7B, 70B, Performance, Comparison, Price, Power Consumption, Noise.