5 Key Factors to Consider When Choosing Between Apple M3 Pro 150gb 14cores and NVIDIA A40 48GB for AI

Introduction

The world of artificial intelligence is booming, with large language models (LLMs) at the forefront. These powerful tools are revolutionizing industries and changing how we interact with technology. For developers and researchers, choosing the right hardware for running LLMs is crucial for achieving optimal performance and pushing the boundaries of AI innovation.

Two leading contenders in this hardware race are the Apple M3 Pro 150GB 14-core chip and the NVIDIA A40 48GB GPU. This article delves into the key factors to consider when choosing between these two powerhouses, shedding light on their strengths and weaknesses, and providing practical recommendations for different use cases.

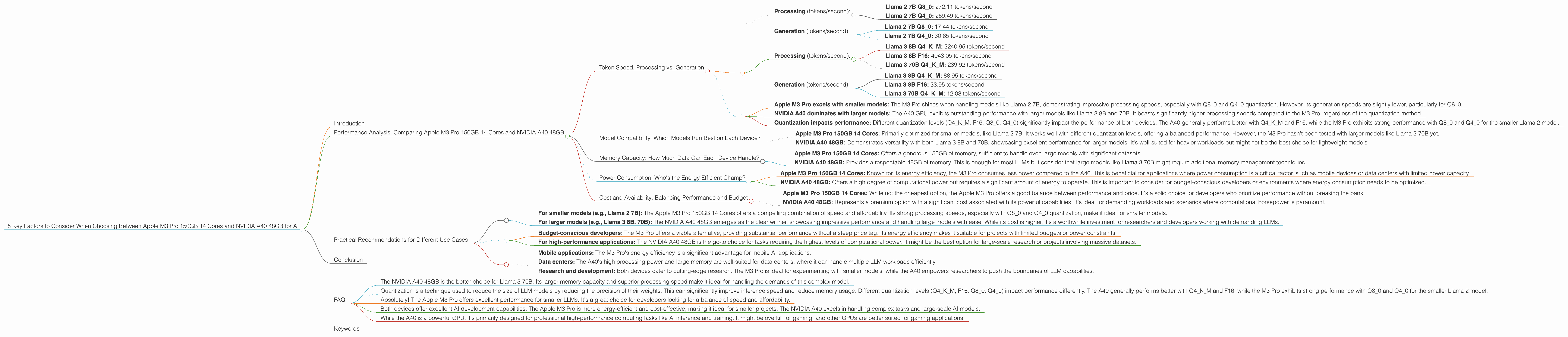

Performance Analysis: Comparing Apple M3 Pro 150GB 14 Cores and NVIDIA A40 48GB

Token Speed: Processing vs. Generation

The ability to process and generate tokens quickly is crucial for efficient LLM execution. Let's break down the performance of each device based on token speed:

Apple M3 Pro 150GB 14 Cores:

- Processing (tokens/second):

- Llama 2 7B Q80: 272.11 tokens/second

- Llama 2 7B Q40: 269.49 tokens/second

- Generation (tokens/second):

- Llama 2 7B Q80: 17.44 tokens/second

- Llama 2 7B Q40: 30.65 tokens/second

NVIDIA A40 48GB:

- Processing (tokens/second):

- Llama 3 8B Q4KM: 3240.95 tokens/second

- Llama 3 8B F16: 4043.05 tokens/second

- Llama 3 70B Q4KM: 239.92 tokens/second

- Generation (tokens/second):

- Llama 3 8B Q4KM: 88.95 tokens/second

- Llama 3 8B F16: 33.95 tokens/second

- Llama 3 70B Q4KM: 12.08 tokens/second

Key Observations:

- Apple M3 Pro excels with smaller models: The M3 Pro shines when handling models like Llama 2 7B, demonstrating impressive processing speeds, especially with Q80 and Q40 quantization. However, its generation speeds are slightly lower, particularly for Q8_0.

- NVIDIA A40 dominates with larger models: The A40 GPU exhibits outstanding performance with larger models like Llama 3 8B and 70B. It boasts significantly higher processing speeds compared to the M3 Pro, regardless of the quantization method.

- Quantization impacts performance: Different quantization levels (Q4KM, F16, Q80, Q40) significantly impact the performance of both devices. The A40 generally performs better with Q4KM and F16, while the M3 Pro exhibits strong performance with Q80 and Q40 for the smaller Llama 2 model.

Model Compatibility: Which Models Run Best on Each Device?

- Apple M3 Pro 150GB 14 Cores: Primarily optimized for smaller models, like Llama 2 7B. It works well with different quantization levels, offering a balanced performance. However, the M3 Pro hasn't been tested with larger models like Llama 3 70B yet.

- NVIDIA A40 48GB: Demonstrates versatility with both Llama 3 8B and 70B, showcasing excellent performance for larger models. It's well-suited for heavier workloads but might not be the best choice for lightweight models.

Memory Capacity: How Much Data Can Each Device Handle?

- Apple M3 Pro 150GB 14 Cores: Offers a generous 150GB of memory, sufficient to handle even large models with significant datasets.

- NVIDIA A40 48GB: Provides a respectable 48GB of memory. This is enough for most LLMs but consider that large models like Llama 3 70B might require additional memory management techniques.

Power Consumption: Who's the Energy Efficient Champ?

- Apple M3 Pro 150GB 14 Cores: Known for its energy efficiency, the M3 Pro consumes less power compared to the A40. This is beneficial for applications where power consumption is a critical factor, such as mobile devices or data centers with limited power capacity.

- NVIDIA A40 48GB: Offers a high degree of computational power but requires a significant amount of energy to operate. This is important to consider for budget-conscious developers or environments where energy consumption needs to be optimized.

Cost and Availability: Balancing Performance and Budget

- Apple M3 Pro 150GB 14 Cores: While not the cheapest option, the Apple M3 Pro offers a good balance between performance and price. It's a solid choice for developers who prioritize performance without breaking the bank.

- NVIDIA A40 48GB: Represents a premium option with a significant cost associated with its powerful capabilities. It's ideal for demanding workloads and scenarios where computational horsepower is paramount.

Practical Recommendations for Different Use Cases

Model Size and Complexity:

- For smaller models (e.g., Llama 2 7B): The Apple M3 Pro 150GB 14 Cores offers a compelling combination of speed and affordability. Its strong processing speeds, especially with Q80 and Q40 quantization, make it ideal for smaller models.

- For larger models (e.g., Llama 3 8B, 70B): The NVIDIA A40 48GB emerges as the clear winner, showcasing impressive performance and handling large models with ease. While its cost is higher, it's a worthwhile investment for researchers and developers working with demanding LLMs.

Budget and Power Consumption:

- Budget-conscious developers: The M3 Pro offers a viable alternative, providing substantial performance without a steep price tag. Its energy efficiency makes it suitable for projects with limited budgets or power constraints.

- For high-performance applications: The NVIDIA A40 48GB is the go-to choice for tasks requiring the highest levels of computational power. It might be the best option for large-scale research or projects involving massive datasets.

Specific Use Cases:

- Mobile applications: The M3 Pro's energy efficiency is a significant advantage for mobile AI applications.

- Data centers: The A40's high processing power and large memory are well-suited for data centers, where it can handle multiple LLM workloads efficiently.

- Research and development: Both devices cater to cutting-edge research. The M3 Pro is ideal for experimenting with smaller models, while the A40 empowers researchers to push the boundaries of LLM capabilities.

Conclusion

The choice between the Apple M3 Pro 150GB 14 Cores and NVIDIA A40 48GB depends heavily on your use case, budget, and power consumption requirements.

Apple M3 Pro 150GB 14 Cores: Offers a compelling balance of performance and affordability, making it a strong choice for working with smaller models. Its energy efficiency is ideal for mobile and budget-conscious applications.

NVIDIA A40 48GB: Represents a powerful option for demanding workloads and larger models. Its high computational power and substantial memory make it suitable for data centers, research, and high-performance computing.

Ultimately, the decision is yours. By carefully considering the factors discussed above, you can choose the perfect hardware to unleash the full potential of your LLM projects.

FAQ

Q: Which device is better for Llama 3 70B?

- The NVIDIA A40 48GB is the better choice for Llama 3 70B. Its larger memory capacity and superior processing speed make it ideal for handling the demands of this complex model.

Q: What is quantization and how does it affect these devices?

- Quantization is a technique used to reduce the size of LLM models by reducing the precision of their weights. This can significantly improve inference speed and reduce memory usage. Different quantization levels (Q4KM, F16, Q80, Q40) impact performance differently. The A40 generally performs better with Q4KM and F16, while the M3 Pro exhibits strong performance with Q80 and Q40 for the smaller Llama 2 model.

Q: Is the Apple M3 Pro suitable for running LLMs?

- Absolutely! The Apple M3 Pro offers excellent performance for smaller LLMs. It's a great choice for developers looking for a balance of speed and affordability.

Q: Which device is more efficient for AI development?

- Both devices offer excellent AI development capabilities. The Apple M3 Pro is more energy-efficient and cost-effective, making it ideal for smaller projects. The NVIDIA A40 excels in handling complex tasks and large-scale AI models.

Q: Is the NVIDIA A40 a good option for gaming?

- While the A40 is a powerful GPU, it's primarily designed for professional high-performance computing tasks like AI inference and training. It might be overkill for gaming, and other GPUs are better suited for gaming applications.

Keywords

Apple M3 Pro, NVIDIA A40, LLM, Large Language Model, AI, Machine Learning, Deep Learning, Quantization, Token Speed, Performance, Processing, Generation, Model Compatibility, Memory Capacity, Power Consumption, Cost, Availability, Llama 2, Llama 3, Use Cases.