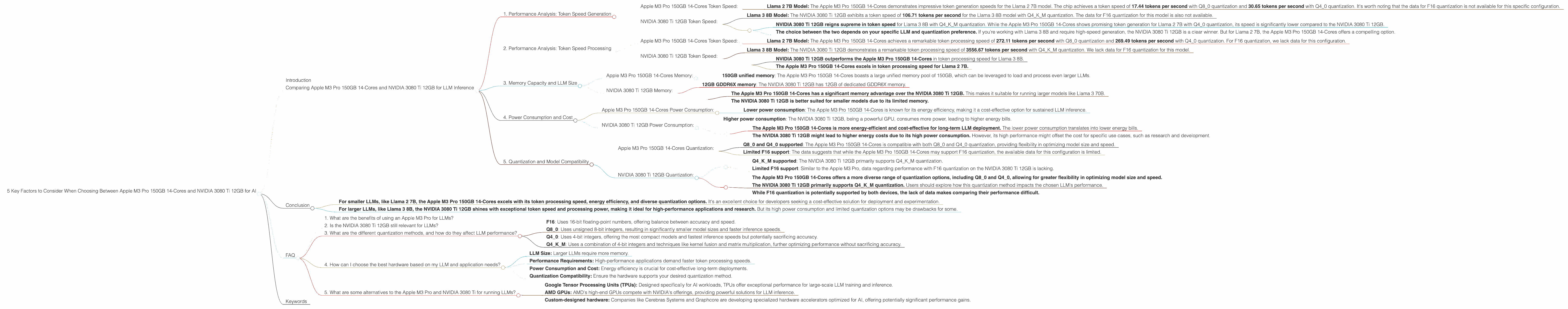

5 Key Factors to Consider When Choosing Between Apple M3 Pro 150GB 14-Cores and NVIDIA 3080 Ti 12GB for AI

Introduction

Large Language Models (LLMs) keep growing in capability, and they need significant computing power to run well, so hardware choice is a critical decision for developers. This article compares two popular choices for local LLM inference: the Apple M3 Pro 150GB 14-Cores chip and the NVIDIA 3080 Ti 12GB graphics card. In the Apple configuration's name, "150GB" denotes its 150 GB/s memory bandwidth and "14-Cores" its 14 GPU cores, while "12GB" on the NVIDIA card is its video memory capacity. By examining key performance metrics and weighing each device's strengths and weaknesses, we'll equip you with the knowledge to make an informed decision for your specific AI needs.

Imagine you're building a rocket to launch a satellite. You need a powerful engine to lift the payload into space. Similarly, running LLMs requires powerful hardware to process massive amounts of data and generate insightful responses.

This article will help you choose the right "engine" for your LLM "rocket" based on factors like model size, speed, and cost, providing practical recommendations for various use cases.

Comparing Apple M3 Pro 150GB 14-Cores and NVIDIA 3080 Ti 12GB for LLM Inference

1. Performance Analysis: Token Speed Generation

Token speed generation is a crucial metric for evaluating the performance of LLM devices. It measures how quickly a device can process and generate new tokens (words or sub-words) based on the given input. Higher token speeds translate into faster response times and smoother user experiences.

Apple M3 Pro 150GB 14-Cores Token Speed:

- Llama 2 7B Model: The Apple M3 Pro 150GB 14-Cores generates 17.44 tokens per second with Q8_0 quantization and 30.65 tokens per second with Q4_0 quantization. No F16 (half-precision) figures are available for this configuration.

NVIDIA 3080 Ti 12GB Token Speed:

- Llama 3 8B Model: The NVIDIA 3080 Ti 12GB generates 106.71 tokens per second for the Llama 3 8B model with Q4_K_M quantization. No F16 figures are available for this model either.

Key Takeaways:

- The NVIDIA 3080 Ti 12GB generates tokens far faster in these figures: 106.71 tokens per second for Llama 3 8B with Q4_K_M, about 3.5 times the 30.65 tokens per second the Apple M3 Pro 150GB 14-Cores reaches for Llama 2 7B with Q4_0. The two results come from different models and quantization formats, so treat the ratio as indicative rather than as a like-for-like benchmark.

- The choice between the two depends on your specific LLM and quantization preference. If you require high-speed generation and your model fits in 12GB, the NVIDIA 3080 Ti 12GB is a clear winner. The Apple M3 Pro 150GB 14-Cores is slower, but its Llama 2 7B speeds remain usable for interactive, single-user work.

2. Performance Analysis: Token Speed Processing

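Token speed processing (often called prompt processing) measures how quickly a device can ingest the input tokens before it starts generating a reply. It largely determines the delay before the first output token appears, which matters most for long prompts and documents.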

Apple M3 Pro 150GB 14-Cores Token Speed:

- Llama 2 7B Model: The Apple M3 Pro 150GB 14-Cores processes 272.11 tokens per second with Q8_0 quantization and 269.49 tokens per second with Q4_0 quantization. No F16 figures are available for this configuration.

NVIDIA 3080 Ti 12GB Token Speed:

- Llama 3 8B Model: The NVIDIA 3080 Ti 12GB processes 3556.67 tokens per second with Q4_K_M quantization. No F16 figures are available for this model.

Key Takeaways:

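- The NVIDIA 3080 Ti 12GB processes prompts roughly 13 times faster in these figures: 3556.67 tokens per second for Llama 3 8B versus about 270 tokens per second for Llama 2 7B on the Apple M3 Pro 150GB 14-Cores. As with generation, the models and quantization formats differ, so the ratio is indicative only.

- On the Apple M3 Pro 150GB 14-Cores, Q8_0 and Q4_0 process prompts at nearly the same rate (272.11 versus 269.49 tokens per second), so the choice of quantization affects generation speed far more than prompt processing speed.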
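To see how the two metrics combine, the sketch below estimates end-to-end response time as prompt processing time plus generation time, using the throughput figures quoted above. The function names, the 1000-token prompt, and the 250-token reply are illustrative assumptions, and the simple sum ignores model loading, sampling overhead, and any slowdown at long context lengths.

```python
# Rough latency model: time to ingest the prompt plus time to generate the reply.
# Throughput values are the tokens-per-second figures quoted in this article.
BENCHMARKS = {
    "M3 Pro, Llama 2 7B Q8_0": {"prompt_tps": 272.11, "generation_tps": 17.44},
    "M3 Pro, Llama 2 7B Q4_0": {"prompt_tps": 269.49, "generation_tps": 30.65},
    "3080 Ti, Llama 3 8B Q4_K_M": {"prompt_tps": 3556.67, "generation_tps": 106.71},
}


def response_time_seconds(prompt_tokens, output_tokens, prompt_tps, generation_tps):
    """Estimate latency in seconds for one request."""
    return prompt_tokens / prompt_tps + output_tokens / generation_tps


def estimate_all(prompt_tokens=1000, output_tokens=250):
    """Apply the estimate to every benchmark entry (token counts are assumptions)."""
    return {
        name: response_time_seconds(prompt_tokens, output_tokens, **rates)
        for name, rates in BENCHMARKS.items()
    }


if __name__ == "__main__":
    for name, seconds in estimate_all().items():
        print(f"{name}: {seconds:.1f} s")
```

Because the entries cover different models, the output is best read as a feel for scale rather than a head-to-head result.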

3. Memory Capacity and LLM Size

Memory capacity is a critical factor in determining which device can handle larger LLMs. Larger LLMs require more memory to store their parameters and efficiently perform computations.

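Apple M3 Pro 150GB 14-Cores Memory:

- Unified memory with 150 GB/s bandwidth: The "150GB" in this configuration's name refers to memory bandwidth (150 GB/s), not capacity. The M3 Pro ships with 18GB or 36GB of unified memory shared between the CPU and GPU, so a large share of that pool can hold model weights.

NVIDIA 3080 Ti 12GB Memory:

- 12GB GDDR6X memory: The NVIDIA 3080 Ti 12GB has 12GB of dedicated GDDR6X memory.

Key Takeaways:

- A 36GB Apple M3 Pro has a memory capacity advantage over the NVIDIA 3080 Ti 12GB, which leaves room for larger models or higher-precision quantization than 12GB allows. A model the size of Llama 3 70B is still out of reach for both devices without offloading, since even 4-bit weights take roughly 40GB.

- The NVIDIA 3080 Ti 12GB is limited to models that fit in 12GB, such as 7B and 8B models at 4-bit or 8-bit quantization, unless some layers are offloaded to system RAM at a cost in speed.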
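A quick way to check whether a model fits is to multiply its parameter count by the bits stored per weight. The sketch below does that under stated assumptions: the Q8_0 and Q4_0 figures follow from a block of 32 weights plus one 16-bit scale, the Q4_K_M figure is only an approximate average because that format mixes tensor types, and `overhead_gb` is a hypothetical allowance for the KV cache and runtime buffers rather than a measured value.

```python
# Approximate bits stored per weight for each format.
# Q8_0: 32 x 8-bit values + 16-bit scale = 8.5 bits per weight.
# Q4_0: 32 x 4-bit values + 16-bit scale = 4.5 bits per weight.
# Q4_K_M: mixed tensor types, so 4.85 is a rough average (assumption).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}


def weight_size_gb(n_params_billion, quant):
    """Size of the weights alone, in GB (10^9 bytes)."""
    total_bits = n_params_billion * 1e9 * BITS_PER_WEIGHT[quant]
    return total_bits / 8 / 1e9


def fits(n_params_billion, quant, memory_gb, overhead_gb=1.5):
    """True if weights plus a hypothetical overhead allowance fit in memory_gb."""
    return weight_size_gb(n_params_billion, quant) + overhead_gb <= memory_gb


if __name__ == "__main__":
    for params, quant in [(7, "Q4_0"), (8, "Q4_K_M"), (8, "F16"), (70, "Q4_K_M")]:
        size = weight_size_gb(params, quant)
        verdict = "fits" if fits(params, quant, memory_gb=12) else "does not fit"
        print(f"{params}B {quant}: ~{size:.1f} GB, {verdict} in 12 GB")
```

Passing a different `memory_gb` value lets you repeat the check for whichever unified memory configuration you are considering.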

4. Power Consumption and Cost

Power consumption and cost are essential considerations for deploying LLMs in production environments.

Apple M3 Pro 150GB 14-Cores Power Consumption:

- Lower power consumption: The Apple M3 Pro 150GB 14-Cores is known for its energy efficiency, making it a cost-effective option for sustained LLM inference.

NVIDIA 3080 Ti 12GB Power Consumption:

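- Higher power consumption: The NVIDIA 3080 Ti 12GB is a desktop GPU that NVIDIA rates at 350W of board power, so sustained inference draws considerably more electricity than a laptop-class chip and leads to higher energy bills.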

Key Takeaways:

- The Apple M3 Pro 150GB 14-Cores draws less power, which lowers energy bills for long-running LLM deployment. Keep in mind that the cost per generated token depends on both power draw and throughput, so a slower device must stay busy longer to produce the same output.

- The NVIDIA 3080 Ti 12GB might lead to higher energy costs due to its high power consumption. However, its high performance might offset the cost for specific use cases, such as research and development.
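The trade-off between power draw and throughput can be made concrete by computing the energy used per million generated tokens. This is a sketch under loud assumptions: the 40 W figure for the M3 Pro is a placeholder rather than a measurement, the 350 W figure treats the 3080 Ti's rated board power as its actual draw, the electricity price is hypothetical, and the rest of the host system is ignored.

```python
def energy_per_million_tokens_kwh(power_watts, tokens_per_second):
    """Energy in kWh needed to generate one million tokens at a steady rate."""
    seconds = 1_000_000 / tokens_per_second
    joules = power_watts * seconds
    return joules / 3_600_000  # 1 kWh = 3.6 million joules


def cost_per_million_tokens(power_watts, tokens_per_second, price_per_kwh):
    """Electricity cost of one million generated tokens."""
    return energy_per_million_tokens_kwh(power_watts, tokens_per_second) * price_per_kwh


if __name__ == "__main__":
    PRICE_PER_KWH = 0.15  # hypothetical electricity price
    scenarios = [
        # (label, assumed watts, tokens per second quoted in this article)
        ("M3 Pro, Llama 2 7B Q4_0", 40.0, 30.65),       # 40 W is a placeholder
        ("3080 Ti, Llama 3 8B Q4_K_M", 350.0, 106.71),  # rated board power
    ]
    for label, watts, tps in scenarios:
        kwh = energy_per_million_tokens_kwh(watts, tps)
        cost = cost_per_million_tokens(watts, tps, PRICE_PER_KWH)
        print(f"{label}: {kwh:.2f} kWh, {cost:.3f} per million tokens")
```

Replacing the placeholder wattages with readings from a power meter would turn this into a usable estimate for your own setup.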

5. Quantization and Model Compatibility

Quantization is a technique used to reduce the precision of LLM parameters, resulting in smaller model sizes and faster inference speed. This can significantly impact performance, making it essential to consider when choosing hardware.
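To illustrate the idea, here is a minimal sketch of symmetric 8-bit block quantization: each block of weights is stored as small integers plus one shared scale. It is written in the spirit of block formats such as Q8_0 but is a simplified illustration, not the actual on-disk layout or code of any library, and all function names are hypothetical.

```python
def quantize_block(values):
    """Quantize one block to integer codes in [-127, 127] plus a float scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate weights from a scale and integer codes."""
    return [scale * c for c in codes]


def quantize(weights, block_size=32):
    """Split weights into blocks and quantize each block independently."""
    return [
        quantize_block(weights[i:i + block_size])
        for i in range(0, len(weights), block_size)
    ]


def dequantize(blocks):
    """Flatten all dequantized blocks back into one list of weights."""
    restored = []
    for scale, codes in blocks:
        restored.extend(dequantize_block(scale, codes))
    return restored


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0, 2.0, -2.0, 0.125, 1.5]
    blocks = quantize(weights, block_size=4)
    restored = dequantize(blocks)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"largest round-trip error: {worst:.5f}")
```

Using one scale per block keeps the rounding error proportional to the largest weight in that block, which is why block formats lose less accuracy than a single scale for the whole tensor would.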

Apple M3 Pro 150GB 14-Cores Quantization:

- Q8_0 and Q4_0 benchmarked: Results are available for the Apple M3 Pro 150GB 14-Cores with both Q8_0 and Q4_0 quantization, providing flexibility in trading model size against speed.

- No F16 data: The chip can run F16 (half-precision) models in principle, but no benchmark figures are available for this configuration.

NVIDIA 3080 Ti 12GB Quantization:

- Q4_K_M benchmarked: The available NVIDIA 3080 Ti 12GB results cover Q4_K_M quantization only. This reflects the data at hand rather than a hardware restriction.

- No F16 data: Similar to the Apple M3 Pro, data regarding F16 performance on the NVIDIA 3080 Ti 12GB is lacking.

Key Takeaways:

- The Apple M3 Pro 150GB 14-Cores has results for a wider range of quantization formats, Q8_0 and Q4_0, which shows how model size trades against speed on that chip.

- The NVIDIA 3080 Ti 12GB has results for Q4_K_M only. Users should test how other quantization formats affect their chosen LLM's performance on this card.

- F16 can be run on both devices in principle, but the lack of data makes comparing their F16 performance impossible here.

Conclusion

The choice between Apple M3 Pro 150GB 14-Cores and NVIDIA 3080 Ti 12GB for running LLMs depends heavily on your specific use case, model size, and performance expectations.

- The Apple M3 Pro 150GB 14-Cores suits developers who value energy efficiency and a unified memory pool that can exceed 12GB. Its Llama 2 7B results, up to 30.65 tokens per second with Q4_0, make it a cost-effective choice for experimentation and single-user deployment.

- The NVIDIA 3080 Ti 12GB suits workloads where speed is paramount and the model fits in 12GB. It ran Llama 3 8B at 106.71 tokens per second with Q4_K_M, making it ideal for high-performance applications and research, but its high power consumption and limited memory may be drawbacks for some.

Always consider the specific requirements of your project when making this crucial decision.

FAQ

1. What are the benefits of using an Apple M3 Pro for LLMs?

Apple M3 Pro chips are known for their exceptional energy efficiency, powerful integrated GPUs, and large unified memory pools, making them cost-effective and efficient for running LLMs. Their support for various quantization methods allows developers to optimize model size and speed.

2. Is the NVIDIA 3080 Ti 12GB still relevant for LLMs?

Absolutely! While the Apple M3 Pro 150GB 14-Cores can offer more memory and better energy efficiency, the NVIDIA 3080 Ti 12GB still offers a powerful solution for high-performance LLM inference, particularly for smaller models that fit in its 12GB. Its raw processing power can be crucial for research and demanding applications where speed is paramount.

3. What are the different quantization methods, and how do they affect LLM performance?

Quantization involves reducing the precision of an LLM's parameters, leading to smaller model sizes and faster inference speed. The formats mentioned in this article are:

- F16: Stores weights as 16-bit floating-point numbers. Strictly speaking this is half precision rather than quantization, and it serves as the high-accuracy baseline against which the quantized formats are compared.

- Q8_0: Stores weights as 8-bit integers with a shared scale per block of weights, roughly halving model size relative to F16 with very little loss of accuracy.

- Q4_0: Stores weights as 4-bit integers with a shared scale per block, offering the most compact models of the formats listed here and faster generation, but potentially sacrificing accuracy.

- Q4_K_M: A 4-bit "k-quant" format that groups weights into blocks with their own scales and minimums and keeps selected tensors at higher precision. It generally preserves accuracy better than Q4_0 at a slightly larger size.

4. How can I choose the best hardware based on my LLM and application needs?

Consider the following factors:

- LLM Size: Larger LLMs require more memory.

- Performance Requirements: High-performance applications demand faster token processing speeds.

- Power Consumption and Cost: Energy efficiency is crucial for cost-effective long-term deployments.

- Quantization Compatibility: Ensure the hardware supports your desired quantization method.

5. What are some alternatives to the Apple M3 Pro and NVIDIA 3080 Ti for running LLMs?

The market offers a variety of hardware options for LLM deployment. Some notable alternatives include:

- Google Tensor Processing Units (TPUs): Designed specifically for AI workloads, TPUs offer exceptional performance for large-scale LLM training and inference.

- AMD GPUs: AMD's high-end GPUs compete with NVIDIA's offerings, providing powerful solutions for LLM inference.

- Custom-designed hardware: Companies like Cerebras Systems and Graphcore are developing specialized hardware accelerators optimized for AI, offering potentially significant performance gains.

Keywords

Apple M3 Pro, NVIDIA 3080 Ti, LLM, Large Language Models, Token Speed Generation, Token Speed Processing, Memory Capacity, Power Consumption, Quantization, Q8_0, Q4_0, Q4_K_M, F16, AI, Inference, Deployment, Performance Comparison, Hardware Choice, Tokenization, Model Size.