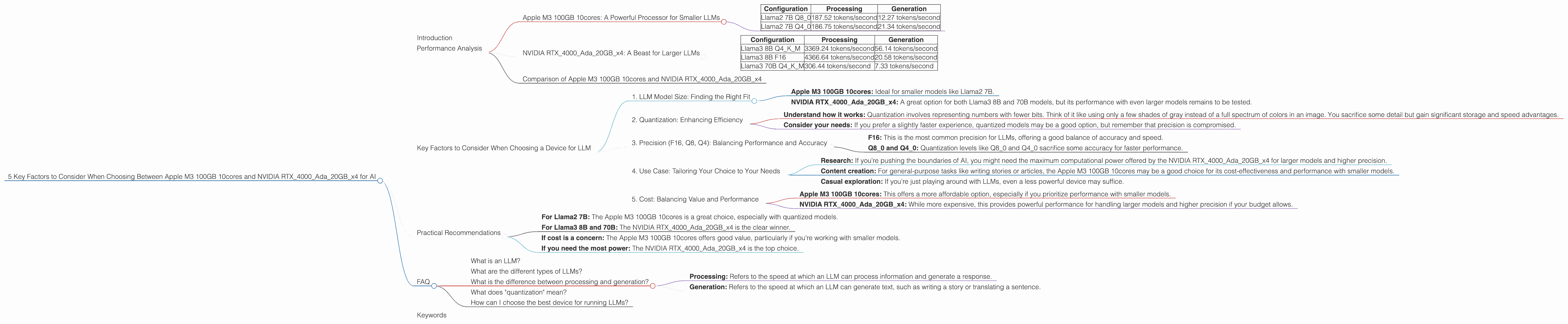

5 Key Factors to Consider When Choosing Between Apple M3 100gb 10cores and NVIDIA RTX 4000 Ada 20GB x4 for AI

Introduction

The world of Large Language Models (LLMs) is abuzz with excitement. These powerful AI models can generate creative text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running LLMs locally can be a challenge, requiring powerful hardware. This article dives deep into the performance of two popular devices: the Apple M3 100GB 10cores and the NVIDIA RTX4000Ada20GBx4, to help you make an informed decision for your AI endeavors.

Performance Analysis

This section dives into the performance of these two devices for running LLMs. We'll analyze both processing and generation speeds for different LLM models and quantization levels, revealing strengths and weaknesses.

Apple M3 100GB 10cores: A Powerful Processor for Smaller LLMs

The Apple M3 100GB 10cores is a powerful chip that excels in processing smaller LLMs. It boasts a significant performance advantage in processing Llama2 7B models, especially when using quantized formats like Q80 and Q40:

| Configuration | Processing | Generation |

|---|---|---|

| Llama2 7B Q8_0 | 187.52 tokens/second | 12.27 tokens/second |

| Llama2 7B Q4_0 | 186.75 tokens/second | 21.34 tokens/second |

It's important to note that we don't have data for F16 precision and Llama 7B generation for the M3 chip. This means that while the M3 excels with quantized models, its performance with larger models or higher precision may not be ideal.

NVIDIA RTX4000Ada20GBx4: A Beast for Larger LLMs

The NVIDIA RTX4000Ada20GBx4 shines with its impressive capabilities for handling larger LLMs. It offers strong performance with Llama3 models, both in terms of processing speed and generation speed:

| Configuration | Processing | Generation |

|---|---|---|

| Llama3 8B Q4KM | 3369.24 tokens/second | 56.14 tokens/second |

| Llama3 8B F16 | 4366.64 tokens/second | 20.58 tokens/second |

| Llama3 70B Q4KM | 306.44 tokens/second | 7.33 tokens/second |

However, we lack data for F16 precision for the Llama 70B model on this hardware.

Comparison of Apple M3 100GB 10cores and NVIDIA RTX4000Ada20GBx4

The choice between the Apple M3 100GB 10cores and the NVIDIA RTX4000Ada20GBx4 ultimately depends on your specific use case. The M3 is a great choice if you're working with smaller models like Llama2 7B, especially if you prioritize speed in quantized formats. Its performance with larger models and higher precision remains unknown.

The NVIDIA RTX4000Ada20GBx4 is a powerhouse for larger LLMs like Llama3 8B and 70B, offering significant performance gains in both processing and generation. However, its performance with F16 precision for larger models remains unknown, which could be a point of consideration.

Key Factors to Consider When Choosing a Device for LLM

Let's break down five key factors that will help you determine the best device for your AI needs:

1. LLM Model Size: Finding the Right Fit

LLMs come in different sizes, from the compact 7B models to the gigantic 70B models. Larger models require more computational resources, and you'll need to consider the device's ability to handle the memory demands.

- Apple M3 100GB 10cores: Ideal for smaller models like Llama2 7B.

- NVIDIA RTX4000Ada20GBx4: A great option for both Llama3 8B and 70B models, but its performance with even larger models remains to be tested.

2. Quantization: Enhancing Efficiency

Quantization is a technique that reduces the size of the LLM, sacrificing some precision for a significant speed boost.

- Understand how it works: Quantization involves representing numbers with fewer bits. Think of it like using only a few shades of gray instead of a full spectrum of colors in an image. You sacrifice some detail but gain significant storage and speed advantages.

- Consider your needs: If you prefer a slightly faster experience, quantized models may be a good option, but remember that precision is compromised.

3. Precision (F16, Q8, Q4): Balancing Performance and Accuracy

Precision levels determine the detail and accuracy of the LLM's computations. Higher precision generally leads to better results but comes with a performance cost.

- F16: This is the most common precision for LLMs, offering a good balance of accuracy and speed.

- Q80 and Q40: Quantization levels like Q80 and Q40 sacrifice some accuracy for faster performance.

4. Use Case: Tailoring Your Choice to Your Needs

The best device will depend on your specific use case. Do you need to run an LLM for research, content creation, or just casual exploration?

- Research: If you're pushing the boundaries of AI, you might need the maximum computational power offered by the NVIDIA RTX4000Ada20GBx4 for larger models and higher precision.

- Content creation: For general-purpose tasks like writing stories or articles, the Apple M3 100GB 10cores may be a good choice for its cost-effectiveness and performance with smaller models.

- Casual exploration: If you're just playing around with LLMs, even a less powerful device may suffice.

5. Cost: Balancing Value and Performance

The price tag is an important consideration, especially if you're on a budget.

- Apple M3 100GB 10cores: This offers a more affordable option, especially if you prioritize performance with smaller models.

- NVIDIA RTX4000Ada20GBx4: While more expensive, this provides powerful performance for handling larger models and higher precision if your budget allows.

Practical Recommendations

Here's a quick guide to make your decision easier:

- For Llama2 7B: The Apple M3 100GB 10cores is a great choice, especially with quantized models.

- For Llama3 8B and 70B: The NVIDIA RTX4000Ada20GBx4 is the clear winner.

- If cost is a concern: The Apple M3 100GB 10cores offers good value, particularly if you're working with smaller models.

- If you need the most power: The NVIDIA RTX4000Ada20GBx4 is the top choice.

FAQ

What is an LLM?

LLMs, or Large Language Models, are a type of AI that excels at understanding and generating human language. They can create text, translate languages, write different kinds of creative content, and answer your questions in a natural way.

What are the different types of LLMs?

Popular LLMs include GPT-3, LaMDA, and Llama. Each model has different strengths and weaknesses, with some being better at writing poems while others excel at translation.

What is the difference between processing and generation?

- Processing: Refers to the speed at which an LLM can process information and generate a response.

- Generation: Refers to the speed at which an LLM can generate text, such as writing a story or translating a sentence.

What does "quantization" mean?

Quantization is a technique that compresses an LLM's data. It sacrifices some accuracy but boosts performance by reducing the amount of information that needs to be processed. Think of it as using only a few shades of gray instead of a full spectrum of colors in an image. You sacrifice some detail but gain significant storage and speed advantages.

How can I choose the best device for running LLMs?

Consider factors like LLM size, your budget, and what you want to use the LLM for.

Keywords

LLM, Large Language Model, Apple M3, NVIDIA RTX4000Ada, Llama2, Llama3, performance, processing, generation, token speed, quantization, F16, Q80, Q40, AI, machine learning, deep learning, GPU, CPU, cost, use case, recommendation, comparison.