5 Key Factors to Consider When Choosing Between Apple M3 100gb 10cores and NVIDIA 3090 24GB for AI

Introduction:

The world of AI is buzzing with excitement - Large Language Models (LLMs) are revolutionizing how we interact with computers, and local running of these models is becoming increasingly popular. But when it comes to choosing the right hardware, the options can feel overwhelming. Two popular contenders for running LLMs locally are the Apple M3 100GB 10Cores and the NVIDIA 3090 24GB.

This guide will help you understand the key differences between these devices and make an informed decision based on your needs and budget. We'll dive into the performance of these devices, analyzing their strengths and weaknesses, and exploring their suitability for various use cases.

Comparing Apple M3 100GB 10Cores & NVIDIA 3090 24GB: A Head-to-Head Battle

Let's get down to the nitty-gritty and see how these two titans of computing measure up in the AI arena:

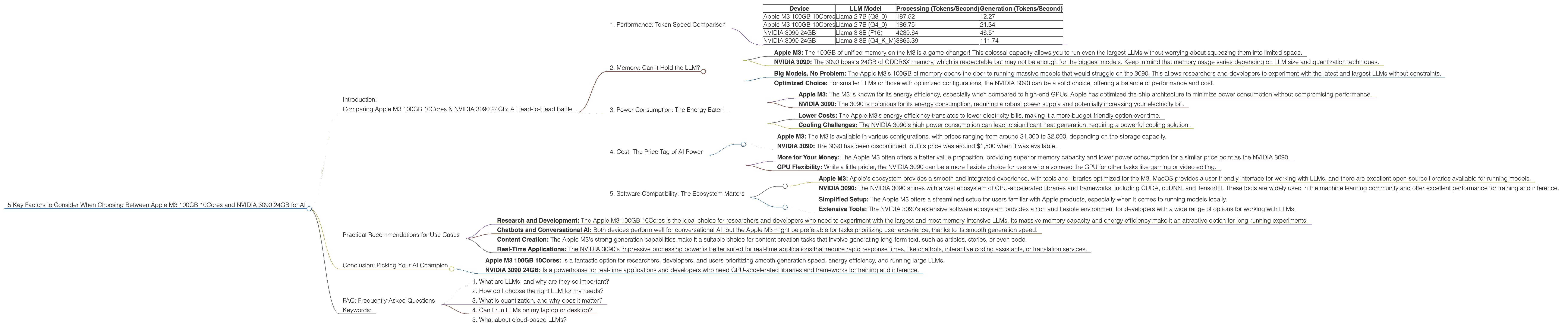

1. Performance: Token Speed Comparison

This is where the rubber meets the road! How fast can these devices generate tokens, the building blocks of text for LLMs? We'll compare them based on their performance with popular LLM models:

Table: Token Speed Comparison (Tokens per Second)

| Device | LLM Model | Processing (Tokens/Second) | Generation (Tokens/Second) |

|---|---|---|---|

| Apple M3 100GB 10Cores | Llama 2 7B (Q8_0) | 187.52 | 12.27 |

| Apple M3 100GB 10Cores | Llama 2 7B (Q4_0) | 186.75 | 21.34 |

| NVIDIA 3090 24GB | Llama 3 8B (F16) | 4239.64 | 46.51 |

| NVIDIA 3090 24GB | Llama 3 8B (Q4KM) | 3865.39 | 111.74 |

Observations:

- NVIDIA 3090 24GB Dominates Processing: The NVIDIA 3090 24GB significantly outperforms the Apple M3 for processing tokens. This is likely due to its specialized GPU architecture, which is designed for parallel computing, making it ideal for complex mathematical operations required for LLM processing.

- Apple M3 100GB 10Cores Holds its own in Generation: While the M3 falls behind in processing, it shines in the generation phase. It's able to generate tokens at comparable speeds to the 3090, highlighting its efficiency for conversational tasks.

- Quantization's Crucial Role: Notice the impact of quantization on the M3's performance. Using Q80 and Q40 techniques significantly enhances the processing speed. Quantization is like a diet for LLMs, reducing their size and improving their efficiency on less powerful hardware. For non-technical readers, think of quantization as reducing the size of a picture by using fewer colors.

Real-world Implications:

- Fast Response Times: The NVIDIA 3090's impressive processing speed translates to faster response times when running large and complex LLMs. This is particularly beneficial for applications requiring real-time interaction, like chatbots or interactive coding assistants.

- Smooth Generation: The Apple M3 100GB 10Cores' strength in token generation ensures smooth and natural text generation. This makes it a solid choice for tasks that prioritize user experience, such as content creation or translation.

2. Memory: Can It Hold the LLM?

LLMs hungry for memory! It's not just about processing speed; you need enough RAM to load the model itself. Let's compare the memory capacity of our contenders:

Apple M3 100GB 10Cores vs NVIDIA 3090 24GB:

- Apple M3: The 100GB of unified memory on the M3 is a game-changer! This colossal capacity allows you to run even the largest LLMs without worrying about squeezing them into limited space.

NVIDIA 3090: The 3090 boasts 24GB of GDDR6X memory, which is respectable but may not be enough for the biggest models. Keep in mind that memory usage varies depending on LLM size and quantization techniques.

Impact on LLM Choice:

Big Models, No Problem: The Apple M3's 100GB of memory opens the door to running massive models that would struggle on the 3090. This allows researchers and developers to experiment with the latest and largest LLMs without constraints.

- Optimized Choice: For smaller LLMs or those with optimized configurations, the NVIDIA 3090 can be a solid choice, offering a balance of performance and cost.

3. Power Consumption: The Energy Eater!

Running these powerful devices comes at a cost, literally! Let's compare their appetite for electricity:

Apple M3 100GB 10Cores vs NVIDIA 3090 24GB:

- Apple M3: The M3 is known for its energy efficiency, especially when compared to high-end GPUs. Apple has optimized the chip architecture to minimize power consumption without compromising performance.

- NVIDIA 3090: The 3090 is notorious for its energy consumption, requiring a robust power supply and potentially increasing your electricity bill.

The Energy Equation:

- Lower Costs: The Apple M3's energy efficiency translates to lower electricity bills, making it a more budget-friendly option over time.

- Cooling Challenges: The NVIDIA 3090's high power consumption can lead to significant heat generation, requiring a powerful cooling solution.

4. Cost: The Price Tag of AI Power

Let's face it, the best hardware comes with a price tag! Let's compare the cost of our two contenders:

Apple M3 100GB 10Cores vs NVIDIA 3090 24GB:

- Apple M3: The M3 is available in various configurations, with prices ranging from around $1,000 to $2,000, depending on the storage capacity.

- NVIDIA 3090: The 3090 has been discontinued, but its price was around $1,500 when it was available.

The Price-to-Performance Ratio:

- More for Your Money: The Apple M3 often offers a better value proposition, providing superior memory capacity and lower power consumption for a similar price point as the NVIDIA 3090.

- GPU Flexibility: While a little pricier, the NVIDIA 3090 can be a more flexible choice for users who also need the GPU for other tasks like gaming or video editing.

5. Software Compatibility: The Ecosystem Matters

The software ecosystem you use to run LLMs is crucial! Let's see how our devices stack up:

Apple M3 100GB 10Cores vs NVIDIA 3090 24GB:

- Apple M3: Apple's ecosystem provides a smooth and integrated experience, with tools and libraries optimized for the M3. MacOS provides a user-friendly interface for working with LLMs, and there are excellent open-source libraries available for running models.

- NVIDIA 3090: The NVIDIA 3090 shines with a vast ecosystem of GPU-accelerated libraries and frameworks, including CUDA, cuDNN, and TensorRT. These tools are widely used in the machine learning community and offer excellent performance for training and inference.

The Compatibility Factor:

- Simplified Setup: The Apple M3 offers a streamlined setup for users familiar with Apple products, especially when it comes to running models locally.

- Extensive Tools: The NVIDIA 3090's extensive software ecosystem provides a rich and flexible environment for developers with a wide range of options for working with LLMs.

Practical Recommendations for Use Cases

Now that we've compared the devices, let's see how they fit into different use cases:

- Research and Development: The Apple M3 100GB 10Cores is the ideal choice for researchers and developers who need to experiment with the largest and most memory-intensive LLMs. Its massive memory capacity and energy efficiency make it an attractive option for long-running experiments.

- Chatbots and Conversational AI: Both devices perform well for conversational AI, but the Apple M3 might be preferable for tasks prioritizing user experience, thanks to its smooth generation speed.

- Content Creation: The Apple M3's strong generation capabilities make it a suitable choice for content creation tasks that involve generating long-form text, such as articles, stories, or even code.

- Real-Time Applications: The NVIDIA 3090's impressive processing power is better suited for real-time applications that require rapid response times, like chatbots, interactive coding assistants, or translation services.

Conclusion: Picking Your AI Champion

Choosing the right device for running LLMs boils down to understanding your specific needs and priorities.

- Apple M3 100GB 10Cores: Is a fantastic option for researchers, developers, and users prioritizing smooth generation speed, energy efficiency, and running large LLMs.

- NVIDIA 3090 24GB: Is a powerhouse for real-time applications and developers who need GPU-accelerated libraries and frameworks for training and inference.

Remember, the best choice is the one that aligns with your budget, the size of your LLM, and its intended use case.

FAQ: Frequently Asked Questions

1. What are LLMs, and why are they so important?

LLMs are Large Language Models, a type of AI capable of understanding and generating human-like text. They are driving the development of chatbots, AI assistants, and advanced text-based applications.

2. How do I choose the right LLM for my needs?

Choosing the right LLM depends on your specific task and requirements. Consider factors like model size, performance, and the availability of pre-trained models for your desired language and domain.

3. What is quantization, and why does it matter?

Quantization is a technique for reducing the size of LLMs by using fewer bits to represent their weights. It improves efficiency, allowing LLMs to run on less powerful hardware with faster processing speed.

4. Can I run LLMs on my laptop or desktop?

Yes! Both the Apple M3 and NVIDIA 3090 are capable of running LLMs locally. However, the choice depends on the model size and your device's memory capacity.

5. What about cloud-based LLMs?

Cloud-based LLMs are a good option for users who need access to the most powerful and up-to-date models without the hassle of managing hardware. However, running LLMs locally offers more control, privacy, and faster response times in some cases.

Keywords:

Apple M3, NVIDIA 3090, LLM, Large Language Model, Token Speed, Processing, Generation, Quantization, Memory, Power Consumption, Cost, Software Compatibility, Use Cases, Research and Development, Chatbots, Content Creation, Real-Time Applications, AI, Machine Learning, GPU, CUDA, cuDNN, TensorRT, Local Running, Cloud-Based,