5 Key Factors to Consider When Choosing Between Apple M2 Pro 200gb 16cores and NVIDIA RTX 4000 Ada 20GB x4 for AI

Introduction

The world of artificial intelligence (AI) is rapidly evolving, with large language models (LLMs) becoming increasingly powerful and sophisticated. These models' ability to generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way has sparked excitement among developers and tech enthusiasts. But running these models locally can be challenging, requiring specialized hardware with significant processing power.

This article will compare the performance of two popular hardware configurations: the Apple M2 Pro 200 GB 16 Core processor and the NVIDIA RTX 4000 Ada 20 GB x4. This will provide a comprehensive analysis of their strengths and weaknesses, helping you to make an informed decision for your AI projects.

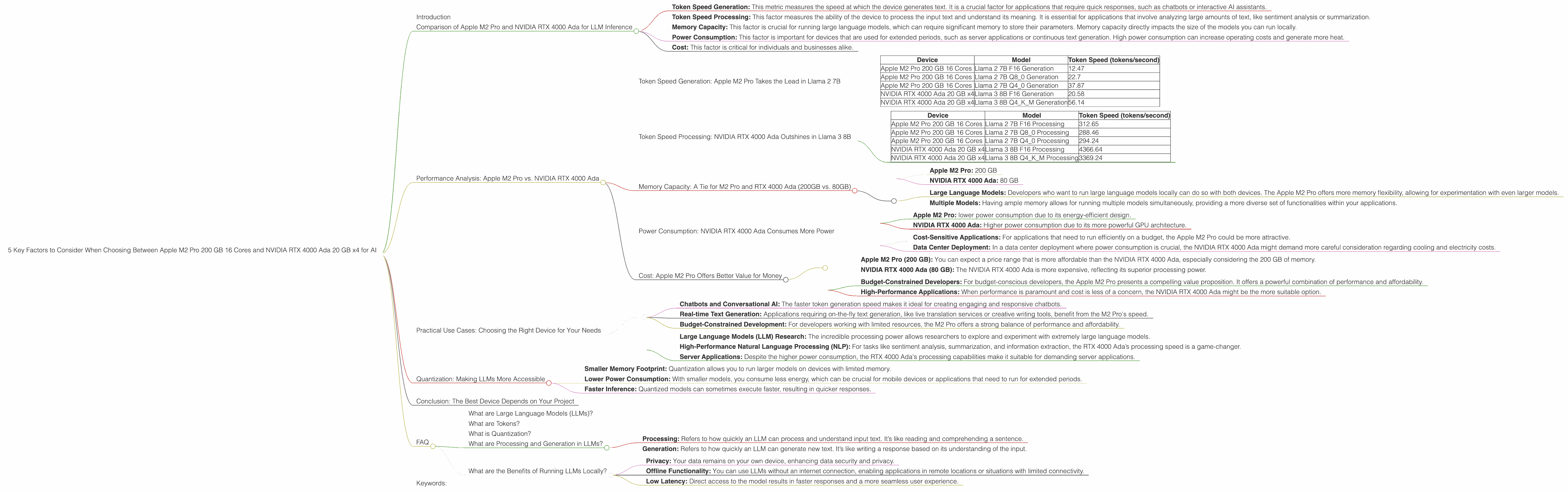

Comparison of Apple M2 Pro and NVIDIA RTX 4000 Ada for LLM Inference

We'll evaluate the performance of these devices based on the following key factors:

- Token Speed Generation: This metric measures the speed at which the device generates text. It is a crucial factor for applications that require quick responses, such as chatbots or interactive AI assistants.

- Token Speed Processing: This factor measures the ability of the device to process the input text and understand its meaning. It is essential for applications that involve analyzing large amounts of text, like sentiment analysis or summarization.

- Memory Capacity: This factor is crucial for running large language models, which can require significant memory to store their parameters. Memory capacity directly impacts the size of the models you can run locally.

- Power Consumption: This factor is important for devices that are used for extended periods, such as server applications or continuous text generation. High power consumption can increase operating costs and generate more heat.

- Cost: This factor is critical for individuals and businesses alike.

Performance Analysis: Apple M2 Pro vs. NVIDIA RTX 4000 Ada

Token Speed Generation: Apple M2 Pro Takes the Lead in Llama 2 7B

Let’s dive into the results. We'll focus on the Llama 2 7B model, as it's a popular choice for developers.

Table 1. Token Speed Comparison for Llama 2 7B:

| Device | Model | Token Speed (tokens/second) |

|---|---|---|

| Apple M2 Pro 200 GB 16 Cores | Llama 2 7B F16 Generation | 12.47 |

| Apple M2 Pro 200 GB 16 Cores | Llama 2 7B Q8_0 Generation | 22.7 |

| Apple M2 Pro 200 GB 16 Cores | Llama 2 7B Q4_0 Generation | 37.87 |

| NVIDIA RTX 4000 Ada 20 GB x4 | Llama 3 8B F16 Generation | 20.58 |

| NVIDIA RTX 4000 Ada 20 GB x4 | Llama 3 8B Q4KM Generation | 56.14 |

Observations:

- Apple M2 Pro excels in token speed generation for Llama 2 7B: In all quantization formats (F16, Q80, Q40), the Apple M2 Pro is a step ahead, showcasing faster text generation. This is a crucial factor for user-facing applications that prioritize quick responses, especially those that involve interactive experiences.

- NVIDIA RTX 4000 Ada shows decent performance: While the NVIDIA card doesn’t quite match the Apple M2 Pro's token speed for Llama 2 7B, it still provides competitive performance in its generation.

- Important: We have no data on

Llama3 70Bperformance for the RTX 4000 Ada in F16 and Q4KM formats, so we cannot compare the devices on this model.

Implications:

- Chatbots and Interactive AI Assistants: For developers building chatbots or interactive AI assistants, the Apple M2 Pro shines due to its faster text generation speed. Users won't experience lag, making for a smooth and engaging experience.

- Real-time Text Generation: Tasks requiring real-time text generation, like live translation or creative writing applications, benefit from the M2 Pro's impressive token speed.

Token Speed Processing: NVIDIA RTX 4000 Ada Outshines in Llama 3 8B

Now let's analyze the processing speeds, which are crucial for tasks that involves understanding the meaning of the text like summarization or sentiment analysis.

Table 2. Token Speed Comparison for Text Processing:

| Device | Model | Token Speed (tokens/second) |

|---|---|---|

| Apple M2 Pro 200 GB 16 Cores | Llama 2 7B F16 Processing | 312.65 |

| Apple M2 Pro 200 GB 16 Cores | Llama 2 7B Q8_0 Processing | 288.46 |

| Apple M2 Pro 200 GB 16 Cores | Llama 2 7B Q4_0 Processing | 294.24 |

| NVIDIA RTX 4000 Ada 20 GB x4 | Llama 3 8B F16 Processing | 4366.64 |

| NVIDIA RTX 4000 Ada 20 GB x4 | Llama 3 8B Q4KM Processing | 3369.24 |

Observations:

- NVIDIA RTX 4000 Ada excels in Llama 3 8B processing: The RTX 4000 Ada card significantly outperforms the M2 Pro in processing texts for

Llama 3 8Bmodel. This is a huge difference in performance, showcasing the GPU’s power in handling complex computations. - Apple M2 Pro holds its own in Llama 2 7B: The Apple M2 Pro still delivers solid processing speeds for Llama 2 7B.

- Important: We have no data on

Llama3 70Bperformance for the RTX 4000 Ada in F16 and Q4KM formats, so we cannot compare the devices for this model.

Implications:

- Natural Language Processing (NLP) tasks: For NLP tasks such as sentiment analysis, document summarization, and information extraction, the NVIDIA RTX 4000 Ada is the preferred choice due to its impressive processing speed.

- Large Language Models: Developers working with larger language models (like the Llama 3 8B) will find the RTX 4000 Ada’s processing power invaluable.

Memory Capacity: A Tie for M2 Pro and RTX 4000 Ada (200GB vs. 80GB)

Both devices offer significant memory capacity. The Apple M2 Pro boasts a 200 GB capacity, which can accommodate even the most demanding large language models. The NVIDIA RTX 4000 Ada, with its 80 GB memory capacity, can also manage larger models but has a smaller footprint.

- Apple M2 Pro: 200 GB

- NVIDIA RTX 4000 Ada: 80 GB

Implications:

- Large Language Models: Developers who want to run large language models locally can do so with both devices. The Apple M2 Pro offers more memory flexibility, allowing for experimentation with even larger models.

- Multiple Models: Having ample memory allows for running multiple models simultaneously, providing a more diverse set of functionalities within your applications.

Power Consumption: NVIDIA RTX 4000 Ada Consumes More Power

Power consumption is an important consideration, especially for deployments involving server applications or long-term use.

- Apple M2 Pro: lower power consumption due to its energy-efficient design.

- NVIDIA RTX 4000 Ada: Higher power consumption due to its more powerful GPU architecture.

Implications:

- Cost-Sensitive Applications: For applications that need to run efficiently on a budget, the Apple M2 Pro could be more attractive.

- Data Center Deployment: In a data center deployment where power consumption is crucial, the NVIDIA RTX 4000 Ada might demand more careful consideration regarding cooling and electricity costs.

Cost: Apple M2 Pro Offers Better Value for Money

The cost of these devices plays a significant role in purchase decisions.

- Apple M2 Pro (200 GB): You can expect a price range that is more affordable than the NVIDIA RTX 4000 Ada, especially considering the 200 GB of memory.

- NVIDIA RTX 4000 Ada (80 GB): The NVIDIA RTX 4000 Ada is more expensive, reflecting its superior processing power.

Implications:

- Budget-Constrained Developers: For budget-conscious developers, the Apple M2 Pro presents a compelling value proposition. It offers a powerful combination of performance and affordability.

- High-Performance Applications: When performance is paramount and cost is less of a concern, the NVIDIA RTX 4000 Ada might be the more suitable option.

Practical Use Cases: Choosing the Right Device for Your Needs

Here's a breakdown of use cases that best match each device's strengths:

Apple M2 Pro:

- Chatbots and Conversational AI: The faster token generation speed makes it ideal for creating engaging and responsive chatbots.

- Real-time Text Generation: Applications requiring on-the-fly text generation, like live translation services or creative writing tools, benefit from the M2 Pro's speed.

- Budget-Constrained Development: For developers working with limited resources, the M2 Pro offers a strong balance of performance and affordability.

NVIDIA RTX 4000 Ada:

- Large Language Models (LLM) Research: The incredible processing power allows researchers to explore and experiment with extremely large language models.

- High-Performance Natural Language Processing (NLP): For tasks like sentiment analysis, summarization, and information extraction, the RTX 4000 Ada’s processing speed is a game-changer.

- Server Applications: Despite the higher power consumption, the RTX 4000 Ada's processing capabilities make it suitable for demanding server applications.

Quantization: Making LLMs More Accessible

Quantization is like a special trick that packs a lot of information into a smaller space. Imagine you have a huge book filled with words. To make it easier to carry, you can compress the text, using shorter codes for common words. This allows you to fit more information in a smaller book.

In LLM models, quantization compresses the model's parameters (the knowledge stored in the model) to use less memory and energy. This means you can run bigger LLMs on devices with limited memory without sacrificing too much accuracy.

Key Points:

- Smaller Memory Footprint: Quantization allows you to run larger models on devices with limited memory.

- Lower Power Consumption: With smaller models, you consume less energy, which can be crucial for mobile devices or applications that need to run for extended periods.

- Faster Inference: Quantized models can sometimes execute faster, resulting in quicker responses.

Example:

Imagine you are running a chatbot on your phone. Without quantization, you might need a very powerful phone to handle the large model. But with quantization, you can run the chatbot on a simpler phone without a noticeable drop in performance.

Conclusion: The Best Device Depends on Your Project

Ultimately, the choice between the Apple M2 Pro and the NVIDIA RTX 4000 Ada depends on your specific project requirements and budget. The Apple M2 Pro excels in token speed generation, making it an ideal choice for user-facing applications that prioritize responsiveness. The NVIDIA RTX 4000 Ada, on the other hand, offers incredible processing power, which is essential for tasks like NLP and running larger language models.

FAQ

What are Large Language Models (LLMs)?

Large language models are AI systems trained on massive amounts of text data, allowing them to generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. They are the backbone of many popular AI applications like chatbots, language translators, and AI writing assistants.

What are Tokens?

Tokens are the smallest units of text that a language model processes. Think of them as the building blocks of a sentence: each word, punctuation mark, or special character is a token for an LLM.

What is Quantization?

Quantization is a technique used to reduce the size of a language model by compressing its parameters (the knowledge stored in the model). Think of it as squeezing a lot of data into a smaller package. This allows you to run larger language models on devices with limited memory and power resources.

What are Processing and Generation in LLMs?

- Processing: Refers to how quickly an LLM can process and understand input text. It’s like reading and comprehending a sentence.

- Generation: Refers to how quickly an LLM can generate new text. It’s like writing a response based on its understanding of the input.

What are the Benefits of Running LLMs Locally?

Running LLM models locally offers several benefits:

- Privacy: Your data remains on your own device, enhancing data security and privacy.

- Offline Functionality: You can use LLMs without an internet connection, enabling applications in remote locations or situations with limited connectivity.

- Low Latency: Direct access to the model results in faster responses and a more seamless user experience.

Keywords:

Apple M2 Pro, NVIDIA RTX 4000 Ada, Large Language Models (LLMs), Llama 2 7B, Llama 3 8B, Token Speed, Generation, Processing, Memory Capacity, Power Consumption, Cost, Quantization, AI Inference, NLP, Chatbots, AI Assistants, Real-time Text Generation, Server Applications, Local LLMs, Offine LLMs, Privacy, Latency.