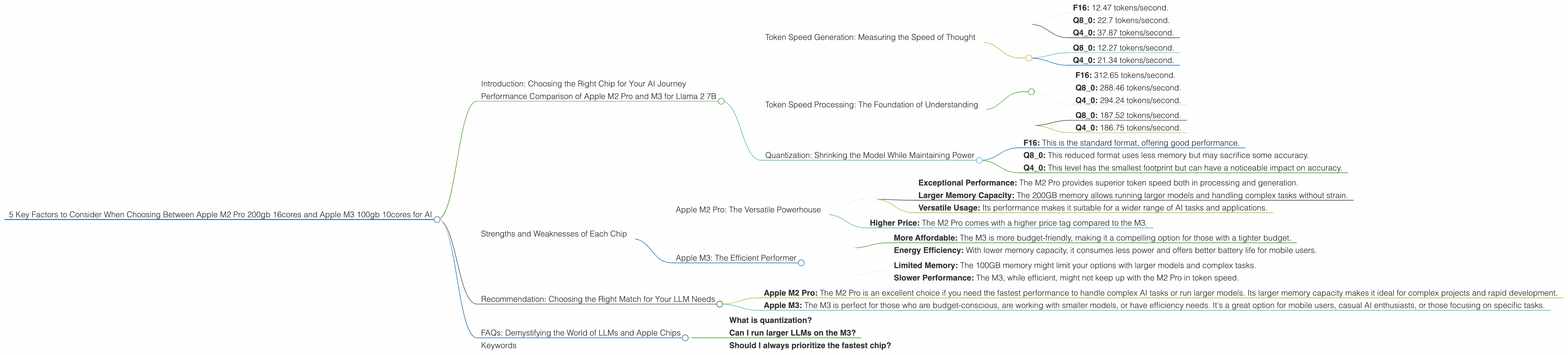

5 Key Factors to Consider When Choosing Between Apple M2 Pro (200 GB/s, 16 GPU Cores) and Apple M3 (100 GB/s, 10 GPU Cores) for AI

Introduction: Choosing the Right Chip for Your AI Journey

The world of large language models (LLMs) is buzzing with excitement, and you're probably looking for the best device to unleash their power. Apple's M-series chips are known for their exceptional performance, and the M2 Pro and M3 are both compelling choices for running LLMs locally. But how do you know which one is right for you?

This article dives deep into the performance of the Apple M2 Pro (200 GB/s memory bandwidth, 16 GPU cores) and the Apple M3 (100 GB/s memory bandwidth, 10 GPU cores) when running the Llama 2 7B model. Using real-world benchmark data, we'll analyze key factors like token speed, quantization, processing vs. generation, and memory limitations. By the end, you'll be equipped to make an informed decision based on your specific needs and budget.

Performance Comparison of Apple M2 Pro and M3 for Llama 2 7B

Let's get down to the numbers and see how these two powerful chips stack up. We'll be focusing on the Llama 2 7B model, a popular choice for those who want a balance of performance and resource consumption.

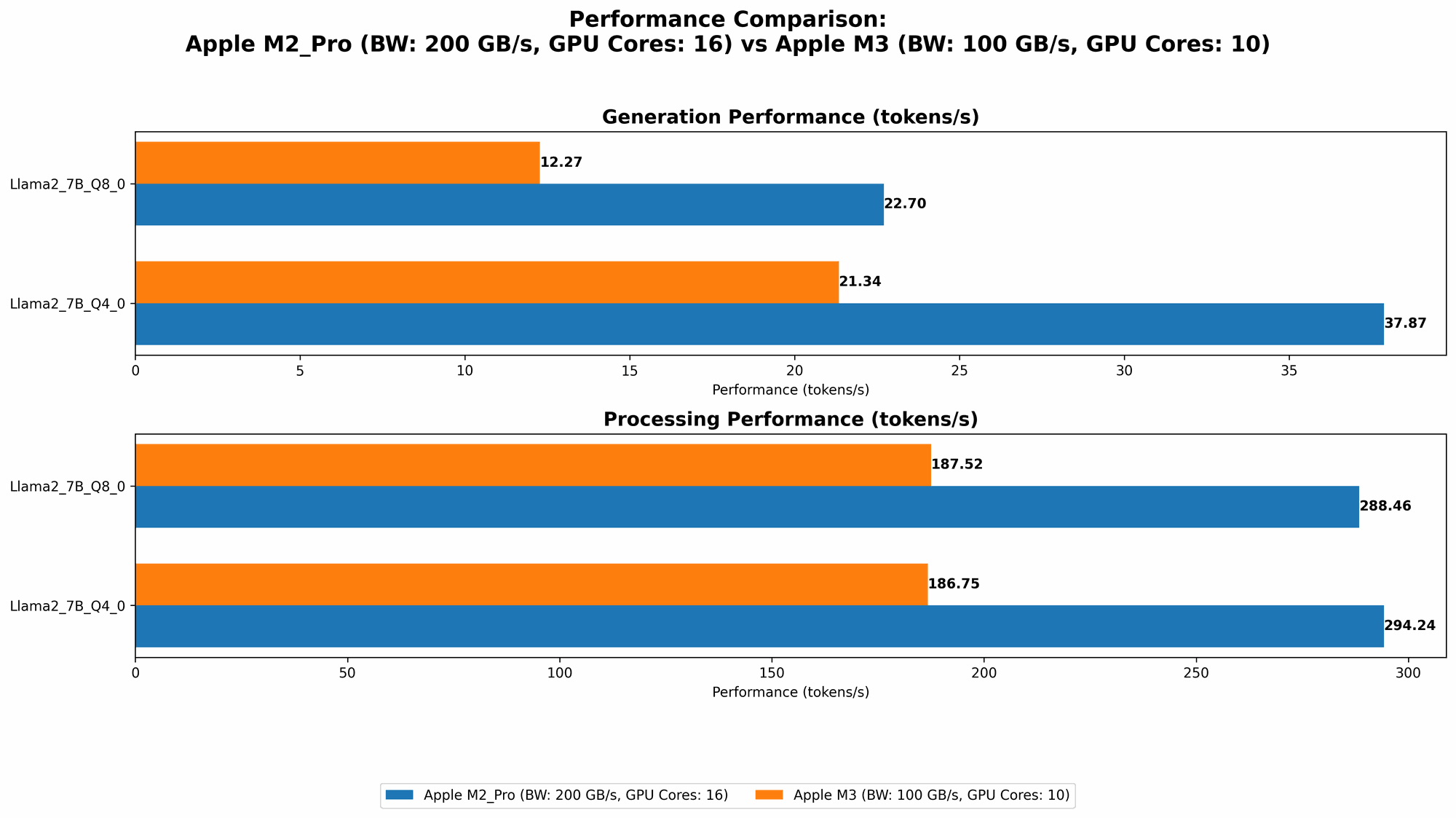

Token Speed Generation: Measuring the Speed of Thought

One key metric for comparing LLM performance is generation speed: the number of tokens the model produces per second. It directly determines how fast your LLM can write out a response.

Apple M2 Pro:

- F16: 12.47 tokens/second.

- Q8_0: 22.7 tokens/second.

- Q4_0: 37.87 tokens/second.

Apple M3:

- Q8_0: 12.27 tokens/second.

- Q4_0: 21.34 tokens/second.

Analysis: The M2 Pro outperforms the M3 in generation speed in both formats measured on both chips: 22.7 vs. 12.27 tokens/second at Q8_0 and 37.87 vs. 21.34 tokens/second at Q4_0, or roughly 1.8x faster. No F16 result is available for the M3, so that format can't be compared directly. Think of it like this: the M2 Pro running unquantized F16 (12.47 tokens/second) is about as fast as the M3 running Q8_0 (12.27 tokens/second). In practice, that means faster responses and smoother interactions with Llama 2 7B on the M2 Pro.
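To make the gap concrete, here is a minimal Python sketch that computes the generation speedup from the figures listed above. The dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Generation speeds (tokens/second) for Llama 2 7B, copied from the lists above.
GENERATION_TPS = {
    "M2 Pro": {"F16": 12.47, "Q8_0": 22.7, "Q4_0": 37.87},
    "M3": {"Q8_0": 12.27, "Q4_0": 21.34},  # no F16 result reported for the M3
}

def speedup(table, fast, slow):
    """Return {format: fast/slow ratio} for formats measured on both chips."""
    shared = table[fast].keys() & table[slow].keys()
    return {fmt: table[fast][fmt] / table[slow][fmt] for fmt in sorted(shared)}

for fmt, ratio in speedup(GENERATION_TPS, "M2 Pro", "M3").items():
    print(f"{fmt}: M2 Pro is {ratio:.2f}x faster at generation")
```

Only formats with results on both chips are compared, which is why F16 is skipped.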

Token Speed Processing: The Foundation of Understanding

Processing speed is equally important: it measures how quickly the model reads your prompt before it starts writing. The higher the number, the sooner the first token of the response appears, which matters most for long prompts.

Apple M2 Pro:

- F16: 312.65 tokens/second.

- Q8_0: 288.46 tokens/second.

- Q4_0: 294.24 tokens/second.

Apple M3:

- Q8_0: 187.52 tokens/second.

- Q4_0: 186.75 tokens/second.

Analysis: This is where the M2 Pro's muscle truly shows. It processes prompts roughly 1.5x faster than the M3 at both Q8_0 (288.46 vs. 187.52 tokens/second) and Q4_0 (294.24 vs. 186.75 tokens/second). Notice that, unlike generation speed, processing speed barely changes with the quantization level on either chip. The M3 is slower, but it still gets the job done.
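Processing and generation speeds combine into the wait you actually experience. The sketch below estimates total response time as prompt tokens divided by processing speed plus output tokens divided by generation speed, using the Q4_0 figures from this article. The 512-token prompt and 256-token answer are a hypothetical workload, and the estimate ignores model loading and other overhead.

```python
# Throughput in tokens/second for Llama 2 7B at Q4_0, taken from this article.
CHIPS = {
    "M2 Pro": {"processing": 294.24, "generation": 37.87},
    "M3": {"processing": 186.75, "generation": 21.34},
}

def estimate_seconds(chip, prompt_tokens, output_tokens):
    """Rough response time: prompt processing time plus token generation time."""
    speeds = CHIPS[chip]
    return prompt_tokens / speeds["processing"] + output_tokens / speeds["generation"]

# Hypothetical workload: a 512-token prompt and a 256-token answer.
for chip in CHIPS:
    total = estimate_seconds(chip, prompt_tokens=512, output_tokens=256)
    print(f"{chip}: about {total:.1f} s")
```

For a workload like this, generation time dominates the total on both chips, so the generation figures are the ones to weigh most heavily for chat-style use.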

Quantization: Shrinking the Model While Maintaining Power

Quantization is a technique to reduce the model's size, which benefits memory usage and speed. Think of it like compressing a picture to take up less storage space without sacrificing too much quality. We'll look at three formats:

- F16: This is the unquantized 16-bit floating-point format. It is the accuracy baseline, but also the largest and slowest to generate with.

- Q8_0: This reduced format uses less memory but may sacrifice some accuracy.

- Q4_0: This level has the smallest footprint but can have a noticeable impact on accuracy.

Analysis: The M2 Pro has results for all three formats, while the M3 lacks F16 data. The M3's Q8_0 and Q4_0 results are noticeably slower than the M2 Pro's, but still respectable. Since the M3 has half the memory bandwidth (100 GB/s vs. 200 GB/s), the smaller Q8_0 and Q4_0 formats are likely to be the more appealing choices on it.
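A rough way to see how much each format shrinks the model is to multiply the parameter count by the bits stored per weight. The sketch below does that under two assumptions that do not come from the benchmark data: a parameter count of about 6.74 billion for Llama 2 7B, and effective sizes of 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (the nominal 8 or 4 bits plus one 16-bit scale shared by each block of 32 weights). Real model files will differ somewhat.

```python
PARAMS = 6.74e9  # assumed parameter count for Llama 2 7B (approximate)

# Assumed effective bits per weight; Q8_0 and Q4_0 add one 16-bit scale per 32-weight block.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

def weights_gib(fmt, params=PARAMS):
    """Approximate size of the weights alone, in GiB."""
    return params * BITS_PER_WEIGHT[fmt] / 8 / 2**30

for fmt in BITS_PER_WEIGHT:
    print(f"{fmt}: ~{weights_gib(fmt):.1f} GiB")
```

The estimate covers weights only. Context buffers and the rest of the system need memory on top of it.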

Strengths and Weaknesses of Each Chip

Apple M2 Pro: The Versatile Powerhouse

Strengths:

- Exceptional Performance: The M2 Pro provides superior token speed both in processing and generation.

- Higher Memory Bandwidth: The 200 GB/s memory bandwidth, double that of the M3, lets it feed model weights to the GPU faster, which helps with larger models and heavier workloads.

- Versatile Usage: Its performance makes it suitable for a wider range of AI tasks and applications.

Weaknesses:

- Higher Price: The M2 Pro comes with a higher price tag compared to the M3.

Apple M3: The Efficient Performer

Strengths:

- More Affordable: The M3 costs less, making it a compelling option if you're on a tighter budget.

- Energy Efficiency: As a base-tier chip with fewer GPU cores, it typically draws less power, which helps battery life for mobile users.

Weaknesses:

- Lower Memory Bandwidth: The 100 GB/s memory bandwidth might limit how well it handles larger models and complex tasks.

- Slower Performance: The M3, while efficient, does not keep up with the M2 Pro in either processing or generation speed in these benchmarks.

Recommendation: Choosing the Right Match for Your LLM Needs

For those who prioritize speed and have a larger budget:

- Apple M2 Pro: The M2 Pro is an excellent choice if you need the fastest performance to handle complex AI tasks or run larger models. Its higher memory bandwidth and extra GPU cores make it well suited to complex projects and rapid development.

For those who prioritize efficiency and affordability:

- Apple M3: The M3 is a good fit if you are budget-conscious, work with smaller or more heavily quantized models, or care most about power efficiency. It's a great option for mobile users, casual AI enthusiasts, or those focusing on specific tasks.

FAQs: Demystifying the World of LLMs and Apple Chips

What is quantization?

Quantization is a process that reduces the size of an LLM by converting its parameters (the model's "knowledge") into a smaller format. This generally involves rounding off the values in the model to use fewer bits for storage and processing. Think of it like compressing a picture to take up less space without sacrificing too much quality. The downside is potentially a slight decrease in accuracy.
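To illustrate the rounding idea, here is a toy Python sketch of symmetric quantization: each block of weights is stored as small integers plus one shared scale, then multiplied back out when needed. This is a simplified illustration, not the exact scheme behind Q8_0 or Q4_0, and the weight values are made up.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization: map floats to small signed integers plus one scale."""
    qmax = 2 ** (bits - 1) - 1            # 7 for 4-bit, 127 for 8-bit
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0  # avoid dividing by zero for an all-zero block
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes

def dequantize_block(scale, codes):
    """Recover approximate floats from the stored scale and integer codes."""
    return [scale * c for c in codes]

weights = [0.12, -0.53, 0.31, 0.07, -0.26, 0.44, -0.09, 0.18]  # made-up example values
for bits in (8, 4):
    scale, codes = quantize_block(weights, bits)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: worst rounding error {worst:.4f}")
```

Fewer bits mean coarser steps between representable values, so the 4-bit round trip loses more precision than the 8-bit one. That is the accuracy trade-off described above.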

Can I run larger LLMs on the M3?

You might be able to run a larger model, provided it fits in your machine's memory. However, the M3's 100 GB/s memory bandwidth will likely limit your options compared to the M2 Pro, because larger models will generate tokens more slowly.

Should I always prioritize the fastest chip?

Not necessarily. Consider your budget, the complexity of your AI tasks, and the size of the model you want to use. If you're working with a smaller model and don't need blazing speed, the M3 could be a better choice.

Keywords

Apple M2 Pro, Apple M3, LLM, Llama 2 7B, token speed, quantization, memory bandwidth, performance, processing, generation, F16, Q8_0, Q4_0, AI, machine learning, developers, geeks, AI models, GPU, CPUs, performance benchmark, inference speed.