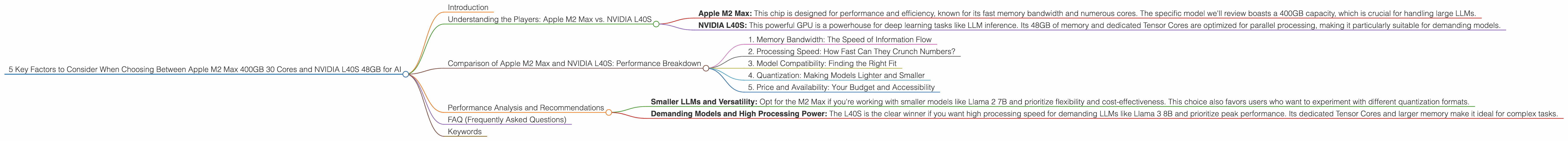

5 Key Factors to Consider When Choosing Between the Apple M2 Max (400GB/s, 30-Core GPU) and the NVIDIA L40S 48GB for AI

Introduction

Choosing the right hardware for running large language models (LLMs) can be daunting, especially for developers and enthusiasts new to local AI. A common dilemma is whether to pick an Apple M2 Max with 400GB/s of memory bandwidth and a 30-core GPU or an NVIDIA L40S GPU with 48GB of memory. Both perform well, but each shines in different areas. This article compares the two devices on key factors such as memory bandwidth, processing speed, and model compatibility.

Understanding the Players: Apple M2 Max vs. NVIDIA L40S

Let's introduce the hardware contenders:

- Apple M2 Max: This chip is designed for performance and efficiency and pairs its CPU and GPU cores with fast unified memory. The specific configuration we'll review has a 30-core GPU and 400GB/s of memory bandwidth, which matters for keeping large LLMs fed with data.

- NVIDIA L40S: This data-center GPU is built for deep learning tasks like LLM inference. Its 48GB of memory and dedicated Tensor Cores are optimized for parallel processing, making it particularly suitable for demanding models.

Comparison of Apple M2 Max and NVIDIA L40S: Performance Breakdown

1. Memory Bandwidth: The Speed of Information Flow

Memory Bandwidth: This refers to the rate at which data can be transferred between the CPU/GPU and its memory. Higher bandwidth means faster data access, which matters most during token generation, when the model's weights are read from memory for every token produced.

Winner: L40S

The M2 Max's 400GB/s of unified memory bandwidth is impressive for a laptop-class chip, but NVIDIA specifies 864GB/s for the L40S, more than double. What the M2 Max offers in return is a single memory pool shared by the CPU and GPU, so weights do not have to be copied across a PCIe bus into separate video memory. Think of bandwidth as a highway for data: both devices have fast highways, but the L40S has more lanes.
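A rough way to see why bandwidth matters for generation speed: if producing each token requires reading all of the model's weights from memory once, then bandwidth divided by model size gives a ceiling on tokens per second. The sketch below assumes exactly that simplification. The function name and the 4 GB model size are hypothetical, and real throughput will land below this ceiling because compute, caches, and software overhead are ignored.

```python
def max_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Ceiling on generation speed, assuming each token reads every weight once."""
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


# 400 GB/s is the M2 Max bandwidth discussed in this article.
# The 4.0 GB model size is a made-up value for illustration only.
ceiling = max_tokens_per_second(400.0, 4.0)
print(f"Upper bound: {ceiling:.1f} tokens/s")
```

Plugging in the published bandwidth of any other device gives a quick first comparison before you look at measured benchmarks.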

2. Processing Speed: How Fast Can They Crunch Numbers?

Speed: We measure processing speed in "tokens per second," indicating how quickly the device can process text data for LLM tasks.

Winner: L40S

The L40S takes a significant lead in tokens per second, especially with Llama 3 8B, which gives it a clear advantage for demanding models.

Practical Example: Imagine you have a long text document you want to analyze with an LLM. Both devices will work through it, but the L40S reads the document and produces its answer in noticeably less time.
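Tokens per second is simply the number of tokens produced divided by the wall-clock time it took. The sketch below shows one way to measure it around any generator of tokens. The helper names are hypothetical, and `fake_generate` is a stand-in that sleeps briefly per token; in practice you would swap in a call to your own inference library.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(n_tokens: int, seconds: float) -> float:
    """Throughput from a token count and an elapsed time in seconds."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return n_tokens / seconds


def measure(generate: Callable[[], Iterable[str]]) -> float:
    """Time a token generator end to end and return its tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return tokens_per_second(count, elapsed)


def fake_generate() -> Iterable[str]:
    """Stand-in for a real model: yields 50 dummy tokens with a short delay."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


rate = measure(fake_generate)
print(f"{rate:.1f} tokens/s")
```

When comparing devices, measure with the same model, the same quantization format, and the same prompt, since all three change the result.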

3. Model Compatibility: Finding the Right Fit

Model Compatibility: Different hardware platforms support various model formats, including weights quantized for a reduced memory footprint (like Q4_K_M) and FP16 (half-precision floating-point) formats.

Winner: It's a Tie!

Both devices run a range of model formats, including quantized formats for larger models and FP16 for smaller ones. However, support depends on the specific LLM and its implementation. For instance, the L40S excels with Llama 3 8B in both Q4_K_M and F16, while the M2 Max shines with the smaller Llama 2 7B in various formats.

Remember: This is where the "it depends" factor comes into play. The best device for you depends on the specific LLM you're using.
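A quick check before picking a format is whether the weights fit in memory at all. The sketch below estimates weight size from the parameter count and the bits stored per weight. The bits-per-weight figures are assumptions: 16 for F16, and 8.5 and 4.5 for 8-bit and 4-bit block formats that store one 16-bit scale per 32 weights. The parameter counts are nominal round numbers, and the function names are hypothetical. The estimate covers weights only, so the context cache and runtime buffers need extra headroom.

```python
# Assumed bits per weight, including one 16-bit scale per block of 32 weights
# for the two quantized formats. Treat these as approximations.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "8-bit blocks": 8.5,
    "4-bit blocks": 4.5,
}


def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params: float, bits_per_weight: float, memory_gb: float) -> bool:
    """True if the weights alone are smaller than the available memory."""
    return weight_size_gb(n_params, bits_per_weight) < memory_gb


# Nominal parameter counts; real checkpoints differ slightly.
for name, n_params in [("7B model", 7e9), ("8B model", 8e9)]:
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(n_params, bits)
        print(f"{name} {fmt}: {size:.2f} GB, fits in 48 GB: {fits(n_params, bits, 48.0)}")
```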

4. Quantization: Making Models Lighter and Smaller

Quantization: A Simplified Explanation

Imagine a large language model like a huge library filled with books. Each book represents a piece of information or "weight" that the model uses to process text. Quantization is like using smaller, compressed versions of these books so we can fit more into the library and read them faster.

Winner: It's Complicated!

Both devices support quantization, which makes models smaller and faster, but the quantization format (Q4_K_M, Q8, Q4) affects performance. For example, the L40S handles Q4_K_M quantized models exceptionally well for Llama 3 8B, while the M2 Max shines with Q8 and Q4 for Llama 2 7B.

Practical Example: You have a big book that takes up a lot of space in your library. Quantization is like using smaller versions of the same book, allowing you to fit more books on your shelves (memory) and find them faster.
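To make the library analogy concrete, here is a minimal sketch of one simple quantization scheme: symmetric, per-block rounding to signed integers with a single shared scale. This is an illustration under stated assumptions, not the exact layout of any named format, and the function names and sample weights are hypothetical.

```python
def quantize_block(weights: list[float], bits: int = 8) -> tuple[list[int], float]:
    """Map a block of floats to signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1          # 127 for 8 bits, 7 for 4 bits
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize_block(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


block = [0.12, -0.53, 0.98, -1.27, 0.0, 0.41]   # made-up weights

for bits in (8, 4):
    q, scale = quantize_block(block, bits)
    restored = dequantize_block(q, scale)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print(f"{bits}-bit: ints={q}, worst rounding error={worst:.4f}")
```

Fewer bits mean a coarser grid of representable values, so the 4-bit version saves more memory but rounds each weight less precisely. That is the trade-off behind choosing between quantization formats.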

5. Price and Availability: Your Budget and Accessibility

Price and Availability: The cost of the hardware plays a crucial role in the decision-making process.

Winner: It Depends!

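The M2 Max is generally considered more cost-effective than the L40S. It ships only as part of a complete Mac, so the price covers a ready-to-use system, and because Apple silicon Macs do not support external GPUs, there is no enclosure to add later. The L40S is a data-center card that has to be installed in a compatible server or workstation, which makes its total "out-of-the-box" cost higher.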

Performance Analysis and Recommendations

M2 Max: Offers strong memory bandwidth for its class and good versatility, making it a great choice for smaller LLMs. It is a good option for users who prioritize flexibility and cost-effectiveness, and it handles various quantization formats for smaller models well.

L40S: Powerful GPU with exceptional processing speed, ideal for demanding models like Llama 3 8B and tasks requiring high computational power. Its dedicated Tensor Cores and 48GB of dedicated memory make it a strong contender for users who prioritize performance. While fewer quantization formats are covered for the L40S in this comparison, its speed with Q4_K_M models makes it a valuable asset for high-performance applications.

Key Recommendations:

- Smaller LLMs and Versatility: Opt for the M2 Max if you're working with smaller models like Llama 2 7B and prioritize flexibility and cost-effectiveness. This choice also favors users who want to experiment with different quantization formats.

- Demanding Models and High Processing Power: The L40S is the clear winner if you want high processing speed for demanding LLMs like Llama 3 8B and prioritize peak performance. Its dedicated Tensor Cores and 48GB of dedicated memory make it ideal for complex tasks.

FAQ (Frequently Asked Questions)

Q: What are LLMs, and why are they so important?

A: LLMs, or Large Language Models, are powerful AI systems trained on massive datasets of text and code. They have a wide range of applications, from generating creative content to answering complex questions and even translating languages.

Q: How do I choose the best device for running LLMs?

A: Consider the size of the model, the required processing speed, the type of tasks you'll perform, and your budget. Don't forget about the importance of memory bandwidth and compatibility with different model formats.

Q: What's quantization, and how does it benefit me?

A: Quantization is a technique for reducing the size of LLMs by representing their "weights" with fewer bits, usually at a small cost in accuracy. The smaller model needs less memory and moves less data per token, so it fits on more devices and typically runs faster.

Q: What are some good resources for learning more about LLMs and hardware?

A: Check out the Hugging Face website, the llama.cpp project on GitHub, and publications from Google AI, OpenAI, and other leading research labs.

Keywords

Apple M2 Max, NVIDIA L40S, LLM, Llama 2, Llama 3, Large Language Models, AI, Memory Bandwidth, Processing Speed, Token Speed, Model Compatibility, Quantization, Q4_K_M, F16, GPU, CPU, Inference, Deep Learning