5 Key Factors to Consider When Choosing Between Apple M2 100gb 10cores and NVIDIA 4090 24GB for AI

Introduction

The world of large language models (LLMs) is exploding, with new models and capabilities emerging daily. Running these models on your local machine opens up a world of possibilities for developers, researchers, and anyone who wants to explore the power of AI.

But choosing the right hardware for your LLM needs can be a daunting task. You need a device that can handle the computational demands of these massive models while offering a balance between performance and cost. Two popular options for running LLMs locally are the Apple M2 100GB 10-core chip and the NVIDIA 4090 24GB GPU. This article dives into the key factors to consider when deciding between these powerful devices.

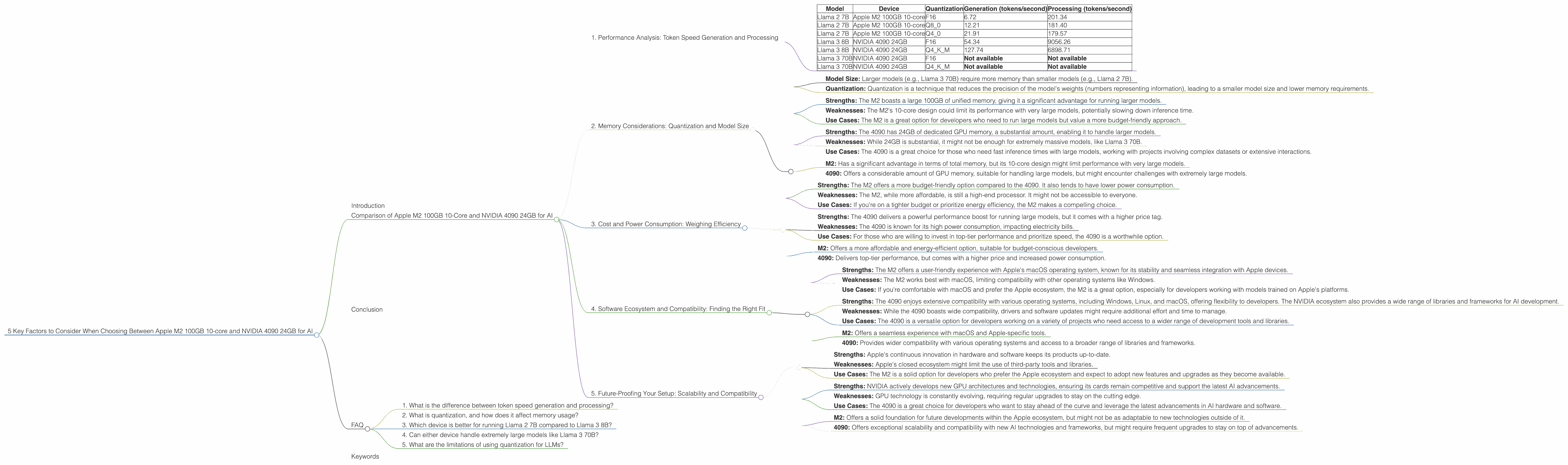

Comparison of Apple M2 100GB 10-Core and NVIDIA 4090 24GB for AI

1. Performance Analysis: Token Speed Generation and Processing

Let's kick off by comparing the token speed generation and processing capabilities of these two devices.

Token speed refers to the number of tokens a device can process per second. Higher token speeds mean faster inference times and quicker responses from your AI model.

Apple M2 100GB 10-Core:

- Strengths: The M2 excels with its impressive performance in processing smaller models. It offers a remarkable token speed for the Llama 2 7B model when using various quantization levels.

- Weaknesses: The M2 doesn't shine as brightly with larger models like Llama 3 70B, as there's no data available on its performance. This implies a potential bottleneck with larger models, impacting efficiency.

- Use Cases: The M2 is a fantastic choice for running smaller LLMs, like Llama 2 7B, for tasks such as text generation, translation, and basic code completion, especially for those who prioritize cost-effectiveness.

NVIDIA 4090 24GB:

- Strengths: The NVIDIA 4090 is a powerhouse when dealing with larger models. The data shows its exceptional performance with the Llama 3 8B model, delivering high token speeds for both processing and generation, even with the more memory-demanding Q4KM quantization.

- Weaknesses: The 4090's performance with Llama 3 70B remains unknown. This might be due to insufficient memory or limitations in current benchmarks.

- Use Cases: The 4090 is best for researchers and developers working with larger models, where speed and accuracy are critical. This includes tasks like generating intricate text formats, multi-turn conversations, and complex code completion.

Token Speed Generation and Processing Comparison:

| Model | Device | Quantization | Generation (tokens/second) | Processing (tokens/second) |

|---|---|---|---|---|

| Llama 2 7B | Apple M2 100GB 10-core | F16 | 6.72 | 201.34 |

| Llama 2 7B | Apple M2 100GB 10-core | Q8_0 | 12.21 | 181.40 |

| Llama 2 7B | Apple M2 100GB 10-core | Q4_0 | 21.91 | 179.57 |

| Llama 3 8B | NVIDIA 4090 24GB | F16 | 54.34 | 9056.26 |

| Llama 3 8B | NVIDIA 4090 24GB | Q4KM | 127.74 | 6898.71 |

| Llama 3 70B | NVIDIA 4090 24GB | F16 | Not available | Not available |

| Llama 3 70B | NVIDIA 4090 24GB | Q4KM | Not available | Not available |

Summary:

- M2: Outperforms the 4090 in token speed with smaller, lighter models (Llama 2 7B).

- 4090: Surpasses the M2 with larger models (Llama 3 8B), but data is unavailable for larger models (Llama 3 70B).

2. Memory Considerations: Quantization and Model Size

Let's dive into the world of memory. LLMs require a significant amount of RAM to function properly. To understand memory requirements, we need to consider two key aspects:

- Model Size: Larger models (e.g., Llama 3 70B) require more memory than smaller models (e.g., Llama 2 7B).

- Quantization: Quantization is a technique that reduces the precision of the model's weights (numbers representing information), leading to a smaller model size and lower memory requirements.

Think of quantization like a simplified version of a map. Imagine a highly detailed map with every single tree, road, and building meticulously marked. It would require an enormous amount of memory to store. Now, imagine a simplified map with only major roads and landmarks. It's easier to store!

Apple M2 100GB 10-Core:

- Strengths: The M2 boasts a large 100GB of unified memory, giving it a significant advantage for running larger models.

- Weaknesses: The M2's 10-core design could limit its performance with very large models, potentially slowing down inference time.

- Use Cases: The M2 is a great option for developers who need to run large models but value a more budget-friendly approach.

NVIDIA 4090 24GB:

- Strengths: The 4090 has 24GB of dedicated GPU memory, a substantial amount, enabling it to handle larger models.

- Weaknesses: While 24GB is substantial, it might not be enough for extremely massive models, like Llama 3 70B.

- Use Cases: The 4090 is a great choice for those who need fast inference times with large models, working with projects involving complex datasets or extensive interactions.

Memory Considerations Summary:

- M2: Has a significant advantage in terms of total memory, but its 10-core design might limit performance with very large models.

- 4090: Offers a considerable amount of GPU memory, suitable for handling large models, but might encounter challenges with extremely large models.

3. Cost and Power Consumption: Weighing Efficiency

Let's talk about money and energy! Both the M2 and the 4090 are powerful devices, but they come with different price tags and energy consumption.

Apple M2 100GB 10-Core:

- Strengths: The M2 offers a more budget-friendly option compared to the 4090. It also tends to have lower power consumption.

- Weaknesses: The M2, while more affordable, is still a high-end processor. It might not be accessible to everyone.

- Use Cases: If you're on a tighter budget or prioritize energy efficiency, the M2 makes a compelling choice.

NVIDIA 4090 24GB:

- Strengths: The 4090 delivers a powerful performance boost for running large models, but it comes with a higher price tag.

- Weaknesses: The 4090 is known for its high power consumption, impacting electricity bills.

- Use Cases: For those who are willing to invest in top-tier performance and prioritize speed, the 4090 is a worthwhile option.

Cost and Power Consumption Summary:

- M2: Offers a more affordable and energy-efficient option, suitable for budget-conscious developers.

- 4090: Delivers top-tier performance, but comes with a higher price and increased power consumption.

4. Software Ecosystem and Compatibility: Finding the Right Fit

The software ecosystem and compatibility can impact your LLM workflow.

Apple M2 100GB 10-Core:

- Strengths: The M2 offers a user-friendly experience with Apple's macOS operating system, known for its stability and seamless integration with Apple devices.

- Weaknesses: The M2 works best with macOS, limiting compatibility with other operating systems like Windows.

- Use Cases: If you're comfortable with macOS and prefer the Apple ecosystem, the M2 is a great option, especially for developers working with models trained on Apple's platforms.

NVIDIA 4090 24GB:

- Strengths: The 4090 enjoys extensive compatibility with various operating systems, including Windows, Linux, and macOS, offering flexibility to developers. The NVIDIA ecosystem also provides a wide range of libraries and frameworks for AI development.

- Weaknesses: While the 4090 boasts wide compatibility, drivers and software updates might require additional effort and time to manage.

- Use Cases: The 4090 is a versatile option for developers working on a variety of projects who need access to a wider range of development tools and libraries.

Software Ecosystem and Compatibility Summary:

- M2: Offers a seamless experience with macOS and Apple-specific tools.

- 4090: Provides wider compatibility with various operating systems and access to a broader range of libraries and frameworks.

5. Future-Proofing Your Setup: Scalability and Compatibility

As the field of LLMs rapidly evolves, it's crucial to consider future-proofing your setup.

Apple M2 100GB 10-Core:

- Strengths: Apple's continuous innovation in hardware and software keeps its products up-to-date.

- Weaknesses: Apple's closed ecosystem might limit the use of third-party tools and libraries.

- Use Cases: The M2 is a solid option for developers who prefer the Apple ecosystem and expect to adopt new features and upgrades as they become available.

NVIDIA 4090 24GB:

- Strengths: NVIDIA actively develops new GPU architectures and technologies, ensuring its cards remain competitive and support the latest AI advancements.

- Weaknesses: GPU technology is constantly evolving, requiring regular upgrades to stay on the cutting edge.

- Use Cases: The 4090 is a great choice for developers who want to stay ahead of the curve and leverage the latest advancements in AI hardware and software.

Future-Proofing Summary:

- M2: Offers a solid foundation for future developments within the Apple ecosystem, but might not be as adaptable to new technologies outside of it.

- 4090: Offers exceptional scalability and compatibility with new AI technologies and frameworks, but might require frequent upgrades to stay on top of advancements.

Conclusion

The choice between the Apple M2 100GB 10-core and the NVIDIA 4090 24GB for running LLMs depends on your priorities, budget, and specific use case.

The M2 is an excellent choice for budget-conscious developers who prioritize efficiency and ease of use within the Apple ecosystem, especially for lighter models. It offers a balance of performance and affordability.

The 4090 is a power player for developers who prioritize performance and scalability, especially for larger models. It handles complex tasks with speed and efficiency, but comes with a higher price tag and increased power consumption.

Ultimately, the best device for you depends on your unique needs and priorities. Consider your project scope, your budget, and future plans to make the decision that suits you best.

FAQ

1. What is the difference between token speed generation and processing?

Token speed generation refers to the speed at which a device can generate new tokens. It's essentially how fast your model can create new text, code, or other output.

Token speed processing refers to the speed at which a device can process existing tokens. It's how quickly your model can analyze and understand the input it receives.

2. What is quantization, and how does it affect memory usage?

Quantization is a technique that reduces the precision of a model's weights, the numbers representing information in a neural network. Think of it as using a smaller number of bits to represent a number. This leads to a smaller model size and lower memory requirements, making it possible to run larger models on devices with limited memory.

3. Which device is better for running Llama 2 7B compared to Llama 3 8B?

For Llama 2 7B, the Apple M2 100GB 10-core is a more suitable option due to its impressive token speeds and adequate memory.

For Llama 3 8B, the NVIDIA 4090 24GB is the better choice, offering higher token speeds and sufficient memory for its larger size.

4. Can either device handle extremely large models like Llama 3 70B?

While the M2 has 100GB of memory, its performance with extremely large models like Llama 3 70B remains unclear. The 4090, with its 24GB of GPU memory, could potentially struggle with these models due to memory limitations.

5. What are the limitations of using quantization for LLMs?

While quantization reduces model size and memory requirements, it can also lead to a slight reduction in accuracy. The trade-off between accuracy and reduced memory usage needs to be carefully considered based on your specific needs.

Keywords

Apple M2, NVIDIA 4090, LLM, AI, deep learning, token speed, generation, processing, quantization, memory, cost, power consumption, software ecosystem, compatibility, future-proofing, Llama 2, Llama 3, model size