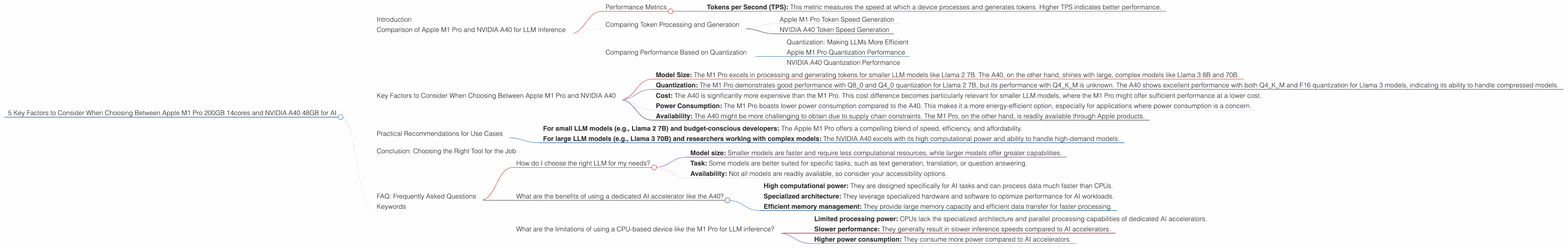

5 Key Factors to Consider When Choosing Between the Apple M1 Pro (200 GB/s, 14 GPU Cores) and the NVIDIA A40 48GB for AI

Introduction

The world of artificial intelligence (AI) is evolving at a breakneck pace, with Large Language Models (LLMs) taking center stage. These powerful models, capable of generating human-like text, translating languages, and writing different kinds of creative content, require significant computational resources to operate efficiently. Selecting the right hardware for your LLM needs is crucial, and two popular choices are the Apple M1 Pro (the variant with 200 GB/s of memory bandwidth and a 14-core GPU) and the NVIDIA A40 with 48 GB of memory.

This article will delve into the key factors to consider when choosing between these two devices, analyzing their performance in processing and generating tokens for various LLM models. By comparing their strengths and weaknesses, we'll aim to provide you with a clear understanding of which device best suits your specific AI needs.

Comparison of Apple M1 Pro and NVIDIA A40 for LLM Inference

Let's dig into the nitty-gritty of comparing the Apple M1 Pro (200 GB/s, 14 GPU cores) and the NVIDIA A40 48GB in the context of LLM inference. We'll focus on their performance in processing and generating tokens for different LLM models and configurations.

Performance Metrics

- Tokens per Second (TPS): This metric measures how many tokens a device handles each second, either while processing the prompt or while generating output. Higher TPS indicates better performance.
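As a rough illustration of how TPS can be measured, here is a minimal sketch that times a single generation call and divides the token count by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model call (it just sleeps), so the number it prints says nothing about either device.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[int], List[str]], n_tokens: int) -> float:
    """Time one call to `generate` and return tokens produced per second."""
    start = time.perf_counter()
    tokens = generate(n_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(n_tokens: int) -> List[str]:
    """Hypothetical stand-in for a model: sleeps about 1 ms per token."""
    out = []
    for i in range(n_tokens):
        time.sleep(0.001)
        out.append(f"tok{i}")
    return out


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate, 50):.1f} tokens/s")
```

In practice you would time prompt processing and token generation separately, since the two phases usually run at very different speeds.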

Comparing Token Processing and Generation

Apple M1 Pro Token Generation Speed

The M1 Pro, known for its efficiency and power, performs remarkably well with smaller LLMs. It shines when processing and generating tokens for the Llama 2 7B model, especially with quantized formats. This suggests that for smaller models, the M1 Pro offers a compelling combination of speed and affordability.

However, the M1 Pro's performance can be expected to drop significantly with larger LLMs, which demand more memory and memory bandwidth. We lack data on its performance with Llama 3 models, so we can't draw firm conclusions about larger-model inference on this chip.

NVIDIA A40 Token Generation Speed

The A40, a powerhouse GPU designed for heavy-duty tasks, excels in handling larger LLM models. It demonstrates superior performance in processing and generating tokens for Llama 3 8B and 70B models, including with the Q4_K_M quantization format. This makes it a suitable choice for researchers and developers working with large, complex models.

The A40 outshines the M1 Pro on larger models, but we have no A40 results for smaller LLMs like Llama 2 7B, so the two devices can't be compared directly at that size. On the available data, the A40 is best viewed as a specialized tool for larger models.

Comparing Performance Based on Quantization

Quantization: Making LLMs More Efficient

Think of quantization as a way to slim down a large LLM, making it more manageable and faster to run. It's like compressing a large file to fit it on a smaller device – less storage space, but the same essential information.

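There are different levels of quantization. Of the formats discussed here, Q4_0 and Q4_K_M are the most compressed (roughly 4 to 5 bits per weight), followed by Q8_0 (about 8 bits per weight), while F16 is uncompressed 16-bit half precision. The more compressed the format, the smaller the model and, in general, the faster the token generation, at the cost of a potential slight drop in accuracy.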
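To make the idea concrete, here is a minimal sketch of 8-bit block quantization: each block of weights is stored as small integers plus one shared scale. The block size of 32 and the function names are assumptions for illustration; this is a simplified scheme, not the exact storage layout of any real model format.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # assumed block length for this sketch


def quantize_block(values: List[float]) -> Tuple[float, List[int]]:
    """Map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    # An all-zero block gets a dummy scale to avoid dividing by zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale: float, quants: List[int]) -> List[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    # Made-up weights in roughly [-0.32, 0.31], for demonstration only.
    weights = [((i * 37) % 64 - 32) / 100 for i in range(BLOCK_SIZE)]
    scale, quants = quantize_block(weights)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"scale={scale:.6f}, worst rounding error={worst:.6f}")
```

The rounding error per weight is at most half the scale, which is why quantization shrinks the model a lot while changing each weight only slightly.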

Apple M1 Pro Quantization Performance

The M1 Pro demonstrates impressive performance with Q8_0 and Q4_0 quantization for Llama 2 7B. It exhibits a consistent improvement in TPS compared to the F16 format, showcasing its efficiency in handling compressed models.

We don't have data for the M1 Pro's performance with Q4_K_M quantization, so we cannot compare it to the A40's. Any difficulty the M1 Pro has with larger LLMs is more likely to come from its memory capacity and bandwidth than from the compression format itself.

NVIDIA A40 Quantization Performance

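The A40 demonstrates excellent performance with both Q4_K_M and F16 (unquantized half precision) for Llama 3 8B and 70B. Interestingly, for Llama 3 8B, it performs slightly better with Q4_K_M than with F16, showing that it handles highly compressed models effectively. Keep in mind that Llama 3 70B at F16 needs roughly 140 GB for its weights alone (70 billion parameters at 2 bytes each), which is more than a single A40's 48 GB, so any 70B F16 result implies multiple GPUs or offloading.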

Again, the lack of A40 data for Q8_0 and Q4_0 quantization with Llama 2 7B prevents a complete comparison. Without those numbers, we can't say how the A40 stacks up against the M1 Pro on smaller models; we only know that it is designed for heavier computational tasks.

Key Factors to Consider When Choosing Between Apple M1 Pro and NVIDIA A40

Here's a breakdown of key factors to consider when choosing between the Apple M1 Pro and the NVIDIA A40:

- Model Size: The M1 Pro excels in processing and generating tokens for smaller LLM models like Llama 2 7B. The A40, on the other hand, shines with large, complex models like Llama 3 8B and 70B.

- Quantization: The M1 Pro demonstrates good performance with Q8_0 and Q4_0 quantization for Llama 2 7B, but its performance with Q4_K_M is unknown. The A40 shows excellent performance with both Q4_K_M and F16 for Llama 3 models, indicating its ability to handle compressed models.

- Cost: The A40 is significantly more expensive than the M1 Pro. This cost difference becomes particularly relevant for smaller LLM models, where the M1 Pro might offer sufficient performance at a lower cost.

- Power Consumption: The M1 Pro boasts lower power consumption compared to the A40. This makes it a more energy-efficient option, especially for applications where power consumption is a concern.

- Availability: The A40 might be more challenging to obtain due to supply chain constraints. The M1 Pro, on the other hand, is readily available through Apple products.
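Model size and quantization together decide whether a model fits in a device's memory at all. The sketch below estimates weight storage from parameter count and bits per weight. The bits-per-weight figures are approximate assumptions, the `headroom` factor is a hypothetical allowance for everything besides weights, and the estimate ignores the KV cache and runtime overhead.

```python
# Approximate bits per weight for each format (assumed values for illustration).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85, "Q4_0": 4.5}


def weight_memory_gb(params_billion: float, fmt: str) -> float:
    """Estimate weight storage in GB (1 GB = 1e9 bytes)."""
    # billions of parameters * bits per weight / 8 bits per byte = GB
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits(params_billion: float, fmt: str, device_memory_gb: float,
         headroom: float = 0.9) -> bool:
    """True if the weights fit in `headroom` of the device's memory."""
    return weight_memory_gb(params_billion, fmt) <= device_memory_gb * headroom


if __name__ == "__main__":
    for size, fmt in [(7, "F16"), (7, "Q4_0"), (70, "Q4_K_M"), (70, "F16")]:
        gb = weight_memory_gb(size, fmt)
        print(f"{size}B {fmt}: ~{gb:.1f} GB, fits in 48 GB: {fits(size, fmt, 48)}")
```

Under these assumptions, a 70B model at Q4_K_M comes to about 42 GB and squeezes into 48 GB, while the same model at F16 needs about 140 GB and does not.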

Practical Recommendations for Use Cases

Here's a guide to help you choose the right device based on your use case:

- For small LLM models (e.g., Llama 2 7B) and budget-conscious developers: The Apple M1 Pro offers a compelling blend of speed, efficiency, and affordability.

- For large LLM models (e.g., Llama 3 70B) and researchers working with complex models: The NVIDIA A40 excels with its high computational power and ability to handle high-demand models.

Conclusion: Choosing the Right Tool for the Job

The choice between the Apple M1 Pro and the NVIDIA A40 depends on your specific needs and priorities. The M1 Pro offers a practical and cost-effective solution for smaller LLMs, while the A40 provides the firepower to handle large, state-of-the-art models. By understanding the key factors and their implications, you can make an informed decision and choose the device that best aligns with your LLM journey.

FAQ: Frequently Asked Questions

How do I choose the right LLM for my needs?

The choice of LLM depends on your specific application. Consider factors like:

- Model size: Smaller models are faster and require less computational resources, while larger models offer greater capabilities.

- Task: Some models are better suited for specific tasks, such as text generation, translation, or question answering.

- Availability: Not all models are readily available, so consider your accessibility options.

What are the benefits of using a dedicated AI accelerator like the A40?

AI accelerators like the A40 offer the following benefits:

- High computational power: They are designed specifically for AI tasks and can process data much faster than CPUs.

- Specialized architecture: They leverage specialized hardware and software to optimize performance for AI workloads.

- Efficient memory management: They provide large memory capacity and efficient data transfer for faster processing.

What are the limitations of using a general-purpose chip like the M1 Pro for LLM inference?

The M1 Pro is a system-on-chip that combines CPU cores with an integrated GPU rather than a dedicated accelerator. It can run LLM inference, but it has limitations:

- Limited processing power: It offers far less parallel compute than a dedicated data-center GPU such as the A40.

- Slower performance: It generally delivers slower inference speeds than dedicated AI accelerators, especially on larger models.

- Power trade-off: It draws less power than the A40, but slower inference means longer run times, which can offset part of that advantage on large workloads.
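One way to compare power use fairly is energy per token rather than raw wattage. The sketch below divides average power draw by throughput. The device labels and numbers in the usage example are made-up placeholders, not measurements of the M1 Pro or the A40.

```python
def joules_per_token(avg_power_watts: float, tokens_per_second: float) -> float:
    """Energy spent per generated token: watts divided by tokens per second."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return avg_power_watts / tokens_per_second


if __name__ == "__main__":
    # Placeholder figures for two hypothetical devices.
    low_power_chip = joules_per_token(avg_power_watts=30.0, tokens_per_second=20.0)
    big_gpu = joules_per_token(avg_power_watts=300.0, tokens_per_second=100.0)
    print(f"low-power chip: {low_power_chip:.2f} J/token")
    print(f"big GPU:        {big_gpu:.2f} J/token")
```

A device that draws more power can still be the more efficient choice if its throughput is proportionally higher, so it is worth plugging in your own measured numbers.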

Keywords

Apple M1 Pro, NVIDIA A40, LLM, Llama 2, Llama 3, token processing, token generation, TPS, quantization, F16, Q4_K_M, Q8_0, Q4_0, cost, power consumption, AI accelerator, CPU, inference speed, use cases, recommendations, AI, machine learning, natural language processing, deep learning.