5 Key Factors to Consider When Choosing Between the Apple M1 Max (400 GB/s, 24 GPU Cores) and Apple M3 Max (400 GB/s, 40 GPU Cores) for AI

Introduction

The world of AI is abuzz with excitement over Large Language Models (LLMs) like Llama 2 and Llama 3. These sophisticated models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But to run these powerful LLMs locally, you need a device with a lot of horsepower.

That's where Apple's M1 Max and M3 Max chips come in. Their integrated GPUs and high-bandwidth unified memory make them well suited to demanding tasks like AI and machine learning. But with so many options and specifications, it can be difficult to decide which chip is right for your needs.

In this article, we'll dive deep into the performance of the Apple M1 Max (400 GB/s memory bandwidth, 24 GPU cores) and the Apple M3 Max (400 GB/s memory bandwidth, 40 GPU cores), comparing how well they run various LLMs. We'll analyze the key factors you need to consider, including processing speed, memory bandwidth, and quantization, to help you make an informed decision.

Comparison of the Apple M1 Max (24 GPU Cores) and Apple M3 Max (40 GPU Cores) for Llama 2 and Llama 3

Let's break down the performance of these two Apple processors, focusing on their ability to handle different LLM models and sizes.

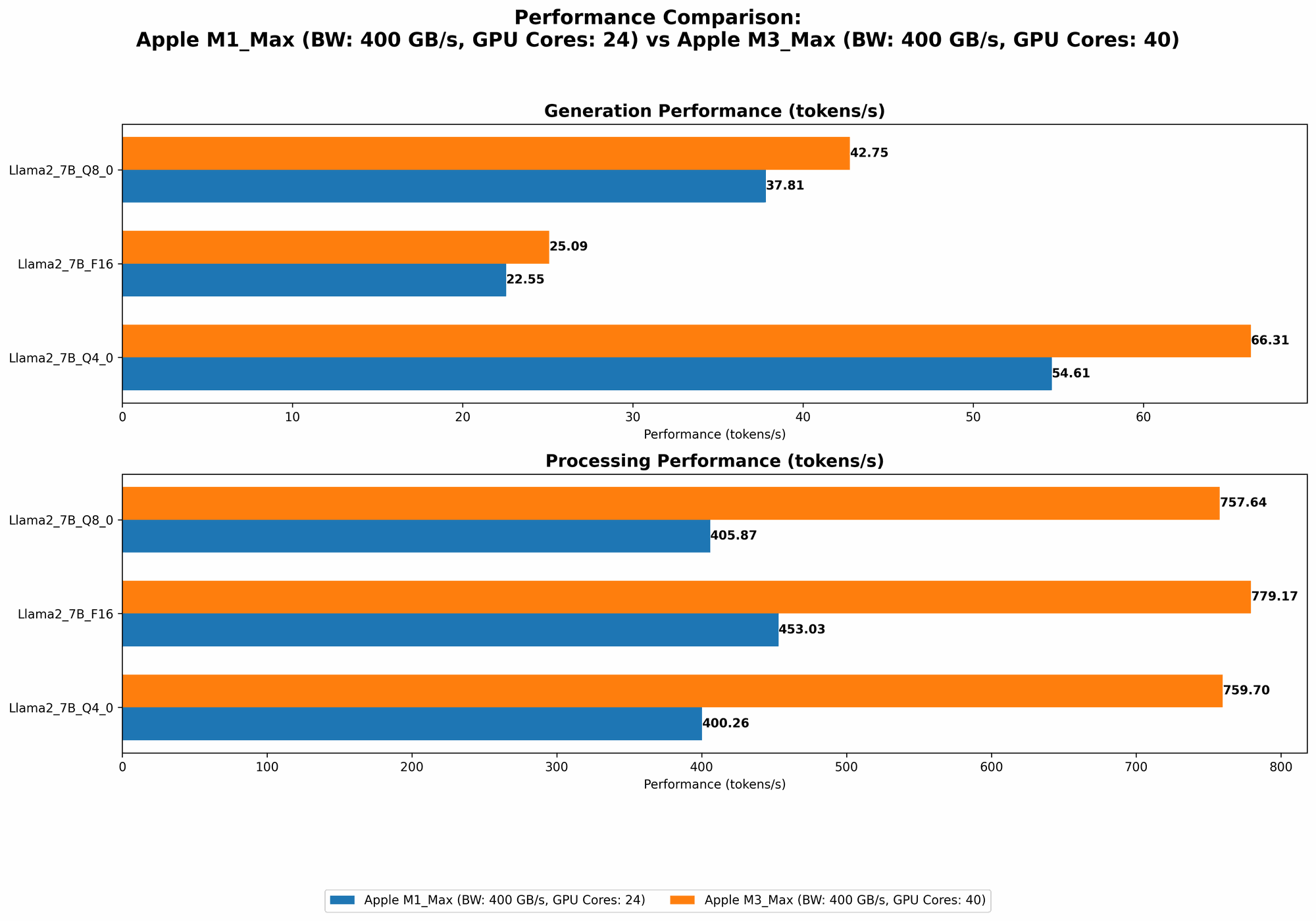

Token Generation Speed for Llama 2

How quickly a device handles tokens is a crucial factor for LLMs. The table below reports two rates for each Llama 2 7B variant: processing (how fast the prompt is read) and generation (how fast new tokens are produced), across three quantization levels.

| Configuration | Llama 2 7B F16 Processing (tokens/s) | Llama 2 7B F16 Generation (tokens/s) | Llama 2 7B Q8_0 Processing (tokens/s) | Llama 2 7B Q8_0 Generation (tokens/s) | Llama 2 7B Q4_0 Processing (tokens/s) | Llama 2 7B Q4_0 Generation (tokens/s) |
|---|---|---|---|---|---|---|
| Apple M1 Max, 24 GPU cores (400 GB/s) | 453.03 | 22.55 | 405.87 | 37.81 | 400.26 | 54.61 |
| Apple M1 Max, 32 GPU cores (400 GB/s) | 599.53 | 23.03 | 537.37 | 40.2 | 530.06 | 61.19 |
| Apple M3 Max, 40 GPU cores (400 GB/s) | 779.17 | 25.09 | 757.64 | 42.75 | 759.7 | 66.31 |

Analysis:

Processing: The M3 Max offers the fastest processing speeds for Llama 2 7B at every quantization level. It is roughly 1.7x to 1.9x faster than the 24-core M1 Max (for example, 779.17 vs. 453.03 tokens/second at F16) and about 1.3x to 1.4x faster than the 32-core M1 Max.

Generation: The gap is much smaller here. The M3 Max generates tokens about 11% to 21% faster than the 24-core M1 Max (25.09 vs. 22.55 tokens/second at F16, 66.31 vs. 54.61 at Q4_0). Both chips are fast enough for real-time interaction with Llama 2 7B, particularly when the model is quantized.
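The ratios behind this analysis can be recomputed directly from the table. The snippet below is a small sketch that does so; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Throughput figures (tokens/second) copied from the Llama 2 7B table.
# Each entry is (prompt processing, token generation).
LLAMA2_7B = {
    "M1 Max 24-core": {"F16": (453.03, 22.55), "Q8_0": (405.87, 37.81), "Q4_0": (400.26, 54.61)},
    "M3 Max 40-core": {"F16": (779.17, 25.09), "Q8_0": (757.64, 42.75), "Q4_0": (759.70, 66.31)},
}


def speedup(baseline: str, candidate: str, quant: str) -> tuple[float, float]:
    """Return (processing, generation) speedup of candidate over baseline."""
    base_pp, base_tg = LLAMA2_7B[baseline][quant]
    cand_pp, cand_tg = LLAMA2_7B[candidate][quant]
    return cand_pp / base_pp, cand_tg / base_tg


for quant in ("F16", "Q8_0", "Q4_0"):
    pp, tg = speedup("M1 Max 24-core", "M3 Max 40-core", quant)
    print(f"{quant}: processing x{pp:.2f}, generation x{tg:.2f}")
```

Swapping in the 32-core M1 Max figures from the same table gives the comparison against the larger M1 Max configuration.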

Token Generation Speed for Llama 3

Let's see how these processors perform with the newer Llama 3 models. Here we focus on Q4_K_M quantization (a 4-bit format that keeps memory use low) and F16 (higher accuracy but much greater memory demands). Note that the M1 Max results in this section come from the 32-core configuration.

| Configuration | Llama 3 8B Q4_K_M Processing (tokens/s) | Llama 3 8B Q4_K_M Generation (tokens/s) | Llama 3 8B F16 Processing (tokens/s) | Llama 3 8B F16 Generation (tokens/s) |
|---|---|---|---|---|
| Apple M1 Max, 32 GPU cores (400 GB/s) | 355.45 | 34.49 | 418.77 | 18.43 |
| Apple M3 Max, 40 GPU cores (400 GB/s) | 678.04 | 50.74 | 751.49 | 22.39 |

| Configuration | Llama 3 70B Q4_K_M Processing (tokens/s) | Llama 3 70B Q4_K_M Generation (tokens/s) |
|---|---|---|
| Apple M1 Max, 24 GPU cores (400 GB/s) | No data | No data |
| Apple M1 Max, 32 GPU cores (400 GB/s) | 33.01 | 4.09 |
| Apple M3 Max, 40 GPU cores (400 GB/s) | 62.88 | 7.53 |

Analysis:

Processing: Against the 32-core M1 Max, the M3 Max is roughly 1.8x to 1.9x faster at processing in all three Llama 3 configurations, including the 70B model (62.88 vs. 33.01 tokens/second).

Generation: The M3 Max generates faster in every Llama 3 test, by between about 21% (8B F16) and 84% (70B Q4_K_M). Both chips slow down sharply on the 70B model, to 4.09 and 7.53 tokens/second, because every generated token requires reading far more weights than with the 8B model.

Llama 3 70B F16: No data is available for Llama 3 70B in F16, most likely because of its memory requirements. At 2 bytes per parameter, the weights alone come to about 140 GB, more than the maximum unified memory of either chip (64 GB on the M1 Max, 128 GB on the M3 Max).
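To get a feel for which model and quantization combinations can fit in memory, a back-of-the-envelope estimate of the weight size is enough. The sketch below assumes nominal parameter counts (8 billion and 70 billion) and llama.cpp-style block formats; the bits-per-weight value for Q4_K_M is an assumed average rather than an exact figure, and the estimate ignores the context cache and other runtime overhead.

```python
# Approximate bits stored per weight for each format.
# F16, Q8_0 and Q4_0 follow from their block layouts (assumed llama.cpp-style);
# the Q4_K_M value is an assumed average, since that format mixes block types.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}


def weight_size_gb(n_params: float, quant: str) -> float:
    """Estimate the size of the weights alone, in GB (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


for name, n_params in (("8B", 8e9), ("70B", 70e9)):
    for quant in ("F16", "Q4_K_M"):
        size = weight_size_gb(n_params, quant)
        print(f"Llama 3 {name} {quant}: ~{size:.0f} GB of weights")
```

Comparing the result against the unified memory of a given configuration gives a quick first check before downloading a model.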

Key Performance Factors to Consider

Now that we've delved into the raw numbers, let's examine some of the key performance factors you should consider when choosing between these Apple processors.

1. GPU Cores: More Cores, More Power

- M1 Max: 24 or 32 cores (depending on configuration)

- M3 Max: 40 cores

The M3 Max's additional GPU cores give it a clear edge in compute-heavy work such as prompt processing, where it is about 1.7x to 1.9x faster than the 24-core M1 Max on Llama 2 7B. More cores mean more calculations running in parallel, which shortens execution time.

2. Memory Bandwidth: Faster Data Transfer

- M1 Max and M3 Max: 400 GB/s

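Both chips offer 400 GB/s of memory bandwidth, the rate at which the CPU and GPU can read from their shared unified memory. Token generation has to stream the model's weights from memory for every token, so it tends to be limited by bandwidth rather than by compute. That is consistent with the two chips generating tokens at similar speeds despite the large gap in GPU cores.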
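A rough ceiling on generation speed follows from this: if every token requires reading all weights once, tokens per second cannot exceed bandwidth divided by model size. The sketch below applies that simplified model to Llama 2 7B in F16, assuming a nominal 7 billion parameters at 2 bytes each (about 14 GB); the function name is illustrative, and real systems add overheads this model ignores.

```python
BANDWIDTH_GB_S = 400.0  # memory bandwidth quoted for both chips


def max_generation_rate(weights_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/second if each token reads all weights once."""
    return bandwidth_gb_s / weights_gb


# Assumption: Llama 2 7B in F16 is about 7e9 parameters * 2 bytes = 14 GB.
ceiling = max_generation_rate(14.0)
print(f"bandwidth ceiling: {ceiling:.1f} tokens/s")

# Measured F16 generation speeds from the Llama 2 7B table.
for chip, measured in (("M1 Max 24-core", 22.55), ("M3 Max 40-core", 25.09)):
    print(f"{chip}: {measured:.2f} tokens/s, {measured / ceiling:.0%} of the ceiling")
```

Under these assumptions the ceiling is about 28.6 tokens/second, so both measured results sit within reach of what 400 GB/s allows, which helps explain why extra GPU cores add little to generation speed.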

3. Quantization: Trading Accuracy for Speed

- Q4_K_M: Reduced model size and memory usage, slightly less accurate

- Q8_0: Smaller than F16, but more accurate than Q4_K_M

- F16: Most accurate but requires significant memory

Quantization trades some accuracy for lower memory usage and faster generation by storing each weight in fewer bits. Both chips can run models at any of these quantization levels, so you can balance accuracy against memory usage for your specific needs. On memory-constrained configurations, or for tasks that demand speed, formats like Q4_K_M and Q8_0 are usually the better choice.
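The idea behind block-wise quantization can be shown in a few lines. The sketch below is a simplified illustration in the spirit of 8-bit formats such as Q8_0, not a reproduction of any library's exact layout: it assumes blocks of 32 values sharing one scale factor, and the function names are hypothetical.

```python
import random

BLOCK_SIZE = 32  # number of values sharing one scale factor (assumed)


def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 8-bit integers plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"scale={scale:.6f}, max round-trip error={max_error:.6f}")
```

Each value is stored as a small integer instead of a 16-bit float, and the round-trip error stays within half a quantization step. Formats with fewer bits per weight shrink the model further at the cost of a larger step and therefore larger errors.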

4. Model Size: Smaller is Faster

- Llama 2 7B: Relatively small, can be run on many devices

- Llama 3 8B: Larger, requires more memory for optimal performance

- Llama 3 70B: Extremely large; needs a configuration with enough unified memory to hold the quantized weights

Model size plays a significant role in performance. On Llama 3 70B (Q4_K_M), the M3 Max reaches 62.88 tokens/second for processing and 7.53 for generation, compared with 33.01 and 4.09 on the 32-core M1 Max. Smaller models like Llama 2 7B perform well on both chips.

5. Use Cases: Matching the Right Tool for the Job

- M1 Max: Excellent for tasks requiring moderate processing power, like smaller LLM models or less demanding applications (e.g. coding, video editing).

- M3 Max: Ideal for demanding AI workloads, especially when working with larger LLMs or heavy, computationally intensive tasks, such as scientific simulations or complex machine learning algorithms.

Recommendations

- For casual users who want to experiment with LLMs: The M1 Max could be a great starting point. It can handle smaller models like Llama 2 7B efficiently.

- For developers and researchers: The M3 Max is the ideal choice. Its processing power and memory capabilities are crucial for larger models and complex tasks.

Performance Analysis: A Deeper Dive

Let's dive deeper into the performance implications of these processors. To understand the difference between these two chips, let's use a real-world analogy. Imagine you have two cars: one with a 4-cylinder engine and one with an 8-cylinder engine.

The 4-cylinder car (M1 Max) can get you around town with ease. It's efficient and reliable, perfect for everyday tasks. But if you need to haul a heavy trailer up a steep hill (like running a large LLM), the 8-cylinder car (M3 Max) is the better choice. It has the power to conquer those challenges.

FAQs

What is quantization and how does it improve performance?

Quantization is a technique used to reduce the memory footprint of LLMs. Imagine an LLM as a photograph: it stores information about every shade of color, and the more colors there are, the larger the file. Quantization reduces the "color palette" used in this digital "photograph," making it smaller and faster to load. Because fewer bytes have to be read for each token, generation also speeds up: on the 24-core M1 Max, Llama 2 7B goes from 22.55 tokens/second at F16 to 54.61 at Q4_0.

What are the other applications for these Apple chips beyond LLMs?

These chips are incredibly versatile and can be used for a wide range of tasks, including:

- Video Editing: Apple M1 Max and M3 Max can handle demanding video editing tasks with ease, including 4K and 8K video editing.

- 3D Modeling: The powerful GPU cores allow for fast rendering and manipulation of complex 3D models.

- Gaming: These chips can deliver excellent performance in games, supporting high resolutions and frame rates.

- Music Production: The processors provide ample power for complex audio mixing and mastering.

- Machine Learning: Beyond LLMs, these chips are well-suited for general machine learning and AI applications.

What other devices could I consider for running LLMs?

While we focused on Apple M1 Max and M3 Max, there are other powerful devices available, such as:

- NVIDIA GeForce RTX 40 series GPUs: Known for their high processing power and dedicated AI capabilities, they are popular for running LLMs.

- AMD Radeon RX 7000 series GPUs: A consumer alternative to NVIDIA's cards, though software support for LLM workloads varies from tool to tool.

- Google TPU v4: Designed specifically for machine learning and offered through Google Cloud rather than as a local device, these chips target large-scale LLM workloads.

Keywords

Apple M1 Max, Apple M3 Max, LLM, Llama 2, Llama 3, Quantization, Token Speed Generation, GPU cores, Memory Bandwidth, AI, Machine Learning, Performance Comparison, AI Development, Large Language Models, GPU Benchmarks, Tokenization, Processing, Generation, Deep Learning