5 Key Factors to Consider When Choosing Between the Apple M1 (68 GB/s, 7-core GPU) and NVIDIA RTX 5000 Ada 32GB for AI

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new models such as Llama 2 and Llama 3 appearing regularly. For AI enthusiasts and developers, running these models locally has become increasingly important, which makes the choice of hardware critical.

This article examines the performance of two popular options for running LLMs: the Apple M1 (68 GB/s memory bandwidth, 7-core GPU) and the NVIDIA RTX 5000 Ada 32GB. Think of this like choosing the right engine for your AI race car. We'll explore the strengths and weaknesses of each device so you can make an informed decision based on your specific needs and budget.

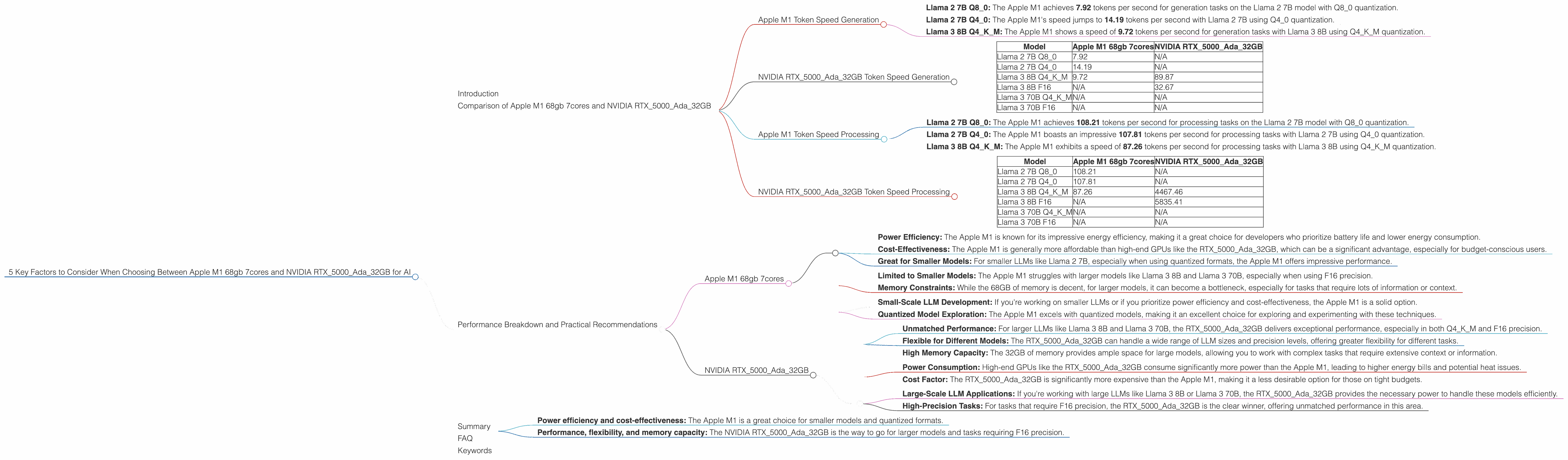

Comparison of the Apple M1 (68 GB/s, 7-core GPU) and NVIDIA RTX 5000 Ada 32GB

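Let's dive into the core of our comparison, focusing on performance metrics for different LLMs, especially Llama 2 and Llama 3. We'll use token speed, measured in tokens per second, as our yardstick. Generation speed is how quickly a device produces new tokens; processing speed is how quickly it reads the input prompt.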

Apple M1 Token Speed Generation

The Apple M1 handles smaller LLMs reasonably well, and quantized formats make a clear difference to its speed.

- Llama 2 7B Q8_0: The Apple M1 achieves 7.92 tokens per second for generation tasks on the Llama 2 7B model with Q8_0 quantization.

- Llama 2 7B Q4_0: The Apple M1's speed jumps to 14.19 tokens per second with Llama 2 7B using Q4_0 quantization.

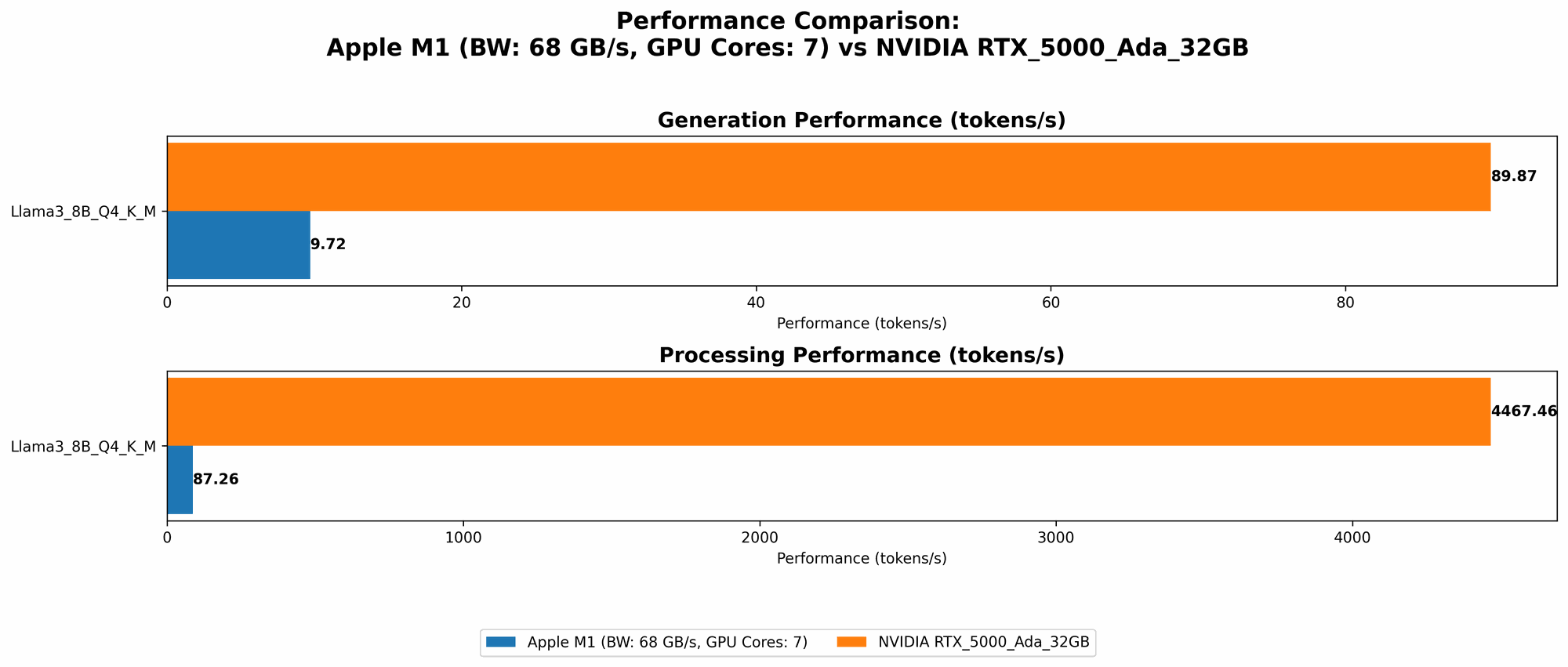

- Llama 3 8B Q4_K_M: The Apple M1 shows a speed of 9.72 tokens per second for generation tasks with Llama 3 8B using Q4_K_M quantization.

NVIDIA RTX 5000 Ada 32GB Token Speed Generation

The NVIDIA RTX 5000 Ada 32GB, on the other hand, is a powerhouse. It was tested on Llama 3 8B in both Q4_K_M and F16 precision.

- Llama 3 8B Q4_K_M: The RTX 5000 Ada boasts a speed of 89.87 tokens per second for generation tasks with Llama 3 8B using Q4_K_M quantization.

- Llama 3 8B F16: The RTX 5000 Ada delivers a speed of 32.67 tokens per second for generation tasks with Llama 3 8B using F16 precision.

Table 1: Token Speed Comparison for Generation Tasks

| Model and format | Apple M1 (68 GB/s, 7-core GPU), tokens/s | NVIDIA RTX 5000 Ada 32GB, tokens/s |
|---|---|---|
| Llama 2 7B Q8_0 | 7.92 | N/A |
| Llama 2 7B Q4_0 | 14.19 | N/A |
| Llama 3 8B Q4_K_M | 9.72 | 89.87 |
| Llama 3 8B F16 | N/A | 32.67 |
| Llama 3 70B Q4_K_M | N/A | N/A |
| Llama 3 70B F16 | N/A | N/A |

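Analysis: Llama 3 8B Q4_K_M is the only configuration measured on both devices, and there the RTX 5000 Ada generates tokens about 9.2x faster than the Apple M1 (89.87 vs 9.72 tokens per second). It is also the only device with an F16 result. The Llama 2 7B figures exist only for the M1, so they show what the M1 can do but do not allow a head-to-head comparison. They do show that moving from Q8_0 to the smaller Q4_0 format nearly doubles the M1's generation speed (7.92 to 14.19 tokens per second).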
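A common rule of thumb helps explain this gap: single-stream generation is largely limited by memory bandwidth, because each new token requires reading essentially all of the model's weights once. The sketch below applies that rule to the Table 1 configurations that have results. The bandwidth figures (about 68 GB/s for the M1 and 576 GB/s for the RTX 5000 Ada) and the model file sizes are approximate assumptions, not values from this article, and the output is a theoretical ceiling rather than a prediction.

```python
# Rough upper bound on single-stream generation speed, assuming every new
# token requires streaming all model weights from memory exactly once.
# Bandwidths and model sizes are approximate assumptions, not measurements.

BANDWIDTH_GB_S = {
    "Apple M1 (7-core GPU)": 68.25,  # assumed unified-memory bandwidth
    "RTX 5000 Ada 32GB": 576.0,      # assumed GDDR6 bandwidth
}

MODEL_SIZE_GB = {
    "Llama 3 8B Q4_K_M": 4.9,  # approximate quantized file size
    "Llama 3 8B F16": 16.1,    # ~8.03B parameters x 2 bytes
}

# Measured generation speeds from Table 1 (tokens/s).
MEASURED = {
    ("Apple M1 (7-core GPU)", "Llama 3 8B Q4_K_M"): 9.72,
    ("RTX 5000 Ada 32GB", "Llama 3 8B Q4_K_M"): 89.87,
    ("RTX 5000 Ada 32GB", "Llama 3 8B F16"): 32.67,
}


def max_generation_tps(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Bandwidth-bound ceiling: one full read of the weights per token."""
    return bandwidth_gb_s / model_size_gb


if __name__ == "__main__":
    for (device, model), measured in MEASURED.items():
        ceiling = max_generation_tps(BANDWIDTH_GB_S[device], MODEL_SIZE_GB[model])
        print(f"{device:22} {model:18} ceiling ~{ceiling:6.1f} tok/s, "
              f"measured {measured:6.2f} ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions every measured speed lands below its ceiling (roughly 70% for the M1 and 76% to 91% for the RTX 5000 Ada), which is consistent with generation being mostly bandwidth-bound.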

Apple M1 Token Speed Processing

Turning our attention to prompt processing, the Apple M1 reaches roughly 87 to 108 tokens per second across the quantized models tested.

- Llama 2 7B Q8_0: The Apple M1 achieves 108.21 tokens per second for processing tasks on the Llama 2 7B model with Q8_0 quantization.

- Llama 2 7B Q4_0: The Apple M1 processes 107.81 tokens per second with Llama 2 7B using Q4_0 quantization, essentially the same as with Q8_0.

- Llama 3 8B Q4_K_M: The Apple M1 exhibits a speed of 87.26 tokens per second for processing tasks with Llama 3 8B using Q4_K_M quantization.

NVIDIA RTX 5000 Ada 32GB Token Speed Processing

For processing, the NVIDIA RTX 5000 Ada 32GB truly unleashes its power, reaching thousands of tokens per second.

- Llama 3 8B Q4_K_M: The RTX 5000 Ada achieves an astounding 4467.46 tokens per second for processing tasks with Llama 3 8B using Q4_K_M quantization.

- Llama 3 8B F16: The RTX 5000 Ada delivers 5835.41 tokens per second for processing tasks with Llama 3 8B using F16 precision, even faster than its own Q4_K_M result.

Table 2: Token Speed Comparison for Processing Tasks

| Model and format | Apple M1 (68 GB/s, 7-core GPU), tokens/s | NVIDIA RTX 5000 Ada 32GB, tokens/s |
|---|---|---|
| Llama 2 7B Q8_0 | 108.21 | N/A |
| Llama 2 7B Q4_0 | 107.81 | N/A |
| Llama 3 8B Q4_K_M | 87.26 | 4467.46 |
| Llama 3 8B F16 | N/A | 5835.41 |
| Llama 3 70B Q4_K_M | N/A | N/A |
| Llama 3 70B F16 | N/A | N/A |

Analysis: On the one configuration measured on both devices, Llama 3 8B Q4_K_M, the RTX 5000 Ada processes prompts about 51x faster than the Apple M1 (4467.46 vs 87.26 tokens per second), a much wider gap than in generation. Prompt processing rewards the RTX 5000 Ada's far greater raw compute. As with generation, there are no RTX 5000 Ada results for Llama 2 7B, so the M1's numbers for that model cannot be compared directly.
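Prompt processing behaves differently from generation: all prompt tokens are available at once, so they can be processed in parallel, and the phase is limited mostly by arithmetic throughput. The sketch below converts the Table 2 figures into the effective compute they imply, using the common approximation of about 2 floating-point operations per parameter per token for a forward pass (attention cost ignored). The parameter count of roughly 8.03 billion for Llama 3 8B is an assumption for illustration.

```python
# Back-of-the-envelope compute estimate for prompt processing.
# Assumes ~2 floating-point operations per parameter per token for a
# forward pass; attention cost is ignored.

PARAMS_LLAMA3_8B = 8.03e9  # approximate parameter count (assumption)

# Measured prompt-processing speeds from Table 2 (tokens/s).
PROCESSING_TPS = {
    ("Apple M1 (7-core GPU)", "Q4_K_M"): 87.26,
    ("RTX 5000 Ada 32GB", "Q4_K_M"): 4467.46,
    ("RTX 5000 Ada 32GB", "F16"): 5835.41,
}


def implied_tflops(tokens_per_s: float, params: float = PARAMS_LLAMA3_8B) -> float:
    """Effective arithmetic throughput (TFLOPS) implied by a tokens/s figure."""
    return 2 * params * tokens_per_s / 1e12


if __name__ == "__main__":
    for (device, fmt), tps in PROCESSING_TPS.items():
        print(f"{device:22} {fmt:7} {tps:8.2f} tok/s -> ~{implied_tflops(tps):5.1f} TFLOPS")
    ratio = (PROCESSING_TPS[("RTX 5000 Ada 32GB", "Q4_K_M")]
             / PROCESSING_TPS[("Apple M1 (7-core GPU)", "Q4_K_M")])
    print(f"RTX 5000 Ada / M1 on Q4_K_M: {ratio:.1f}x")
```

Under these assumptions the M1 sustains about 1.4 TFLOPS of useful work and the RTX 5000 Ada roughly 72 to 94 TFLOPS, which is where the roughly 51x gap in the table comes from.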

Performance Breakdown and Practical Recommendations

Apple M1 (68 GB/s, 7-core GPU)

Strengths

- Power Efficiency: The Apple M1 is known for its impressive energy efficiency, making it a great choice for developers who prioritize battery life and lower energy consumption.

- Cost-Effectiveness: An Apple M1 machine generally costs less than a high-end GPU like the RTX 5000 Ada on its own, which can be a significant advantage for budget-conscious users.

- Great for Smaller Models: For smaller LLMs like Llama 2 7B in quantized formats, the Apple M1 delivers usable generation speeds of about 8 to 14 tokens per second.

Weaknesses

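- Limited to Smaller Models: The Apple M1 ran Llama 3 8B only in Q4_K_M, at under 10 tokens per second, and no F16 or Llama 3 70B results are reported for it.

- Memory Constraints: The "68gb" in the M1's label refers to its roughly 68 GB/s memory bandwidth, not its memory capacity. The M1 supports at most 16GB of unified memory, which is too little for Llama 3 8B at F16 (about 16GB of weights) or for any Llama 3 70B variant, and it limits how much context you can keep.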

Use Cases:

- Small-Scale LLM Development: If you're working on smaller LLMs or if you prioritize power efficiency and cost-effectiveness, the Apple M1 is a solid option.

- Quantized Model Exploration: Quantized models are what make LLMs practical on the Apple M1, so it is an inexpensive platform for exploring and experimenting with quantization formats.

NVIDIA RTX 5000 Ada 32GB

Strengths

- Much Higher Performance: On Llama 3 8B Q4_K_M, the RTX 5000 Ada is about 9x faster than the Apple M1 in generation and about 51x faster in prompt processing, and it is the only one of the two with F16 results. No Llama 3 70B results are reported for either device.

- Flexible for Different Models: The RTX 5000 Ada can run 8B-class models in both quantized and F16 formats, offering greater flexibility for different tasks.

- High Memory Capacity: The 32GB of VRAM holds Llama 3 8B at F16 (about 16GB of weights) with room left for long contexts. A 4-bit Llama 3 70B, at over 40GB, still would not fit on a single card.

Weaknesses

- Power Consumption: High-end GPUs like the RTX 5000 Ada consume significantly more power than the Apple M1, leading to higher energy bills and more heat to manage.

- Cost Factor: The RTX 5000 Ada is significantly more expensive than the Apple M1, making it a less attractive option for those on tight budgets.

Use Cases:

- Large-Scale LLM Applications: If you need high throughput, long prompts, or 8B-class models at full F16 precision, the RTX 5000 Ada provides the necessary headroom.

- High-Precision Tasks: For F16 inference, the RTX 5000 Ada is the only one of the two with reported results (32.67 tokens per second generation and 5835.41 tokens per second processing on Llama 3 8B).

Summary

The choice between the Apple M1 (68 GB/s, 7-core GPU) and the NVIDIA RTX 5000 Ada 32GB ultimately depends on your specific needs and priorities.

If you prioritize:

- Power efficiency and cost-effectiveness: The Apple M1 is a great choice for smaller models and quantized formats.

- Performance, flexibility, and memory capacity: The NVIDIA RTX 5000 Ada 32GB is the way to go for larger models and tasks requiring F16 precision.

FAQ

Q: What is quantization in the context of LLMs?

A: Quantization is a technique for reducing the size of an LLM while largely preserving its quality. Imagine converting a detailed photo into a pixelated version that is still recognizable. Quantization does something similar: it stores the model's numbers at lower precision without drastically impacting its accuracy.
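To make the idea concrete, here is a minimal, pure-Python sketch of block-wise 8-bit quantization modeled on llama.cpp's Q8_0 format: weights are split into blocks of 32, and each block stores one scale (its largest absolute value divided by 127) plus 32 signed 8-bit integers. The real format stores the scale as a 16-bit float, giving 34 bytes per 32 weights (8.5 bits per weight versus 16 for F16); this sketch keeps the scale as a Python float for simplicity, and the function names are hypothetical.

```python
import random

BLOCK_SIZE = 32  # weights per block, following llama.cpp's Q8_0 layout


def quantize_q8_0(weights):
    """Return a list of (scale, codes) pairs, one per block of BLOCK_SIZE weights."""
    if len(weights) % BLOCK_SIZE != 0:
        raise ValueError("number of weights must be a multiple of BLOCK_SIZE")
    blocks = []
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        amax = max(abs(w) for w in block)          # largest magnitude in this block
        scale = amax / 127.0                       # maps [-amax, amax] onto [-127, 127]
        inv_scale = 1.0 / scale if scale else 0.0  # all-zero block -> all-zero codes
        codes = [round(w * inv_scale) for w in block]  # integers in [-127, 127]
        blocks.append((scale, codes))
    return blocks


def dequantize_q8_0(blocks):
    """Reconstruct approximate float weights from (scale, codes) pairs."""
    return [scale * code for scale, codes in blocks for code in codes]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(4 * BLOCK_SIZE)]
    blocks = quantize_q8_0(weights)
    restored = dequantize_q8_0(blocks)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{len(blocks)} blocks, max reconstruction error {max_err:.2e}")
```

Because each weight is rounded to the nearest multiple of its block's scale, the reconstruction error per weight is at most half that scale, which is why small, well-behaved weight blocks survive 8-bit quantization with little loss.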

Q: What are the trade-offs between F16 and quantized formats?

A: F16 (half-precision floating-point) offers higher accuracy but requires more memory and processing power. Quantized formats like Q8_0 or Q4_0 shrink the model, which lets it fit and run faster on memory-limited hardware such as the Apple M1, but they can sacrifice a small amount of accuracy. Quantization helps on GPUs too: the RTX 5000 Ada generates text nearly three times faster with Q4_K_M than with F16.
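The memory side of this trade-off is simple arithmetic: weight storage is roughly parameters x bits per weight / 8. The sketch below applies it to the models in this article. The parameter counts, the bits-per-weight figures (Q4_K_M mixes block types, so about 4.9 is only an average), the flat 2GB allowance for KV cache and runtime overhead, and the 16GB M1 configuration are all assumptions for illustration, and `fits` is a hypothetical helper.

```python
# Approximate bits per weight for each storage format (assumptions):
# F16 = 16; Q8_0 = 8.5 (34 bytes per 32 weights); Q4_0 = 4.5 (18 bytes per
# 32 weights); Q4_K_M ~4.9 on average (mixed block types, varies by model).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.9, "Q4_0": 4.5}

# Approximate parameter counts (assumptions).
PARAMS = {
    "Llama 2 7B": 6.74e9,
    "Llama 3 8B": 8.03e9,
    "Llama 3 70B": 70.6e9,
}

# Memory in GB: 16 for the largest M1 configuration, 32 for the RTX 5000 Ada.
MEMORY_GB = {"Apple M1 (16GB)": 16.0, "RTX 5000 Ada 32GB": 32.0}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits(params: float, fmt: str, memory_gb: float, overhead_gb: float = 2.0) -> bool:
    """Hypothetical check: weights plus a flat overhead allowance fit in memory."""
    return weights_gb(params, BITS_PER_WEIGHT[fmt]) + overhead_gb <= memory_gb


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for fmt in ("F16", "Q4_K_M"):
            size = weights_gb(params, BITS_PER_WEIGHT[fmt])
            verdict = ", ".join(
                f"{device}: {'fits' if fits(params, fmt, mem) else 'too big'}"
                for device, mem in MEMORY_GB.items()
            )
            print(f"{model} {fmt}: ~{size:.1f} GB -> {verdict}")
```

Under these assumptions Llama 3 8B needs about 16GB at F16 but under 5GB at Q4_K_M, and even a Q4_K_M Llama 3 70B (about 43GB) exceeds the RTX 5000 Ada's 32GB. The check is optimistic for the M1, since macOS also needs part of the unified memory.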

Q: Can I run these LLMs on a standard CPU?

A: You can, but performance will be significantly slower, especially for larger models. A dedicated GPU like the RTX 5000 Ada, or the integrated GPU in a chip like the Apple M1, offers much faster speeds.

Q: How do I choose the right LLM for my project?

A: Consider the size of your project, the required accuracy, and the available resources. Smaller models like Llama 2 7B or Llama 3 8B suit lighter tasks and modest hardware, while a much larger model like Llama 3 70B is better suited for complex projects but needs more memory than either device in this comparison provides.

Keywords

Apple M1, NVIDIA RTX 5000 Ada 32GB, LLM, Llama 2, Llama 3, Token speed, GPU, CPU, Quantization, F16, Generation, Processing, AI, Deep Learning, Machine Learning, Performance, Cost, Power Consumption, Memory, LLM Selection, Use Cases, Development, Research.