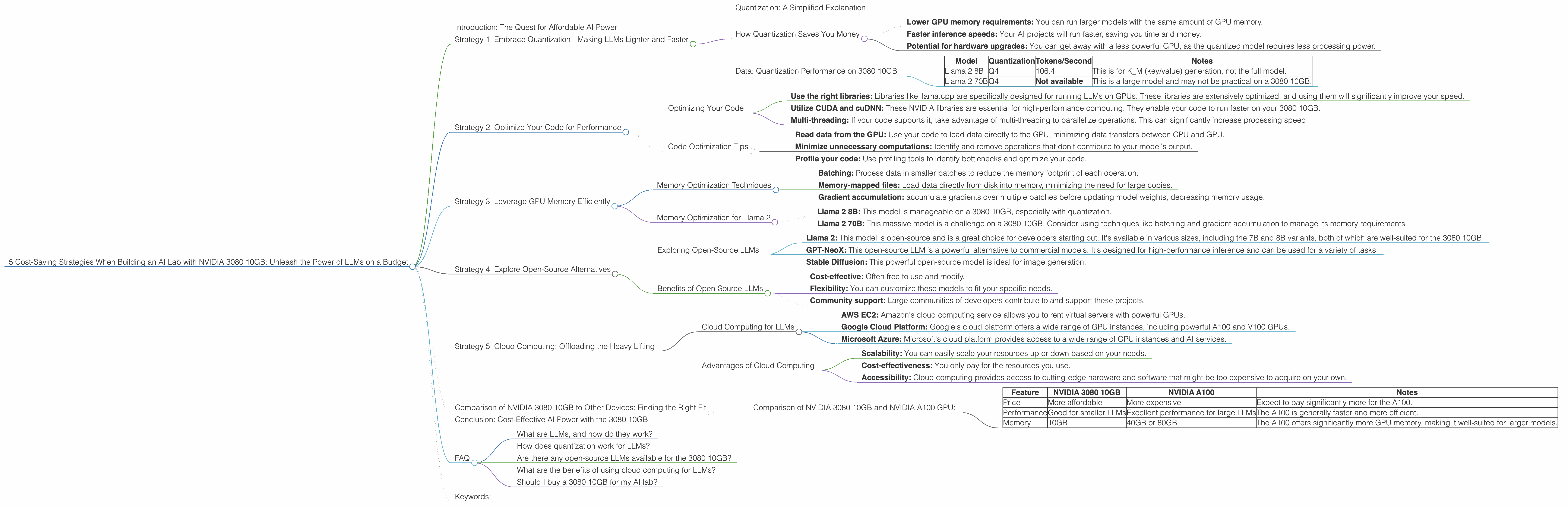

5 Cost Saving Strategies When Building an AI Lab with NVIDIA 3080 10GB

Introduction: The Quest for Affordable AI Power

The world of artificial intelligence (AI) is exploding with new possibilities, thanks to the rise of large language models (LLMs). LLMs like Llama 2 and GPT-3 can generate text, translate languages, write creative content, and answer your questions in an informative way. But running these models on your own machine usually requires a dedicated GPU with plenty of memory, and that can be expensive.

This article is for you, the developer with a passion for AI, who wants to set up a local AI lab without breaking the bank. We'll explore five key cost-saving strategies using the popular NVIDIA 3080 10GB GPU, specifically for running Llama 2 models. We'll dive into the performance of this GPU, compare it to other options, and provide practical tips for maximizing your investment. Let's get started!

Strategy 1: Embrace Quantization - Making LLMs Lighter and Faster

Imagine your LLM as a giant, detailed map. To navigate it quickly, you can simplify the map by using a lower resolution (quantization), meaning you sacrifice some detail but gain speed. This is exactly what quantization does for LLMs. It reduces the size of the model by using fewer bits to represent the weights, increasing inference speeds and decreasing memory requirements.

Quantization: A Simplified Explanation

Think of it like reducing the number of colors in an image. A high-resolution photo has many colors (like a high-precision LLM), while a compressed image has fewer colors (like a quantized LLM). The compressed image may have slightly less detail, but it takes up less space and loads faster.
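To make the analogy concrete, here is a minimal sketch of symmetric 4-bit quantization in plain Python. It is an illustration only: the function names and sample weights are made up for this example, and real formats (such as the k-quants used by llama.cpp) work on blocks of weights with more elaborate schemes.

```python
def quantize_symmetric(weights, bits=4):
    """Map floats to small signed integers plus one shared scale factor."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floats from the integers and the scale."""
    return [v * scale for v in q]


# Hypothetical weights, chosen only for illustration.
weights = [0.70, -0.30, 0.10, -0.02]
q, scale = quantize_symmetric(weights)
restored = dequantize(q, scale)

print("quantized:", q)
print("restored: ", [round(r, 3) for r in restored])
```

Each weight now needs 4 bits instead of 16, at the price of a rounding error of at most half a step (`scale / 2`). The smallest weight above rounds to zero, which is the "lost detail" from the image analogy.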

How Quantization Saves You Money

Quantized models are a good match for the NVIDIA 3080 10GB. For example, using 4-bit quantization (Q4) on the Llama 2 8B model shrinks the weights to roughly a quarter of their 16-bit size, enough to fit entirely in the card's 10 GB of memory. This translates to:

- Lower GPU memory requirements: You can run larger models with the same amount of GPU memory.

- Faster inference speeds: Your AI projects will run faster, saving you time and money.

- Potential for hardware savings: You can get away with a less expensive GPU, as the quantized model needs less memory and memory bandwidth.
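A quick back-of-the-envelope calculation shows why this matters on a 10 GB card. The sketch below counts only the weights (parameters times bits per weight); the helper name is made up for this example, and real quantized files are somewhat larger because they also store scale factors.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate size of the model weights in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for name, n_params in [("7B", 7e9), ("70B", 70e9)]:
    for bits in (16, 4):
        size = weight_memory_gb(n_params, bits)
        print(f"{name} at {bits}-bit: about {size:.1f} GB of weights")
```

By this estimate a 7B model drops from about 14 GB at 16-bit to about 3.5 GB at 4-bit, while a 70B model still needs about 35 GB even at 4-bit.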

Data: Quantization Performance on 3080 10GB

| Model | Quantization | Tokens/Second | Notes |
|---|---|---|---|
| Llama 2 8B | Q4 | 106.4 | Token generation with the Q4_K_M variant (a llama.cpp quantization preset, not a key/value setting). |
| Llama 2 70B | Q4 | Not available | At 4 bits the weights alone are roughly 35 GB, far more than the 3080's 10 GB. |

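Note: F16 performance data for this GPU and model combination is not currently available. Meta released Llama 2 in 7B, 13B, and 70B sizes, so read the 8B label above as the size of the model that was benchmarked rather than as an official Llama 2 variant.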

Strategy 2: Optimize Your Code for Performance

Once you've chosen your hardware and quantization strategy, the next step is to tune your code for maximum performance. In practice this means keeping the GPU busy: offload as much of the model as fits in its memory, avoid needless transfers between CPU and GPU, and measure before you tune.

Optimizing Your Code

- Use the right libraries: Libraries like llama.cpp are built for efficient LLM inference. llama.cpp started as a CPU-first project and can offload some or all of a model's layers to the GPU. These libraries are extensively optimized, and using them will significantly improve your speed.

- Utilize CUDA and cuDNN: CUDA is NVIDIA's GPU computing platform, and cuDNN is its library of deep learning primitives. Frameworks such as PyTorch rely on both, and llama.cpp needs a CUDA-enabled build to use your 3080 10GB.

- Multi-threading: If your code supports it, take advantage of multi-threading to parallelize operations. This can significantly increase processing speed.
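As a rough illustration of how these settings come together, the sketch below assembles a llama.cpp command line from Python. It assumes a CUDA-enabled build whose binary is named `llama-cli` (older builds called it `main`), and the model path is a placeholder. The flags shown (`-m`, `-ngl`, `-t`, `-n`, `-p`) are the usual llama.cpp options for model file, GPU layers, CPU threads, tokens to generate, and prompt, but check `--help` on your own build.

```python
import shlex


def build_llama_cli_command(model_path, prompt, gpu_layers=99, threads=8, max_tokens=128):
    """Return an argument list for llama.cpp's CLI (assumed to be `llama-cli`)."""
    return [
        "llama-cli",
        "-m", model_path,           # path to a quantized GGUF model file
        "-ngl", str(gpu_layers),    # layers to offload to the GPU; a large value offloads all
        "-t", str(threads),         # CPU threads for work that stays on the CPU
        "-n", str(max_tokens),      # number of tokens to generate
        "-p", prompt,
    ]


# Placeholder path: substitute the model file you actually downloaded.
cmd = build_llama_cli_command("models/model-q4_k_m.gguf", "Explain quantization briefly.")
print(shlex.join(cmd))
```

The list can be passed to `subprocess.run(cmd)` once the binary and model exist on your machine. If the model doesn't fit in 10 GB, lowering `gpu_layers` keeps the remaining layers on the CPU.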

Code Optimization Tips

- Keep data on the GPU: Load model weights and inputs onto the GPU once and keep them there, minimizing data transfers between CPU and GPU.

- Minimize unnecessary computations: Identify and remove operations that don't contribute to your model's output.

- Profile your code: Use profiling tools to identify bottlenecks and optimize your code.
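Before reaching for a full profiler, a simple throughput measurement tells you whether a change helped. The sketch below times any generation function and reports tokens per second. `fake_generate` is a stand-in that only sleeps; swap in your real generation call.

```python
import time


def measure_tokens_per_second(generate, prompt, n_tokens):
    """Time one call to `generate` and return tokens produced per second."""
    start = time.perf_counter()
    produced = generate(prompt, n_tokens)
    elapsed = time.perf_counter() - start
    return len(produced) / elapsed


def fake_generate(prompt, n_tokens):
    """Stand-in for a real model call; pretends generation takes 10 ms."""
    time.sleep(0.01)
    return ["tok"] * n_tokens


rate = measure_tokens_per_second(fake_generate, "Hello", n_tokens=100)
print(f"{rate:.1f} tokens/second")
```

Run the measurement a few times and compare averages, since the first call often includes one-time costs such as loading the model.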

Strategy 3: Leverage GPU Memory Efficiently

The 3080 10GB has a decent amount of GPU memory, but it's still crucial to manage it efficiently to avoid running into memory-related issues. Large language models can be memory hogs, so you need to plan carefully.

Memory Optimization Techniques

- Batching: Process data in smaller batches to reduce the memory footprint of each operation.

- Memory-mapped files: Map the model file into memory so the operating system loads pages on demand instead of copying the whole file up front.

- Gradient accumulation: When fine-tuning, accumulate gradients over several small batches before updating the model weights, which lowers peak memory usage. This technique applies to training, not inference.
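Batching and gradient accumulation can be sketched together in a few lines. This toy example uses a one-parameter linear model and made-up data, not a real LLM. It splits the data into micro-batches and shows that the accumulated gradient matches the full-batch gradient, even though only one micro-batch is processed at a time.

```python
def batches(items, batch_size):
    """Yield consecutive slices of `items` with at most `batch_size` elements."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def grad_sum(w, batch):
    """Sum of d/dw (w*x - y)**2 over a batch of (x, y) pairs."""
    return sum(2 * (w * x - y) * x for x, y in batch)


# Toy data and starting weight, chosen only for illustration.
data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2)]
w = 0.5

# Gradient of the mean loss computed over the whole dataset at once.
full_grad = grad_sum(w, data) / len(data)

# Same gradient, built up from micro-batches of two samples each.
accumulated = 0.0
for micro_batch in batches(data, batch_size=2):
    accumulated += grad_sum(w, micro_batch)
accumulated_grad = accumulated / len(data)

print(full_grad, accumulated_grad)
```

In a real training loop the weight update happens only after the last micro-batch, so the effective batch size stays the same while peak memory follows the micro-batch size.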

Memory Optimization for Llama 2

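- Llama 2 8B: At 16-bit precision the weights (about 16 GB) exceed the card's memory, so quantization is required. At 4 bits the model fits on a 3080 10GB with room left for context.

- Llama 2 70B: This massive model does not fit on a 3080 10GB, even at 4 bits. Batching and gradient accumulation won't change that. Your realistic options are to offload only some layers to the GPU and run the rest on the CPU (expect much slower generation), or to rent a larger GPU in the cloud.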
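Weights are not the only thing that occupies GPU memory: the key/value cache grows with context length. The sketch below estimates it for Llama 2 7B, using that model's published shape (32 layers, 32 attention heads, head dimension 128) and 16-bit cache values. The 1 GiB overhead figure is an assumption for illustration, not a measured number.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len, bytes_per_value=2):
    """Keys and values for every layer, head, and position in the context."""
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


def fits_in_vram(vram_gib, weights_gib, cache_gib, overhead_gib=1.0):
    """Rough check; overhead_gib is an assumed allowance for buffers and the driver."""
    return weights_gib + cache_gib + overhead_gib <= vram_gib


GIB = 2 ** 30
LLAMA2_7B = dict(n_layers=32, n_kv_heads=32, head_dim=128)

cache_gib = kv_cache_bytes(**LLAMA2_7B, context_len=4096) / GIB
weights_gib = 7e9 * 4 / 8 / GIB      # 4-bit weights, ignoring format overhead

print(f"KV cache at 4096 tokens: {cache_gib:.1f} GiB")
print(f"4-bit weights: {weights_gib:.2f} GiB")
print("Fits in 10 GiB:", fits_in_vram(10, weights_gib, cache_gib))
```

Under these assumptions a 4-bit 7B model with a full 4096-token context fits with several GiB to spare. Shrinking the context length is the easiest lever if you run short.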

Strategy 4: Explore Open-Source Alternatives

The AI landscape is bursting with open-source projects and offerings that can save you money. You may be able to find an open-source LLM that meets your needs without the high costs associated with commercial models.

Exploring Open-Source LLMs

- Llama 2: This model's weights are freely available, and it's a great choice for developers starting out. It comes in 7B, 13B, and 70B sizes. Once quantized, the 7B variant is the most comfortable fit for the 3080 10GB.

- GPT-NeoX: This open-source LLM is a powerful alternative to commercial models. It's designed for high-performance inference and can be used for a variety of tasks.

- Stable Diffusion: Not an LLM, but worth knowing about. This powerful open-source model is ideal for image generation.

Benefits of Open-Source LLMs

- Cost-effective: Often free to use and modify.

- Flexibility: You can customize these models to fit your specific needs.

- Community support: Large communities of developers contribute to and support these projects.

Strategy 5: Cloud Computing: Offloading the Heavy Lifting

Sometimes, even with a capable GPU like the 3080 10GB, you may need more power for demanding projects. Cloud computing offers a flexible and cost-effective way to access high-performance computing resources on demand, allowing you to scale up your resources quickly and efficiently.

Cloud Computing for LLMs

- AWS EC2: Amazon's cloud computing service allows you to rent virtual servers with powerful GPUs.

- Google Cloud Platform: Google's cloud platform offers a wide range of GPU instances, including powerful A100 and V100 GPUs.

- Microsoft Azure: Microsoft's cloud platform provides access to a wide range of GPU instances and AI services.

Advantages of Cloud Computing

- Scalability: You can easily scale your resources up or down based on your needs.

- Cost-effectiveness: You only pay for the resources you use.

- Accessibility: Cloud computing provides access to cutting-edge hardware and software that might be too expensive to acquire on your own.
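Whether renting or buying is cheaper depends on how many hours you actually use the GPU. The sketch below computes a simple break-even point. All prices are placeholders chosen for illustration, not quotes from any provider or retailer.

```python
def break_even_hours(gpu_price, cloud_rate_per_hour, power_cost_per_hour=0.0):
    """Hours of use after which buying a GPU is cheaper than renting one."""
    saving_per_hour = cloud_rate_per_hour - power_cost_per_hour
    if saving_per_hour <= 0:
        raise ValueError("Renting is never more expensive per hour with these inputs.")
    return gpu_price / saving_per_hour


# Placeholder figures: substitute real prices before drawing conclusions.
hours = break_even_hours(gpu_price=600.0, cloud_rate_per_hour=1.50)
print(f"Break-even after about {hours:.0f} hours of GPU time")
```

If you expect to stay well below the break-even point, paying by the hour is the cheaper route. Heavy daily use tips the balance toward owning the card.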

Comparison of NVIDIA 3080 10GB to Other Devices: Finding the Right Fit

The 3080 10GB is a good choice for developers who want an affordable GPU with solid performance. However, it's always useful to compare it to other options to see how it measures up.

Comparison of NVIDIA 3080 10GB and NVIDIA A100 GPU:

| Feature | NVIDIA 3080 10GB | NVIDIA A100 | Notes |
|---|---|---|---|
| Price | More affordable | More expensive | Expect to pay significantly more for the A100. |
| Performance | Good for smaller LLMs | Excellent performance for large LLMs | The A100 is generally faster and more efficient. |
| Memory | 10GB | 40GB or 80GB | The A100 offers significantly more GPU memory, making it well-suited for larger models. |

Note: The NVIDIA A100 is a high-end GPU, designed for demanding AI workloads. It's likely to be more expensive than the 3080 10GB. If you're working with large models, the extra memory and performance of the A100 may be worth the investment.

Conclusion: Cost-Effective AI Power with the 3080 10GB

You don't need to spend a fortune to build a powerful AI lab. The NVIDIA 3080 10GB is a versatile and cost-effective GPU that can handle small and medium-sized LLMs once they are quantized. By implementing cost-saving strategies like quantization, code optimization, careful memory management, open-source models, and cloud computing for the occasional heavy job, you can create a powerful AI development environment without breaking the bank.

Remember, AI is constantly evolving, so stay informed about new technologies and techniques. And most importantly, have fun exploring the exciting world of AI!

FAQ

What are LLMs, and how do they work?

LLMs are a type of AI model trained on massive datasets of text and code to predict the next token in a sequence. That simple objective lets them generate text, translate languages, write creative content, and answer your questions in an informative way. It's like having a super-smart assistant who can process information and respond in a human-like way.

How does quantization work for LLMs?

Quantization is a technique that reduces the size of a model's weights by using fewer bits to represent them. Think of it like reducing the number of colors in a photo – you lose some detail, but the file size becomes smaller and loads faster. The same principle applies to LLMs: quantization makes them faster and more efficient.

Are there any open-source LLMs available for the 3080 10GB?

Yes! Llama 2 is a popular open-source LLM, and its 7B variant works well on the 3080 10GB once quantized. It's a great choice if you're looking for a free and customizable LLM.

What are the benefits of using cloud computing for LLMs?

Cloud computing offers flexibility and scalability. You can easily scale your resources up or down based on your needs, and you only pay for what you use. This is a cost-effective way to run large and demanding LLMs without investing in expensive hardware.

Should I buy a 3080 10GB for my AI lab?

If you're on a budget and want a powerful GPU for running smaller and medium-sized LLMs, the NVIDIA 3080 10GB is a great option. If you plan to work with massive LLMs, consider a more powerful GPU like the A100 or leverage cloud computing.
