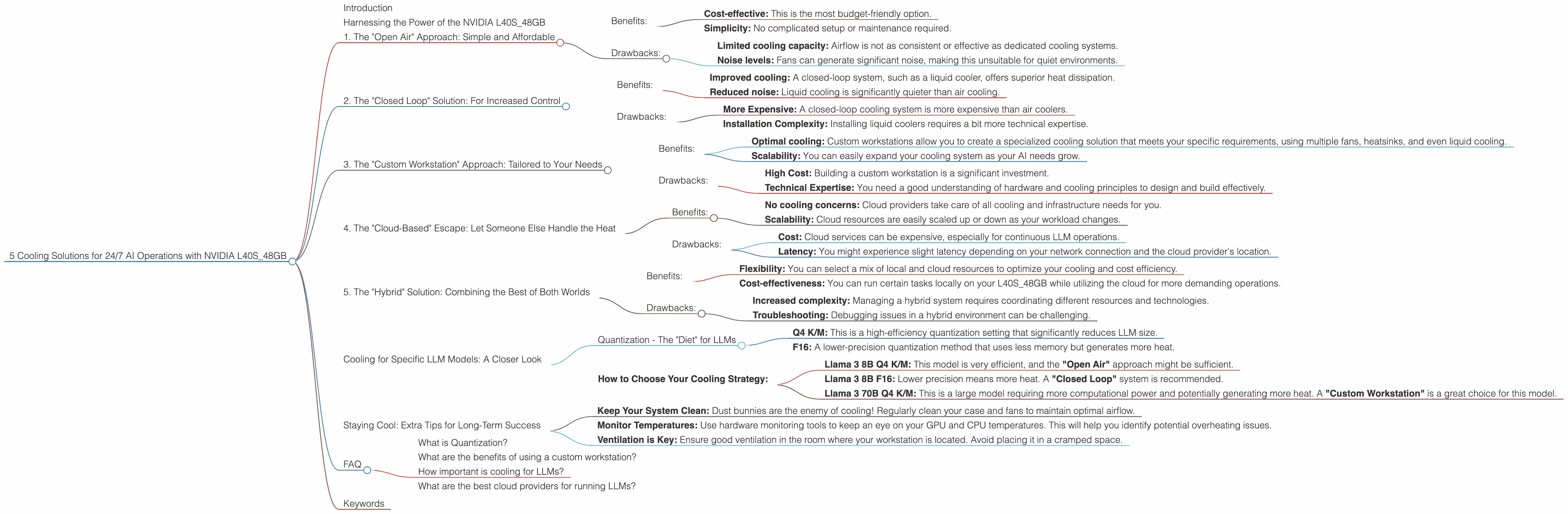

5 Cooling Solutions for 24/7 AI Operations with NVIDIA L40S 48GB

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These powerful AI systems can generate human-quality text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these LLMs locally, especially for continuous operation, can be a real challenge.

Think of it like this: LLMs are like high-performance supercars. They're incredibly powerful, but all that horsepower comes from heavy computation, and heavy computation produces a lot of heat. In this article, we'll dive into how to keep your NVIDIA L40S 48GB cool and running smoothly while you unleash the power of LLMs for round-the-clock applications.

Harnessing the Power of the NVIDIA L40S 48GB

The NVIDIA L40S 48GB is a beast of a GPU built for AI workloads. It's packed with 48GB of GDDR6 memory, which gives it plenty of room for running large language models. But with great power comes great heat!

To keep your L40S 48GB humming without overheating, you need a cooling strategy. We'll explore five approaches, each with its pros and cons.
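To put a rough number on "great heat", here is a minimal sketch of the arithmetic. It assumes a sustained draw of 350 W, which is the board power limit listed for the L40S; your real draw depends on the workload and should be measured. The function names are illustrative only.

```python
# Rough heat-load arithmetic for one GPU running around the clock.
# ASSUMPTION: 350 W sustained draw (the listed board power limit);
# measure your own card, since real draw varies with the workload.
WATTS_TO_BTU_PER_HOUR = 3.412  # 1 W is about 3.412 BTU/h


def heat_btu_per_hour(watts: float) -> float:
    """Nearly all electrical power drawn by a GPU ends up as heat."""
    return watts * WATTS_TO_BTU_PER_HOUR


def energy_kwh(watts: float, hours: float) -> float:
    """Energy used (and heat released) over a period, in kWh."""
    return watts * hours / 1000.0


board_power_w = 350.0
print(f"Heat output: {heat_btu_per_hour(board_power_w):.0f} BTU/h")
print(f"Energy per day: {energy_kwh(board_power_w, 24):.1f} kWh")
```

Under that assumption a single card releases roughly 1,200 BTU/h into the room, which is the load your chosen cooling approach has to remove continuously.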

1. The "Open Air" Approach: Simple and Affordable

Benefits:

- Cost-effective: This is the most budget-friendly option.

- Simplicity: No complicated setup or maintenance required.

Drawbacks:

- Limited cooling capacity: Airflow is not as consistent or effective as dedicated cooling systems.

- Noise levels: Fans can generate significant noise, making this unsuitable for quiet environments.

2. The "Closed Loop" Solution: For Increased Control

Benefits:

- Improved cooling: A closed-loop system, such as a liquid cooler, offers superior heat dissipation.

- Reduced noise: Liquid cooling is typically quieter than air cooling at the same heat load.

Drawbacks:

- Higher cost: A closed-loop system costs more than air cooling.

- Installation Complexity: Installing liquid coolers requires a bit more technical expertise.

3. The "Custom Workstation" Approach: Tailored to Your Needs

Benefits:

- Optimal cooling: Custom workstations allow you to create a specialized cooling solution that meets your specific requirements, using multiple fans, heatsinks, and even liquid cooling.

- Scalability: You can easily expand your cooling system as your AI needs grow.

Drawbacks:

- High Cost: Building a custom workstation is a significant investment.

- Technical Expertise: You need a good understanding of hardware and cooling principles to design and build effectively.

4. The "Cloud-Based" Escape: Let Someone Else Handle the Heat

Benefits:

- No cooling concerns: Cloud providers take care of all cooling and infrastructure needs for you.

- Scalability: Cloud resources are easily scaled up or down as your workload changes.

Drawbacks:

- Cost: Cloud services can be expensive, especially for continuous LLM operations.

- Latency: You might experience slight latency depending on your network connection and the cloud provider's location.

5. The "Hybrid" Solution: Combining the Best of Both Worlds

Benefits:

- Flexibility: You can select a mix of local and cloud resources to optimize your cooling and cost efficiency.

- Cost-effectiveness: You can run certain tasks locally on your L40S 48GB while using the cloud for more demanding operations.

Drawbacks:

- Increased complexity: Managing a hybrid system requires coordinating different resources and technologies.

- Troubleshooting: Debugging issues in a hybrid environment can be challenging.
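As a minimal sketch of how a hybrid setup might decide where a job runs, the snippet below sends a job to the cloud when it does not fit in local memory or when the local GPU is already running hot. The `Job` class, the `choose_target` function, and the 80 °C threshold are all hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    vram_gb: float  # estimated GPU memory the job needs


# ASSUMPTIONS: illustrative thresholds, not vendor guidance.
LOCAL_VRAM_GB = 48.0     # memory on the local L40S
MAX_LOCAL_TEMP_C = 80.0  # hypothetical "too hot to add work" limit


def choose_target(job: Job, gpu_temp_c: float) -> str:
    """Return "local" or "cloud" for a job, given the current GPU temperature."""
    if job.vram_gb > LOCAL_VRAM_GB:
        return "cloud"  # the job cannot fit on the local card
    if gpu_temp_c >= MAX_LOCAL_TEMP_C:
        return "cloud"  # give the local card time to cool down
    return "local"


print(choose_target(Job("small chat model", 6.0), gpu_temp_c=65.0))
print(choose_target(Job("large unquantized model", 140.0), gpu_temp_c=65.0))
```

A real scheduler would also weigh cost and latency, which are the trade-offs listed for the cloud option.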

Cooling for Specific LLM Models: A Closer Look

Now that you know the different approaches to cooling, let's see how they apply to specific LLM models running on your NVIDIA L40S 48GB.

Note: The performance data provided in the table is for informational purposes only. Actual results may vary depending on your system configuration and other factors.

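Tokens per second is a measure of speed, like miles per hour for a car. The higher the number, the faster your LLM works. Generation is the rate at which the model produces new text, while processing is the rate at which it reads the input prompt.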

| Model & Quantization | Generation (tokens/second) | Prompt Processing (tokens/second) | Suggested Cooling Solution |
|---|---|---|---|
| Llama 3 8B Q4_K_M | 113.6 | 5908.52 | "Open Air": a good option for this model |
| Llama 3 8B F16 | 43.42 | 2491.65 | "Closed Loop": recommended, since full 16-bit weights move more data per token and may generate more heat |
| Llama 3 70B Q4_K_M | 15.31 | 649.08 | "Custom Workstation": highly recommended for smooth and efficient operation |
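To make the generation numbers concrete, here is a small sketch that converts them into the time needed for a reply. The 500-token reply length is an arbitrary assumption, and the function name is illustrative; the rates are the ones in the table.

```python
# Generation speeds from the table above (tokens per second).
GENERATION_TPS = {
    "Llama 3 8B Q4_K_M": 113.6,
    "Llama 3 8B F16": 43.42,
    "Llama 3 70B Q4_K_M": 15.31,
}


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to produce num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


# ASSUMPTION: a 500-token reply, chosen only for illustration.
for model, tps in GENERATION_TPS.items():
    print(f"{model}: {seconds_to_generate(500, tps):.1f} s for 500 tokens")
```

At these rates a 500-token reply takes about 4.4 seconds on the 8B Q4_K_M model, about 11.5 seconds on 8B F16, and about 32.7 seconds on 70B Q4_K_M. Slower models keep the GPU busy for longer per request, which matters for a machine that runs all day.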

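Llama 3 70B F16: No data is currently available for Llama 3 70B F16 on the L40S 48GB. At 16 bits per weight, the 70B model's weights alone need roughly 140 GB, which is far more than the card's 48 GB.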

Explanation:

Quantization - The "Diet" for LLMs

Quantization puts an LLM on a "diet" by shrinking its memory footprint. Think of it like compressing a large image file. Quantized models usually run faster and need less memory, but sustained inference still keeps the GPU under heavy load, so a cooling solution remains important.

- Q4_K_M: A 4-bit quantization format used by llama.cpp that significantly reduces model size.

- F16: 16-bit floating point, the unquantized baseline in this comparison. It keeps higher precision than Q4_K_M but needs several times more memory, and may generate more heat.
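The size difference between the two formats can be estimated with simple arithmetic: weight memory is roughly the parameter count times the bits per weight, divided by 8. The sketch below assumes nominal parameter counts of 8 and 70 billion and an average of about 4.8 bits per weight for Q4_K_M. That figure is an approximation, and the estimate ignores the KV cache and runtime overhead.

```python
L40S_VRAM_GB = 48.0

# ASSUMPTION: approximate average bits per weight. F16 is exactly 16;
# Q4_K_M mixes block formats, so about 4.8 is only a rough figure.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Estimate memory for model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# ASSUMPTION: nominal parameter counts of 8e9 and 70e9.
for label, num_params in (("8B", 8e9), ("70B", 70e9)):
    for fmt, bits in BITS_PER_WEIGHT.items():
        gb = weight_memory_gb(num_params, bits)
        verdict = "fits" if gb < L40S_VRAM_GB else "does not fit"
        print(f"Llama 3 {label} {fmt}: ~{gb:.1f} GB of weights, {verdict} in 48 GB")
```

Under these assumptions the 70B model needs about 140 GB at F16 but only about 42 GB at Q4_K_M, which is why only the quantized version is a candidate for a single 48 GB card.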

How to Choose Your Cooling Strategy:

Llama 3 8B Q4_K_M: This model is very efficient, and the "Open Air" approach might be sufficient.

Llama 3 8B F16: Full 16-bit weights mean more data to move for every token, and potentially more heat. A "Closed Loop" system is recommended.

Llama 3 70B Q4_K_M: This is a large model that requires more computational power and potentially generates more heat. A "Custom Workstation" is a great choice for this model.

Staying Cool: Extra Tips for Long-Term Success

Here are some additional tips to ensure your L40S 48GB stays cool and your LLM operations run smoothly:

- Keep Your System Clean: Dust bunnies are the enemy of cooling! Regularly clean your case and fans to maintain optimal airflow.

- Monitor Temperatures: Use hardware monitoring tools to keep an eye on your GPU and CPU temperatures. This will help you identify potential overheating issues.

- Ventilation is Key: Ensure good ventilation in the room where your workstation is located. Avoid placing it in a cramped space.
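For the temperature monitoring tip, one possible approach is to poll `nvidia-smi`, which ships with the NVIDIA driver. The sketch below is a minimal example, not a complete monitoring tool: the helper names and the 85 °C alert limit are assumptions, so check the limits documented for your own card.

```python
import subprocess

# Fields requested from nvidia-smi, in this order.
QUERY = "temperature.gpu,power.draw,utilization.gpu"


def parse_smi_line(line: str) -> dict:
    """Parse one CSV line such as '62, 287.45, 98' into a reading."""
    temp, power, util = (field.strip() for field in line.split(","))
    return {"temp_c": float(temp), "power_w": float(power), "util_pct": float(util)}


def read_gpus() -> list:
    """Query every GPU once; requires the NVIDIA driver to be installed."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [parse_smi_line(line) for line in out.splitlines() if line.strip()]


def is_too_hot(reading: dict, limit_c: float = 85.0) -> bool:
    """ASSUMPTION: 85 C is an illustrative alert limit, not a vendor figure."""
    return reading["temp_c"] >= limit_c
```

Calling `read_gpus()` in a loop with a pause of a few seconds between polls, and logging any reading for which `is_too_hot` returns true, gives a simple early warning. Some systems report a field as not available, in which case the `float` conversion will fail and needs handling.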

FAQ

What is Quantization?

Quantization is a technique used to compress a large language model. It converts the model's weights from high-precision floating-point numbers to lower-precision values, such as 4-bit integers. This results in a smaller model that requires less memory and usually runs faster, typically at a small cost in accuracy.

What are the benefits of using a custom workstation?

Custom workstations allow you to create a cooling system that is specifically tailored to your needs. You can choose the components, fans, and liquid cooling systems that best suit your hardware and AI workloads. This gives you greater control over your cooling solution and ensures optimal performance.

How important is cooling for LLMs?

Cooling is essential for the stability and longevity of your LLM operations. It helps prevent overheating, which can lead to thermal throttling (the GPU lowering its clock speeds to protect itself), errors, and even hardware damage.

What are the best cloud providers for running LLMs?

Major cloud providers like AWS, Azure, and Google Cloud offer powerful infrastructure and services for running LLMs. However, the best provider for your specific needs depends on factors such as cost, location, and features. Research different providers and compare their offerings before choosing one.

Keywords

NVIDIA L40S_48GB, LLM Cooling, AI Operations, GPU Heat, Open Air, Closed Loop, Custom Workstation, Cloud Computing, Hybrid Solutions, Llama 3, Quantization, Tokens per second, Temperature Monitoring, System Ventilation, Cloud Providers, AWS, Azure, Google Cloud