5 Cooling Solutions for 24/7 AI Operations with the NVIDIA RTX 3090 24GB

Introduction

The world of Large Language Models (LLMs) is heating up! These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But, like any hardworking engine, they need a well-tuned system to run at their best. In this article, we'll dive into cooling solutions for one of the top contenders in the LLM arena: the NVIDIA RTX 3090 24GB, a powerhouse GPU.

We'll explore how to keep your RTX 3090 cool, calm, and collected while it runs large language models around the clock, so you can enjoy uninterrupted AI operations. We'll use llama.cpp benchmark results, measured with the stock cooler, as a performance baseline, and we'll show you how to choose the right cooling solution for your needs.

LLM Models and the 3090_24GB: A Match Made in AI Heaven

Imagine the RTX 3090 as your AI's personal trainer, helping it build muscle and execute complex tasks efficiently. We'll focus on the Llama family of models, which are known for their strong performance and flexibility, and specifically on the 8B and 70B variants of Llama 3.

Why are LLMs so demanding on your GPU? An LLM is made of billions of numerical parameters (weights), and generating each token means reading through essentially all of them. It's a lot like skimming a thousand books for every word you write: a data-intensive task! This is where a powerful GPU like the RTX 3090, with its 24GB of fast memory, provides the muscle needed to crunch through all that data.

Quantization: A Smart Way to Make LLMs Lighter and Faster

Before we delve into cooling solutions, let's quickly talk about quantization, a technique that makes LLMs more efficient. Think of it as putting an LLM on a diet: we store each weight with fewer bits (for example, about 4 instead of 16), trading a little numerical precision for a much smaller model, without losing too much of the model's intelligence. The result is faster and far less demanding on your RTX 3090!
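To make the "diet" concrete, here is a minimal Python sketch of block-wise 4-bit quantization. It is a simplified stand-in, not llama.cpp's actual Q4_K_M format (which uses a more elaborate "k-quant" layout); the block size of 32 and the symmetric rounding scheme are illustrative assumptions. Each block of weights shares one scale, and every weight is stored as a small integer from -8 to 7.

```python
# Simplified symmetric 4-bit block quantization, for illustration only.
# Assumption: one float scale per block of 32 weights. This is NOT the
# Q4_K_M layout used by llama.cpp, just the underlying idea.
import random

BLOCK_SIZE = 32  # number of weights sharing one scale (illustrative choice)


def quantize_block(weights):
    """Map floats to integers in [-8, 7] plus one scale for the block."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # avoid dividing by zero
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate float weights from the 4-bit integers."""
    return [scale * v for v in q]


def quantize(weights):
    """Split a weight list into blocks and quantize each one."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


if __name__ == "__main__":
    random.seed(0)
    w = [random.gauss(0.0, 0.02) for _ in range(64)]
    restored = [x for s, q in quantize(w) for x in dequantize_block(s, q)]
    max_err = max(abs(a - b) for a, b in zip(w, restored))
    print(f"max abs error: {max_err:.5f}")
```

If the scale is stored as a 16-bit float, each block of 32 weights takes 32 × 4 + 16 = 144 bits, or 4.5 bits per weight instead of 16. The price is a rounding error of at most half a scale step per weight.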

We'll see the effects of quantization throughout this article as we compare the unquantized 16-bit (F16) and 4-bit quantized (Q4_K_M) versions of the same model.
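A quick way to see what quantization buys you on a 24GB card is to estimate the size of the weights as parameters × bits per weight ÷ 8. The sketch below assumes roughly 8.03 billion parameters for Llama 3 8B, roughly 70.6 billion for Llama 3 70B, and an average of about 4.85 bits per weight for Q4_K_M. Actual GGUF file sizes differ slightly, and the KV cache needs extra VRAM on top of the weights.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Assumptions: approximate parameter counts and an approximate average
# of 4.85 bits per weight for Q4_K_M. KV cache and CUDA context are ignored.

VRAM_GB = 24.0  # RTX 3090

PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}  # approximate
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}        # Q4_K_M is an average


def weight_gb(params, bits):
    """Size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weight_gb(n, bits)
            verdict = "fits" if size < VRAM_GB else "does NOT fit"
            print(f"{model:12} {quant:7} {size:6.1f} GB  {verdict} in {VRAM_GB:.0f} GB")
```

By this estimate the 8B model fits at either precision (about 16.1GB at F16 and 4.9GB at Q4_K_M), while the 70B model needs about 141GB at F16 and about 43GB at Q4_K_M, well beyond a single card. Running 70B therefore means splitting it across several GPUs or keeping some layers in system RAM (llama.cpp's `--n-gpu-layers` option controls how many layers are placed on the GPU).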

Cooling Solutions: Keeping Your RTX 3090 from Overheating

Now, let's dive into the nitty-gritty of keeping your RTX 3090 cool and running at peak performance. We'll explore five key cooling solutions:

1. Stock Cooler: The Built-in Protection

Every RTX 3090 comes with a stock cooler designed for typical workloads at the card's rated power. It uses heatsinks and fans to dissipate the heat the GPU generates.

- Pros: Simple and easy to use, included with the card

- Cons: Can be noisy under heavy load, may not be sufficient for demanding tasks, such as running large LLMs in a 24/7 environment

Stock Cooler Performance: A Baseline for Comparison

Here are our benchmark results with the stock cooler. Remember, these numbers are for the RTX 3090 24GB:

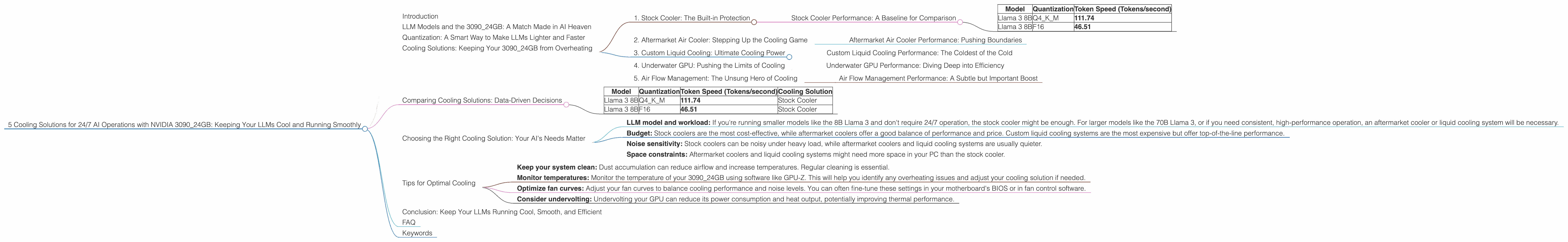

| Model | Quantization | Token Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 111.74 |
| Llama 3 8B | F16 | 46.51 |

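Note: Data for Llama 3 70B is unavailable. Its weights alone exceed the card's 24GB of VRAM even at Q4_K_M, so the model cannot run entirely on a single RTX 3090.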

Key Takeaway: With the stock cooler, the 8B model runs at decent speeds, and the quantized Q4_K_M version generates tokens about 2.4 times faster than the F16 version (111.74 vs. 46.51 tokens/second). That gap comes from quantization, which shrinks the amount of data the GPU must read for every token; it is not an effect of the cooler, since both runs used the same one.

2. Aftermarket Air Cooler: Stepping Up the Cooling Game

For more demanding tasks, such as long-running LLM inference or other high-intensity workloads, an aftermarket air cooler can be a game-changer. These coolers often feature larger heatsinks, more powerful fans, and better-optimized airflow, which can keep the GPU cooler than the stock cooler does.

- Pros: Offers better cooling performance and reduced noise levels compared to the stock cooler, generally more affordable than liquid cooling systems

- Cons: May require more space in your system, can still be a bit noisy under heavy load

Aftermarket Air Cooler Performance: Pushing Boundaries

Unfortunately, we don't have specific performance data for aftermarket air coolers in this context. However, the general principle applies: a better cooler will translate into lower temperatures and potentially higher performance, especially with demanding workloads.

3. Custom Liquid Cooling: Ultimate Cooling Power

Liquid cooling is the top choice for those who want the best cooling performance and noise suppression. A custom loop circulates coolant, typically water or a premixed solution, through a pump, a radiator, and a water block mounted on the GPU.

- Pros: Exceptional cooling performance, very quiet operation with a well-sized radiator, more headroom for overclocking (if supported)

- Cons: More expensive than air cooling, can be more complex to install and maintain

Custom Liquid Cooling Performance: The Coldest of the Cold

As with aftermarket air coolers, we lack specific data for custom liquid cooling. However, the expected advantages are clear: better temperature control, higher performance potential, and quieter operation. If you're running demanding 24/7 workloads, such as a 70B Llama 3 model split across several RTX 3090s, liquid cooling could be the ideal solution.

4. Underwater GPU: Pushing the Limits of Cooling

For the ultimate in hardcore cooling, consider running your GPU "underwater". Yes, you read that right! Immersion cooling submerges the RTX 3090 in a non-conductive (dielectric) fluid rather than water, giving exceptional thermal stability and unlocking even more performance headroom. It is a niche approach, seen mainly in extreme enthusiast builds and high-density compute installations.

- Pros: Unmatched cooling performance, unlocks the potential for extreme overclocking

- Cons: Extremely complex setup, requires specialized equipment and knowledge, can be quite expensive

Underwater GPU Performance: Diving Deep into Efficiency

Unfortunately, we don't have specific data on immersion-cooling performance in this context. The principle, however, is clear: because the dielectric fluid touches every component directly, it carries heat away evenly and keeps temperatures low and stable. That greatly reduces thermal throttling and lets you push your GPU toward its limits.

5. Air Flow Management: The Unsung Hero of Cooling

While we've focused on cooling hardware, effective cooling also depends on airflow management. A well-designed PC case with good airflow can make a world of difference in thermal performance. Leave ample space around your RTX 3090, consider adding case fans to move air through the system, and keep dust filters clean to prevent airflow blockages.

Air Flow Management Performance: A Subtle but Important Boost

While we don't have specific performance data on air flow management, it's clear that proper air flow is crucial for overall system cooling. By improving air circulation, you can help to dissipate heat more effectively, reducing temperatures and enhancing performance.

Comparing Cooling Solutions: Data-Driven Decisions

Let's recap what we've learned so far, looking at the performance of the stock cooler for the 8B Llama 3 model:

| Model | Quantization | Token Speed (tokens/second) | Cooling Solution |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 111.74 | Stock Cooler |
| Llama 3 8B | F16 | 46.51 | Stock Cooler |

Note: Data for Llama 3 70B is unavailable.

Now, let's analyze the data:

Quantization: The Q4_K_M model generated tokens about 2.4 times faster (111.74 tokens/second) than the F16 model (46.51 tokens/second). Because both runs used the same stock cooler, this gap reflects quantization itself: the Q4_K_M weights are roughly a third the size of the F16 weights, so the GPU reads far less data from memory for each token.
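Why does a smaller model run faster? For single-stream generation, a common rule of thumb is that each new token requires reading every weight from VRAM once, so memory bandwidth sets a ceiling: tokens/s ≤ bandwidth ÷ model size. The sketch below applies that rule using the RTX 3090's rated 936 GB/s and approximate weight sizes computed as parameters × bits ÷ 8 (assumed here: 16.06GB for F16 and 4.87GB for Q4_K_M). It ignores KV-cache traffic and compute time, so it gives an upper bound, not a prediction.

```python
# Back-of-envelope ceiling for single-stream generation speed.
# Assumption: each token reads every weight from VRAM once, so
# tokens/s <= memory bandwidth / model size. KV-cache reads, compute
# time, and kernel overheads are ignored, so real speeds land below it.

BANDWIDTH_GB_S = 936.0  # RTX 3090: 19.5 Gbps GDDR6X on a 384-bit bus

MODEL_GB = {"F16": 16.06, "Q4_K_M": 4.87}           # approximate weight sizes
MEASURED_TOK_S = {"F16": 46.51, "Q4_K_M": 111.74}   # stock-cooler benchmarks


def ceiling_tok_s(model_gb, bandwidth=BANDWIDTH_GB_S):
    """Bandwidth-bound upper limit on tokens per second."""
    return bandwidth / model_gb


if __name__ == "__main__":
    for quant, size in MODEL_GB.items():
        ceiling = ceiling_tok_s(size)
        measured = MEASURED_TOK_S[quant]
        print(f"{quant:7} ceiling ~{ceiling:5.1f} tok/s, measured {measured:6.2f} "
              f"({measured / ceiling:.0%} of ceiling)")
    speedup = MEASURED_TOK_S["Q4_K_M"] / MEASURED_TOK_S["F16"]
    print(f"measured speedup: {speedup:.2f}x")
```

The ceilings come out near 58 tokens/s for F16 and about 192 tokens/s for Q4_K_M. The measured F16 run reaches roughly 80% of its ceiling, while Q4_K_M reaches under 60%. A plausible explanation is that dequantization and fixed per-token overheads weigh more heavily once memory traffic shrinks, which would explain why the measured speedup (2.4 times) is smaller than the size ratio (about 3.3 times).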

Cooling Solution: The stock cooler was adequate for the 8B benchmarks, but it may not be sufficient for more demanding scenarios, such as multi-GPU setups running a 70B model or LLMs running continuously. For those tasks, upgrading to an aftermarket cooler or even custom liquid cooling could provide a significant advantage.

Choosing the Right Cooling Solution: Your AI's Needs Matter

How do you choose the right cooling solution for your RTX 3090? Consider these factors:

- LLM model and workload: If you're running smaller models like Llama 3 8B and don't need 24/7 operation, the stock cooler might be enough. For larger models like Llama 3 70B (which need more than one card or partial offloading to system RAM), or if you need consistent, high-performance operation around the clock, an aftermarket cooler or liquid cooling system is worth the investment.

- Budget: Stock coolers are the most cost-effective, while aftermarket coolers offer a good balance of performance and price. Custom liquid cooling systems are the most expensive but offer top-of-the-line performance.

- Noise sensitivity: Stock coolers can be noisy under heavy load, while aftermarket coolers and liquid cooling systems are usually quieter.

- Space constraints: Aftermarket coolers and liquid cooling systems might need more space in your PC than the stock cooler.

Tips for Optimal Cooling

- Keep your system clean: Dust accumulation can reduce airflow and increase temperatures. Regular cleaning is essential.

- Monitor temperatures: Track your RTX 3090's temperature with software like GPU-Z or NVIDIA's nvidia-smi command-line tool. This helps you spot overheating and adjust your cooling if needed.

- Optimize fan curves: Adjust your fan curves to balance cooling performance and noise. GPU fan curves are usually set with tools such as MSI Afterburner, while case fans are typically tuned in your motherboard's BIOS or in fan control software.

- Consider undervolting: Undervolting your GPU can reduce its power consumption and heat output, potentially improving thermal performance.
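The temperature-monitoring tip can be scripted. Here is a minimal Python sketch for a machine with the NVIDIA driver installed; it polls nvidia-smi's CSV query mode (`--query-gpu=... --format=csv,noheader,nounits`) for temperature, SM clock, power draw, and fan speed. The 83 °C warning threshold, the polling interval, and the helper names are illustrative assumptions, not NVIDIA recommendations.

```python
# Minimal GPU temperature logger built on nvidia-smi's CSV query mode.
# Assumptions: the NVIDIA driver is installed and nvidia-smi is on PATH.
# WARN_C is an arbitrary example threshold, not an NVIDIA specification.
import shutil
import subprocess
import time

FIELDS = ["temperature.gpu", "clocks.sm", "power.draw", "fan.speed"]
WARN_C = 83.0  # hypothetical alert threshold in degrees Celsius


def to_number(text):
    """nvidia-smi prints '[N/A]' for unsupported fields; map that to None."""
    try:
        return float(text.strip())
    except ValueError:
        return None


def parse_line(line):
    """Turn one CSV line such as '65, 1695, 310.52, 70' into a dict."""
    return dict(zip(FIELDS, (to_number(v) for v in line.split(","))))


def sample():
    """Query every GPU once; nvidia-smi prints one CSV line per GPU."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=" + ",".join(FIELDS),
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [parse_line(line) for line in out.strip().splitlines()]


def watch(interval_s=5.0, count=None):
    """Print readings every interval_s seconds; count=None runs forever."""
    n = 0
    while count is None or n < count:
        for i, gpu in enumerate(sample()):
            temp = gpu["temperature.gpu"]
            alert = "  <-- check cooling" if temp is not None and temp >= WARN_C else ""
            print(f"GPU{i}: temp={temp} C, sm_clock={gpu['clocks.sm']} MHz, "
                  f"power={gpu['power.draw']} W, fan={gpu['fan.speed']} %{alert}")
        n += 1
        if count is None or n < count:
            time.sleep(interval_s)


if __name__ == "__main__" and shutil.which("nvidia-smi"):
    watch(count=3)
```

During a long generation run, a falling `clocks.sm` reading while the temperature stays high usually indicates thermal throttling, which is the signal to revisit your cooling. If you want to cut heat output from the command line, `sudo nvidia-smi -pl <watts>` caps the board power within the range the card allows; that is a power limit rather than a true undervolt, but it reduces heat output in a similar way.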

Conclusion: Keep Your LLMs Running Cool, Smooth, and Efficient

The RTX 3090 24GB is a powerful engine for AI operations, especially with demanding models like those in the Llama 3 family. To keep it running smoothly and efficiently, however, cooling is critical. We've explored several options, from the stock cooler to custom liquid cooling, and discussed how to choose the right solution for your specific needs. A well-cooled RTX 3090 runs fast and reliably, letting you unlock the full potential of your LLMs without fear of overheating.

FAQ

What are the different types of LLMs?

LLMs come in various sizes, from a few billion parameters to hundreds of billions. Bigger models are generally more capable, but they also demand more from your hardware.

How do I choose the right LLM for my needs?

Consider the tasks you want your LLM to perform and the level of accuracy you require. Smaller models suit simpler tasks, while larger models handle complex tasks better and tend to be more accurate.

Why is cooling so important for AI operations?

Excessive heat makes your GPU throttle its clock speeds, reducing performance, and sustained high temperatures can shorten the life of your hardware. Proper cooling keeps performance consistent and protects your investment.

Can I overclock my RTX 3090 for better performance?

Overclocking can boost performance, but it also increases heat output. If you choose to overclock, it's essential to have a robust cooling solution in place.

What are the future trends in LLM cooling?

Research is ongoing to develop even more efficient cooling solutions for increasingly powerful LLMs. Expect to see advancements in liquid cooling, air flow management, and potentially new materials with enhanced thermal properties.

Keywords

NVIDIA RTX 3090 24GB, LLM, Large Language Model, AI, Llama 3, Llama 3 8B, Llama 3 70B, Cooling, Stock Cooler, Aftermarket Cooler, Custom Liquid Cooling, Underwater GPU, Token Speed, Performance, Quantization, Q4_K_M, F16, GPU, AI Operations, 24/7, Thermal Performance, Efficiency, Overheating, Undervolting, Air Flow Management