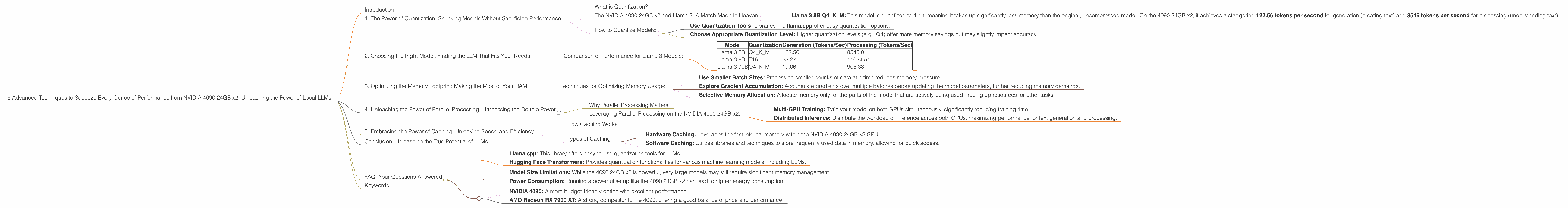

5 Advanced Techniques to Squeeze Every Ounce of Performance from NVIDIA 4090 24GB x2

Introduction

The world of Large Language Models (LLMs) is exploding, and with it comes a growing demand for hardware that can handle their massive computational needs. While cloud-based LLMs offer convenience, running them locally grants you complete control and avoids network latency. Enter the NVIDIA 4090 24GB x2: two RTX 4090 cards with 24GB of memory each, a beastly combination of processing power and memory for local LLMs.

This article will explore five advanced techniques that will help you maximize your performance when running local LLMs on the NVIDIA 4090 24GB x2. We'll delve into the intricacies of quantization, model selection, and optimization strategies, providing you with the knowledge and tools to create a seamless and efficient LLM experience.

1. The Power of Quantization: Shrinking Models Without Sacrificing Performance

Imagine trying to fit a giant elephant into a small car! That's what happens when you try to run a massive LLM on limited resources. Quantization is like shrinking the elephant to the size of a hamster: it greatly reduces the model's size while giving up only a little of its ability.

What is Quantization?

Think of it as a diet for your LLM. Instead of using 32-bit floating-point numbers, which are like full-fat burgers, we can use smaller data types like 16-bit or 4-bit, like lean protein and salads. This reduces the amount of memory required to store the model without significantly affecting its accuracy.

The NVIDIA 4090 24GB x2 and Llama 3: A Match Made in Heaven

With 24GB of memory per card, or 48GB in total, the NVIDIA 4090 24GB x2 is well suited to running large, quantized LLMs like Llama 3.

Example:

- Llama 3 8B Q4_K_M: This model is quantized to roughly 4 bits per weight, so it takes up far less memory than the original, unquantized model. On the 4090 24GB x2, it reaches 122.56 tokens per second for generation (producing new text) and 8545 tokens per second for processing (reading the prompt).
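To put rough numbers on these memory savings, here is a minimal sketch that estimates the size of the weights alone at different bit widths. The parameter counts are rounded (8 and 70 billion), the bit widths are nominal, and `weight_memory_gb` is a hypothetical helper, not part of any library. Real 4-bit formats also store scaling data, and inference needs extra memory beyond the weights, so treat the results as lower bounds.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


TOTAL_VRAM_GB = 2 * 24  # two cards with 24 GB each

# Rounded parameter counts (assumption): 8e9 and 70e9.
for name, n_params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
    for bits in (32, 16, 4):
        size = weight_memory_gb(n_params, bits)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"{name} at {bits}-bit: ~{size:.0f} GB of weights ({verdict} in {TOTAL_VRAM_GB} GB)")
```

Under these assumptions the 70B model only fits in the combined 48 GB once it is quantized to about 4 bits per weight.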

How to Quantize Models:

- Use Quantization Tools: Libraries like llama.cpp offer easy quantization options.

- Choose Appropriate Quantization Level: More aggressive quantization (fewer bits per weight, e.g., Q4) saves more memory but may slightly reduce accuracy.
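To see why fewer bits cost a little accuracy, the toy sketch below quantizes one small block of weights to signed 4-bit integers with a single shared scale, then converts them back. This is a simplified illustration, not the scheme llama.cpp uses for its Q4 formats; the function names and sample weights are made up for the example.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization: map floats to signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # largest integer level, 7 for 4-bit
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * q for q in quantized]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]  # made-up sample
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
worst = max(abs(a - b) for a, b in zip(weights, restored))
print("integers:", q)
print(f"worst-case rounding error: {worst:.4f} (at most half a step, {scale / 2:.4f})")
```

Each weight is stored as a small integer, and the rounding error per weight is bounded by half the step size. Fewer bits mean a larger step and therefore a larger error.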

2. Choosing the Right Model: Finding the LLM That Fits Your Needs

Not all LLMs are created equal. Some are small and nimble, perfect for small tasks, while others are massive behemoths, ideal for complex projects. The key is to choose the right model for your specific needs.

Let's look at the benefits of using the NVIDIA 4090 24GB x2 for different LLM sizes:

Llama 3 8B (8 Billion Parameters)

- Perfect for everyday tasks: Content creation, summarization, translation.

- Benefits: Handles these tasks efficiently and quickly, thanks to the combined power of the NVIDIA 4090 24GB x2.

Llama 3 70B (70 Billion Parameters)

- Ideal for more complex projects: In-depth research, creative writing, code generation.

- Benefits: Can tackle these intricate tasks, using the vast resources provided by the NVIDIA 4090 24GB x2.

Comparison of Performance for Llama 3 Models:

| Model | Quantization | Generation (tokens/sec) | Processing (tokens/sec) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 122.56 | 8545.0 |
| Llama 3 8B | F16 | 53.27 | 11094.51 |
| Llama 3 70B | Q4_K_M | 19.06 | 905.38 |

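(Note: No data is available for Llama 3 70B F16 on the NVIDIA 4090 24GB x2. At 16 bits per weight, 70 billion parameters come to roughly 140GB, far more than the 48GB the two cards provide.)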
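To turn the throughput figures above into something more tangible, the sketch below estimates how long a single request might take. It assumes a simple model in which total time is prompt tokens divided by the processing rate plus output tokens divided by the generation rate, and it ignores model loading and other overhead. The function name and the example token counts are illustrative.

```python
# Throughput figures copied from the table above (tokens per second).
BENCHMARKS = {
    ("Llama 3 8B", "Q4_K_M"): {"generation": 122.56, "processing": 8545.0},
    ("Llama 3 8B", "F16"): {"generation": 53.27, "processing": 11094.51},
    ("Llama 3 70B", "Q4_K_M"): {"generation": 19.06, "processing": 905.38},
}


def estimated_seconds(model, quant, prompt_tokens, output_tokens):
    """Rough request time: read the prompt, then generate the answer."""
    rates = BENCHMARKS[(model, quant)]
    return prompt_tokens / rates["processing"] + output_tokens / rates["generation"]


# Example request (assumed sizes): 1000-token prompt, 500-token answer.
for (model, quant) in BENCHMARKS:
    seconds = estimated_seconds(model, quant, prompt_tokens=1000, output_tokens=500)
    print(f"{model} {quant}: ~{seconds:.1f} s")
```

For a request of this shape, generation speed dominates the total, which is why the 4-bit 8B model comes out well ahead of the F16 version despite its slower prompt processing.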

3. Optimizing the Memory Footprint: Making the Most of Your VRAM

Think of your GPUs' memory (VRAM) as a fancy buffet table. You want enough space for all your dishes without running out of room. Optimizing memory usage lets you load more data and run larger models without out-of-memory crashes.

Techniques for Optimizing Memory Usage:

- Use Smaller Batch Sizes: Processing fewer tokens or sequences at a time reduces memory pressure, at some cost in throughput.

- Explore Gradient Accumulation: When fine-tuning, accumulate gradients over several small batches before updating the model parameters. This gives the effect of a large batch without its memory cost. It applies to training only, not to inference.

- Selective Memory Allocation: Allocate memory only for the parts of the model that are actively being used, freeing up resources for other tasks.
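The gradient accumulation idea can be sketched with a toy one-parameter model. The example below assumes a model of the form y ≈ w * x trained with mean squared error, and shows that summing gradients over small micro-batches gives the same result as one large batch. The data and function names are invented for illustration; a real fine-tuning run would use a deep learning framework.

```python
def grad_mse(w, batch):
    """Gradient of mean squared error for y ≈ w * x over one batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w, data, micro_batch_size):
    """Process the data in small micro-batches and combine their gradients."""
    total = 0.0
    for start in range(0, len(data), micro_batch_size):
        micro = data[start:start + micro_batch_size]
        # Weight each micro-batch by its size so the result equals the full-batch mean.
        total += grad_mse(w, micro) * len(micro)
    return total / len(data)


data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2), (5.0, 9.8)]  # made-up points
full = grad_mse(0.5, data)
accumulated = accumulated_grad(0.5, data, micro_batch_size=2)
print(f"full batch: {full:.6f}, accumulated: {accumulated:.6f}")
```

Only one micro-batch has to be held in memory at a time, which is where the memory saving comes from.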

4. Unleashing the Power of Parallel Processing: Harnessing the Double Power

Imagine having two high-powered computers working together to conquer a single task. That's the essence of parallel processing on the NVIDIA 4090 24GB x2. We have two powerful GPUs working in tandem, dramatically speeding up our computations.

Why Parallel Processing Matters:

Parallel processing breaks down a complex task into smaller, manageable pieces that can be tackled simultaneously by multiple CPUs or GPUs. This drastically reduces the time it takes to complete the overall task, allowing you to process data faster and generate results more efficiently.

Leveraging Parallel Processing on the NVIDIA 4090 24GB x2:

- Multi-GPU Training: Train your model on both GPUs simultaneously, significantly reducing training time.

- Distributed Inference: Split the model and the inference workload across both GPUs. This is what lets a model too large for a single 24GB card, such as the 4-bit Llama 3 70B, run at all.
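One common way to spread inference over two cards is to place consecutive layers on different GPUs. The sketch below shows the bookkeeping for such a split. Inference runtimes such as llama.cpp handle this internally; `split_layers` is a hypothetical helper written for this article, and the layer count of 80 for Llama 3 70B is an assumption.

```python
def split_layers(n_layers, gpu_shares):
    """Assign consecutive layer ranges to GPUs in proportion to gpu_shares."""
    total = sum(gpu_shares)
    counts = [int(n_layers * share / total) for share in gpu_shares]
    # Rounding down can leave a few layers unassigned; hand them out in order.
    for i in range(n_layers - sum(counts)):
        counts[i % len(counts)] += 1
    ranges, start = [], 0
    for count in counts:
        ranges.append(range(start, start + count))
        start += count
    return ranges


# Two identical cards, so equal shares (80 layers is an assumed figure).
for gpu, layers in enumerate(split_layers(80, [1, 1])):
    print(f"GPU {gpu}: layers {layers.start} to {layers.stop - 1}")
```

With equal shares each card holds half the layers. Unequal shares are useful when one card also has to drive a display or hold other data.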

5. Embracing the Power of Caching: Unlocking Speed and Efficiency

Caching is like having a shortcut to your favorite grocery aisle. Instead of navigating through the entire store, you can jump directly to where you need to be, saving time and effort.

How Caching Works:

Caching stores frequently accessed data in a fast and accessible location, allowing the LLM to retrieve information quickly without going back to the main storage. This can significantly reduce response times and boost overall performance.

Types of Caching:

- Hardware Caching: Relies on the fast on-chip cache memory inside each GPU of the NVIDIA 4090 24GB x2.

- Software Caching: Utilizes libraries and techniques to store frequently used data in memory, allowing for quick access.
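A well-known software cache in LLM inference is the key-value (KV) cache, which stores attention keys and values for tokens already processed so they are not recomputed for every new token. The article does not name it, so treat this as one illustrative example. The sketch below estimates how much memory such a cache needs. The architecture numbers (32 layers, 8 key-value heads, head dimension 128) are assumed values for Llama 3 8B, and 16-bit cache entries are also an assumption.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_tokens, bytes_per_value=2):
    """Estimate KV cache size in bytes for one sequence."""
    # Factor 2: one key tensor and one value tensor per layer.
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value


# Assumed Llama 3 8B shape with an 8192-token context and 16-bit entries.
size = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, context_tokens=8192)
print(f"KV cache: {size / 2**30:.2f} GiB")  # prints "KV cache: 1.00 GiB"
```

The cache grows linearly with context length, so long conversations trade memory for speed. That is worth budgeting for alongside the model weights.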

Conclusion: Unleashing the True Potential of LLMs

The NVIDIA 4090 24GB x2 is a powerful tool for pushing the boundaries of local LLM performance. By implementing these five advanced techniques, you can squeeze every ounce of performance from your system, significantly improving speed and efficiency. You can experiment with different model sizes, leverage the power of parallel processing, and optimize memory usage, creating a seamless and efficient LLM experience.

FAQ: Your Questions Answered

Q: What are the best tools for quantizing LLMs?

- llama.cpp: This library offers easy-to-use quantization tools for LLMs.

- Hugging Face Transformers: Provides quantization functionalities for various machine learning models, including LLMs.

Q: Are there any limitations to running LLMs locally on the NVIDIA 4090 24GB x2?

- Model Size Limitations: While the 4090 24GB x2 is powerful, very large models may still require significant memory management.

- Power Consumption: Running a powerful setup like the 4090 24GB x2 can lead to higher energy consumption.

Q: What are some alternative devices for running LLMs locally?

- NVIDIA 4080: A more budget-friendly option with excellent performance, though its 16GB of memory limits the model sizes it can hold.

- AMD Radeon RX 7900 XT: A lower-priced alternative with 20GB of memory and a good balance of price and performance.
