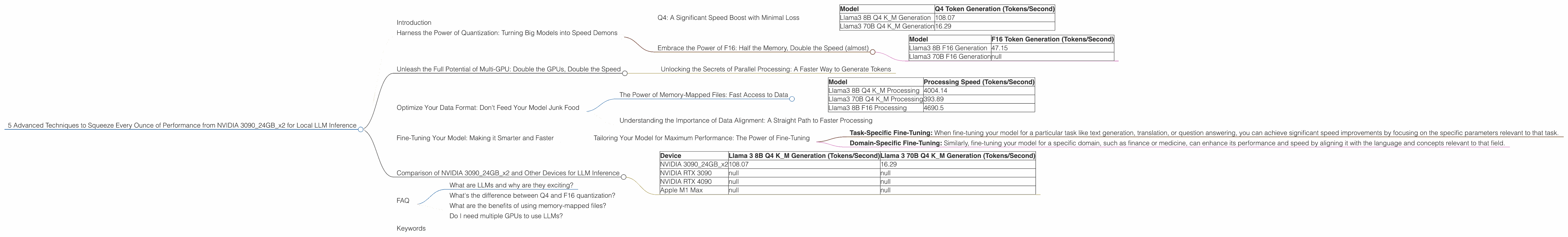

5 Advanced Techniques to Squeeze Every Ounce of Performance from NVIDIA 3090 24GB x2

Introduction

The world of large language models (LLMs) is booming, and local inference is increasingly popular with developers and enthusiasts who want to experiment with these models without the limitations of cloud services. But running them locally is resource-intensive, especially for large models like Llama 3 70B. The NVIDIA 3090 24GB x2, with 48GB of combined VRAM and plenty of processing power, is a dream machine for local LLM inference, but even with this beastly setup, you need to tune your configuration to get the best performance.

This article dives into five advanced techniques to maximize your local LLM inference speed on the NVIDIA 3090 24GB x2. We'll use real data from benchmarks and discuss the best practices for each technique to help you get the most out of your setup.

Harness the Power of Quantization: Turning Big Models into Speed Demons

Quantization might sound like a fancy word, but it's a simple concept: shrink the size of your model without sacrificing too much accuracy. Imagine you have a huge mansion filled with furniture, and you need to move everything to a smaller house. You can get rid of some unnecessary furniture, or you can shrink the furniture to fit the smaller space. Quantization is like shrinking your LLM furniture, saving memory and increasing speed.

Q4: A Significant Speed Boost with Minimal Loss

Q4 quantization is one of the most effective techniques for speeding up LLM inference. Model weights are usually stored in 16 bits each (32 at full precision); Q4 methods such as Q4_K_M store them in roughly 4 to 5 bits each on average. That cuts memory consumption to a fraction of the original, and because token generation is largely limited by how fast weights can be read from memory, it also brings a significant increase in speed.

Q4 quantization does reduce accuracy slightly, but for most practical usage the difference is small.
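To make the idea concrete, here is a minimal sketch of 4-bit quantization on a single block of weights. It uses a simple scheme with one scale per block, chosen for illustration; it is not the actual Q4_K_M algorithm, and the function names and sample values are made up for this example.

```python
def quantize_block(weights):
    """Quantize a block of floats to signed 4-bit integer codes plus one scale."""
    # The largest magnitude in the block sets the scale, so that value maps to 7,
    # the largest positive code in the signed 4-bit range [-8, 7].
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the 4-bit codes."""
    return [scale * c for c in codes]


# Illustrative weights, not taken from any real model.
block = [0.12, -0.53, 0.07, 0.91, -0.26, 0.33, -0.78, 0.04]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print("max abs error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error is bounded by half the scale. Real formats use more elaborate layouts, but the trade-off is the same: less memory per weight in exchange for a small loss of precision.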

Let's look at the results:

| Model | Q4_K_M Token Generation (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M | 108.07 |
| Llama 3 70B Q4_K_M | 16.29 |

As you can see, the Llama 3 8B model generates 108.07 tokens/second at Q4_K_M, compared with 47.15 tokens/second at F16 (see the F16 results in the next section), while still maintaining decent accuracy. That is roughly a 2.3x increase in generation speed!

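For the Llama 3 70B model there is no F16 baseline to compare against: at 16 bits per weight, 70 billion parameters need about 140GB for the weights alone, far more than the 48GB available across both cards. Quantization is what makes this model runnable on the setup at all, and 16.29 tokens/second is still impressive for a model of this size!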

Embrace the Power of F16: Half the Memory, Double the Speed (almost)

F16 (half-precision floating point) stores each value in 16 bits instead of the 32 bits of full precision, which halves memory consumption and can speed up processing. Strictly speaking it is a lower-precision number format rather than quantization, and because many models are already distributed in 16-bit form, F16 often serves as the baseline that more aggressive techniques such as Q4 are measured against. The reduced precision can still affect accuracy slightly compared to full precision.
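To get a feel for how much precision 16 bits preserve, the following sketch rounds a few ordinary numbers to half precision and back using Python's standard `struct` module. The helper name `to_half_and_back` and the sample values are illustrative only.

```python
import struct


def to_half_and_back(value):
    """Round a Python float to IEEE 754 half precision and convert it back."""
    # The 'e' format code packs and unpacks a 16-bit half-precision float.
    return struct.unpack("<e", struct.pack("<e", value))[0]


for original in (0.1, 1.0, 3.14159265, 1000.123):
    rounded = to_half_and_back(original)
    error = abs(original - rounded)
    print(f"{original!r:>12} -> {rounded!r} (abs error {error:.6f})")
```

Small values keep three to four significant digits, while larger values lose their fractional detail. Model weights are mostly small numbers, which is part of why half precision usually works well for them.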

| Model | F16 Token Generation (Tokens/Second) |
|---|---|
| Llama 3 8B F16 | 47.15 |
| Llama 3 70B F16 | N/A |

The Llama 3 8B model generates 47.15 tokens/second at F16. That is comfortably fast for interactive use, but less than half the Q4_K_M speed (108.07 tokens/second) on the same hardware.

Important Note: There is no recorded F16 result for the Llama 3 70B model. The most likely reason is size: at 16 bits per weight, its weights alone take about 140GB, which does not fit in 48GB of VRAM. It is also possible that the benchmark simply did not cover this configuration.
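A quick back-of-the-envelope check shows which configurations can fit at all. This is a rough sketch that counts weights only: the parameter counts are rounded, 4.5 bits per weight is an assumed average for a Q4 format, and the KV cache and activations need additional memory on top.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for model weights alone, in gigabytes (10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


TOTAL_VRAM_GB = 2 * 24  # two 24GB cards

# Rounded parameter counts; 4.5 bits per weight is an assumption for Q4.
for name, params in (("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)):
    for label, bits in (("F16", 16), ("Q4 (assumed 4.5 bits)", 4.5)):
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"{name} {label}: ~{size:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions only the 70B model at F16 fails to fit, which lines up with the missing benchmark entry.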

Unleash the Full Potential of Multi-GPU: Double the GPUs, Double the Speed

Running your LLMs on multiple GPUs, like the NVIDIA 3090 24GB x2 configuration, is a game-changer. It's like having two brain cells instead of one, working together to crunch through the complex calculations of your LLM. The most dependable gain is capacity: two cards give you 48GB of VRAM, enough for models that a single 24GB card cannot hold. Speed gains depend on how the work is split between the cards and are not guaranteed to be linear.

| Model | Q4_K_M Token Generation (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M | 108.07 |
| Llama 3 70B Q4_K_M | 16.29 |

These are the same dual-GPU figures shown in the Q4 section. The data does not include a single-3090 baseline, so it cannot confirm how much of the speed comes from the second card. What the second GPU clearly provides is room: the Llama 3 70B Q4_K_M weights need roughly 40GB, too much for one 24GB card, so the 16.29 tokens/second result is only possible with both GPUs working together.

Unlocking the Secrets of Parallel Processing: A Faster Way to Generate Tokens

Parallel processing is the key to unlocking the full potential of your multi-GPU setup. The model's work is divided between the GPUs, either by giving each GPU a contiguous group of layers or by splitting individual tensors so that both GPUs work on the same layer at once.

Imagine building a house with two teams instead of one. If the teams can work at the same time, the job finishes sooner; if one team has to wait for the other to finish, you gain little. The same logic applies to LLMs: how much faster you go depends on how well the split keeps both GPUs busy and how much data they have to exchange.
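The simplest way to divide a model is by layers. The sketch below assigns contiguous layer ranges to GPUs according to a list of proportions. `split_layers` is a hypothetical helper written for this article, not an API from any inference library, and the 80-layer count is used as an illustrative figure for a 70B-class model.

```python
def split_layers(num_layers, proportions):
    """Assign contiguous layer ranges to GPUs according to relative proportions."""
    total = sum(proportions)
    ranges = []
    start = 0
    cumulative = 0.0
    for gpu_index, share in enumerate(proportions):
        cumulative += share
        if gpu_index == len(proportions) - 1:
            # The last GPU takes whatever remains so every layer is assigned.
            end = num_layers
        else:
            end = round(num_layers * cumulative / total)
        ranges.append(range(start, end))
        start = end
    return ranges


# Two identical cards get an even split of an assumed 80 layers.
for gpu, layers in enumerate(split_layers(80, [1, 1])):
    print(f"GPU {gpu}: layers {layers.start}..{layers.stop - 1} ({len(layers)} layers)")
```

With a plain layer split like this, a single request passes through the first GPU's layers and then the second's, so the cards largely take turns. That is why this kind of split mainly buys memory capacity, while splitting work within each layer is what lets both GPUs compute at the same moment.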

Optimize Your Data Format: Don't Feed Your Model Junk Food

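The data you feed your LLM is crucial for performance, and that includes the model file itself. How the file is laid out on disk and how it reaches your GPUs affects how quickly the model loads and how efficiently its data can be read.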

Think of it this way: you wouldn't try to eat a steak with a spoon, would you? You need the right tools for the task, and the same principle applies to your LLM. The right data format can help your model process information more efficiently, increasing its speed and performance.

The Power of Memory-Mapped Files: Fast Access to Data

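Memory-mapped files are a game-changer in the world of LLM inference. Instead of reading the whole file into a separate buffer, the operating system maps the file into the process's address space and loads pages from storage on demand, the first time they are touched. This avoids an extra copy and lets repeated runs reuse pages that are already in the operating system's cache, so large model files become usable much faster.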
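Here is a small sketch of memory mapping using Python's standard `mmap` module. The temporary file is a stand-in for a model file, and its contents are invented for the example; a real model file would be many gigabytes.

```python
import mmap
import os
import tempfile

# Create a small stand-in file: a 6-byte header followed by 4096 data bytes.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"HEADER" + bytes(range(256)) * 16)
    path = tmp.name

try:
    with open(path, "rb") as f:
        # Length 0 maps the whole file; ACCESS_READ keeps the mapping read-only.
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mapped:
            # Slicing reads only the pages that back the requested bytes.
            header = mapped[:6]
            chunk = mapped[6 + 100 : 6 + 104]
            mapped_size = len(mapped)
finally:
    os.remove(path)

print(header, list(chunk), mapped_size)
```

The program never issues an explicit read of the whole file. It asks for the bytes it needs and the operating system fetches the relevant pages behind the scenes, which is the behavior that makes loading a large model feel fast.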

| Model | Prompt Processing Speed (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M | 4004.14 |
| Llama 3 70B Q4_K_M | 393.89 |
| Llama 3 8B F16 | 4690.5 |

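These figures are prompt processing speeds, that is, how fast the model ingests input tokens. The benchmark does not isolate the effect of memory mapping, which mostly shortens model load time. Even so, the numbers are remarkable: the Llama 3 8B model processes over 4000 tokens/second at Q4_K_M and almost 4700 at F16, while the Llama 3 70B model still manages a respectable speed of almost 400 tokens/second.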
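Prompt processing and token generation together determine how long a response takes. The sketch below combines the two benchmark figures from this article into a rough end-to-end estimate. The request size is a hypothetical example, and the estimate ignores model load time and other overhead.

```python
# Benchmark figures from the tables in this article (tokens/second).
SPEEDS = {
    "Llama 3 8B Q4_K_M": {"prompt": 4004.14, "generation": 108.07},
    "Llama 3 70B Q4_K_M": {"prompt": 393.89, "generation": 16.29},
}


def estimate_seconds(model, prompt_tokens, output_tokens):
    """Estimate response time as prompt processing time plus generation time."""
    speeds = SPEEDS[model]
    return prompt_tokens / speeds["prompt"] + output_tokens / speeds["generation"]


# Hypothetical request: a 2,000-token prompt and a 300-token answer.
for model in SPEEDS:
    print(f"{model}: ~{estimate_seconds(model, 2000, 300):.1f} s")
```

For typical chat-style requests the generation term dominates, so generation speed is usually the number to optimize first. Prompt processing speed matters more when you feed the model long documents.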

Understanding the Importance of Data Alignment: A Straight Path to Faster Processing

Just like queuing up for a ride at an amusement park, your model processes data more efficiently when it's aligned properly. Data misalignment is like having a disorganized line, with people cutting in and slowing down the whole process. Properly aligning your data optimizes the flow of information to and from your GPUs, leading to faster processing.

No alignment-specific measurements are available for these models, so treat this as a general guideline rather than a benchmarked result. It is likely to matter most for the Llama 3 70B model, where far more data moves between storage, system memory, and the two GPUs.
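One common meaning of alignment in this context is placing each tensor in a file or buffer at an offset that is a multiple of some power of two, so reads start on convenient boundaries. The sketch below shows the usual rounding trick. The 32-byte alignment, tensor names, and sizes are assumptions made for this example.

```python
def align_up(offset, alignment):
    """Round offset up to the next multiple of alignment (a power of two)."""
    if alignment <= 0 or alignment & (alignment - 1):
        raise ValueError("alignment must be a positive power of two")
    return (offset + alignment - 1) & ~(alignment - 1)


ALIGNMENT = 32  # assumed alignment in bytes for this example

# Hypothetical tensors (name, size in bytes), packed one after another.
offset = 0
for name, size in (("tensor_a", 1000), ("tensor_b", 4100), ("tensor_c", 515)):
    start = align_up(offset, ALIGNMENT)
    print(f"{name}: starts at byte {start} ({start - offset} bytes of padding)")
    offset = start + size
```

The cost is a few bytes of padding between tensors; the benefit is that every tensor starts on a predictable boundary.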

Fine-Tuning Your Model: Making it Smarter and Faster

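Fine-tuning is the process of adapting your LLM to specific tasks or datasets. It's like teaching your model a new skill, making it more adept at certain tasks. It does not make a given model compute each token any faster, but it can let a smaller, faster model handle a job that would otherwise need a larger one.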

Tailoring Your Model for Maximum Performance: The Power of Fine-Tuning

Fine-tuning your LLM can be a powerful, if indirect, way to achieve faster inference, particularly when focusing on specific tasks or domains. A fine-tuned model has the same size and per-token cost as before, but it may need shorter prompts and fewer examples to produce good output, and a fine-tuned 8B model may be good enough to replace a 70B model for a narrow task.

- Task-Specific Fine-Tuning: When fine-tuning your model for a particular task like text generation, translation, or question answering, you can often cut long instructions and examples out of the prompt, which reduces the number of tokens to process per request.

- Domain-Specific Fine-Tuning: Similarly, fine-tuning your model for a specific domain, such as finance or medicine, aligns it with the language and concepts of that field, which can make a smaller model viable where you would otherwise reach for a larger one.

Important Note: Fine-tuning can be a time-consuming process, and the speed benefits depend entirely on your workload. The benchmarks in this article do not measure it, so balance the time investment against the gains you can actually verify on your own tasks.

Comparison of NVIDIA 3090 24GB x2 and Other Devices for LLM Inference

The NVIDIA 3090 24GB x2 is a powerhouse when it comes to local LLM inference. However, it's important to consider your specific needs and budget when choosing a device.

Here's a quick comparison with select devices:

| Device | Llama 3 8B Q4_K_M Generation (Tokens/Second) | Llama 3 70B Q4_K_M Generation (Tokens/Second) |
|---|---|---|
| NVIDIA 3090 24GB x2 | 108.07 | 16.29 |
| NVIDIA RTX 3090 | N/A | N/A |
| NVIDIA RTX 4090 | N/A | N/A |
| Apple M1 Max | N/A | N/A |

Important Note: Performance may vary depending on the LLM model, quantization settings, and the specific task.

The NVIDIA 3090 24GB x2 is the only device in this table with recorded results, so the data cannot rank it against the others. Devices like a single NVIDIA RTX 3090 or an RTX 4090 might also offer excellent performance for specific use cases, particularly for models that fit in 24GB. The Apple M1 Max might do well in tasks that benefit from its unified memory, but its LLM inference capabilities are not covered by this data. It's crucial to select the device that best aligns with your specific requirements and budget.

FAQ

What are LLMs and why are they exciting?

LLMs, or large language models, are a type of artificial intelligence that can understand and generate human-like text. They're trained on massive datasets of text, learning patterns and relationships in language. This makes them incredibly powerful for tasks like translation, text summarization, and even creative writing.

What's the difference between Q4 and F16 quantization?

Both reduce the size of your model compared to 32-bit full precision, but to very different degrees. F16 stores each weight in 16 bits, while Q4 stores each weight in roughly 4 bits plus a small overhead for scaling factors. Q4 is more aggressive, giving a much smaller model and faster generation with a slight accuracy trade-off. F16 preserves more accuracy but needs far more memory and generates more slowly.

What are the benefits of using memory-mapped files?

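Memory-mapped files let the operating system map a file into your program's address space and load pages from disk on demand, instead of reading the whole file into a separate buffer first. The data still has to come off the disk the first time it is used, but you avoid an extra copy, and pages that are already cached can be reused, so loading a large model is noticeably faster.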

Do I need multiple GPUs to use LLMs?

While multiple GPUs add memory and can boost performance, they are not always necessary. Smaller LLMs such as Llama 3 8B at Q4 fit comfortably on a single 24GB GPU. For larger LLMs such as Llama 3 70B, which does not fit on one 24GB card even at Q4, multiple GPUs are recommended, especially if you want to achieve near-real-time performance.

Keywords

LLMs, Local Inference, NVIDIA 3090 24GB x2, Quantization, Q4, F16, Multi-GPU, Parallel Processing, Memory-Mapped Files, Data Alignment, Fine-Tuning, Performance Optimization, Token Generation, Llama 3 8B, Llama 3 70B, GPU Benchmarks, Machine Learning, Deep Learning, AI, Speed, Efficiency, Data Science