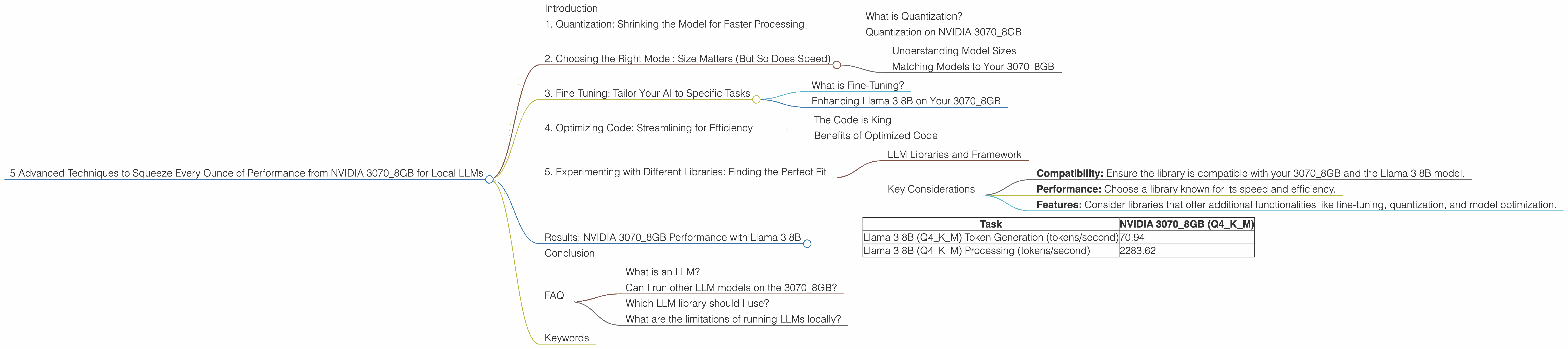

5 Advanced Techniques to Squeeze Every Ounce of Performance from NVIDIA 3070 8GB

Introduction

The NVIDIA 3070 8GB, a popular choice for gamers and creators, is also surprisingly capable at running Large Language Models (LLMs) locally. But just as a race car needs fine-tuning to reach peak performance, your 3070 8GB needs a little tweaking to unleash its full potential for LLM tasks.

Imagine a tiny robot, smaller than a grain of sand, tasked with building a massive castle out of LEGOs. It can only pick up one LEGO at a time, but with enough time, it can build a magnificent structure. The same applies to LLMs: they're like tiny robots, processing information one token at a time. The 3070 8GB is your robot's workshop, and with these advanced techniques, you can make that robot work faster and build your LEGO castle (or generate text, translate languages, or write code) at lightning speed.

1. Quantization: Shrinking the Model for Faster Processing

What is Quantization?

Imagine having a massive library filled with millions of books, each with its own unique key to unlock its secrets. That's how LLMs store information: each parameter (a key) holds a piece of knowledge. The 3070 8GB's memory is like a bookshelf: it can store only a certain number of books (parameters).

Quantization is like summarizing a book by writing the main points on a smaller slip of paper. Instead of storing each parameter as a full-precision number (such as a 32-bit float), we store it as a smaller number (such as an 8-bit integer), accepting a small loss of precision in exchange. This "summary" takes up less space, allowing the 3070 8GB to hold more books (parameters).
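To make the "summary" idea concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is an illustration only: the function names and sample weights are made up for this example, and real formats used for LLMs are more elaborate (they typically quantize weights in small blocks, each with its own scale).

```python
def quantize_int8(values):
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    # One shared scale factor converts between floats and integers.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.5, 0.33, 0.9, -0.07]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("stored integers:", q)
print(f"largest round-trip error: {max_error:.4f}")
```

Each value is stored in one byte instead of four, and the round-trip error is bounded by half the scale factor, which is the "small loss of precision" that quantization trades for space.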

Quantization on NVIDIA 3070 8GB

The 3070 8GB can handle models quantized to the "Q4_K_M" format, a 4-bit quantization scheme. With this technique, we can pack more parameters into the same amount of memory, enabling us to run larger models without running out of space.

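For example, with Q4_K_M quantization, the NVIDIA 3070 8GB can run the Llama 3 8B model, processing prompts at about 2,283 tokens per second and generating about 71 tokens per second. No F16 figure is available for comparison on this card, because the unquantized model does not fit in 8 GB of memory.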

2. Choosing the Right Model: Size Matters (But So Does Speed)

Understanding Model Sizes

LLMs come in different sizes, ranging from a few hundred million parameters ("small" models) to tens of billions of parameters ("large" models). The more parameters a model has, the more complex information it can process, but this also comes with a performance trade-off. Larger models require more resources and take longer to process.

Matching Models to Your 3070 8GB

For your 3070 8GB, the Llama 3 8B model is a sweet spot between performance and capability. It offers impressive language generation while remaining manageable for your hardware.

Larger models like Llama 3 70B exist, but they do not fit on the 3070 8GB: even at about 4 bits per weight, 70 billion parameters need roughly 35 GB for the weights alone.
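A rough way to check whether a model fits is to multiply the parameter count by the bits stored per weight. The sketch below does that arithmetic. The 4.5 bits per weight used for the 4-bit case is an assumption for illustration (4-bit formats carry some overhead for scale factors), and the estimate covers the weights only, not the extra memory needed for context and activations.

```python
def weight_size_gb(n_params_billion, bits_per_weight):
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


VRAM_GB = 8.0          # memory on the 3070 8GB
ASSUMED_Q4_BITS = 4.5  # assumption: average bits per weight for a 4-bit format

models = [("Llama 3 8B", 8), ("Llama 3 70B", 70)]
formats = [("F16", 16), ("~4-bit", ASSUMED_Q4_BITS)]

for name, params in models:
    for label, bits in formats:
        size = weight_size_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{name} {label}: {size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions only the 4-bit version of the 8B model comes in under 8 GB, which matches the guidance above.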

3. Fine-Tuning: Tailor Your AI to Specific Tasks

What is Fine-Tuning?

Imagine teaching a dog a new trick. You start with basic commands ("sit," "stay") and gradually build upon those, adding more complex actions. Similarly, fine-tuning LLMs allows you to specialize them for specific tasks by providing them with additional training data.

Enhancing Llama 3 8B on Your 3070 8GB

By fine-tuning the Llama 3 8B model, you can improve its performance on tasks like text summarization, code generation, or creative writing. The 3070 8GB's processing power lets you fine-tune locally, keeping in mind that 8 GB of memory limits how much of the model can be trained at once, and the result is a personalized AI that excels in your domain.
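The core idea of fine-tuning, starting from already-trained parameters and continuing training on task-specific data, can be shown with a toy model. The sketch below is a stand-in, not LLM fine-tuning: it uses a two-parameter linear model, made-up "pre-trained" values, and made-up task data, purely to show the loop of predict, measure error, and adjust.

```python
def mean_squared_error(w, b, data):
    """Average squared prediction error of y = w * x + b over the data."""
    return sum(((w * x + b) - y) ** 2 for x, y in data) / len(data)


def fine_tune(w, b, data, learning_rate=0.05, epochs=200):
    """Continue training existing parameters with plain gradient steps."""
    for _ in range(epochs):
        for x, y in data:
            error = (w * x + b) - y
            w -= learning_rate * error * x
            b -= learning_rate * error
    return w, b


# Hypothetical "pre-trained" parameters and new task data (y = 2x + 1).
pretrained_w, pretrained_b = 1.0, 0.0
task_data = [(x, 2.0 * x + 1.0) for x in (0.0, 0.5, 1.0, 1.5, 2.0)]

loss_before = mean_squared_error(pretrained_w, pretrained_b, task_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, task_data)
loss_after = mean_squared_error(tuned_w, tuned_b, task_data)

print(f"loss before: {loss_before:.4f}, loss after: {loss_after:.6f}")
```

An LLM has billions of parameters instead of two, but the principle is the same: the model keeps what it already learned and is nudged toward the new task.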

4. Optimizing Code: Streamlining for Efficiency

The Code is King

The software used to run LLMs plays a vital role in maximizing performance. Just like choosing the right tools for construction, using optimized code for your local LLM setup is crucial for efficiency.

Benefits of Optimized Code

The right code can reduce the time it takes to load the model, optimize memory utilization, and minimize unnecessary computations. This results in a smoother and faster LLM experience.
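Before optimizing anything, it helps to measure. The sketch below times a generation call and reports tokens per second. It assumes a `generate_fn` that takes a prompt and returns a list of tokens; both that interface and the `fake_generate` stand-in are hypothetical, so swap in whatever your setup actually exposes.

```python
import time


def measure_tokens_per_second(generate_fn, prompt):
    """Time one call to generate_fn and return its throughput."""
    start = time.perf_counter()
    tokens = generate_fn(prompt)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt):
    """Stand-in for a real model call: waits briefly, returns 10 tokens."""
    time.sleep(0.05)
    return ["token"] * 10


rate = measure_tokens_per_second(fake_generate, "Hello")
print(f"{rate:.1f} tokens/second")
```

Running the same measurement before and after a change (different settings, a different library) shows whether the change actually helped.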

5. Experimenting with Different Libraries: Finding the Perfect Fit

LLM Libraries and Frameworks

Various libraries and frameworks are available for running LLMs locally. These libraries provide tools and features for tasks like model loading, inference, and quantization.

Key Considerations

- Compatibility: Ensure the library is compatible with your 3070 8GB and the Llama 3 8B model.

- Performance: Choose a library known for its speed and efficiency.

- Features: Consider libraries that offer additional functionalities like fine-tuning, quantization, and model optimization.
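As one possible starting point, the sketch below uses the llama-cpp-python bindings for llama.cpp. It is a sketch under assumptions, not a recommended configuration: the helper names and the model file path are hypothetical, the package must be installed separately with GPU support, and the context size is an arbitrary example value.

```python
def build_llama_kwargs(model_path, n_ctx=2048):
    """Collect settings for llama_cpp.Llama in one place."""
    return {
        "model_path": model_path,  # path to a quantized GGUF model file
        "n_gpu_layers": -1,        # -1 asks the library to offload all layers to the GPU
        "n_ctx": n_ctx,            # context window size, in tokens
    }


def generate(model_path, prompt, max_tokens=64):
    """Load the model and return the generated text for one prompt."""
    # Imported here so the helper above works without the package installed.
    from llama_cpp import Llama  # third-party: pip install llama-cpp-python

    llm = Llama(**build_llama_kwargs(model_path))
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]


# Hypothetical file name; point this at the model file you actually have.
settings = build_llama_kwargs("llama-3-8b.Q4_K_M.gguf")
print(settings)
```

Calling `generate("llama-3-8b.Q4_K_M.gguf", "Write a haiku about GPUs.")` would load the model and return text, provided the file exists and the package is installed.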

Results: NVIDIA 3070 8GB Performance with Llama 3 8B

| Task | NVIDIA 3070 8GB (Q4_K_M) |
|---|---|
| Llama 3 8B (Q4_K_M) Token Generation (tokens/second) | 70.94 |
| Llama 3 8B (Q4_K_M) Processing (tokens/second) | 2283.62 |

Notes:

- The data above shows token generation and prompt processing speeds for the Llama 3 8B model quantized to Q4_K_M format on the NVIDIA 3070 8GB.

- Performance for Llama 3 8B in F16 format is not available: at 16 bits per weight, the model needs about 16 GB for its weights, which exceeds the card's 8 GB of memory.

- Performance for larger models like Llama 3 70B on the 3070 8GB is not available due to memory limitations.
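These two figures can be combined into a rough response-time estimate. The sketch below assumes the "Processing" row is the speed at which the prompt is read and the "Token Generation" row is the speed at which output is written; the 1000-token prompt and 200-token reply are example values, and real timings will vary with context length and settings.

```python
# Figures from the results table (tokens per second).
PROMPT_TOKENS_PER_SECOND = 2283.62
GENERATION_TOKENS_PER_SECOND = 70.94


def estimate_response_seconds(prompt_tokens, generated_tokens):
    """Time to read the prompt plus time to generate the reply."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_SECOND
    generation_time = generated_tokens / GENERATION_TOKENS_PER_SECOND
    return prompt_time + generation_time


# Example: a 1000-token prompt followed by a 200-token reply.
seconds = estimate_response_seconds(1000, 200)
print(f"estimated response time: {seconds:.2f} s")
```

With these example values, generation accounts for most of the estimated time, which is why the token generation speed matters most for interactive use.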

Conclusion

The NVIDIA 3070 8GB is a powerful weapon in the battle against the limitations of cloud-based LLMs. By applying these advanced techniques and taking advantage of the power of quantization, you can push your 3070 8GB to its limits, enabling you to run complex LLMs locally with impressive speed and efficiency.

FAQ

What is an LLM?

LLMs, or Large Language Models, are a type of artificial intelligence (AI) that excel at understanding and generating human-like text. They are trained on massive datasets of text and code, allowing them to perform tasks like writing, translation, and code generation.

Can I run other LLM models on the 3070 8GB?

Yes, you can try other LLM models on the 3070 8GB, but their performance and compatibility depend on their size and quantization format. You can find more information about compatible models in the documentation of the LLM library you choose.

Which LLM library should I use?

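Several options are available. For local inference, llama.cpp is a popular choice; it loads models stored in the GGUF file format, which is a model format rather than a library. OpenAI's API, by contrast, is a cloud-hosted service and does not run on your GPU. Consider factors like compatibility, performance, and features to find the best fit for your needs.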

What are the limitations of running LLMs locally?

While it's possible to run LLMs locally, they require significant computing power and memory resources. The performance might vary depending on model size, hardware, and optimization techniques. Additionally, running large models locally can be power-intensive and may impact system performance.

Keywords

LLM, Large Language Model, NVIDIA 3070 8GB, Quantization, Q4_K_M, Llama 3 8B, Token Generation, Processing, Inference, Fine-tuning, Optimization, Libraries, Performance, Speed, Efficiency, Local AI, GPU, Model Compatibility, Memory Limitations.